AI strategy sounds confident in conference rooms.

It looks good in slide decks.

It survives executive reviews.

It often receives budget approval.

And yet, most AI strategies collapse the moment execution begins.

Not because the vision was wrong.

Not because the tools were inadequate.

But because the strategy was never translated into explicit, executable work.

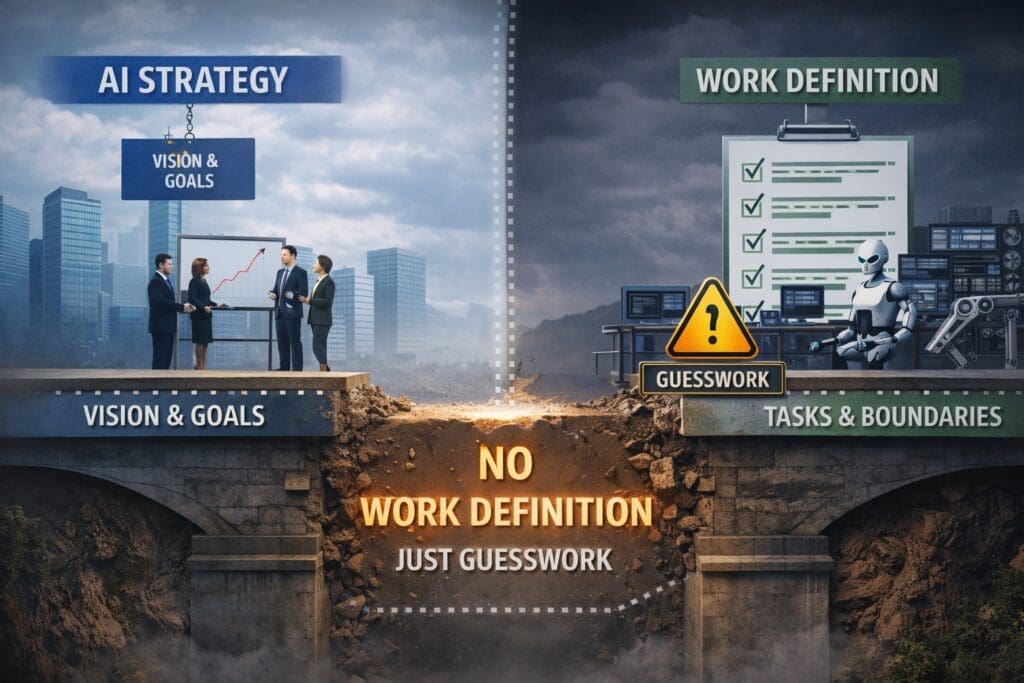

Without work definition, AI strategy isn’t a plan.

It’s hope.

Strategy Answers Why — Execution Requires What

An AI strategy typically answers questions like:

- Why are we investing in AI?

- What outcomes do we want?

- Where do we expect efficiency or advantage?

- How does this align with business goals?

Those are necessary questions.

They are also insufficient.

Execution doesn’t run on intent.

It runs on tasks, decisions, inputs, outputs, and constraints.

When strategy is handed directly to implementation teams without defining those elements, teams are forced to guess — and guesswork is not execution.

The Assumption That Breaks AI Initiatives

Most organizations operate under an unspoken assumption:

If the strategy is clear, the work will define itself.

That assumption holds in areas where:

- Work is already standardized

- Variability is low

- Outcomes are deterministic

AI lives in the opposite environment.

AI is introduced specifically where:

- Judgment exists

- Variability is high

- Exceptions are common

- Outcomes are probabilistic

That makes explicit work definition mandatory, not optional.

What “Work Definition” Actually Means

Defining work is not documentation theater.

It is the process of making execution visible and transferable.

Proper work definition answers:

- What task or decision is being performed?

- What inputs are required?

- What outputs are produced?

- What quality criteria define success?

- What happens when inputs are missing or ambiguous?

- When must a human intervene?

- What level of error is acceptable?

Until those questions are answered, AI has nothing solid to execute.
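One way to make these questions concrete is to record each unit of work as a structured object and check which questions are still open. The sketch below is a minimal illustration in Python. The `WorkDefinition` class, its field names, and the invoice example are all hypothetical, not a standard schema.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class WorkDefinition:
    """One unit of work, described independently of any AI tooling.

    Each field maps to one of the questions above. None, an empty string,
    or an empty list means the question has not been answered yet.
    """
    task: Optional[str] = None                    # what task or decision is performed
    inputs: Optional[list[str]] = None            # what inputs are required
    outputs: Optional[list[str]] = None           # what outputs are produced
    quality_criteria: Optional[list[str]] = None  # what defines success
    missing_input_policy: Optional[str] = None    # what happens on missing or ambiguous inputs
    human_escalation: Optional[str] = None        # when a human must intervene
    max_error_rate: Optional[float] = None        # what level of error is acceptable


def unanswered(definition: WorkDefinition) -> list[str]:
    """Return the names of the fields that are still undefined."""
    return [
        f.name
        for f in fields(definition)
        if getattr(definition, f.name) in (None, "", [])
    ]


# Hypothetical draft: the task is named, but most questions are still open.
draft = WorkDefinition(
    task="Decide whether an incoming invoice can be approved automatically",
    inputs=["invoice total", "purchase order total"],
    outputs=["approve / reject / escalate"],
)
print(unanswered(draft))
# ['quality_criteria', 'missing_input_policy', 'human_escalation', 'max_error_rate']
```

Anything `unanswered` returns is a decision the implementation team would otherwise have to guess.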

Why AI Strategy Without Work Definition Feels “Stalled”

When work isn’t defined, AI initiatives exhibit predictable symptoms:

- Pilots that never scale

- Systems that work “sometimes”

- Endless refinement cycles

- Loss of trust from users

- Quiet abandonment instead of explicit failure

From the outside, it looks like a technology problem.

Internally, teams sense something is wrong — but can’t articulate it.

The missing piece is almost always work clarity.

Engineers Don’t Resist Strategy – They Resist Ambiguity

This is where friction often appears.

Leadership sees resistance.

Engineers see risk.

When strategy lacks defined work boundaries, engineers are forced to:

- Invent requirements

- Make business decisions implicitly

- Own failure modes they don’t control

That’s not resistance.

That’s a rational response to undefined responsibility.

Work definition aligns authority with execution — and removes most of that tension.

Why Better Models Don’t Fix This Problem

A common reaction to stalled AI initiatives is escalation:

- New vendors

- Better models

- Larger budgets

- More tooling

This rarely works.

Better AI does not compensate for unclear work.

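It amplifies the consequences of ambiguity: a more capable system tends to produce more convincing output, so a wrong guess about the intended work is harder to spot.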

When AI behaves unpredictably, the root cause is often not intelligence — it’s undefined expectations.

Strategy Becomes Real Only When Work Is Explicit

An AI strategy becomes executable only after it is decomposed into:

- Clearly bounded tasks

- Testable decisions

- Observable behavior

- Known failure modes

This decomposition is the bridge between vision and execution.

Skip it, and AI becomes fragile.

Build it, and AI becomes predictable.
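As a rough illustration of what such a decomposition can look like, the sketch below writes one bounded task as a function with a testable decision, a recorded reason (observable behavior), and explicit escalation paths (known failure modes). The invoice-matching scenario, the names `match_invoice`, `Outcome`, and `Decision`, and the 1% tolerance are assumptions made up for this example. The simple rule stands in for whatever eventually implements the decision, whether a model or not.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Outcome(Enum):
    APPROVE = "approve"
    REJECT = "reject"
    ESCALATE = "escalate"  # hand the case to a human


@dataclass
class Decision:
    outcome: Outcome
    reason: str  # recorded so the behavior can be observed and audited


def match_invoice(invoice_total: Optional[float],
                  order_total: Optional[float],
                  tolerance: float = 0.01) -> Decision:
    """Bounded task: compare an invoice total against its purchase order."""
    # Known failure mode 1: a required input is missing.
    if invoice_total is None or order_total is None:
        return Decision(Outcome.ESCALATE, "missing input")
    # Known failure mode 2: an input falls outside the defined range.
    if invoice_total < 0 or order_total <= 0:
        return Decision(Outcome.ESCALATE, "input out of range")
    # The decision itself: approve when the relative difference is small.
    difference = abs(invoice_total - order_total) / order_total
    if difference <= tolerance:
        return Decision(Outcome.APPROVE, f"within {tolerance:.0%} tolerance")
    return Decision(Outcome.REJECT, f"differs by {difference:.1%}")


print(match_invoice(100.0, 100.5).outcome)  # Outcome.APPROVE
print(match_invoice(None, 100.0).reason)    # missing input
print(match_invoice(120.0, 100.0).reason)   # differs by 20.0%
```

Because every path returns a named outcome and a reason, each branch can be tested in isolation before any automation is attached to it.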

How Successful Teams Do This Differently

Teams that succeed with AI don’t start with automation.

They start by asking:

Could a human execute this work consistently if we wrote it down?

If the answer is no, AI isn’t the problem — clarity is.

Successful teams:

- Define work independently of technology

- Validate small units of execution first

- Treat AI as an implementation detail

- Introduce autonomy only after reliability is proven

This approach feels slower initially — and moves faster in the long run.
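The last point, introducing autonomy only after reliability is proven, can be expressed as an explicit gate. The sketch below is one simple way to do that, assuming each system output has been reviewed by a human and marked as correct or not. The function name `ready_for_autonomy` and the default of 50 reviewed cases are illustrative assumptions, and a real gate might use a more careful statistical test.

```python
def ready_for_autonomy(reviewed_outcomes: list[bool],
                       max_error_rate: float,
                       min_samples: int = 50) -> bool:
    """Return True only when enough reviewed cases meet the error threshold.

    reviewed_outcomes holds one entry per human-reviewed case:
    True where the reviewer accepted the system's output, False otherwise.
    """
    # Too little evidence: reliability has not been proven yet.
    if len(reviewed_outcomes) < min_samples:
        return False
    errors = reviewed_outcomes.count(False)
    return errors / len(reviewed_outcomes) <= max_error_rate


# Ten clean cases are not enough evidence to remove the human.
print(ready_for_autonomy([True] * 10, max_error_rate=0.05))                 # False
# 2 errors in 100 reviewed cases is within a 5% threshold.
print(ready_for_autonomy([True] * 98 + [False] * 2, max_error_rate=0.05))   # True
```

The threshold itself comes from the work definition, not from the engineering team: it is the answer to "what level of error is acceptable?"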

Strategy Without Work Definition Is Not Leadership

This is the uncomfortable truth.

Strategy without execution clarity doesn’t reduce risk.

It shifts it downstream — to teams least equipped to absorb it.

Leadership isn’t about vision alone.

It’s about making execution possible.

Work definition is not beneath strategy.

It is what gives strategy teeth.

Closing the Gap Between Strategy and Execution

If your AI strategy feels solid but results remain elusive, ask one question:

What work have we explicitly defined – and what are we hoping AI figures out?

Whatever falls into the second category is where failure hides.

AI doesn’t fail because it can’t think.

It fails because it’s asked to act without clarity.

Strategy without work definition is just hope.

Execution begins when the work is made explicit.

Related Reading

If this resonates, you may also want to read:

Why AI Fails Between Strategy and Execution (And How to Fix It)

That article explains how work definition fits into a larger execution discipline — and why skipping it is the most common AI failure pattern in enterprises.

Frequently Asked Questions

What does “work definition” mean in an AI strategy?

Work definition is the process of explicitly describing the tasks and decisions AI is expected to perform. This includes inputs, outputs, quality criteria, failure handling, and human escalation paths. Without this clarity, AI systems are forced to guess, leading to inconsistent behavior and loss of trust.

Why does AI strategy fail without work definition?

AI strategy fails without work definition because strategy explains intent, not execution. AI systems cannot act on vision or alignment alone — they require clearly bounded, testable work. When this layer is missing, implementation teams fill in gaps inconsistently, causing execution to stall or fail quietly.

Isn’t defining work something engineers do later?

No. Defining work is not a technical detail; it is an execution prerequisite. Engineers can implement defined work, but they should not be forced to invent it. When work definition is deferred, responsibility and risk are pushed downstream, which makes failure more likely.

How is work definition different from requirements gathering?

Traditional requirements often focus on features or tools. Work definition focuses on execution reality: what actually happens, what decisions are made, what inputs exist, and what constitutes success or failure. It describes how the system must behave, not just what it should include.

Why do AI initiatives feel stalled even with executive support?

Because executive alignment does not automatically create execution clarity. Without explicit task and decision boundaries, AI initiatives remain stuck in pilots and proofs of concept, unable to scale safely into production.

Can better AI models compensate for poor work definition?

No. Better models amplify the consequences of ambiguity. When work is undefined, AI appears unreliable regardless of model quality. Intelligence cannot replace clarity.

Why do engineers often resist AI strategies?

Engineers don’t resist strategy — they resist ambiguity. When AI strategies lack defined work boundaries, engineers are forced to make business decisions implicitly and absorb uncontrolled risk. Clear work definition aligns authority with responsibility.

What are the signs that work definition is missing?

Common indicators include:

- AI systems that work “sometimes”

- Endless refinement without rollout

- Low user trust

- Frequent manual overrides

- Projects that fade instead of failing decisively

These are structural issues, not technical ones.

How can organizations define work effectively for AI?

Successful teams:

- Describe work independently of tools

- Validate human execution first

- Define inputs, outputs, and quality thresholds

- Identify acceptable error and escalation paths

- Prove small capabilities before scaling

This creates predictable execution.

Is work definition only necessary for complex AI systems?

No. Work definition is essential even for simple AI use cases. In fact, smaller systems often fail faster when work is unclear, because there is less surrounding process to absorb the ambiguity.

What happens after work is clearly defined?

Once work is defined, AI becomes an implementation choice rather than a risk. Systems become testable, observable, and scalable. Automation and agents can then be introduced safely, instead of being used as a substitute for clarity.
