Most AI initiatives don’t fail because the technology is bad.

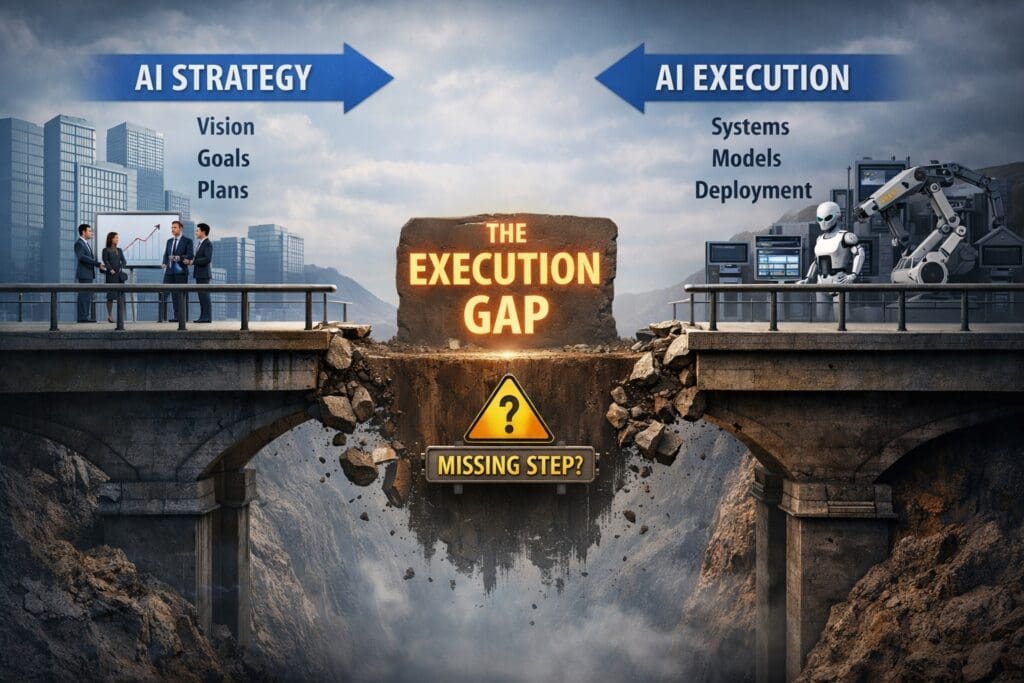

They fail quietly — in the space between strategy and execution.

Leadership approves a vision.

Teams build prototypes.

Demos look impressive.

And then… nothing meaningful happens.

No explosion.

No obvious disaster.

Just stalled pilots, brittle systems, and a slow loss of confidence.

This is the most common failure mode in enterprise AI — and the most dangerous one — because it rarely looks like failure at first.

This article explains where that gap comes from, why most teams miss it, and how organizations close it without betting the company on hype.

The Myth: “If the Strategy Is Clear, Execution Will Follow”

Most organizations believe this, even if they wouldn’t say it out loud:

If leadership aligns on AI strategy, the rest is just implementation.

That assumption works reasonably well for:

- Standard software projects

- Infrastructure upgrades

- Vendor-led rollouts

It breaks down completely with AI.

Why?

Because AI does not execute strategy directly.

It executes work — and only when that work is explicitly defined.

Strategy answers why.

Execution requires what, how, under what conditions, and with what tolerance for error.

The gap between those two is where AI initiatives quietly die.

Where AI Actually Breaks (And It’s Not Where People Look)

When AI projects fail, postmortems usually focus on:

- Model accuracy

- Data quality

- Tool selection

- Vendor performance

Those issues matter — but they’re rarely the root cause.

The real failure usually happens earlier, before models or tools ever had a chance to succeed.

Specifically, AI breaks when organizations move directly from:

High-level intent → AI implementation

…and skip the hardest, least glamorous step:

👉 Explicitly defining the work AI is expected to perform.

Strategy Is Not Executable by Default

AI strategy typically sounds like this:

- “We want to automate X.”

- “We want AI-assisted decision-making.”

- “We want to improve efficiency and reduce cost.”

- “We want to leverage AI across the organization.”

None of those statements are wrong.

None of them are executable.

AI cannot act on:

- Intent

- Vision

- Alignment

- Slide decks

AI acts on tasks, decisions, inputs, outputs, constraints, and quality thresholds.

When those are missing, teams fill in the gaps themselves — each in a different way.

That’s when execution starts to drift.

The Missing Middle: From Vision to Defined Work

Most failed AI initiatives share the same invisible hole in the middle:

Strategy → ❌ → Implementation

What’s missing is a work-definition layer that translates intent into something executable.

This layer answers questions like:

- What work actually happens today?

- What inputs are used?

- What does a “good” outcome look like?

- Where is judgment required?

- Where is automation acceptable — and where is it not?

- What happens when the system is uncertain or wrong?
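One way to make these questions concrete is to write the answers down as a structured record, so a blank answer becomes visible instead of being filled in differently by each team. The sketch below is only an illustration: the `WorkDefinition` class, its field names, and the invoice example are all invented here, not part of any standard or tool.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class WorkDefinition:
    """Hypothetical record for one unit of work; all field names are illustrative."""

    task: str                    # What work actually happens today?
    inputs: list[str]            # What inputs are used?
    good_outcome: str            # What does a "good" outcome look like?
    judgment_points: list[str]   # Where is judgment required? (may be empty)
    automation_allowed: bool     # Is automation acceptable for this task?
    on_uncertain: str            # What happens when the system is uncertain or wrong?

    def open_questions(self) -> list[str]:
        """Return the required fields that have not been answered yet."""
        answers = {
            "task": self.task.strip(),
            "inputs": self.inputs,
            "good_outcome": self.good_outcome.strip(),
            "on_uncertain": self.on_uncertain.strip(),
        }
        return [name for name, value in answers.items() if not value]


# Invented example: an invoice-matching task with one question still open.
invoice_matching = WorkDefinition(
    task="Match incoming invoices to purchase orders",
    inputs=["invoice PDF", "purchase order record"],
    good_outcome="Invoice linked to exactly one purchase order, totals agree",
    judgment_points=["totals differ by more than rounding"],
    automation_allowed=True,
    on_uncertain="",  # nobody has decided this yet
)

print(invoice_matching.open_questions())  # prints ['on_uncertain']
```

The point is not the data structure itself. It is that the unanswered question shows up before any model is chosen.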

When this layer is skipped, AI systems are asked to solve undefined problems.

They don’t fail loudly.

They behave inconsistently.

They become “hard to trust.”

Why Demos Succeed and Production Systems Fail

This is where many teams get confused.

They’ve seen AI work.

They’ve run:

- Proofs of concept

- Internal demos

- Pilot projects

So why does everything fall apart later?

Because demos quietly avoid everything that production systems cannot avoid.

Demos:

- Use clean data

- Ignore edge cases

- Assume perfect inputs

- Skip exception handling

- Have humans correcting mistakes behind the scenes

Production systems:

- Inherit messy reality

- Must run every day

- Face ambiguity constantly

- Require accountability

- Cannot “just try again” without consequences

Without defined work boundaries, AI looks intelligent in demos — and unreliable in production.

AI Doesn’t Fail Loudly — It Fails Quietly

Traditional system failures are obvious:

- Outages

- Errors

- Crashes

- Alarms

AI failures are different.

They look like:

- “It kind of works”

- “We’re not confident enough to roll it out”

- “Users stopped trusting it”

- “We’ll revisit this next quarter”

No one pulls the plug.

No one declares failure.

The initiative simply loses momentum.

This quiet failure mode is why many organizations believe they are “behind on AI” — when in reality, they’ve just never crossed the execution gap.

Engineers See the Problem Long Before Leadership Does

This gap also explains a common tension:

Why do engineers seem skeptical of AI initiatives?

Because engineers are the first people forced to translate vision into reality.

They’re the ones asking:

- What exactly should the system do?

- What happens when inputs are incomplete?

- How do we test this?

- How do we know when it’s wrong?

- Who owns the decision?

When those answers don’t exist, engineers don’t see “innovation.”

They see unbounded risk.

That skepticism isn’t resistance — it’s a signal that the execution layer is missing.

Execution Readiness Is Not About Tools or Talent

Many organizations respond to stalled AI initiatives by:

- Buying more platforms

- Switching vendors

- Hiring specialists

- Chasing newer models

That rarely fixes the problem.

Execution readiness has very little to do with tools.

It has everything to do with whether:

- Work is explicitly defined

- Tasks are testable

- Inputs and outputs are clear

- Failure modes are understood

- Human escalation paths exist

If those conditions aren’t met, a better model only amplifies the confusion.
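As a rough sketch, the conditions above can be treated as a gate that is checked before any tooling decision. The condition names and the `readiness_gaps` helper below are hypothetical labels for the list above, not an established framework.

```python
# Hypothetical condition names, one per item in the list above.
READINESS_CONDITIONS = (
    "work_explicitly_defined",
    "tasks_testable",
    "inputs_outputs_clear",
    "failure_modes_understood",
    "human_escalation_exists",
)


def readiness_gaps(answers: dict[str, bool]) -> list[str]:
    """Return unmet conditions; a condition that was never answered counts as unmet."""
    return [name for name in READINESS_CONDITIONS if not answers.get(name, False)]


# Invented example: a pilot that never discussed escalation at all.
pilot = {
    "work_explicitly_defined": True,
    "tasks_testable": True,
    "inputs_outputs_clear": True,
    "failure_modes_understood": False,
}

gaps = readiness_gaps(pilot)
print(gaps)  # prints ['failure_modes_understood', 'human_escalation_exists']
```

Treating a missing answer as a gap is a deliberate choice in this sketch: silence about a condition is the usual way it gets skipped.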

How Successful Teams Close the Gap

Organizations that succeed with AI don’t start by automating everything.

They start by making work visible.

Practically, that means:

1. Defining Work Independently of Technology

They describe tasks as if a human had to execute them consistently — before involving AI.

2. Proving Small Capabilities First

They validate small, reliable units of work instead of chasing big transformations.

3. Treating AI as an Implementation Detail

AI is used where it helps execution — not where it replaces thinking.

4. Introducing Autonomy Last

Automation and agents come after reliability, not before.

This approach feels slower at first — but compounds quickly because systems remain explainable and trustworthy.
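A minimal sketch of steps 2 and 4, assuming a small unit of work can be scored against labeled examples: measure the pass rate first, and allow autonomy only above a threshold. The function names, the 0.95 threshold, the keyword-based router, and the sample tickets are all assumptions made for illustration.

```python
from typing import Callable

Example = tuple[str, str]  # (input text, expected label)


def pass_rate(predict: Callable[[str], str], examples: list[Example]) -> float:
    """Fraction of labeled examples the capability handles correctly."""
    if not examples:
        raise ValueError("need at least one labeled example")
    correct = sum(1 for text, expected in examples if predict(text) == expected)
    return correct / len(examples)


def rollout_mode(rate: float, threshold: float = 0.95) -> str:
    """Autonomy comes last: below the threshold, a human reviews every result."""
    return "autonomous" if rate >= threshold else "human_review"


def keyword_router(text: str) -> str:
    """Stand-in for any implementation (rules, a model, a person following a script)."""
    return "refund" if "refund" in text.lower() else "other"


examples = [
    ("Please refund my order", "refund"),
    ("Where is my package?", "other"),
    ("I want my money back", "refund"),  # no keyword: a deliberately hard case
    ("REFUND not received", "refund"),
]

rate = pass_rate(keyword_router, examples)
mode = rollout_mode(rate)
print(rate, mode)  # prints 0.75 human_review
```

Because `pass_rate` accepts any callable, the same labeled examples can score a rule, a model, or a human procedure, which keeps the work definition independent of the technology.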

The Real Value: Reducing the Leap of Faith

The hardest part of approving AI initiatives isn’t cost.

It’s uncertainty.

Executives are asked to approve systems they can’t mentally simulate.

When the execution gap is closed, something important happens:

AI stops feeling mysterious.

Leaders can:

- See where risk lives

- Understand what’s being automated

- Know what happens when things go wrong

- Decide what not to automate

That’s not hype.

That’s control.

AI Doesn’t Replace Thinking — It Amplifies It

AI initiatives don’t fail because organizations lack intelligence.

They fail because clarity is assumed instead of engineered.

The gap between strategy and execution isn’t a technology problem.

It’s a discipline problem.

And once that discipline is in place, AI stops being fragile — and starts becoming useful.

Final Thought

If your AI initiatives feel stalled, unreliable, or perpetually “almost ready,” the problem is probably not the model.

It’s the missing middle.

Close that gap — and execution finally catches up to strategy.

Frequently Asked Questions

Why do most AI initiatives fail in enterprises?

Most AI initiatives fail before technology becomes the problem.

They break down in the gap between high-level strategy and executable work. When tasks, decisions, inputs, outputs, and quality criteria are not explicitly defined, AI systems are asked to solve undefined problems — leading to unreliable results and loss of trust.

Is AI failure usually caused by poor models or bad data?

Not usually.

While models and data matter, they are rarely the root cause. In most failed initiatives, AI was introduced before the underlying work was clearly specified. A better model often amplifies that confusion rather than fixing it.

What does “the gap between strategy and execution” mean in AI?

It refers to the missing layer where business intent is translated into explicit, testable work.

Strategy defines why AI should exist. Execution requires a clearly defined what, how, under what conditions, and with what tolerance for error. When that translation layer is skipped, AI initiatives stall or quietly fail.

Why do AI demos succeed while production systems fail?

Demos avoid real-world constraints.

They typically rely on clean data, limited scenarios, and human correction, and they carry no accountability. Production systems must handle ambiguity, edge cases, failures, and daily operation. Without clear work definitions, AI looks intelligent in demos but unreliable in reality.

What is execution readiness in AI?

Execution readiness means the organization has:

- Clearly defined tasks and decisions

- Explicit input and output criteria

- Known failure modes

- Human escalation paths

- Testable and observable behavior

It has very little to do with tools or vendors and everything to do with structure and clarity.

Why are engineers often skeptical of AI initiatives?

Because engineers are responsible for making systems run reliably.

When AI initiatives lack clear requirements, boundaries, and failure handling, engineers see risk — not innovation. That skepticism is usually an early warning signal that execution discipline is missing.

Can off-the-shelf AI tools solve this execution gap?

Only when workflows closely match the tool’s assumptions.

Most enterprise environments have unique processes, constraints, and governance requirements. Off-the-shelf tools work best when combined with clearly defined work and selectively customized where necessary.

Does adding AI agents help fix execution problems?

No. Agents amplify existing systems — they don’t fix them.

If core tasks are unreliable or poorly defined, agents simply automate failure faster. Autonomy should only be introduced after execution is proven stable and observable.

How can organizations close the AI execution gap?

Successful teams focus on:

- Defining work independently of technology

- Proving small, reliable capabilities first

- Treating AI as an implementation detail

- Introducing automation and agents last

This approach reduces risk, improves trust, and allows AI systems to scale safely.

How long does it take to see value from AI when done correctly?

Value should appear early — but modestly.

Successful AI programs deliver visible, defensible improvements quickly by targeting small, high-friction tasks first. If meaningful progress isn’t visible early, that’s a signal to adjust or stop — not to push harder.

What is the biggest misconception about AI in enterprises?

That AI replaces thinking.

In reality, AI amplifies whatever clarity — or confusion — already exists. Organizations that succeed with AI invest more in defining work and decision boundaries than in chasing new models or tools.
