Legacy Systems? Still AI-Ready.

Executive Summary

Many organizations assume that artificial intelligence (AI) and machine learning (ML) are only applicable to cloud-native, modern platforms. This assumption leaves valuable opportunities untapped—especially in enterprises and government entities that rely on legacy systems. In reality, legacy systems are not roadblocks to AI—they’re data-rich environments waiting to be augmented.

This guide shows how mid-to-senior technical and business leaders can bring AI to their existing infrastructure using the Microsoft ecosystem, .NET libraries, and proven integration strategies. By the end of this guide, you’ll understand:

- How to integrate AI into legacy environments without full system replacement

- Patterns for combining AI and older tech stacks

- Tools like ML.NET, Azure ML, Power Platform, and Semantic Kernel that make it possible

- How to build business cases and avoid common pitfalls

Let’s bust some myths and unlock the real power of your existing technology investments.

Understanding the Legacy Landscape

What Are Legacy Systems (Really)?

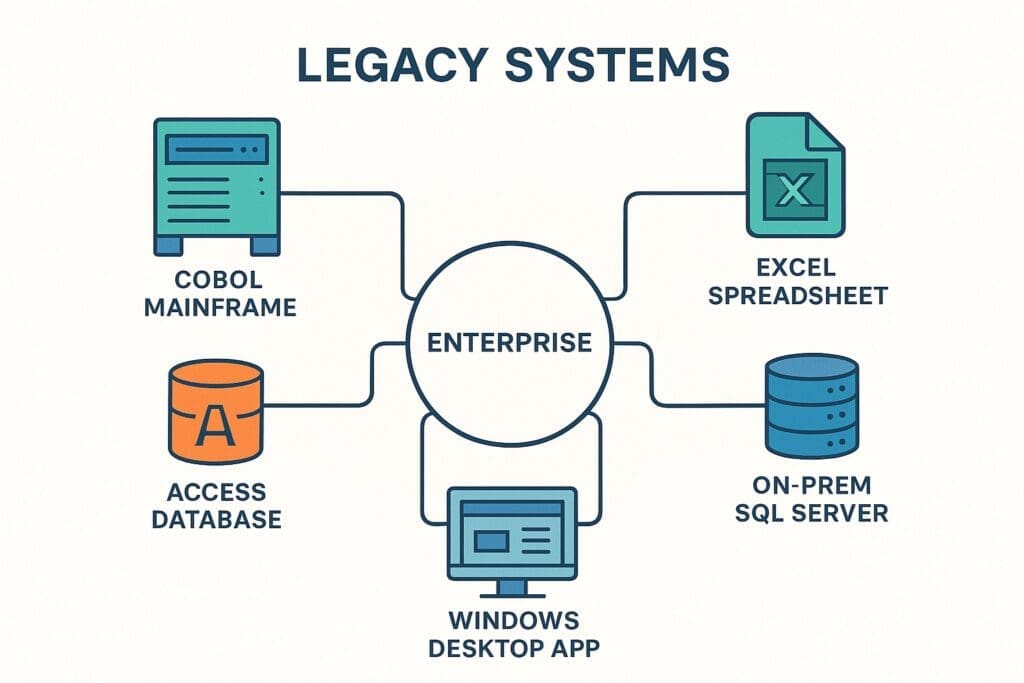

A legacy system is any software or hardware that is still critical to daily operations but is based on older technologies. That doesn’t mean it’s obsolete—it means it’s proven, stable, and often deeply integrated. Examples include:

- Mainframe applications (COBOL, DB2)

- Windows desktop apps (VB6, WinForms)

- Early .NET Framework apps (3.5 and earlier)

- SharePoint 2010 or earlier

- Access databases

- Excel macros and flat file batch processes

- On-prem SQL Server or Oracle installations

Legacy systems are everywhere—especially in government, manufacturing, energy, healthcare, and finance.

Why Legacy Systems Still Matter

Legacy systems still run the backbone of operations for many organizations because:

- They’re deeply integrated with business workflows.

- Rewriting them is risky and expensive.

- They’ve been heavily customized over years or decades.

- They contain valuable historical data that modern systems need.

Many CIOs and CTOs are looking to “modernize,” but complete rip-and-replace initiatives often fail. A better approach? Use AI to incrementally enhance legacy systems without replacing them.

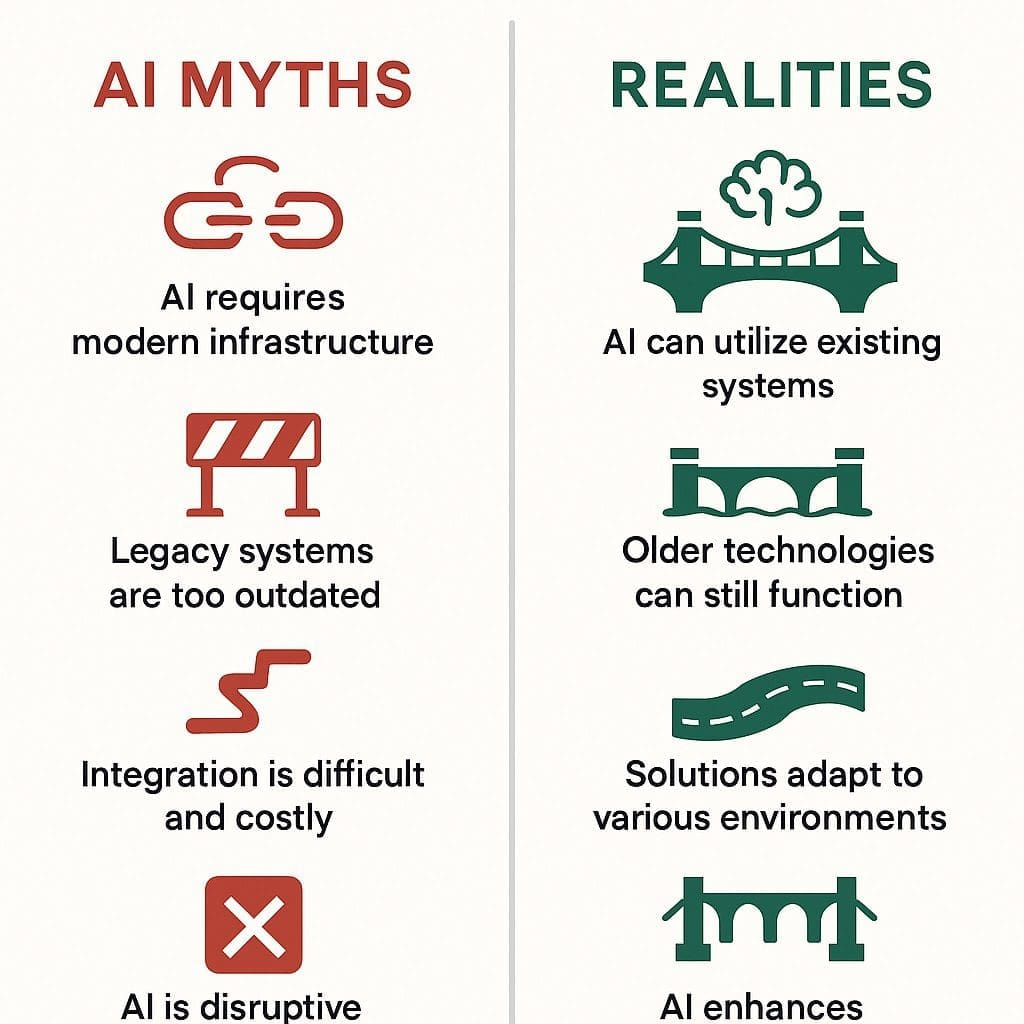

Common Myths About AI and Legacy Systems

| Myth | Reality |
|---|---|
| AI requires the cloud | Hybrid and on-prem AI deployments are fully supported |
| Legacy systems can’t talk to AI | Integration is often just a matter of data extraction and API wrapping |
| Our data is too siloed | Data brokers, connectors, and export tools make it accessible |
| We need to modernize first | AI can be the start of your modernization journey |

These myths stop valuable AI initiatives before they begin. In reality, AI and legacy can coexist—and even thrive.

Why AI Can Work with Legacy Systems

What Modern AI Needs from a System

AI models have surprisingly modest integration requirements. Here’s what they actually need:

- Data access: From a file, database, API, or even a screen scrape

- A compute surface: A place to run models (.NET service, Azure Function, etc.)

- An orchestration trigger: Something to kick off the process (cron job, user event, API call)

That’s it. No massive overhaul necessary.
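Keeping these three ingredients separate lets each one change independently. Below is a minimal sketch in Python (chosen for brevity; the same shape maps onto a C# worker service). `Integration`, `read_export`, and `flag_large` are hypothetical names, and the scoring rule is only a stand-in for a trained model.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Tuple

Record = dict


@dataclass
class Integration:
    """Hypothetical wiring of the three ingredients an AI integration needs."""

    read_data: Callable[[], Iterable[Record]]  # data access: file, query, API, or scrape
    score: Callable[[Record], str]              # compute surface: wherever the model runs

    def run_once(self) -> List[Tuple[Record, str]]:
        # The trigger is whatever calls run_once(): a cron job, Task Scheduler,
        # a user action, or an incoming API call.
        return [(record, self.score(record)) for record in self.read_data()]


def read_export() -> List[Record]:
    # Stand-in for reading a legacy export or running a query.
    return [{"id": 1, "amount": 120.0}, {"id": 2, "amount": 9800.0}]


def flag_large(record: Record) -> str:
    # Stand-in for a trained model: flags unusually large amounts.
    return "review" if record["amount"] > 5000 else "ok"


if __name__ == "__main__":
    job = Integration(read_data=read_export, score=flag_large)
    for record, label in job.run_once():
        print(record["id"], label)
```

Swapping the data source or the model means passing a different callable; the trigger never needs to know which one is in use.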

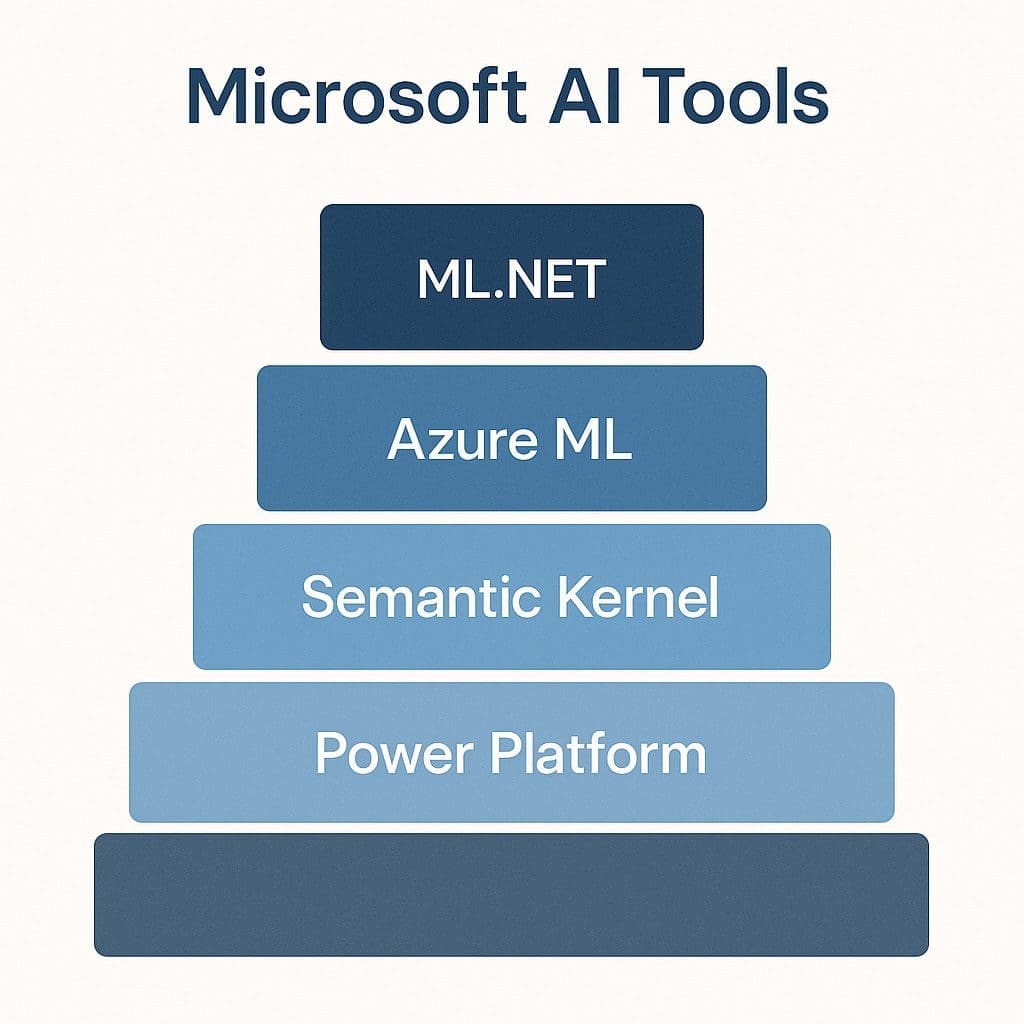

Microsoft Technologies That Bridge the Gap

Microsoft provides a comprehensive and largely backward-compatible ecosystem for AI + legacy integration:

- ML.NET – Bring machine learning into .NET apps without leaving your stack

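- Azure Machine Learning – Full-service ML platform that can deploy models to managed cloud endpoints or to Kubernetes clusters, including Azure Arc-enabled clusters on-premises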

- .NET Worker Services / Web APIs – Build wrappers around AI models to expose via HTTP

- Power Platform – Use low-code tools like Power Automate to integrate across systems

- Semantic Kernel – Orchestrate AI tasks using embeddings, memory, and connectors

If your organization uses Microsoft technologies, you likely already have many of the tools you need to get started.

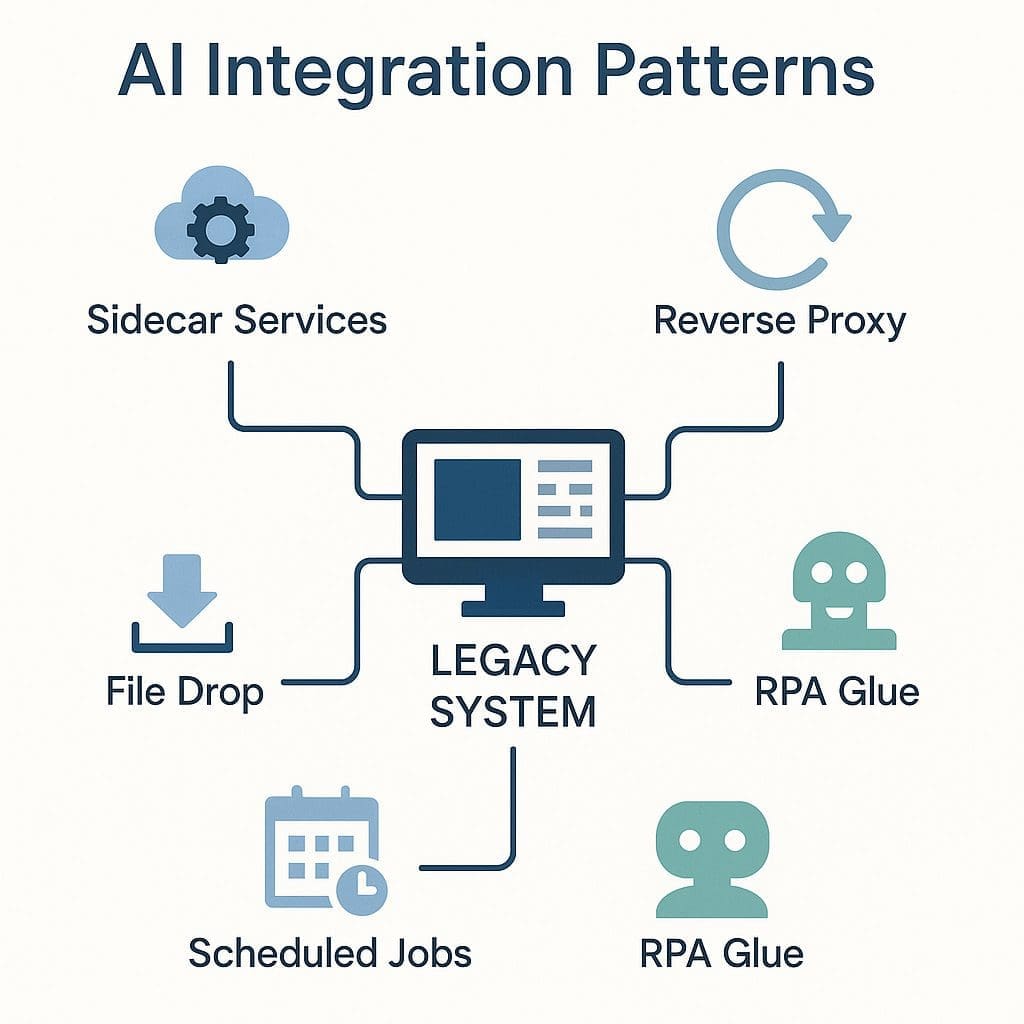

Integration Patterns That Work

| Pattern | Description | Technologies | Best For |
|---|---|---|---|
| Sidecar Services | AI model runs in parallel; legacy system calls it | ML.NET, .NET APIs | Predictive models, NLP, etc. |
| Reverse Proxy | Intercepts calls to add intelligence | Azure API Management + AI | Classification, transformation |
| File Drop Pipelines | AI reads from file exports | Azure Logic Apps, .NET Service | Batch processing, air-gapped systems |
| Scheduled Jobs | Nightly/weekly batch ML runs | Windows Task Scheduler, Azure Functions | Forecasting, batch scoring |
| RPA Glue | Bots simulate user interaction | Power Automate Desktop | UI-only legacy systems |

Each pattern lets you preserve your core systems while embedding intelligent behavior.
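The file-drop pipeline is often the easiest pattern to start with, because the legacy system only has to export a file. A minimal Python sketch, assuming CSV exports land in an inbox folder and the legacy side picks up results from an outbox folder; the folder layout, the `customer_id` column, and `score_row` are hypothetical:

```python
import csv
import shutil
from pathlib import Path


def score_row(row: dict) -> str:
    # Placeholder for a real model call: flags rows with no customer id.
    return "needs_review" if not row.get("customer_id") else "ok"


def process_drop_folder(inbox: Path, outbox: Path, archive: Path) -> int:
    """Score each CSV export in `inbox`, write results to `outbox`, then archive the input."""
    outbox.mkdir(parents=True, exist_ok=True)
    archive.mkdir(parents=True, exist_ok=True)
    processed = 0
    for export in sorted(inbox.glob("*.csv")):
        with export.open(newline="") as src:
            reader = csv.DictReader(src)
            rows = list(reader)
            fieldnames = list(reader.fieldnames or []) + ["ai_label"]
        result = outbox / f"{export.stem}.scored.csv"
        tmp = result.with_suffix(".tmp")
        # Write to a temp file first so the legacy reader never sees a half-written result.
        with tmp.open("w", newline="") as dst:
            writer = csv.DictWriter(dst, fieldnames=fieldnames)
            writer.writeheader()
            for row in rows:
                writer.writerow({**row, "ai_label": score_row(row)})
        tmp.replace(result)
        # Move the input aside so the next run does not score it twice.
        shutil.move(str(export), str(archive / export.name))
        processed += 1
    return processed
```

A Windows Task Scheduler job or an Azure Functions timer trigger could call `process_drop_folder` on a schedule, which turns the same code into the Scheduled Jobs pattern.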

Tools & Libraries That Make This Easy (.NET-Centric)

Microsoft has invested heavily in making its ecosystem AI-friendly—without requiring a rip-and-replace strategy. Here are the core tools and libraries you can lean on to bring AI to your legacy systems:

ML.NET

- Use case: Embedding predictive models inside .NET apps

- Why it matters: Keeps everything inside the C# ecosystem—no need to switch to Python

- Best for: Classification, regression, forecasting, anomaly detection

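- Bonus: Works offline and runs on Windows, Linux, and macOS; because it targets .NET Standard 2.0, apps on very old runtimes such as .NET Framework 3.5 should call it through a sidecar service instead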

ONNX Runtime

- Use case: Deploying pre-trained models across platforms

- Why it matters: Runs models exported to the ONNX format from frameworks such as TensorFlow, PyTorch, or scikit-learn

- Best for: High-performance inference in .NET apps

Azure Machine Learning

- Use case: Full model lifecycle—training, tuning, deploying, monitoring

- Why it matters: Enterprise-grade tooling for experiment tracking, a model registry, and managed deployment

- Best for: Organizations ready to scale ML across teams or systems

.NET Worker Services and Web APIs

- Use case: Run AI tasks as background processes or expose via REST endpoints

- Why it matters: Runs alongside your legacy architecture as a separate process or HTTP endpoint

- Best for: Sidecar AI, orchestration, or internal service buses

Power Automate and Power Platform

- Use case: No-code/low-code automation, data extraction, and RPA

- Why it matters: Extends the reach of AI without extensive developer effort

- Best for: Bridging gaps between systems when APIs don’t exist

Semantic Kernel

- Use case: AI orchestration layer for calling plugins, APIs, and LLMs

- Why it matters: Gives AI agents memory and context, plus the ability to call your own code and APIs as plugins

- Best for: Complex workflows and hybrid AI/ML/NLP tasks

When used together, these tools make .NET one of the most flexible environments for bridging legacy systems with intelligent automation.

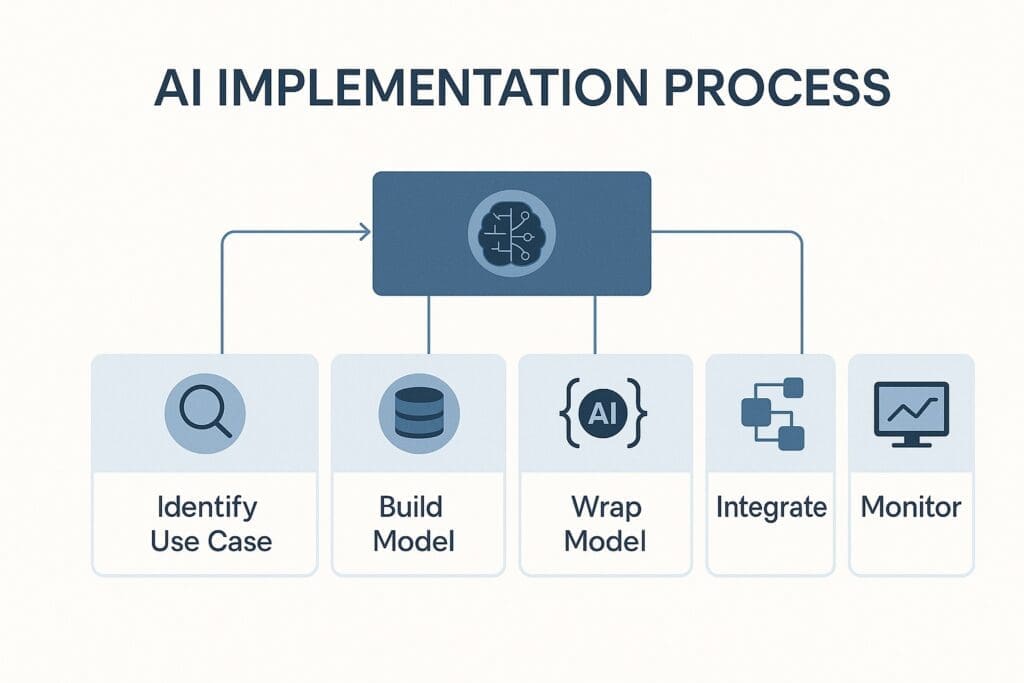

Step-by-Step Process to Add AI to Legacy Systems

Step 1: Identify the Pain Point or Opportunity

Don’t start with technology. Start with impact. What repetitive task, prediction, decision, or classification problem could benefit from AI? Look for pain points that are:

- Labor-intensive

- Prone to human error

- Requiring frequent decision-making

- Dependent on historical patterns or data

Example: “We get 1,000 emails a week and spend hours manually categorizing and routing them.”

Step 2: Extract the Data or Signal

Legacy systems may not expose an API, but that doesn’t mean they’re closed off. Data can usually be extracted by:

- Database queries

- Log file exports

- CSV reports

- Screen scraping (via RPA)

- Email parsing

At this stage, define what data you need and how frequently you need it.
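When the source is a database, "how frequently" usually translates into incremental extraction: pull only the rows that changed since the last run. A minimal Python sketch using the standard-library `sqlite3` module as a stand-in for SQL Server or DB2; the `emails` table and its columns are hypothetical, and `modified_at` is assumed to be an ISO-8601 string so that text comparison matches time order:

```python
import sqlite3
from typing import List, Tuple


def extract_since(conn: sqlite3.Connection, watermark: str) -> Tuple[List[tuple], str]:
    """Return rows modified after `watermark`, plus the watermark to store for the next run."""
    rows = conn.execute(
        "SELECT id, subject, body, modified_at FROM emails "
        "WHERE modified_at > ? ORDER BY modified_at",
        (watermark,),  # parameterized query: never concatenate values into SQL
    ).fetchall()
    # Advance the watermark to the newest row seen; keep the old one if nothing changed.
    new_watermark = rows[-1][3] if rows else watermark
    return rows, new_watermark
```

Persist the returned watermark between runs. If several rows can share a timestamp, a strictly increasing key (such as an identity column) is a safer watermark, because `>` skips rows that arrive later with a timestamp equal to the stored one.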

Step 3: Build and Train the AI Model

Depending on your project scope:

- Use ML.NET to train directly within your .NET project

- Use Azure Machine Learning for larger-scale or collaborative modeling

- Start from pretrained models, fine-tune them for your domain in their original framework, and export them to ONNX for inference in .NET

Important: Don’t over-optimize. Aim for useful and accurate—not perfect.
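For the email-routing example from Step 1, a reasonable first model is a simple text classifier. In ML.NET you would typically combine its text featurization with a multiclass trainer; to show the underlying idea without dependencies, here is a from-scratch multinomial naive Bayes in Python. `NaiveBayesRouter` and the queue labels it learns are illustrative, not part of any library:

```python
import math
import re
from collections import Counter, defaultdict
from typing import Dict, List, Optional, Tuple


def tokenize(text: str) -> List[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


class NaiveBayesRouter:
    """Multinomial naive Bayes with Laplace (add-one) smoothing: a simple text baseline."""

    def fit(self, examples: List[Tuple[str, str]]) -> "NaiveBayesRouter":
        self.class_counts = Counter(label for _, label in examples)
        self.word_counts: Dict[str, Counter] = defaultdict(Counter)
        for text, label in examples:
            self.word_counts[label].update(tokenize(text))
        self.vocab = {w for counts in self.word_counts.values() for w in counts}
        self.total_docs = len(examples)
        return self

    def predict(self, text: str) -> Optional[str]:
        words = [w for w in tokenize(text) if w in self.vocab]  # ignore unseen words
        best_label, best_score = None, -math.inf
        for label, n_docs in self.class_counts.items():
            counts = self.word_counts[label]
            denom = sum(counts.values()) + len(self.vocab)
            score = math.log(n_docs / self.total_docs)  # log prior
            for w in words:
                score += math.log((counts[w] + 1) / denom)  # smoothed log likelihood
            if score > best_score:
                best_label, best_score = label, score
        return best_label
```

Measure a baseline like this against the current manual process on held-out emails before investing in anything more sophisticated.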

Step 4: Wrap the Model in a Deployable Component

Package the trained model into a format your legacy system can communicate with:

- A .NET console app or worker service

- A REST API built with ASP.NET Core

- A shared class library (NuGet)

This lets you call the AI like any other service or component.
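A REST wrapper can be very small. The sketch below uses only Python's standard library (`http.server`) to expose a hypothetical `/score` endpoint; in a .NET shop the equivalent would be an ASP.NET Core minimal API. The `predict` function is a placeholder for your trained model:

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def predict(payload: dict) -> dict:
    # Placeholder model: swap in a call to your trained model.
    text = str(payload.get("text", "")).lower()
    return {"label": "billing" if "invoice" in text else "general"}


class ScoringHandler(BaseHTTPRequestHandler):
    def do_POST(self) -> None:
        if self.path != "/score":
            self.send_error(404)
            return
        length = int(self.headers.get("Content-Length", 0))
        try:
            payload = json.loads(self.rfile.read(length) or b"{}")
        except ValueError:  # covers malformed JSON and undecodable bytes
            self.send_error(400, "Body must be JSON")
            return
        if not isinstance(payload, dict):
            self.send_error(400, "Body must be a JSON object")
            return
        body = json.dumps(predict(payload)).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format: str, *args) -> None:
        pass  # silence per-request console logging in this sketch


def make_server(port: int = 8080) -> HTTPServer:
    # Bind to localhost so only processes on the same host (the legacy app) can call it.
    return HTTPServer(("127.0.0.1", port), ScoringHandler)
```

Start it with `make_server().serve_forever()` and have the legacy app POST JSON such as `{"text": "Invoice overdue"}` to `http://127.0.0.1:8080/score`. `HTTPServer` handles one request at a time, which suits a prototype; for production load, `ThreadingHTTPServer` or ASP.NET Core is a better fit.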

Step 5: Integrate Back into the Legacy System

Now that the AI is ready, plug it in:

- Schedule jobs to run periodically (batch)

- Trigger actions via API calls (real-time)

- Insert insights into dashboards, alerts, or workflows

You’re not replacing the system—you’re enhancing it.
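For batch scoring, one common way to feed results back is an idempotent write into a staging table the legacy system already reads, so re-running a job overwrites earlier results instead of duplicating them. A minimal Python sketch using `sqlite3` as a stand-in (on SQL Server the same upsert is usually a `MERGE` statement); the `ai_predictions` table and its columns are hypothetical:

```python
import sqlite3
from datetime import datetime, timezone
from typing import Iterable, Tuple


def write_back(conn: sqlite3.Connection,
               predictions: Iterable[Tuple[int, str, float]],
               model_version: str) -> None:
    """Upsert (record_id, label, confidence) rows into a staging table the legacy system reads."""
    scored_at = datetime.now(timezone.utc).isoformat()
    conn.execute(
        "CREATE TABLE IF NOT EXISTS ai_predictions ("
        " record_id INTEGER PRIMARY KEY, label TEXT, confidence REAL,"
        " model_version TEXT, scored_at TEXT)"
    )
    with conn:  # one transaction: either every row lands or none do
        conn.executemany(
            "INSERT OR REPLACE INTO ai_predictions VALUES (?, ?, ?, ?, ?)",
            [(rid, label, conf, model_version, scored_at) for rid, label, conf in predictions],
        )
```

Recording `model_version` next to each prediction also makes it easy to trace which model produced a given result later.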

Step 6: Monitor, Maintain, and Iterate

AI is not “set and forget.”

- Log performance and usage

- Monitor for drift or anomalies

- Retrain as needed (weekly, monthly, quarterly)

- Solicit user feedback and refine predictions

Make AI a living part of your legacy ecosystem—not a bolt-on experiment.
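One common way to monitor for drift is the Population Stability Index (PSI), which compares the distribution of a feature or score in recent data against the training-time baseline. A minimal Python sketch, assuming fixed bin edges chosen from the baseline (for example, its deciles) and sorted in ascending order:

```python
import math
from typing import List, Sequence


def psi(baseline: Sequence[float], recent: Sequence[float],
        edges: Sequence[float], eps: float = 1e-6) -> float:
    """Population Stability Index of `recent` against `baseline`, using fixed bin edges."""

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * (len(edges) + 1)
        for v in values:
            counts[sum(v > edge for edge in edges)] += 1  # bin index = number of edges below v
        return [max(c / len(values), eps) for c in counts]  # eps avoids log(0)

    base, cur = proportions(baseline), proportions(recent)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))
```

A widely used rule of thumb treats PSI below 0.1 as stable, 0.1 to 0.25 as worth watching, and above 0.25 as a signal to investigate or retrain; treat those cut-offs as starting points rather than standards.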

Real-World Scenarios and Case Studies

| Industry | AI Use Case | Legacy System | Result |
|---|---|---|---|
| Healthcare | Claims anomaly detection | COBOL + SQL Server | Reduced audit workload by 85% |
| Manufacturing | Predictive maintenance | PLC + Access DB | Avoided $1.2M in unplanned downtime |
| Government | Email triage & auto-routing | Lotus Notes + Exchange | Saved 40 staff hours per week |
| Utilities | Document classification & tagging | File shares + SharePoint 2010 | Achieved regulatory compliance & searchability |
| Financial | Loan default prediction | Mainframe + Excel workflows | Reduced delinquency rates by 20% |
| Retail | Demand forecasting | On-prem ERP + flat file export | Improved inventory accuracy by 30% |

These are real-world examples of AI working with legacy—not replacing it. Notice a pattern:

- The legacy system remains operational

- AI is integrated through intermediary services or exports

- Impact is measurable—productivity, accuracy, cost savings

Organizations didn’t wait to modernize—they started modernizing outcomes with AI while keeping their infrastructure intact.

Let these examples serve as templates or conversation starters for your own AI+legacy strategy.

Security, Risk, and Governance

Deploying AI Without Compromising Control

Legacy systems are often kept around not because of nostalgia, but because of compliance, security, or governance requirements. AI adoption must respect those boundaries.

Here’s how to do it:

On-Premises or Hybrid Deployment Options

- ML.NET: Can run entirely on-premises with no internet connectivity.

- Azure ML: Supports hybrid and private cloud deployment models.

- Containers: Package and deploy AI in Docker containers on internal infrastructure.

This ensures your AI operates inside existing firewalls and security perimeters.

Auditability and Explainability

- Use model versioning and immutable logs to track all AI decisions.

- Incorporate model explainers (e.g., SHAP, LIME) to show how decisions are made.

- Ensure models used in regulated workflows meet audit criteria (especially in finance, healthcare, and government).
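"Immutable" logging can be approximated in application code with a hash chain: each entry includes the hash of the previous one, so any later edit becomes detectable. A minimal Python sketch; the `AuditLog` class and its fields are hypothetical, and in practice the log would also live on write-once or tightly access-controlled storage:

```python
import hashlib
import json
from typing import List


class AuditLog:
    """Append-only log of AI decisions where each entry commits to the previous one.

    Editing or deleting a past entry makes verify() fail. This detects tampering;
    it does not prevent it on its own.
    """

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: List[dict] = []

    def append(self, model_version: str, inputs: dict, prediction: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        record = {"model_version": model_version, "inputs": inputs,
                  "prediction": prediction, "prev_hash": prev_hash}
        payload = json.dumps(record, sort_keys=True).encode("utf-8")
        record["hash"] = hashlib.sha256(payload).hexdigest()
        self.entries.append(record)
        return record

    def verify(self) -> bool:
        prev_hash = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash:
                return False  # chain broken: an entry was removed or reordered
            payload = json.dumps(body, sort_keys=True).encode("utf-8")
            if hashlib.sha256(payload).hexdigest() != entry["hash"]:
                return False  # entry contents changed after it was written
            prev_hash = entry["hash"]
        return True
```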

Role-Based Access and Data Governance

- Restrict who can train, deploy, or update models

- Track lineage of data sources and model outputs

- Encrypt data in transit and at rest

- Implement row-level security and data masking as needed
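Masking and pseudonymization can be applied before data ever reaches a model or a dashboard. A minimal Python sketch: `pseudonymize` uses a keyed hash (HMAC-SHA-256), which stays stable across extracts so records can still be joined, while `mask` hides all but the last few characters. Both function names are hypothetical, and the key is assumed to come from a secret store such as Azure Key Vault rather than from code:

```python
import hashlib
import hmac


def pseudonymize(value: str, key: bytes) -> str:
    """Replace an identifier with a keyed hash: same input and key give the same token."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def mask(value: str, visible: int = 4, char: str = "*") -> str:
    """Show only the last `visible` characters, e.g. for display to lower-privilege roles."""
    if len(value) <= visible:
        return char * len(value)
    return char * (len(value) - visible) + value[-visible:]
```

The key matters: identifiers such as account numbers have few enough possible values that an unkeyed hash could be reversed by hashing every candidate and matching the results.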

Regulatory Compliance Alignment

AI integrations must align with:

- HIPAA (for healthcare)

- GDPR (for personal data)

- SOX (for financial disclosures)

- CJIS, FISMA, or ITAR (for public sector and defense)

Microsoft’s AI stack provides features to support these needs across cloud, hybrid, and on-prem solutions.

Human-in-the-Loop Safeguards

In critical decision paths:

- Include humans for final validation or override

- Surface AI confidence scores

- Log overrides and compare performance over time

The goal: AI that is transparent, accountable, and complements—not replaces—human oversight.
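Human validation, visible confidence, and logged overrides fit together in a few lines. A minimal Python sketch, assuming the model returns a label plus a confidence score; `ReviewQueue`, `Decision`, and the 0.85 default threshold are hypothetical, and the threshold should be tuned on your own validation data:

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Decision:
    record_id: int
    ai_label: str
    confidence: float              # surfaced to the reviewer alongside the suggestion
    needs_review: bool
    final_label: Optional[str] = None


@dataclass
class ReviewQueue:
    threshold: float = 0.85        # hypothetical cut-off; tune on validation data
    decisions: List[Decision] = field(default_factory=list)

    def route(self, record_id: int, ai_label: str, confidence: float) -> Decision:
        # Low-confidence predictions wait for a person; the rest are applied directly.
        needs_review = confidence < self.threshold
        decision = Decision(record_id, ai_label, confidence, needs_review,
                            final_label=None if needs_review else ai_label)
        self.decisions.append(decision)
        return decision

    def resolve(self, record_id: int, human_label: str) -> None:
        for decision in self.decisions:
            if decision.record_id == record_id:
                decision.final_label = human_label
                return
        raise KeyError(record_id)

    def override_rate(self) -> float:
        # Share of reviewed items where the human disagreed with the AI.
        reviewed = [d for d in self.decisions if d.needs_review and d.final_label is not None]
        if not reviewed:
            return 0.0
        return sum(d.final_label != d.ai_label for d in reviewed) / len(reviewed)
```

A rising override rate is an early, business-facing signal that the model needs attention.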

Security is not a reason to delay AI adoption. It’s a reason to adopt it responsibly, using the robust tools already available in your enterprise environment.

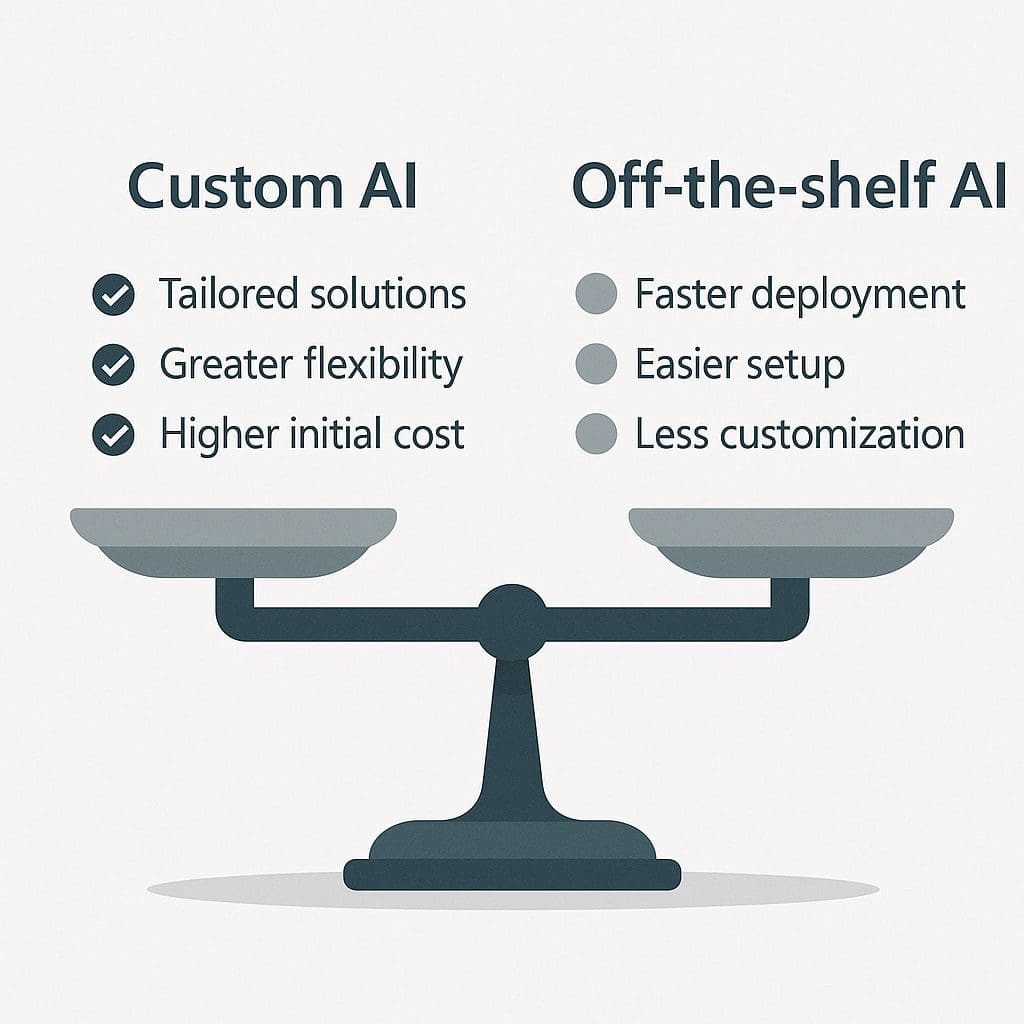

Build vs. Buy: How to Evaluate Solutions

When introducing AI into a legacy environment, one of the first strategic decisions is whether to build custom solutions using your internal teams or to buy off-the-shelf AI products. Each approach has trade-offs in flexibility, speed, cost, and ownership.

| Criteria | Custom (.NET) AI | Off-the-Shelf AI |
|---|---|---|
| Flexibility | Total control over logic, data, and UI | Limited to vendor features and roadmap |
| Integration | Tailored to your environment and systems | May require workarounds or RPA bridges |
| Cost | Higher up-front cost; often lower long-term cost | Faster to deploy but may have high licensing fees |
| Ownership | Full intellectual property (IP) retained | Data and logic often owned by vendor |
| Security | Fully under your governance policies | Must vet vendor’s compliance rigorously |
| Scalability | Grows with your architecture | May be constrained by subscription tiers |
| Talent Required | Needs internal or contract dev resources | Easier for teams with minimal AI expertise |

When to Build

- You have specific workflows or constraints that commercial tools don’t support

- You already use .NET and Microsoft infrastructure extensively

- You need to retain control over models, code, and data

- You want to gradually extend AI across multiple systems

When to Buy

- You need rapid time-to-value with minimal engineering effort

- You’re solving a common problem (e.g., chatbot, invoice processing)

- You’re piloting AI and want to minimize upfront risk

Hybrid Option: Buy to Prototype, Build to Scale

Some organizations start by buying to validate value, then build internal solutions when scale, customization, or cost control become critical.

Whatever path you choose, ensure your strategy includes:

- Integration plan (data pipelines, APIs, outputs)

- Governance, security, and monitoring

- Clear handoff between systems or vendors

- Exit plan if switching from vendor to internal build later

In legacy settings, the ability to own your stack and evolve incrementally often makes custom AI development in .NET the most sustainable long-term strategy.

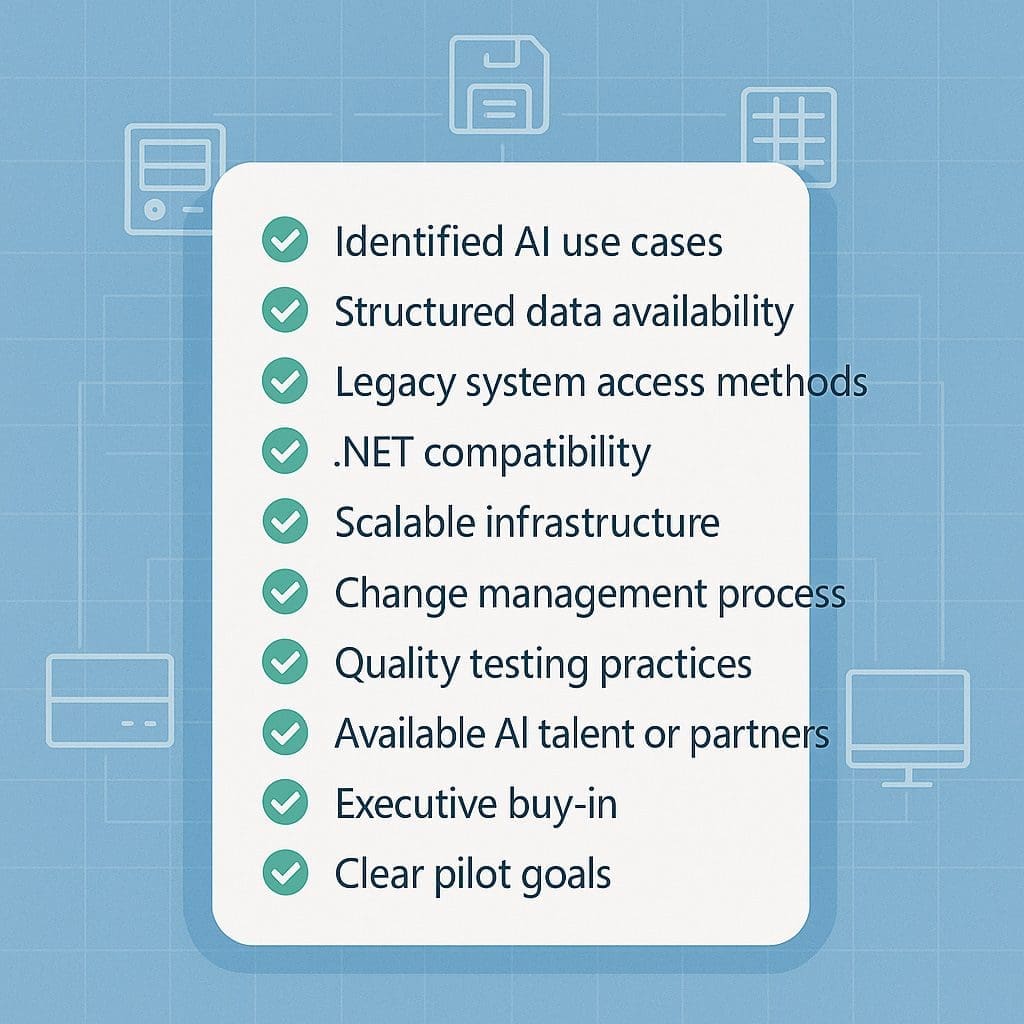

Checklist: Is Your Legacy System AI-Ready?

Use this quick diagnostic to assess whether your environment is prepared for a practical and productive AI integration. Even if you can’t check every box today, this checklist helps identify where to start.

| Question | ✅ |
|---|---|
| Do you have access to structured or semi-structured data from your system? | |
| Can you extract data via queries, exports, reports, or logs? | |
| Do you have a business problem that could benefit from prediction, classification, or automation? | |
| Can you deploy .NET applications on a server, VM, or cloud platform? | |
| Is there at least one reliable trigger (file drop, user input, schedule, API) to start an AI task? | |
| Can your system accept updates, results, or recommendations from another service? | |
| Do you have a secure location (on-prem or cloud) where AI models can run? | |
| Does your team have access to .NET development resources or partners? | |
| Are you tracking data quality and changes over time? | |
| Is your IT team open to incremental improvements without full system replacement? | |

Scoring:

- 8–10 YES: You’re highly AI-ready — start with a proof of concept ASAP.

- 5–7 YES: You’re in a good place to begin pilot projects with clear scoping.

- 0–4 YES: Consider starting with RPA, Power Platform, or data extraction groundwork.

This checklist is less about technical perfection and more about practical capability. If you can move data, run .NET code, and solve a business problem—you can add AI.

The Business Case for Hybrid AI with Legacy Systems

AI isn’t just a technology trend—it’s a force multiplier for legacy environments. A well-implemented AI initiative can dramatically reduce costs, boost productivity, and surface new insights—without the upheaval of system migration.

Faster Time to Value

- AI overlays on legacy systems can be developed and deployed in weeks—not years.

- Pilot projects can deliver measurable ROI with minimal disruption.

- No need to wait for a full modernization program to finish.

Lower Risk

- Incremental AI integrations avoid the “big bang” risk of major system overhauls.

- You can test new capabilities in isolation and scale only when proven.

- Rollbacks are straightforward: AI services can be decoupled or disabled without disrupting the core system.
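Switching AI off without disrupting operations works only if the calling code keeps a fallback. A minimal Python sketch of that idea, assuming a hypothetical `legacy_rule` that stands in for today's routing logic, an `ai_enabled` flag read from configuration, and an `ai_route` callable that wraps the AI service call:

```python
import logging
from typing import Callable

logger = logging.getLogger("ai_routing")


def legacy_rule(record: dict) -> str:
    # Hypothetical stand-in for the rule the legacy process already applies.
    return "general_queue"


def route(record: dict, ai_enabled: bool, ai_route: Callable[[dict], str]) -> str:
    """Prefer the AI service when switched on; otherwise, or on any failure, use the legacy rule."""
    if not ai_enabled:
        return legacy_rule(record)
    try:
        return ai_route(record)
    except Exception:
        # Timeouts, bad responses, or an unavailable sidecar all degrade to today's behavior.
        logger.exception("AI routing failed; falling back to legacy rule")
        return legacy_rule(record)
```

Because the legacy rule stays in place, rolling back is a configuration change rather than a redeployment.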

Leverage Existing Investments

- Your legacy system already contains years—sometimes decades—of operational data.

- That data is fuel for AI, and you don’t have to migrate it to a new platform to gain insights; extracting what a model needs is enough.

- Combine new intelligence with the structure and stability of existing platforms.

Empower Employees

- Give workers new tools: smarter search, suggested next steps, predictive alerts.

- Automate rote or time-consuming tasks so people can focus on judgment and innovation.

- Bridge skill gaps: AI can enhance decision-making even for non-technical roles.

Modernize the Outcome, Not the System

- AI is often perceived as part of “modernization,” but you don’t need to modernize your system to modernize the result.

- Whether it’s document classification, forecasting, or fraud detection—the value is in the outcome, not the architecture.

Bottom line: AI lets you extend the life, value, and relevance of your legacy systems—without betting the farm on risky migrations.

Common Pitfalls and How to Avoid Them

Even well-intentioned AI initiatives can stall or backfire when paired with legacy systems—especially if they’re treated like modern greenfield projects. Here are the most common traps and how to sidestep them.

❌ Pitfall 1: Choosing the Wrong Use Case

- What goes wrong: Teams choose AI projects that are either too complex or too vague (e.g., “automate everything”).

- Avoid it: Start with narrow, high-impact tasks—classification, routing, scoring, ranking.

❌ Pitfall 2: Assuming a System Must Be Modernized First

- What goes wrong: Teams delay AI until they can fully modernize the core system.

- Avoid it: Build AI alongside what exists. Modernize outcomes, not infrastructure.

❌ Pitfall 3: Lack of Integration Planning

- What goes wrong: Models are trained but not connected to real systems or triggers.

- Avoid it: Plan from the start how data gets in, how predictions come out, and how results affect operations.

❌ Pitfall 4: No Monitoring or Feedback Loop

- What goes wrong: Models degrade over time, confidence erodes, users stop trusting outputs.

- Avoid it: Log predictions, compare with outcomes, and retrain periodically.

❌ Pitfall 5: AI in a Vacuum

- What goes wrong: Developers or data scientists operate in isolation from operations and IT.

- Avoid it: Include domain experts, legacy system owners, and IT stakeholders from day one.

❌ Pitfall 6: Overengineering

- What goes wrong: Teams use too many tools, frameworks, or services—leading to unnecessary complexity.

- Avoid it: Use what you already have: .NET, SQL Server, Excel, or Power Platform.

❌ Pitfall 7: Not Getting User Buy-In

- What goes wrong: AI insights are ignored or resisted by the people meant to use them.

- Avoid it: Involve users in testing, show results early, and iterate with their input.

Avoiding these pitfalls is not about perfection—it’s about starting small, learning fast, and building sustainable momentum. AI in legacy environments succeeds when it’s practical, humble, and user-centered.

Frequently Asked Questions (FAQs)

Can we really use AI if our systems are 20+ years old?

Yes. Many legacy systems still manage structured data, which is what most predictive and classification use cases need. With modern .NET tools, even systems from the 1990s can benefit from these models.

What if we can’t expose our system to the cloud?

No problem. ML.NET and ONNX Runtime support fully on-prem deployments. You can keep AI models behind your firewall and maintain full control.

What are the simplest AI use cases to start with?

Email routing, document classification, anomaly detection, AI assistants, chatbots answering FAQs, Intelligent Document Processing (reading and converting forms), and demand forecasting are common, high-ROI starting points.

How does ML.NET help with legacy integration?

ML.NET runs natively in .NET apps. You can embed trained models directly into console apps, services, or APIs that interact with your legacy systems.

Do we need a data lake to get started?

Not at all. AI models can be trained on CSVs, SQL exports, or even log files. Structured and accessible data matters more than fancy infrastructure.

How much does this typically cost to implement?

Pilot projects built on your existing Microsoft stack can cost less than $25,000 in internal time and resources. Costs scale with scope and ambition.

Can AI make decisions inside legacy systems?

Yes—indirectly. You can run AI externally and feed the decision back into the legacy system through data updates, triggers, or UI overlays.

How do we avoid “shadow AI” or unmanaged experiments?

Implement governance practices: approval workflows, logging, version control, and audit trails. Treat models like production code.

Is RPA a good long-term solution?

It’s a great short- to mid-term bridge when APIs don’t exist. Over time, aim to replace RPA with more resilient integrations.

How does Semantic Kernel help legacy systems?

It lets you orchestrate AI tasks across different systems, add memory and context to workflows, and chain together complex actions, which is useful when a single business process spans several legacy systems.

What skills does our team need to have?

.NET development, basic understanding of machine learning workflows, and system integration skills. You don’t need a PhD in data science.

Can this approach scale across multiple systems?

Yes. Once your AI layer is abstracted (e.g., through APIs or services), you can scale horizontally across business units or departments.

How do we test and validate AI outcomes in legacy settings?

Use A/B testing, historical data replay, and human-in-the-loop verification. Log predictions and actual outcomes to refine over time.
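Historical replay is usually the cheapest of these tests: run the model over past records whose real outcomes are already known and compare. A minimal Python sketch; `replay` is a hypothetical helper, and the model is passed in as any callable that returns a label:

```python
from collections import Counter
from typing import Callable, Iterable, Tuple


def replay(history: Iterable[Tuple[dict, str]], predict: Callable[[dict], str]) -> dict:
    """Score past (record, actual_outcome) pairs and report accuracy plus a confusion count."""
    confusion: Counter = Counter()
    total = correct = 0
    for record, actual in history:
        predicted = predict(record)
        confusion[(actual, predicted)] += 1  # keyed by (actual, predicted)
        total += 1
        correct += predicted == actual
    return {"accuracy": correct / total if total else 0.0, "confusion": dict(confusion)}
```

The model must be trained only on data older than the replayed period; otherwise the accuracy figure is inflated by records it has already seen.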

How do we keep our models secure?

Run them on-prem, encrypt inputs/outputs, restrict access via RBAC, and use secure API endpoints.

Who should lead this initiative internally?

Ideally a cross-functional team: IT lead + business owner + developer. One person should own coordination, but shared accountability drives success. See our first book for guidance on building an AI Innovation Team.

Final Thoughts: Legacy Isn’t Dead. It’s Data-Rich.

Legacy systems aren’t the enemy of innovation. They’re often the most valuable systems in your entire organization—because they contain your history, your process knowledge, and your proprietary edge. The challenge isn’t replacing them. The challenge is unlocking their value.

That’s where AI comes in.

With .NET, ML.NET, Azure ML, Semantic Kernel, and other Microsoft-centric tools, your organization can bring intelligence to its infrastructure—without replatforming, rehosting, or rewriting from scratch. You can:

- Modernize outcomes instead of code

- Augment human decision-making

- Reduce inefficiencies and hidden costs

- Gain competitive advantage without abandoning your existing systems

Most importantly: You can start now. Today. With the systems you already have.

The future of AI isn’t just about what’s new—it’s about making the old work smarter.

Want more information?

- Visit the hub to see all the resources we offer

- Want help identifying your first AI use case? Contact us