How Medium-to-Large .NET Teams Can Get Started with AI

If you’re responsible for innovation, development, or IT strategy in a medium-to-large organization running on .NET, the AI conversation has likely landed on your desk—and not always gently. Maybe you’re hearing buzzwords from the boardroom, pressure from business units, or even a subtle fear that you’re falling behind. We get it.

There’s no shortage of confusion, hype, and misinformation. From vendor pitches that overpromise, to headlines that suggest your team will be obsolete, the noise can make even the most seasoned IT leader hesitate. But here’s the good news: you’re already in a stronger position than most.

You’ve been building enterprise-grade, data-driven .NET applications for years. You know your systems, your data pipelines, and your business logic. That foundational experience puts you miles ahead of teams trying to bolt AI onto consumer apps or low-quality data.

This guide is for you. It’s built around your reality—your tools, your infrastructure, your challenges. It outlines how .NET teams can move from uncertainty to action, from curiosity to prototypes, using Microsoft-first technologies and pragmatic steps.

We’ll help you:

- Identify high-value AI use cases

- Leverage Microsoft tools your team already knows

- Build a lightweight but effective AI innovation team

- Deliver real results in weeks—not years

Let’s cut through the fog and help you take the first confident step into AI.

The Business Case for AI in .NET

Artificial Intelligence is no longer a futuristic ideal—it’s a practical solution to everyday inefficiencies, decision bottlenecks, and resource constraints. For organizations that have invested in .NET for decades, the business case for AI is not about reinventing the wheel. It’s about upgrading the vehicle that’s already running reliably.

Your .NET ecosystem is mature. You’ve built processes, automation, and systems that reflect your business logic. But many of those systems still depend on manual inputs, human triage, or static rules that can’t adapt in real-time. AI brings the missing layer of intelligence.

Imagine:

- Customer service platforms that route inquiries based on meaning, not just keywords

- Finance systems that predict which invoices will be late

- Legal workflows that pre-screen contracts based on risk

- HR platforms that score resumes using past hiring outcomes

These are not moonshot ideas. These are achievable enhancements when AI meets the structured, well-maintained environments common in .NET-based businesses.

AI also addresses a growing operational need: scaling without proportional hiring. As labor costs rise and skilled workers become harder to retain, the pressure to do more with less intensifies. AI allows you to increase throughput, reduce errors, and enhance responsiveness—without adding headcount.

Finally, AI positions your team as innovation leaders, not just maintainers. Internally, it signals adaptability. Externally, it signals competitiveness.

The best part? Microsoft has already laid much of the groundwork. With tools like ML.NET, Azure Cognitive Services, and Azure OpenAI, you can start implementing intelligent features using familiar languages and hosting platforms.

If you’ve ever thought, “We should be doing more with AI, but we don’t know where to start,” this is where to start.

✅ If you can consume a REST API and work with JSON, you’re ready.

Use Case Identification

Choosing the right starting point is critical. Many AI projects fail not because of poor technology, but because they chase the wrong problem. In a .NET environment, you’re likely sitting on dozens of viable use cases—you just need a process to surface the best ones.

✅ Microsoft’s ecosystem is designed for enterprise-grade security and compliance.

What Makes a Good First Use Case?

Start with problems that are:

- Repetitive and Rule-Based – If a task follows clear steps, it’s a candidate for automation or augmentation.

- Data-Rich – You need data to train or feed AI models. Focus on processes with historical records.

- Low-Risk but High-Visibility – Pick a use case that isn’t mission-critical, but shows off AI’s potential.

- Painful but Boring – Where do employees waste hours doing low-value work?

- Suited to a New Junior Employee – If you hired a junior employee tomorrow, which tasks would you hand them first?

You’re not looking for “moonshot” ideas. You’re looking for impactful improvements with short feedback loops.

✅ Use AI to support decision-making while you improve data quality over time.

Framework: The 3-Lens Filter

Use these lenses to qualify and rank ideas:

- Operational Lens: Does it affect daily work volume? Will automation relieve capacity pressure?

- Strategic Lens: Is it tied to a departmental goal (e.g., response time, cost reduction)?

- Technical Lens: Is the data accessible? Is the process stable and well-understood?

Run each candidate through these filters. A use case that hits all three? Move it to the top of your roadmap.

✅ Treat AI like a digital teammate, not a threat.

Sample Use Cases by Department

| Department | Pain Point | AI Solution |
|---|---|---|
| Finance | Manual invoice coding | Document classification and auto-tagging |
| HR | Screening resumes | NLP-based resume scoring |
| Customer Support | Long triage times | Email/ticket classification + response drafting |
| Legal | Contract review overload | Clause extraction and risk scoring |
| Operations | Demand variability | Forecasting models using ML.NET |
| IT Helpdesk | Password resets, repeated tickets | AI-driven chatbot with handoff logic |

✅ Tip: Don’t try to guess. Interview stakeholders in each department. Ask: “If we could give you a smart assistant for one task—what would it do?”

✅ Start with a capped budget or a fixed sprint. Measure results before you scale.

Use Case Matrix (Internal Tool Suggestion)

To formalize this process, use a spreadsheet to:

- List all potential use cases

- Score each on value, feasibility, urgency, visibility

- Add notes on stakeholders, data sources, blockers
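If the spreadsheet outgrows manual sorting, the same scoring can be sketched in a few lines of C#. This is only an illustration: the 1 to 5 scale, the weights, and the sample rows are assumptions made for the example, not values from this guide.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// One row of the use case matrix. Each score is on a 1-5 scale (an assumption).
public record UseCase(string Name, int Value, int Feasibility, int Urgency, int Visibility);

public static class UseCaseMatrix
{
    // Hypothetical weights; adjust them to reflect your own priorities.
    public const int ValueWeight = 4;
    public const int FeasibilityWeight = 3;
    public const int UrgencyWeight = 2;
    public const int VisibilityWeight = 1;

    // Weighted total: minimum 10, maximum 50 with the weights above.
    public static int Score(UseCase u) =>
        u.Value * ValueWeight
        + u.Feasibility * FeasibilityWeight
        + u.Urgency * UrgencyWeight
        + u.Visibility * VisibilityWeight;

    // Highest-scoring candidates first.
    public static IReadOnlyList<UseCase> Rank(IEnumerable<UseCase> candidates) =>
        candidates.OrderByDescending(Score).ToList();
}

public static class Program
{
    public static void Main()
    {
        // Made-up sample scores for three ideas from the department table.
        var candidates = new[]
        {
            new UseCase("Invoice coding", 4, 5, 3, 3),
            new UseCase("Resume screening", 3, 2, 2, 4),
            new UseCase("Ticket triage", 5, 4, 4, 5),
        };

        foreach (var u in UseCaseMatrix.Rank(candidates))
            Console.WriteLine($"{UseCaseMatrix.Score(u),3}  {u.Name}");
    }
}
```

With these sample numbers, ticket triage (45) ranks above invoice coding (40) and resume screening (26). The ranking is only as good as the scores stakeholders agree on, so treat it as a conversation starter rather than a verdict.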

The right use case won’t just give you a win—it’ll build internal momentum, generate leadership interest, and turn skeptics into supporters.

Now that you’ve identified the right problem, let’s talk tools.

✅ Aim for internal productivity wins that build confidence.

Microsoft AI Ecosystem for .NET Teams

One of the greatest advantages for .NET organizations getting started with AI is this: you don’t have to reinvent your tech stack. Microsoft has quietly assembled one of the most powerful and interoperable AI ecosystems—already integrated with tools, languages, and services your team uses daily.

Below is an overview of the most relevant Microsoft AI offerings for medium-to-large organizations building in .NET:

1. ML.NET – Traditional Machine Learning in C#

Use this when: You need predictive models, classification, or regression based on your own datasets.

ML.NET is an open-source machine learning framework built for .NET developers. It supports tasks like:

- Binary classification (e.g., yes/no, approve/deny)

- Multi-class classification (e.g., categorizing tickets or documents)

- Regression (e.g., demand or revenue forecasting)

- Time series predictions

- Anomaly detection

Why it’s valuable:

- Trains models on your data

- Integrates directly into .NET applications

- No Python required

- Production-ready models in days
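To make the multi-class case concrete, here is a minimal sketch of a ticket classifier built with ML.NET. It assumes the Microsoft.ML NuGet package is installed; the `Ticket` and `TicketPrediction` classes, the category names, and the four training rows are invented for illustration. A real pilot would train on historical tickets and evaluate on held-out data.

```csharp
// Requires: dotnet add package Microsoft.ML
using System;
using System.Collections.Generic;
using Microsoft.ML;
using Microsoft.ML.Data;

// Hypothetical input row: the ticket text and its known category.
public class Ticket
{
    public string Text { get; set; } = "";
    public string Category { get; set; } = "";
}

// Hypothetical output row: reads the "PredictedLabel" column produced by the pipeline.
public class TicketPrediction
{
    [ColumnName("PredictedLabel")]
    public string PredictedCategory { get; set; } = "";
}

public static class TicketClassifier
{
    public static string TrainAndPredict(IEnumerable<Ticket> samples, string text)
    {
        var ml = new MLContext(seed: 0);
        IDataView data = ml.Data.LoadFromEnumerable(samples);

        // Category -> numeric key, text -> feature vector, train, then key -> category name.
        var pipeline = ml.Transforms.Conversion.MapValueToKey("Label", nameof(Ticket.Category))
            .Append(ml.Transforms.Text.FeaturizeText("Features", nameof(Ticket.Text)))
            .Append(ml.MulticlassClassification.Trainers.SdcaMaximumEntropy("Label", "Features"))
            .Append(ml.Transforms.Conversion.MapKeyToValue("PredictedLabel"));

        var model = pipeline.Fit(data);
        var engine = ml.Model.CreatePredictionEngine<Ticket, TicketPrediction>(model);
        return engine.Predict(new Ticket { Text = text }).PredictedCategory;
    }
}

public static class Program
{
    public static void Main()
    {
        // Far too little data for a useful model; it only shows the moving parts.
        var samples = new[]
        {
            new Ticket { Text = "I forgot my password", Category = "Access" },
            new Ticket { Text = "Cannot log in to the portal", Category = "Access" },
            new Ticket { Text = "Invoice total looks wrong", Category = "Billing" },
            new Ticket { Text = "Charged twice this month", Category = "Billing" },
        };

        var category = TicketClassifier.TrainAndPredict(samples, "Please reset my password");
        Console.WriteLine($"Predicted category: {category}");
    }
}
```

The whole flow stays inside a console app or an existing service, so the model can go through the same build and deployment pipeline as the rest of your code.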

2. Azure OpenAI Service – Large Language Models with Enterprise Controls

Use this when: You need powerful text generation, summarization, classification, or Q&A.

Azure OpenAI gives you access to OpenAI models like GPT-4 and GPT-3.5—but with the governance, security, and privacy controls enterprises demand.

Examples:

- Automatically summarize customer feedback

- Extract structured data from unstructured text

- Generate boilerplate reports or legal drafts

- Build copilots for internal tools

Why it’s valuable:

- Private deployment (your data is not used to train public models)

- Integrates with Azure Active Directory (now Microsoft Entra ID) and enterprise logging

- Works with REST APIs, SDKs, and .NET integrations
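As a rough sketch of the REST route, the example below summarizes one piece of customer feedback using only `HttpClient` and `System.Text.Json`. The environment variable names, the system prompt, and the sample feedback are placeholders invented for this example, and the `api-version` value is an assumption you should check against the current Azure OpenAI documentation. Key-based authentication is used for brevity.

```csharp
using System;
using System.Net.Http;
using System.Text;
using System.Text.Json;
using System.Threading.Tasks;

public static class AzureOpenAiSketch
{
    // Builds a chat completions request for one Azure OpenAI deployment.
    public static HttpRequestMessage BuildRequest(
        string endpoint, string deployment, string apiKey, string userText)
    {
        var url = $"{endpoint.TrimEnd('/')}/openai/deployments/{deployment}" +
                  "/chat/completions?api-version=2024-02-01"; // assumed version
        var body = JsonSerializer.Serialize(new
        {
            messages = new[]
            {
                new { role = "system", content = "Summarize the customer feedback in one sentence." },
                new { role = "user", content = userText },
            },
        });

        var request = new HttpRequestMessage(HttpMethod.Post, url)
        {
            Content = new StringContent(body, Encoding.UTF8, "application/json"),
        };
        request.Headers.Add("api-key", apiKey);
        return request;
    }

    // Pulls the assistant's text out of the JSON response body.
    public static string ExtractReply(string json)
    {
        using var doc = JsonDocument.Parse(json);
        return doc.RootElement.GetProperty("choices")[0]
            .GetProperty("message").GetProperty("content").GetString() ?? "";
    }

    public static async Task Main()
    {
        // Placeholder variable names; use whatever your secret store provides.
        var endpoint = Environment.GetEnvironmentVariable("AZURE_OPENAI_ENDPOINT");
        var deployment = Environment.GetEnvironmentVariable("AZURE_OPENAI_DEPLOYMENT");
        var apiKey = Environment.GetEnvironmentVariable("AZURE_OPENAI_KEY");
        if (endpoint is null || deployment is null || apiKey is null)
        {
            Console.WriteLine("Set the three AZURE_OPENAI_* variables to run this sketch.");
            return;
        }

        using var http = new HttpClient();
        using var request = BuildRequest(endpoint, deployment, apiKey,
            "The portal is slow, but the support team was great.");
        using var response = await http.SendAsync(request);
        response.EnsureSuccessStatusCode();
        Console.WriteLine(ExtractReply(await response.Content.ReadAsStringAsync()));
    }
}
```

Keeping request building and response parsing in separate methods makes both easy to unit test without calling the service. The official Azure SDK for .NET is an alternative to raw HTTP once the pilot moves past a prototype.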

3. Semantic Kernel – Reasoning, Planning, and Orchestration in .NET

Use this when: You want to combine multiple AI tasks into intelligent agents or workflows.

Semantic Kernel is Microsoft’s SDK for integrating LLMs into your apps as logic and agents, not just text responders.

Examples:

- An AI assistant that looks up documents, summarizes them, and drafts emails

- Multi-step workflows with AI + API calls + conditional logic

- Natural language interfaces for internal tools

Why it’s valuable:

- Available for C#, Python, and Java

- Lets you chain skills, plugins, and memory

- Open-source and extensible

4. Azure Cognitive Services – Plug-and-Play AI APIs

Use this when: You need fast, reliable AI capabilities like image recognition or speech-to-text.

Azure Cognitive Services (since rebranded by Microsoft as Azure AI services) include pre-trained, scalable APIs such as:

- Computer Vision (OCR, image analysis)

- Text Analytics (sentiment, key phrase extraction)

- Translator (real-time translation)

- Speech-to-Text and Text-to-Speech

Why it’s valuable:

- No model training needed

- Simple API calls

- Can be used from Power Platform, .NET, or Logic Apps

5. Power Platform AI Builder – Low-Code AI for Business Teams

Use this when: You want to empower non-developers or rapidly prototype without full-code investment.

AI Builder lets business users build forms, tag documents, and automate tasks using prebuilt AI models inside Power Apps or Power Automate.

Examples:

- Scan PDFs and extract invoice data

- Classify customer emails

- Automate document workflows with SharePoint or Dynamics

Why it’s valuable:

- Built-in Microsoft 365 integrations

- Role-based access and controls

- Rapid iteration with no-code/low-code

🧠 Choosing the Right Tool

| Need | Microsoft Tool |
|---|---|
| Build models with your data in C# | ML.NET |
| Use GPT-4 securely in Azure | Azure OpenAI |
| Chain AI tasks with .NET logic | Semantic Kernel |
| Add pre-trained AI features quickly | Azure Cognitive Services |
| Empower business users with AI | Power Platform AI Builder |

The power of Microsoft’s ecosystem isn’t just in the tools—it’s in how they work together inside the platform you’re already invested in. Your .NET team is closer to building AI than you think.

Building the AI Innovation Team

Getting started with AI is not a tooling problem—it’s a team structure problem. The right people, aligned around the right goals, can deliver high-impact AI pilots in 60–90 days. The wrong team (or no clear team at all) almost guarantees analysis paralysis, political delays, and shelfware.

Here’s how to assemble a nimble, cross-functional AI innovation team using the talent you likely already have.

🎯 The Core Three Roles

1. Senior .NET Developer

- Comfortable with APIs, JSON, SDKs

- Can prototype quickly in C# using ML.NET, Azure SDKs, or Semantic Kernel

- Knows your internal systems and deployment pipelines

2. Business Analyst / SME

- Understands the business workflow or pain point

- Can translate user complaints into process maps or requirements

- Serves as the voice of the end user and helps validate output quality

3. Project Sponsor

- Mid- or senior-level manager (Director, VP, or Principal)

- Can greenlight resources, communicate outcomes to leadership, and remove blockers

- Often aligned with the business unit that owns the problem domain (e.g., finance, HR)

👥 Optional Add-ons

Depending on the size of your org or the complexity of the pilot, you may also want:

- A Database Administrator to help shape or clean source data

- A UX/UI Designer to polish interfaces for demos or internal tools

- An IT/Security Lead to ensure data use and APIs meet compliance

🛠 Team Operating Model

Run this team in sprints—just like an agile software project.

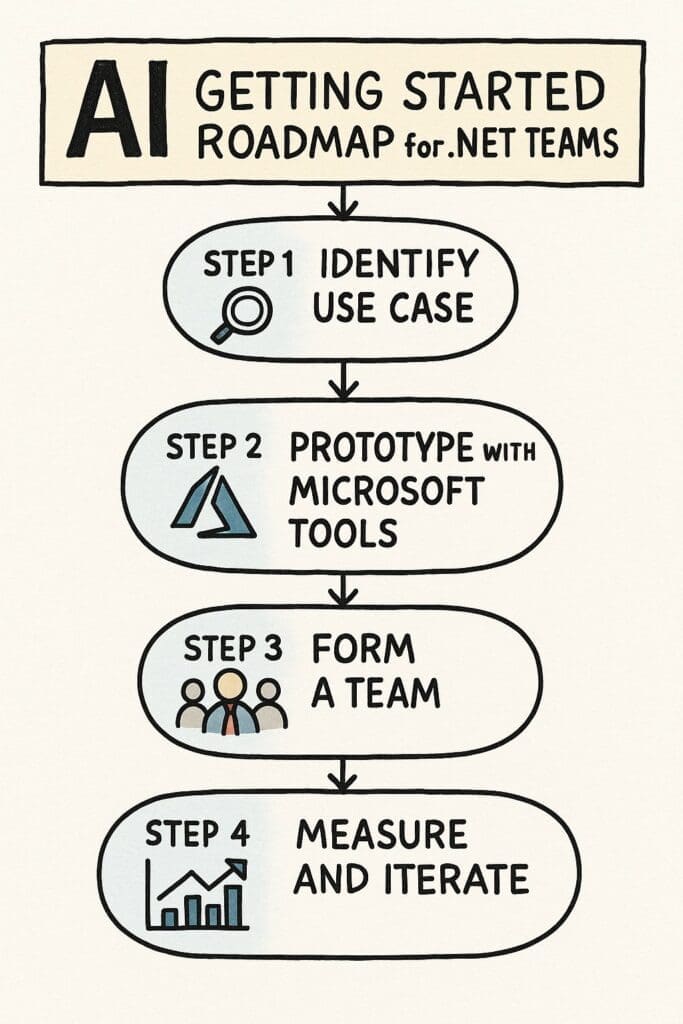

10-Week AI Sprint Framework (five two-week sprints):

- Week 1–2: Define problem and success metrics

- Week 3–4: Build proof-of-concept using Microsoft tools

- Week 5–6: Validate with real users or test data

- Week 7–8: Refine output and improve performance

- Week 9–10: Document impact, prep for stakeholder demo

This is not a “pilot program committee.” This is a delivery team.

✅ Tips for Success

- Give the team a name and a sponsor—it signals commitment.

- Have leadership present at the kickoff and demo.

- Track progress with visible metrics (time saved, feedback scores).

- Reuse this same team structure for future use cases.

AI isn’t a solo activity. It’s a team sport—with a very short season. The right team gets you real results, builds momentum, and earns trust for broader AI adoption.

Pilot Planning & Execution

Once you’ve formed your AI innovation team and selected a viable use case, it’s time to execute—but execution must be tight, scoped, and iterative. Think in terms of sprints, not marathons. The goal of a pilot is to deliver something functional, measurable, and insightful—not perfect.

🛠 How to Structure Your First AI Pilot

🎯 Pilot Objective:

Prove that your chosen use case can be improved by AI in a way that is:

- Technically feasible

- Measurably valuable

- Understandable to stakeholders

📅 Pilot Timeline (6–10 weeks):

| Phase | Focus Area |
|---|---|
| Weeks 1–2 | Define use case scope, success metrics, gather data |
| Weeks 3–4 | Build prototype using Microsoft tools (ML.NET, Azure, etc.) |
| Weeks 5–6 | Test with real users or sample data, gather feedback |
| Weeks 7–8 | Improve output quality, add light UX, refine integrations |
| Weeks 9–10 | Document results, prepare demo, share outcomes |

✅ Tip: Schedule stakeholder demos during week 5 and week 10—keep leadership informed and involved.

🔒 Infrastructure & Access Considerations

- Use a sandbox environment for prototyping

- Provision temporary Azure resources with budget caps

- Use anonymized data or create realistic sample data

- Assign RBAC roles in Azure and access permissions on your Git repositories to ensure proper audit controls

✅ Tip: If you need approval for LLMs, start with Azure OpenAI in a locked-down environment. Document all data flows and retention policies.

📊 Pilot Metrics to Track

| Metric Category | Examples |
|---|---|
| Time Saved | “Avg handling time reduced from 17 to 6 minutes” |
| Accuracy | “Model accuracy: 91%, up from 72% baseline” |
| Business Value | “Estimated $48K savings/yr in labor costs” |
| Adoption | “74% of users said they’d use this tool weekly” |
| Risk Reduction | “Auto-classifier caught 96% of previously missed exceptions” |
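Two of these metrics are simple arithmetic, and it helps to agree on the formulas before the pilot starts. The sketch below shows one possible way to compute accuracy and an annual labor savings estimate. The yearly volume and hourly cost are hypothetical inputs chosen for the example; only the 17 and 6 minute handling times echo the table.

```csharp
using System;
using System.Collections.Generic;

public static class PilotMetrics
{
    // Share of predictions that match the human-verified answer (0.0 to 1.0).
    public static double Accuracy(IReadOnlyList<string> predicted, IReadOnlyList<string> actual)
    {
        if (predicted.Count == 0 || predicted.Count != actual.Count)
            throw new ArgumentException("Lists must be non-empty and the same length.");

        var correct = 0;
        for (var i = 0; i < predicted.Count; i++)
            if (predicted[i] == actual[i]) correct++;

        return (double)correct / predicted.Count;
    }

    // Minutes saved per item x items per year x hourly cost, converted from minutes to hours.
    public static decimal AnnualSavings(
        decimal minutesBefore, decimal minutesAfter, int itemsPerYear, decimal hourlyCost) =>
        (minutesBefore - minutesAfter) * itemsPerYear * hourlyCost / 60m;
}

public static class Program
{
    public static void Main()
    {
        var predicted = new[] { "Access", "Billing", "Access", "Billing" };
        var actual = new[] { "Access", "Billing", "Billing", "Billing" };
        Console.WriteLine($"Accuracy: {PilotMetrics.Accuracy(predicted, actual):P0}");

        // Hypothetical volume (6,000 items/year) and loaded labor cost ($40/hour).
        // 11 minutes x 6,000 items x $40 / 60 = $44,000
        Console.WriteLine($"Savings: {PilotMetrics.AnnualSavings(17, 6, 6000, 40):N0}");
    }
}
```

Measure the baseline handling time the same way before and after the pilot; otherwise the comparison will not hold up in front of stakeholders.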

⚖️ What to Do After the Pilot

- If successful: Present metrics to senior stakeholders and nominate a second use case for the team.

- If mixed results: Document lessons, refine or pivot the use case.

- If failed: Document why. Not every idea will work. That’s the point of piloting.

✅ Tip: Don’t skip the internal debrief. What worked? What didn’t? What would you do differently next time?

Your first AI pilot sets the tone. Make it visible. Make it real. Make it useful. It doesn’t have to be revolutionary—it just has to work and prove the process.

Common Pitfalls & How to Avoid Them

Even with the right tools, people, and intentions, many AI projects fail—or stall indefinitely. The reasons usually aren’t technical. They’re structural, psychological, or organizational.

Let’s call them out so you can navigate around them.

⚠️ 1. Starting Too Big

The Pitfall: Choosing a project that’s complex, high-stakes, or has unclear ownership.

Avoid It By:

- Picking a small, well-scoped use case with a single business owner.

- Focusing on a specific process, not a whole department or product line.

- Keeping your first goal modest: working code that solves a real problem.

⚠️ 2. No Business Champion

The Pitfall: The tech team is excited, but no one from the business side is involved or accountable.

Avoid It By:

- Recruiting a manager or director who owns the pain point.

- Involving them in scoping, review meetings, and pilot demos.

- Making them the face of success when it works (important politically).

⚠️ 3. Over-Reliance on Vendors or Consultants

The Pitfall: Handing the entire effort to a vendor with no internal capability-building.

Avoid It By:

- Keeping AI solutions aligned with your in-house development work, even if you bring in advisors.

- Using consultants as accelerators—not owners—of your AI journey.

- Making sure internal staff can maintain, update, and scale solutions.

⚠️ 4. Misunderstanding AI’s Role

The Pitfall: Expecting 100% accuracy or assuming AI replaces entire job functions.

Avoid It By:

- Framing AI as an assistant—not a replacement.

- Setting expectations that AI improves decision-making, not guarantees it.

- Testing AI in low-risk workflows first.

⚠️ 5. Ignoring the End User

The Pitfall: Building a model that works technically but doesn’t fit into daily operations.

Avoid It By:

- Involving frontline users early in the pilot.

- Watching how they interact with the tool—not just asking.

- Iterating on UX, not just model accuracy.

⚠️ 6. No Plan for What’s Next

The Pitfall: The pilot ends, people nod in approval… then nothing happens.

Avoid It By:

- Defining what success means before the pilot starts.

- Assigning post-pilot actions during the final demo (e.g., scale, fund, staff).

- Documenting the repeatable process you used for future pilots.

AI pilots are not just about proving the tech. They’re about proving your process. If your team can avoid these common traps, you’ll gain something more valuable than a working app—you’ll gain organizational momentum.

✅ The real failure is not trying. The value of a pilot is learning—either way.

✅ Explore Our Learning Ecosystem:

- 🔗 Check out all the resources we have to offer on our hub

- 📚 Most of the information for this article comes from our first two books on Amazon.

- Check out our whitepaper on Why AI in .NET? (requires registering for our free newsletter)

- Free Infographic to download and share: AI in .NET

- Free Infographic to download and share: AI Implementation Roadmap

- Free Infographic to download and share: AI Innovation Team Roles & Responsibilities

- Free Infographic to download and share: A Step-by-Step Guide to Developing AI Applications for Your Business

Whether you’re just starting or ready to go deeper, we’ve got content designed for every stage of your AI journey.

Frequently Asked Questions (FAQs)

These are the real questions being asked by .NET development leads, IT managers, and executives across medium-to-large organizations. Let’s answer them head-on.

Do we need to hire data scientists to get started?

No. Microsoft has built tools specifically for developers who are already comfortable with .NET, C#, SQL Server, and Azure. With ML.NET, Azure OpenAI, and Cognitive Services, your existing team can build functioning prototypes without a PhD in machine learning. You’ve probably been developing data-driven applications for decades. Let your database administrators and .NET developers work it out.

What if we don’t have clean data?

You’re in good company. Most AI projects start with imperfect data. Many models—especially LLMs or pre-trained APIs—don’t require labeled training data at all. You can start with AI that assists workflows rather than replacing them.

How much does it cost to start?

It can be surprisingly low. Many organizations have unused Azure credits from Microsoft licensing agreements, Azure OpenAI and Cognitive Services can be tested for a few dollars, and ML.NET is open-source and runs locally.

What’s a safe first use case?

Look for something that: 1. Doesn’t directly impact customers, 2. Already frustrates internal staff, 3. Can be improved by better decision speed or classification, 4. Has a low impact on your operations. Start simple. Get more complex with experience.

Examples: 1. Generating routine report summaries, 2. Auto-tagging documents, 3. Classifying support tickets, 4. Extracting text from PDFs

Will this replace employees?

No—and saying otherwise is the fastest way to kill AI adoption internally.

AI in .NET environments is about amplification, not elimination. Automate repetitive tasks so humans can focus on judgment calls. Aim to improve speed and consistency, not to remove entire roles.

How do we secure our data and models?

- Use Azure OpenAI, not public OpenAI endpoints

- Set RBAC roles and API access controls

- Ensure models are not trained on proprietary data unless anonymized

- Work with internal infosec teams early in the process

What if the pilot doesn’t work?

Good. That’s what pilots are for. A failed pilot gives you:

- Credibility that your process is rigorous

- A clearer sense of what doesn’t work

- Documentation of constraints and blockers

What’s the long-term roadmap after the first pilot?

- Institutionalize the AI innovation team

- Build a backlog of use cases and revisit quarterly

- Track AI projects like software features: prioritize, sprint, and iterate

- Begin establishing AI ops, monitoring, and governance models

Can we use our existing .NET apps?

Yes. You can wrap AI APIs into .NET projects, build Razor components, or integrate via microservices.

What about data privacy?

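Azure maintains a broad set of compliance certifications, and with Azure OpenAI your data is not used to train public models. For data that must stay inside your own environment, build and run models in-house using ML.NET.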

What does success look like?

One small pilot that saves time, works reliably, and gains buy-in from both technical and business teams.

Do these AI solutions work with our legacy systems?

Yes. You would simply place one or more .NET Web API interfaces in front of your AI solutions. Any legacy system that can make an HTTP call can then use them.
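A minimal sketch of such a wrapper, using an ASP.NET Core minimal API, might look like the following. The route, the request and response records, and the keyword rule inside `TicketRouter` are all placeholders invented for this example; in practice `Classify` would call your ML.NET model or an Azure OpenAI deployment.

```csharp
// Program.cs in a project created with: dotnet new web  (.NET 6 or later)
var builder = WebApplication.CreateBuilder(args);
var app = builder.Build();

// Legacy systems POST JSON such as {"text":"..."} and receive {"category":"..."}.
app.MapPost("/api/classify", (ClassifyRequest request) =>
{
    if (string.IsNullOrWhiteSpace(request.Text))
        return Results.BadRequest("Text is required.");

    var category = TicketRouter.Classify(request.Text);
    return Results.Ok(new ClassifyResponse(category));
});

app.Run();

// Hypothetical request and response contracts for this sketch.
public record ClassifyRequest(string Text);
public record ClassifyResponse(string Category);

public static class TicketRouter
{
    // Placeholder rule standing in for a real model or LLM call.
    public static string Classify(string text) =>
        text.Contains("password", StringComparison.OrdinalIgnoreCase) ? "Access" : "General";
}
```

Because the contract is plain HTTP and JSON, the AI logic behind the endpoint can change without any modification to the systems that call it.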

✅ Your first win earns permission for the next five.