ML.NET for Data Prep – AI-Ready Preprocessing in .NET

📌 Summary: Why This Guide Matters

This guide is written for both technical teams and their non-technical managers — and serves two critical purposes:

👨‍💻 For C# Developers and DBAs:

- Collaborate effectively: Agree on who owns which parts of the data pipeline

- Leverage ML.NET: Use built-in tools to reduce custom code and speed up preprocessing

- Make smart tradeoffs: Choose the right mix of SQL, C#, and ML.NET for each application

- Aim for a hybrid approach: Combine strengths of traditional and AI-specific data prep

🧑‍💼 For Managers and Decision Makers:

- Understand the stakes: Data preparation is more complex — and more important — than it appears

- Respect the process: Developers and DBAs need time to get this right

- Support alignment: Misalignment in data prep leads to failed AI initiatives later

- Prioritize correctly: This step is not busywork — it directly impacts model performance and long-term success

When data prep is treated as an afterthought, AI fails.

When it’s treated as a shared responsibility, AI becomes sustainable.

🧠 Introduction

In the world of artificial intelligence, data is the fuel — but raw data is crude oil. It’s messy, inconsistent, and often incomplete. To power an effective AI system, especially within enterprise environments built on Microsoft technologies, data must be refined, shaped, and formatted correctly. This process is known as data preparation, and it’s the backbone of every successful machine learning (ML) project.

Yet, despite its importance, data prep remains one of the most frustrating pain points for enterprise AI adoption — particularly for mid-to-senior .NET developers and database administrators. The frustration doesn’t come from inexperience with data. Quite the opposite. Most .NET teams and SQL Server DBAs have spent years building data-heavy business applications. They’re skilled in writing ETL routines, managing stored procedures, and optimizing queries across relational datasets.

But AI projects bring a new dimension of complexity to data work. From encoding categorical values to handling missing labels, scaling numeric fields, and preparing unstructured inputs like text or images — the rules of the game change. And what worked well in a business application may not translate effectively into an AI pipeline.

That’s where ML.NET enters the conversation.

ML.NET is Microsoft’s machine learning framework for .NET developers. It provides a clean, C#-native way to build, train, evaluate, and deploy machine learning models. More importantly, and less often discussed, ML.NET includes a powerful set of tools for data preparation: a rich catalog of data transforms that can replace a good deal of custom code while remaining entirely within the .NET stack.

For developers and DBAs who want:

- Full control over the data pipeline

- Deep integration with existing .NET systems

- Faster experimentation with AI

- And reduced friction when going from data to model

…ML.NET’s data preparation features are worth serious attention.

In this guide, we’ll explore how .NET professionals can use ML.NET to accelerate and improve the preprocessing stage of AI workflows. We’ll compare traditional approaches like SQL scripts and C# utilities to ML.NET’s transformer pipeline model. We’ll walk through example code, enterprise-level best practices, and actionable checklists to help you choose the right tools for the job — without rewriting everything from scratch.

Whether you’re building your first AI project or modernizing legacy systems to make them AI-ready, this guide will show you how to:

✅ Reduce friction in your data workflows

✅ Eliminate repetitive prep tasks with reusable pipelines

✅ Leverage .NET-native tools that fit your enterprise stack

✅ Know when to use custom code — and when to offload it to ML.NET

🔍 Section 1: What is Data Preparation for AI?

Data preparation is the foundation on which all successful AI and machine learning systems are built. It’s not flashy, and it rarely makes headlines — but it’s where most AI projects succeed or fail. Before you can build a model, deploy it, or make predictions, you must clean, shape, and structure your data into a form the machine learning algorithms can understand.

For seasoned .NET developers and SQL Server DBAs, this might sound familiar. After all, you’ve been writing ETL routines and transforming data for years. But AI data prep is not the same as business app ETL.

Let’s start by defining the core idea.

📘 What is AI Data Preparation?

AI data preparation refers to the set of processes and transformations applied to raw data to make it usable by machine learning models.

This typically includes:

| Transformation Step | Purpose |
|---|---|
| Handling Missing Values | Replace, remove, or infer gaps in the data |
| Normalization/Scaling | Bring numeric values into a standard range |
| Encoding Categorical Variables | Convert text labels into machine-readable formats |
| Text Processing | Tokenization, stop word removal, n-grams, TF-IDF |
| Feature Engineering | Create new variables or metrics from raw data |
| Shuffling/Splitting | Randomize and divide data into training/test sets |
| Outlier Detection | Identify and handle anomalies or rare values |
| Column Projection | Remove unused or irrelevant fields |

These steps are often chained together into a pipeline — a reusable series of transformations that ensure your model sees consistent, clean input data during both training and inference.

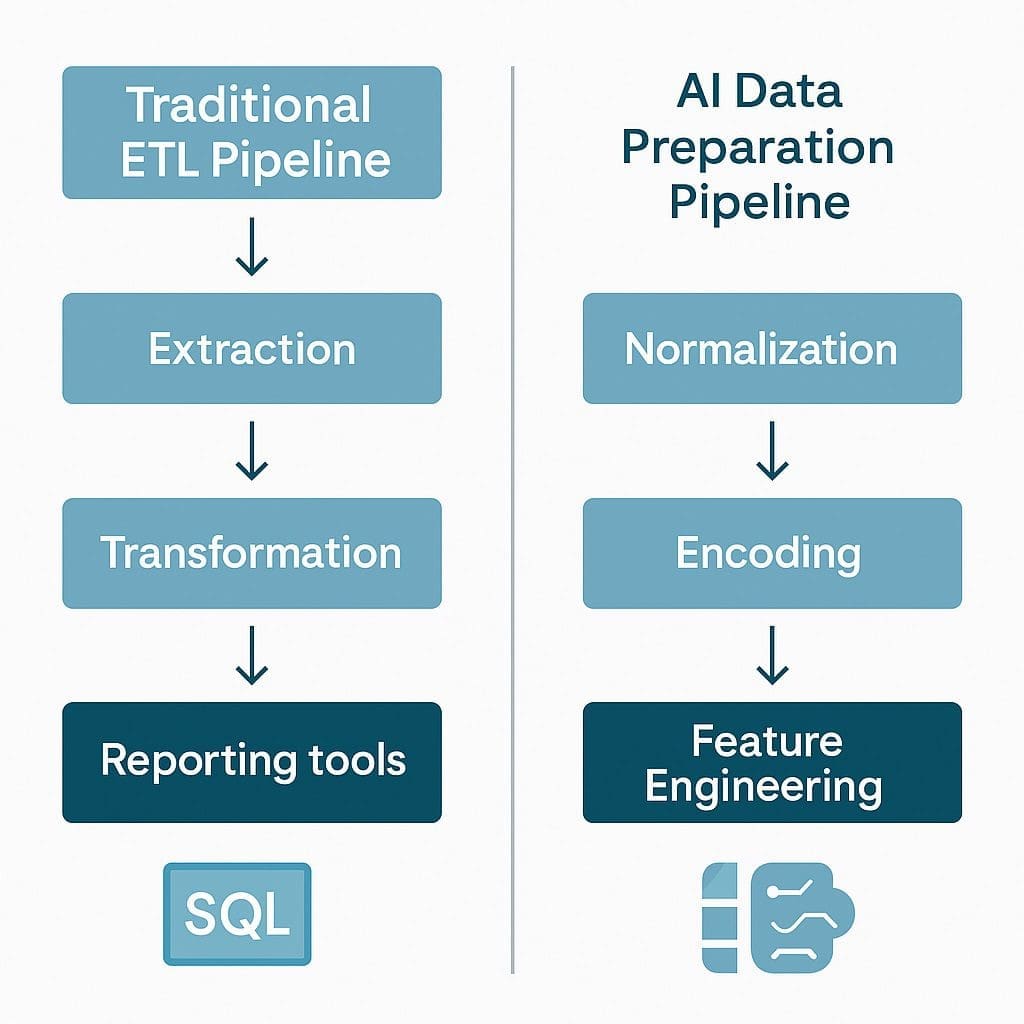

🏗️ AI Data Prep vs. Traditional ETL: What’s Different?

Traditional ETL (Extract, Transform, Load) — the kind DBAs and .NET developers are used to — is designed for business operations: reports, dashboards, transactional systems, auditing.

AI data prep is fundamentally different because it’s designed for statistical learning. Models don’t tolerate inconsistency. They don’t intuit missing data. And they definitely don’t like surprises at inference time.

Here’s a breakdown of the differences:

| Aspect | Traditional ETL | AI Data Preparation |
|---|---|---|
| Goal | Normalize for business logic | Standardize for algorithm compatibility |
| Tolerance for missing data | Often ignored or tolerated | Must be handled explicitly |
| Data types | Mostly structured (e.g., numbers, dates) | Structured + unstructured (text, images, etc.) |
| Output | Clean tables or reports | Feature matrices and label vectors |
| Process | Procedural or ad hoc | Pipeline-driven, repeatable, model-aligned |
| Performance concern | Batch speed and load frequency | Training consistency and generalization accuracy |

The difference is subtle but critical. For example, in a business report, a blank zip code might be fine. In an AI model, that same blank can break feature extraction or introduce bias if not handled.

🔁 Why Preprocessing is Critical for AI Accuracy

The performance of your model is only as good as the quality and structure of your data.

Poor data preparation leads to:

- Garbage-in, garbage-out predictions

- Models that memorize noise instead of patterns

- Increased risk of overfitting or underfitting

- Pipeline failures in production due to unseen categories or bad formatting

On the flip side, a solid preprocessing pipeline:

✅ Improves model performance

✅ Makes training faster and more stable

✅ Increases reproducibility

✅ Ensures the same logic is applied at runtime (when making predictions)

In enterprise scenarios — especially with evolving data — consistency is everything. Preprocessing should be deterministic, versioned, and testable.

🔄 Why .NET Teams Need to Evolve Their Thinking

.NET developers and DBAs are already great at data. That’s not the issue.

The issue is that AI data prep asks different questions:

- Can your transform be reused in both training and production?

- Can it adapt to unseen inputs or categories?

- Can you audit and explain what happened at each transformation step?

- Can non-developers (e.g., data analysts) reproduce your logic?

That’s where ML.NET’s data prep tools shine — offering consistency, reusability, and explainability — without leaving the .NET ecosystem.

In the next section, we’ll dive into the traditional .NET and SQL-based methods, highlight their strengths, and begin drawing a bridge to how ML.NET complements or replaces them.

🔧 Section 2: Traditional .NET and SQL-Based Data Prep

What Experienced Developers and DBAs Already Know (and Do Well)

Before diving into ML.NET’s built-in preprocessing tools, it’s worth acknowledging a hard truth:

Most enterprise AI teams already have the skills and systems to handle data prep — just not in the way AI expects.

For decades, .NET developers and SQL Server DBAs have built robust ETL systems for reporting, warehousing, and transactional applications. These pipelines often involve:

- Stored procedures to clean and reshape data

- C# functions to validate, transform, and enrich records

- SSIS packages to orchestrate workflows

- Scheduled jobs to monitor and manage batch data

This section examines the strengths and limitations of those traditional methods — and why they sometimes struggle in the context of AI.

🛠 How Traditional .NET and SQL Data Prep Works

If you’re an experienced developer or database admin, this process probably looks familiar:

🔹 SQL-Based Workflow:

- Write stored procedures to clean or impute missing values

- Use `CASE`, `ISNULL`, or `COALESCE` for conditional logic

- Apply joins and subqueries to enrich data from multiple sources

- Normalize or bucket values using computed columns or lookup tables

🔹 .NET-Based Workflow:

- Use LINQ and C# methods to manipulate in-memory datasets

- Apply business rules using custom functions

- Create helper classes for formatting, validation, and sanitization

- Output structured data to a staging database, CSV, or API

These methods are highly customizable. They give full control to the developer or DBA. They also reflect years of institutional knowledge embedded in SQL logic and .NET code.

✅ Strengths of Custom Data Prep (SQL + C#)

| Strength | Description |
|---|---|
| Precision | Developers have complete control over every rule and exception |
| Performance Tuning | SQL queries and stored procedures can be heavily optimized |
| System Integration | Easy to integrate with legacy systems and line-of-business apps |
| Security and Compliance | Leverages existing database roles, audits, and controls |
| Team Familiarity | .NET teams already know how to build, test, and deploy this logic |
| Reusable Patterns | Common logic can be reused across reporting and dashboards |

Custom prep pipelines shine in environments where every data transformation needs to be fully transparent, traceable, and governed — especially in finance, healthcare, and government settings.

⚠️ Weaknesses of Traditional Approaches for AI Use Cases

The same strengths become liabilities when AI enters the picture. Here’s why:

| Weakness | Impact in AI Context |
|---|---|
| Hard to Reuse for Inference | Code for training isn’t always used in production scoring, leading to inconsistencies |
| Low Modularity | Data prep logic is often buried in monolithic stored procedures or controller logic |
| Difficult to Track Versions | Changes to prep logic are not always documented or reproducible |
| Not Pipeline-Aware | Most ETL jobs aren’t structured as sequential feature engineering pipelines |
| Slow Experimentation | Making even small changes (e.g., swapping a normalization technique) can take hours or days |
| AI-Naïve Defaults | Traditional ETL doesn’t handle encoding, vectorization, or feature scaling out of the box |

In AI, the same prep logic must be applied consistently at train time and inference time. That’s where custom SQL and C# start to feel brittle or overly verbose.

🔍 Real-World Examples of Traditional Prep Limitations in AI

Let’s look at three common enterprise scenarios where traditional prep methods hit a wall:

1. Encoding Categorical Variables

SQL lacks a native way to one-hot encode categories, handle new labels, or gracefully degrade when values change over time. Custom C# code can handle it — but maintaining it is a nightmare.

2. Text Processing

Cleaning and tokenizing text in SQL or vanilla C# is painful and inconsistent. No TF-IDF, no n-grams, no vocabulary builders. You’re stuck reinventing the wheel.

3. Pipeline Portability

You write stored procedures for training data, but then what? How do you ensure the same logic applies when scoring new data in real time? Too often, the answer is: you can’t — not easily.
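To make the first scenario concrete, here is a minimal sketch of what a hand-rolled one-hot encoder tends to look like in plain C#. The `OneHotEncoder` class and its method names are hypothetical (they are not part of ML.NET or any other library). The sketch shows the two things that make such code costly to maintain: the vocabulary learned at training time has to be stored and reapplied, and unseen values need an explicit policy (here, an all-zero vector).

```csharp
using System;
using System.Collections.Generic;

// Hypothetical helper for illustration only; not an ML.NET type.
public sealed class OneHotEncoder
{
    // Maps each known category to its slot in the output vector.
    private readonly Dictionary<string, int> _index = new Dictionary<string, int>();

    // Learn the category vocabulary from training data (order of first appearance).
    public void Fit(IEnumerable<string> trainingValues)
    {
        foreach (var value in trainingValues)
        {
            if (value == null) continue;           // nulls are treated as "no category"
            if (!_index.ContainsKey(value))
                _index[value] = _index.Count;      // next free slot
        }
    }

    // Encode one value with the learned vocabulary.
    // Values never seen during Fit (or null) map to an all-zero vector instead of throwing.
    public float[] Transform(string value)
    {
        var vector = new float[_index.Count];
        if (value != null && _index.TryGetValue(value, out var slot))
            vector[slot] = 1f;
        return vector;
    }
}
```

After `Fit(new[] { "Engineer", "Manager" })`, calling `Transform("Manager")` sets the second slot and `Transform("Intern")` returns only zeros. Even this small class leaves open how the vocabulary is persisted and versioned between training and production, which is the part that usually turns into maintenance work.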

🔁 You Don’t Have to Throw It All Away

The point here isn’t to abandon SQL or C# — far from it. In many cases, your existing prep logic can:

- Be reused before ML.NET ingestion

- Serve as the first layer of cleanup

- Or become part of a hybrid pipeline where ML.NET picks up where SQL leaves off

ML.NET is not a replacement for your DBAs. It’s a force multiplier — letting you convert raw or partially processed data into ML-ready features, using .NET-native tools.

📊 Summary Table: Traditional Prep vs ML.NET Prep

| Feature | SQL / C# Prep | ML.NET Prep |
|---|---|---|
| Custom Logic | ✅ High | ⚠️ Moderate (but extendable) |
| Pipeline Reuse | ❌ Hard | ✅ Built-in |
| Version Control | ⚠️ Manual | ✅ Declarative Pipelines |
| Encoding Support | ❌ Limited | ✅ Native |
| Text Vectorization | ❌ Manual | ✅ Built-in |
| Production Integration | ✅ Easy via API or batch | ✅ Easy via model + transformer |
| Model Alignment | ❌ Risk of mismatch | ✅ Guaranteed consistency |

In the next section, we’ll explore what makes AI data prep fundamentally different — and why your existing methods, while powerful, need help in this new domain.

🧠 Section 3: What Makes AI Data Prep Different?

Why Prepping Data for AI is Not Just “Fancy ETL”

If you’re coming from a traditional software development or database background, the phrase “data preparation” might sound like just another form of ETL (Extract, Transform, Load). But AI introduces different assumptions, tolerances, and downstream consequences. What works in reporting systems or transactional databases can quietly sabotage a machine learning model.

In this section, we’ll examine how and why AI data preparation differs from traditional practices — and what new skills or tools are required to do it well.

🔁 Traditional Systems vs. Machine Learning: A Mental Model Shift

Traditional Systems:

- Expect clean, relational data

- Are forgiving of nulls or outliers if business logic accounts for them

- Can rely on human-defined rules for every possible case

- Are evaluated by business logic correctness

Machine Learning Systems:

- Require numeric, encoded, consistent input formats

- Are highly sensitive to bad or inconsistent input

- Generalize patterns statistically — meaning small prep mistakes cause major accuracy loss

- Are evaluated by performance metrics, not rules

Here’s a side-by-side comparison:

| Aspect | Traditional App / Report | AI Model Input |
|---|---|---|
| Input Format | Tabular, typed | Structured, encoded feature vectors |
| Missing Data | Often tolerated | Must be handled explicitly |
| Human-in-the-loop? | Usually | Rarely |
| Flexibility in Logic | High (custom if/else logic) | None (fixed transforms) |
| Output Format | Query results, tables | Numeric prediction, probability, classification |
| Validation | Business rules | Statistical metrics (e.g., accuracy, recall) |

🔬 The Core Requirements of AI Data Prep

ML models expect data that conforms to very specific mathematical and statistical requirements. These requirements are rarely encountered in business intelligence (BI) or web application contexts.

Here are the most common AI-specific preprocessing steps — with brief explanations:

| Step | What It Does | Why It Matters |
|---|---|---|
| Missing Value Replacement | Replaces nulls with mean, median, or placeholder | Prevents runtime errors and model confusion |
| Normalization | Scales numerical values (e.g., Min-Max, Z-score) | Avoids bias toward large-magnitude features |
| One-Hot Encoding | Converts categorical strings into binary columns | Enables use of categorical variables in numeric models |
| Label Encoding | Maps string labels to integers | Required for classification tasks |
| Text Featurization | Converts raw text into token vectors or embeddings | Enables NLP models to learn from language |
| Outlier Detection | Removes or flags anomalies | Prevents skewed models from rare events |
| Feature Engineering | Creates new columns from existing ones | Enhances signal-to-noise ratio in training |
| Shuffling and Splitting | Randomizes data order and separates training/test sets | Prevents data leakage and bias |

Each of these steps must be applied consistently, in the correct order, and with reproducible parameters — both during training and production inference.
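"Reproducible parameters" is easiest to see with min-max scaling, where x' = (x - min) / (max - min). The minimum and maximum are learned once from the training data and then reused unchanged at inference. The sketch below is plain C#; the `MinMaxScaler` type is hypothetical and written only to show the idea. ML.NET's own `NormalizeMinMax` is a separate implementation, and its defaults may scale values somewhat differently.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical helper for illustration only; not an ML.NET type.
public sealed class MinMaxScaler
{
    public float Min { get; private set; }
    public float Max { get; private set; }

    // Learn the range from the training data only.
    public void Fit(IReadOnlyCollection<float> trainingValues)
    {
        if (trainingValues.Count == 0)
            throw new ArgumentException("Training data must not be empty.", nameof(trainingValues));
        Min = trainingValues.Min();
        Max = trainingValues.Max();
    }

    // Apply the stored range. The range is never recomputed from incoming data,
    // so the same input always produces the same output.
    public float Transform(float value)
    {
        float range = Max - Min;
        return range == 0f ? 0f : (value - Min) / range;
    }
}
```

If the scaler is fitted on incomes of 20,000, 60,000, and 100,000, an inference-time value of 60,000 maps to 0.5. A value of 120,000 maps to 1.25, outside the 0 to 1 range, because the stored parameters come from training. Recomputing the range on production data would hide that shift and change what every scaled value means to the model.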

⚠️ The Consequences of Doing it Wrong

In traditional applications, a bad transformation might mean a broken report. In AI?

You might never realize something is broken — but your model silently becomes worse.

Examples:

- One-hot encoding during training but forgetting to apply it in production? → Wrong shape of input → crash or bad predictions

- Nulls during training were dropped, but nulls in production are sent through? → Drift in input space

- Normalizing one column but not another? → Model bias toward one feature

- Training on ordered data without shuffling? → Overfitting to input order

Most of these are invisible bugs. The app often doesn’t crash, but the decisions it makes are degraded: slowly, subtly, and often at scale.

🧪 Data Prep Isn’t Just Setup — It’s an Experiment Control Layer

One of the most underappreciated roles of data preparation in AI is that it acts like the laboratory standard in scientific experiments. It creates the conditions under which your model trains and makes predictions. If you change the lab conditions, you change the outcome — even with the same model and the same data.

For that reason:

✅ Data prep must be repeatable

✅ Data prep must be versioned

✅ Data prep must be modular and inspectable

✅ Data prep must be portable between dev and prod

ML.NET helps with this by offering declarative, chainable transformation pipelines — letting you control every preprocessing step in one place and reuse it during training and inference.

✅ Developer Checklist: Are You AI-Ready?

Here’s a quick self-assessment to know if your current data prep approach is AI-ready:

| Question | Yes / No |
|---|---|
| Do I replace missing values systematically? | ✅ / ❌ |
| Do I normalize or scale numeric fields? | ✅ / ❌ |
| Do I consistently encode categorical variables across environments? | ✅ / ❌ |
| Is my training data shuffled and split? | ✅ / ❌ |
| Can I recreate the same prep steps tomorrow (versioned)? | ✅ / ❌ |
| Can I export my prep pipeline into production? | ✅ / ❌ |
| Have I accounted for rare/unseen values? | ✅ / ❌ |
| Do I apply the same transforms at inference time as at training? | ✅ / ❌ |

If you answered “No” to more than 2–3 of these, your current prep flow might not be AI-ready — and ML.NET’s tools can help.

In the next section, we’ll cover the fundamentals of ML.NET itself — focusing on its built-in architecture and where data prep fits within it.

🤖 Section 4: What is ML.NET? (Mini Primer)

A .NET-Native Machine Learning Framework Built for Engineers, Not Data Scientists

If you’re a .NET developer or enterprise architect, you’ve likely seen Python dominate the machine learning space. Scikit-learn, pandas, TensorFlow, and PyTorch get most of the press. But Microsoft quietly built something different — a framework that feels like C#, integrates with .NET tools, and doesn’t require switching ecosystems.

That framework is ML.NET — and it’s not just for training models.

It’s also a practical, fast, and reusable system for preprocessing AI data in enterprise applications.

Let’s break it down.

🏗️ What Is ML.NET?

ML.NET is an open-source, cross-platform machine learning framework developed by Microsoft, specifically for .NET developers. It allows you to build, train, evaluate, and deploy custom machine learning models — entirely in C#, F#, or VB.NET, without writing a single line of Python or R.

Originally developed as an internal tool at Microsoft (for products like Outlook and Bing Ads), ML.NET was open-sourced in 2018 and has evolved into a capable enterprise ML platform.

Core Features:

- End-to-end machine learning in C#

- Data loading, cleaning, transformation, and featurization

- Binary classification, regression, clustering, anomaly detection

- Support for ONNX and TensorFlow models

- Seamless integration with .NET, ASP.NET, Azure, and desktop apps

- Model consumption via REST APIs, gRPC, Blazor, or console apps

It’s built for developers who already know .NET — not data scientists who live in Jupyter notebooks.

🔍 ML.NET Architecture (Relevant to Data Prep)

ML.NET is based on a pipeline architecture, where data flows through a series of transformations before reaching a trainer or being used for prediction. Think of it like a middleware chain — but for data.

Key Concepts for Data Preparation:

| Component | Description |
|---|---|
| IDataView | The core data structure in ML.NET (similar to a DataFrame, but lazy-evaluated and memory-efficient) |
| DataOperationsCatalog | The `mlContext.Data` API used to load, save, cache, filter, shuffle, and split data |
| Transformers | Objects that apply a specific preprocessing step (e.g., normalization, encoding) |
| Estimators | Factory objects that define how a transformer will be built based on input data |
| Pipeline | A chained sequence of estimators that define your data transformation logic |

```csharp
var pipeline = mlContext.Transforms
    .ReplaceMissingValues("Age")
    .Append(mlContext.Transforms.NormalizeMinMax("Income"))
    .Append(mlContext.Transforms.Categorical.OneHotEncoding("JobTitle"));
```

The example above creates a pipeline that:

- Fills in missing values in the `Age` column (with the default value, 0, because no replacement mode is specified)

- Normalizes the `Income` column to a 0–1 range

- One-hot encodes the `JobTitle` column

Each step is modular, inspectable, and reusable.

🔄 How ML.NET Bridges Dev Workflows and ML Needs

What makes ML.NET compelling isn’t just that it’s written in C#. It’s that it:

- Feels like LINQ for data preprocessing

- Follows the same design principles as ASP.NET and EF Core

- Allows you to version, serialize, and reuse your data prep logic

This means you can:

- Build your training pipeline as C# code

- Save the model and its preprocessing steps together

- Load the pipeline in your production application with zero change to the data logic

That last point solves one of the biggest headaches in AI development:

Training and inference pipelines drifting apart.
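The save-and-reload flow described above can be sketched as follows. This is an illustrative round trip, not a production setup: the `Applicant` class, the sample values, and the `PipelineRoundTrip` helper are assumptions made for the example, and the code assumes the `Microsoft.ML` NuGet package is referenced.

```csharp
using Microsoft.ML;
using Microsoft.ML.Data;

// Hypothetical input type for the example.
public class Applicant
{
    public float Age { get; set; }
    public float Income { get; set; }
    public string JobTitle { get; set; }
}

public static class PipelineRoundTrip
{
    // Fits a prep pipeline, saves it, reloads it in a fresh context,
    // and reports whether the reloaded pipeline still produces "Features".
    public static bool SaveAndReload(string modelPath)
    {
        // --- Training side ---
        var mlContext = new MLContext(seed: 0);
        IDataView training = mlContext.Data.LoadFromEnumerable(new[]
        {
            new Applicant { Age = 30f, Income = 50000f, JobTitle = "Engineer" },
            new Applicant { Age = float.NaN, Income = 80000f, JobTitle = "Manager" },
        });

        var pipeline = mlContext.Transforms.ReplaceMissingValues("Age")
            .Append(mlContext.Transforms.NormalizeMinMax("Income"))
            .Append(mlContext.Transforms.Categorical.OneHotEncoding("JobTitle"))
            .Append(mlContext.Transforms.Concatenate("Features", "Age", "Income", "JobTitle"));

        ITransformer fitted = pipeline.Fit(training);
        mlContext.Model.Save(fitted, training.Schema, modelPath);

        // --- Production side: no prep logic is re-implemented here ---
        var prodContext = new MLContext();
        ITransformer loaded = prodContext.Model.Load(modelPath, out DataViewSchema inputSchema);

        IDataView incoming = prodContext.Data.LoadFromEnumerable(new[]
        {
            new Applicant { Age = 41f, Income = 65000f, JobTitle = "Analyst" },
        });
        IDataView prepared = loaded.Transform(incoming);

        return prepared.Schema.GetColumnOrNull("Features") != null;
    }
}
```

The production half of the method contains no transformation code of its own. Everything it applies, including the learned income range and the job-title dictionary, comes from the saved file, which is what keeps the two sides from drifting apart.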

🧠 Built-In Preprocessing Capabilities

ML.NET ships with dozens of transformers and estimators that handle the most common data prep tasks:

| Transformer | Purpose |
|---|---|
| `ReplaceMissingValues()` | Fill in missing (NaN) numeric values |
| `NormalizeMinMax()` / `NormalizeMeanVariance()` | Scale numerical values |
| `Categorical.OneHotEncoding()` | Convert categorical strings into vectors |
| `Text.FeaturizeText()` | Convert raw text into numeric n-gram feature vectors |
| `Concatenate()` | Merge multiple columns into a single feature vector |
| `DropColumns()` | Remove unwanted or sensitive columns |
| `Conversion.ConvertType()` | Cast values between primitive types |

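Some of these transforms are stateless (for example, `DropColumns`), while others learn parameters from the training data when the pipeline is fitted (for example, normalization ranges and category dictionaries). In both cases the fitted transformers can be saved and reloaded as part of your model.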

🧩 Where ML.NET Fits in the AI Stack

Here’s where ML.NET sits in a .NET-powered enterprise architecture:

```
┌─────────────────────────────┐
│  SQL Server / Azure SQL DB  │
└──────────────┬──────────────┘
               │
Load via EF Core, ADO.NET, or CSV
               │
  ┌────────────▼────────────┐
  │         ML.NET          │
  │    Data Preparation     │
  │    + Model Training     │
  └────────────┬────────────┘
               │
Save model + transforms to file
               │
  ┌────────────▼────────────┐
  │    ASP.NET Core API     │
  │   Loads model + logic   │
  │    Makes predictions    │
  └─────────────────────────┘
```

This integration is seamless for teams already building enterprise .NET apps — no Python handoffs, no duplicated logic across stacks.

🏁 Recap: Why ML.NET for Data Prep?

If you’re asking:

Can’t I just keep using SQL and C# for data prep?

Sure, you can — but with ML.NET, you get:

✅ Reusable, chainable, and testable transformation logic

✅ Consistent data processing in training and production

✅ Easier experimentation (swap in/out transforms with one line)

✅ Seamless serialization with the trained model

✅ Zero context-switching outside the .NET ecosystem

You get the precision of traditional .NET, with the power of modern ML workflows.

In the next section, we’ll take a deeper dive into ML.NET’s preprocessing transformers — exploring how to load data, clean it, normalize it, and encode it using production-grade C# code.

🧰 Section 5: Data Preparation Tools in ML.NET

Transforming Raw Data into Machine-Learning-Ready Features

Now that we’ve introduced ML.NET’s architecture and purpose, it’s time to zoom in on the heart of this article: data preparation within ML.NET.

ML.NET offers a rich and evolving catalog of built-in transformers — the building blocks of feature engineering pipelines. These tools allow .NET developers to clean, encode, scale, and shape data into forms that AI models can understand — all without leaving C#.

This section provides a hands-on look at the core ML.NET preprocessing tools, how they’re used, and why they matter.

📥 Loading Data into ML.NET

Everything starts with getting your data into the right structure. ML.NET uses a lazy, memory-efficient format called IDataView, optimized for streaming and transformation.

🗃️ Common Loading Methods

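| Method | Description |
|---|---|
| `LoadFromTextFile<T>()` | Load structured CSV/TSV into a typed object |
| `LoadFromEnumerable<T>()` | Load in-memory collections (e.g., `List<T>`) |
| `LoadFromBinary()` | Load a dataset previously saved in ML.NET's binary `IDataView` format |
| `CreateDatabaseLoader<T>()` | Load rows from a relational database through a `DatabaseSource`; data fetched via EF Core or ADO.NET can also be passed to `LoadFromEnumerable<T>()` |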

Example: Loading from a CSV file

```csharp
var mlContext = new MLContext();

var data = mlContext.Data.LoadFromTextFile<ModelInput>(
    path: "data.csv",
    hasHeader: true,
    separatorChar: ',');
```

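Here `ModelInput` is a class that defines the column schema. For text files, each property is mapped to a file column with a `[LoadColumn(index)]` attribute.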
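For teams whose data already lives in SQL Server, loading straight from the database may be more natural than exporting a CSV first. The following is a hedged sketch using ML.NET's `DatabaseLoader`. The `SalaryRow` type, the `dbo.Employees` table, and its column names are hypothetical, and the code assumes the `System.Data.SqlClient` package is referenced alongside `Microsoft.ML`.

```csharp
using System.Data.SqlClient; // assumption: System.Data.SqlClient NuGet package is installed
using Microsoft.ML;
using Microsoft.ML.Data;

// Hypothetical row type; property names are chosen to match the query's column aliases.
public class SalaryRow
{
    public float YearsExperience { get; set; }
    public string JobTitle { get; set; }
    public float Salary { get; set; }
}

public static class HrDataLoader
{
    // Hypothetical table and columns. Numeric columns are cast to REAL
    // so they line up with the float properties on SalaryRow.
    public const string Query =
        "SELECT CAST(YearsExperience AS REAL) AS YearsExperience, JobTitle, " +
        "CAST(Salary AS REAL) AS Salary FROM dbo.Employees";

    public static IDataView Load(MLContext mlContext, string connectionString)
    {
        DatabaseLoader loader = mlContext.Data.CreateDatabaseLoader<SalaryRow>();
        var source = new DatabaseSource(SqlClientFactory.Instance, connectionString, Query);
        return loader.Load(source);
    }
}
```

Keeping the `SELECT` statement narrow is a deliberate choice here: existing SQL views or stored logic can do the first layer of cleanup, and ML.NET only receives the columns the pipeline actually needs.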

🔧 Common ML.NET Data Preparation Transformers

Let’s walk through the most useful ML.NET data prep tools. These are applied using mlContext.Transforms.

1. Missing Value Handling

```csharp
mlContext.Transforms.ReplaceMissingValues(
    "Age", replacementMode: MissingValueReplacingEstimator.ReplacementMode.Mean)
```

Fills missing `Age` values (NaN in a float column) with the column mean.
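A small in-memory run makes the behavior easy to verify. This sketch assumes the `Microsoft.ML` package; the `Person` class and the `MissingValueDemo` helper are hypothetical names introduced for the example.

```csharp
using System.Linq;
using Microsoft.ML;
using Microsoft.ML.Data;
using Microsoft.ML.Transforms;

// Hypothetical input type for the example.
public class Person
{
    public float Age { get; set; }
}

public static class MissingValueDemo
{
    // Replaces NaN ages with the mean of the non-missing ages and returns the result.
    public static float[] ImputeAges(float[] ages)
    {
        var mlContext = new MLContext();
        IDataView data = mlContext.Data.LoadFromEnumerable(
            ages.Select(a => new Person { Age = a }).ToList());

        var estimator = mlContext.Transforms.ReplaceMissingValues(
            "Age", replacementMode: MissingValueReplacingEstimator.ReplacementMode.Mean);

        // Fit learns the mean; Transform applies it to every missing slot.
        IDataView imputed = estimator.Fit(data).Transform(data);
        return imputed.GetColumn<float>("Age").ToArray();
    }
}
```

Calling `MissingValueDemo.ImputeAges(new[] { 20f, 40f, float.NaN })` should leave the first two values untouched and fill the third with 30, the mean of the values that were present. Because the mean is learned in `Fit`, the same number is reused on production data rather than being recomputed there.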

2. Normalization and Scaling

```csharp
mlContext.Transforms.NormalizeMinMax("Income")
    .Append(mlContext.Transforms.NormalizeMeanVariance("Age"))
```

Brings numeric features onto a comparable scale so that large-magnitude columns do not dominate training.

3. Categorical Encoding

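```csharp
mlContext.Transforms.Categorical.OneHotEncoding("JobTitle")
    .Append(mlContext.Transforms.Categorical.OneHotHashEncoding("Department", numberOfBits: 4))
```

Converts categorical strings into numeric indicator vectors, which is necessary because ML.NET trainers consume numeric features. The hashed variant fixes the vector size in advance (2^`numberOfBits` slots), which helps with high-cardinality columns at the cost of possible hash collisions.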
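One practical question is what happens when production data contains a category that was never seen during training. The sketch below fits `OneHotEncoding` on a small list of titles and then encodes a new one. The `Employee` class, the `JobTitleEncoded` column name, and the `UnseenCategoryDemo` helper are hypothetical; the code assumes the `Microsoft.ML` package.

```csharp
using System.Linq;
using Microsoft.ML;
using Microsoft.ML.Data;

// Hypothetical input type for the example.
public class Employee
{
    public string JobTitle { get; set; }
}

public static class UnseenCategoryDemo
{
    // Fits the encoder on trainingTitles, then encodes a single new title.
    public static float[] Encode(string[] trainingTitles, string newTitle)
    {
        var mlContext = new MLContext();
        IDataView training = mlContext.Data.LoadFromEnumerable(
            trainingTitles.Select(t => new Employee { JobTitle = t }).ToList());

        // Fit learns the category dictionary from the training titles only.
        ITransformer encoder = mlContext.Transforms.Categorical
            .OneHotEncoding("JobTitleEncoded", "JobTitle")
            .Fit(training);

        IDataView incoming = mlContext.Data.LoadFromEnumerable(
            new[] { new Employee { JobTitle = newTitle } });

        // The output vector has one slot per category learned during Fit.
        return encoder.Transform(incoming)
            .GetColumn<float[]>("JobTitleEncoded")
            .First();
    }
}
```

With training titles "Engineer" and "Manager", encoding "Manager" is expected to set one of the two slots to 1, while an unseen title such as "Intern" is expected to come back as an all-zero vector of the same length rather than raising an error. The vector shape stays stable, which is the property the model depends on at inference time.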

4. Text Processing (Featurization)

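```csharp
mlContext.Transforms.Text.FeaturizeText("Comments")
```

Normalizes and tokenizes unstructured text, then converts it into a numeric vector of word and character n-gram features. Stop-word removal is optional (configured through `TextFeaturizingEstimator.Options`), and pretrained word embeddings come from a separate transform, `ApplyWordEmbedding`.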

5. Column Management

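```csharp
mlContext.Transforms.DropColumns("SSN", "UserId")
    // outputKind must be named; the second positional parameter is the input column name
    .Append(mlContext.Transforms.Conversion.ConvertType("Age", outputKind: DataKind.Single))
    .Append(mlContext.Transforms.Concatenate("Features", "Age", "Income", "YearsExperience"))
```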

Includes tools for type conversion, projection, and constructing a final Features vector.

📊 Reference Table: ML.NET Data Prep Transformers

| Transformer | Description | Use Case |
|---|---|---|
| `ReplaceMissingValues()` | Impute missing values with the default value, mean, minimum, or maximum | Incomplete data from real-world systems |
| `NormalizeMinMax()` / `NormalizeMeanVariance()` | Rescale numeric values | Bring data to common scale for models |
| `OneHotEncoding()` | Binary encode categorical strings | Jobs, industries, zip codes |
| `Text.FeaturizeText()` | Turn sentences into numerical vectors | Customer feedback, reviews, emails |
| `ConvertType()` | Cast between primitive types | Aligning with model input requirements |
| `DropColumns()` | Remove unnecessary or sensitive data | Reduce model complexity or comply with PII laws |
| `Concatenate()` | Combine multiple columns into one vector | Final `Features` input for model training |

🧵 Combining Transformers into Pipelines

ML.NET pipelines are chainable — you can stack multiple transformations in one logical flow.

Example:

```csharp
var pipeline = mlContext.Transforms
    .ReplaceMissingValues("Age")
    .Append(mlContext.Transforms.NormalizeMinMax("Income"))
    .Append(mlContext.Transforms.Categorical.OneHotEncoding("JobTitle"))
    .Append(mlContext.Transforms.Concatenate("Features", "Age", "Income", "JobTitle"));
```

This chain:

- Cleans up null `Age` values

- Normalizes `Income`

- Encodes `JobTitle`

- Produces a single `Features` column for training

You can also save and reload the entire pipeline as part of your trained model file — keeping training and inference 100% aligned.

💡 Bonus: Diagnostics and Schema Inspection

After applying a transform, you can inspect the resulting schema:

```csharp
var preview = transformedData.Preview();

foreach (var column in preview.Schema)
    Console.WriteLine($"Column: {column.Name}, Type: {column.Type}");
```

This is useful for debugging transformations, validating column types, and checking that no unintended column (such as the label) has ended up in the feature vector before training.

🧪 Testability and Repeatability

Because ML.NET pipelines are code-based and declarative:

- You can unit test them

- You can version control them

- You can log inputs and outputs for auditing

This makes ML.NET data prep ideal for regulated industries like healthcare, finance, and government — where traceability and consistency are non-negotiable.
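As a sketch of what "unit testing a pipeline" can mean in practice, the helper below checks one property that tends to break silently: the size and element type of the `Features` vector. It is framework-agnostic, so the returned value can be asserted from xUnit, NUnit, or MSTest. The `PipelineChecks` and `TwoFeatureRow` names are hypothetical, and the code assumes the `Microsoft.ML` package.

```csharp
using Microsoft.ML;
using Microsoft.ML.Data;

// Hypothetical sample row used by the self-check below.
public class TwoFeatureRow
{
    public float A { get; set; }
    public float B { get; set; }
}

public static class PipelineChecks
{
    // Returns the length of the "Features" vector,
    // or -1 if the column is missing or is not a vector of floats.
    public static int FeatureVectorLength(IDataView transformed)
    {
        var column = transformed.Schema.GetColumnOrNull("Features");
        if (column?.Type is VectorDataViewType vector &&
            vector.ItemType.Equals(NumberDataViewType.Single))
        {
            return vector.Size;
        }
        return -1;
    }

    // Example check: concatenating two float columns should give a 2-slot vector.
    public static int SampleFeatureLength()
    {
        var mlContext = new MLContext();
        IDataView data = mlContext.Data.LoadFromEnumerable(
            new[] { new TwoFeatureRow { A = 1f, B = 2f } });

        IDataView transformed = mlContext.Transforms
            .Concatenate("Features", "A", "B")
            .Fit(data)
            .Transform(data);

        return FeatureVectorLength(transformed);
    }
}
```

A test that pins the expected feature length will fail as soon as someone adds, removes, or re-encodes a column, which is usually a cheaper signal than a drop in model accuracy weeks later.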

🛠️ Summary

ML.NET’s data prep tools give .NET developers what they’ve always wanted but never had in traditional AI tools:

✅ Full control over every preprocessing step

✅ Declarative, testable, and modular logic

✅ Consistent behavior from training to production

✅ C#-native syntax that feels like LINQ and EF Core

In the next section, we’ll walk through a real-world example pipeline, showing how to take raw data and turn it into a model-ready dataset using ML.NET.

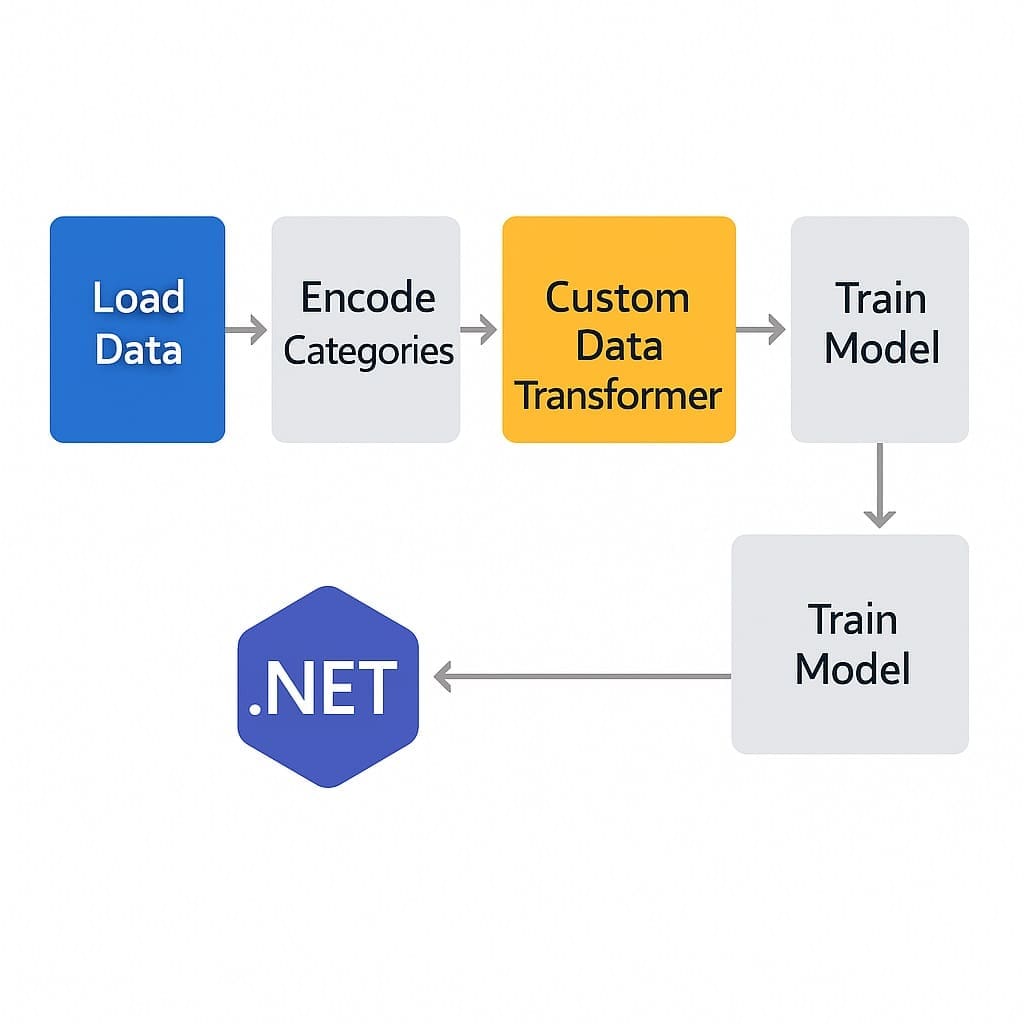

🏗️ Section 6: Sample Data Prep Pipeline in ML.NET

A Step-by-Step Example Using Real-World Business Data

Theory is helpful — but nothing beats seeing the full pipeline in action. In this section, we’ll build a complete ML.NET data preparation workflow using a practical, business-relevant dataset.

We’ll prepare a dataset for a salary prediction model. This is a common use case in HR systems, where an organization wants to estimate expected compensation based on experience, education level, and job title.

🧾 The Dataset: HR Salary Data

Let’s say we have the following columns:

| Column | Type | Description |
|---|---|---|
| `YearsExperience` | float | Number of years in the industry |
| `EducationLevel` | string | Categorical field: “High School”, “Bachelor”, “Master”, etc. |
| `JobTitle` | string | Categorical field: e.g., “Software Engineer”, “Project Manager” |
| `Salary` | float | The numeric label we want to predict |
| `Notes` | string | Optional free-text comments (may contain noise) |

This dataset is messy, real-world, and typical of what you’ll find in enterprise HR databases.

🧪 Goal:

Build a preprocessing pipeline that:

- Replaces missing values

- Encodes categorical variables

- Normalizes numeric values

- Featurizes text (optional)

- Combines all features into one vector

- Is ready for model training or export

🧰 Step-by-Step: Building the Pipeline

1. Define Input Schema

Create a class that maps to the incoming data:

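```csharp
using Microsoft.ML.Data;

public class ModelInput
{
    // LoadColumn maps each property to its zero-based column position in the CSV.
    // The indices assume the column order shown in the table above.
    [LoadColumn(0)] public float YearsExperience { get; set; }
    [LoadColumn(1)] public string EducationLevel { get; set; }
    [LoadColumn(2)] public string JobTitle { get; set; }
    [LoadColumn(3)] public float Salary { get; set; } // Label
    [LoadColumn(4)] public string Notes { get; set; }
}
```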

2. Load the Data

```csharp
var mlContext = new MLContext();

// Returns a lazy IDataView; rows are read only when something consumes them.
var data = mlContext.Data.LoadFromTextFile<ModelInput>(
    path: "hr_salary_data.csv",
    hasHeader: true,
    separatorChar: ',');
```

3. Build the Preprocessing Pipeline

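```csharp
// MissingValueReplacingEstimator lives in the Microsoft.ML.Transforms namespace.
var pipeline = mlContext.Transforms
    // Fill missing YearsExperience values with the column mean
    .ReplaceMissingValues("YearsExperience",
        replacementMode: MissingValueReplacingEstimator.ReplacementMode.Mean)
    // Rescale YearsExperience to the [0, 1] range
    .Append(mlContext.Transforms.NormalizeMinMax("YearsExperience"))
    // Turn each category into a one-hot vector (overwrites the column in place)
    .Append(mlContext.Transforms.Categorical.OneHotEncoding("EducationLevel"))
    .Append(mlContext.Transforms.Categorical.OneHotEncoding("JobTitle"))
    // Convert free text into a numeric feature vector
    .Append(mlContext.Transforms.Text.FeaturizeText("Notes"))
    // Merge all numeric columns into the single "Features" vector
    .Append(mlContext.Transforms.Concatenate("Features",
        "YearsExperience", "EducationLevel", "JobTitle", "Notes"))
    .AppendCacheCheckpoint(mlContext); // Optional but useful for performance
```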

This pipeline performs:

- Null value replacement

- Normalization

- Categorical encoding

- Text vectorization

- Feature concatenation

4. Apply the Pipeline

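```csharp
var transformedData = pipeline.Fit(data).Transform(data);
```

Now transformedData is ready for use in training or evaluation. It contains a Features column alongside the original Salary column, which serves as the label for a regression model. Because that column is named Salary rather than Label, the trainer must be told which column to use (for example, labelColumnName: "Salary").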
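To make the hand-off to training concrete, here is a minimal sketch that appends a regression trainer to the preprocessing chain and evaluates it on a hold-out split. It assumes the pipeline and data objects from the steps above; the SalaryTraining class, the 20% test fraction, and the choice of the Sdca trainer are illustrative assumptions, not part of the original walkthrough.

```csharp
using System;
using Microsoft.ML;

public static class SalaryTraining
{
    // `pipeline` is assumed to be the preprocessing chain from step 3,
    // `data` the IDataView loaded in step 2.
    public static ITransformer TrainAndEvaluate(
        MLContext mlContext, IEstimator<ITransformer> pipeline, IDataView data)
    {
        // Hold out 20% of the rows for evaluation (arbitrary choice for this sketch).
        var split = mlContext.Data.TrainTestSplit(data, testFraction: 0.2);

        // The label column is named explicitly because the input uses "Salary",
        // not the default "Label".
        var trainingPipeline = pipeline.Append(
            mlContext.Regression.Trainers.Sdca(
                labelColumnName: "Salary", featureColumnName: "Features"));

        // Fit preprocessing and trainer together on the training split only.
        ITransformer model = trainingPipeline.Fit(split.TrainSet);

        // Score the held-out rows and compute regression metrics.
        var metrics = mlContext.Regression.Evaluate(
            model.Transform(split.TestSet), labelColumnName: "Salary");

        Console.WriteLine(
            $"R^2: {metrics.RSquared:F3}, RMSE: {metrics.RootMeanSquaredError:F1}");
        return model;
    }
}
```

Fitting on the training split only keeps statistics such as the mean and min/max from leaking information out of the test rows.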

5. Inspect the Result

```csharp
var preview = transformedData.Preview(maxRows: 5);

// List every column in the transformed schema with its type
foreach (var column in preview.Schema)
    Console.WriteLine($"Column: {column.Name}, Type: {column.Type}");
```

Useful for debugging and ensuring the correct data structure before training.

💾 Optional: Save the Pipeline + Model

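You can serialize the fitted preprocessing pipeline (together with the trained model, once a trainer has been appended to it) to ensure consistency in production:

```csharp
// Keep the fitted transformer returned by Fit(); that object is what gets saved.
var trainedModel = pipeline.Fit(data);
mlContext.Model.Save(trainedModel, data.Schema, "salary_model.zip");
```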

This allows inference-time logic to mirror training-time logic exactly — no more “it worked during training, but not in prod” problems.

🧠 Summary

Here’s a recap of the full ML.NET preprocessing flow for our HR salary prediction:

| Step | ML.NET Transformer | Notes |

|---|---|---|

| Handle missing experience | ReplaceMissingValues() | Fill gaps with average |

| Normalize experience | NormalizeMinMax() | Avoid scale issues |

| Encode education/job | OneHotEncoding() | Makes strings machine-readable |

| Process free text | FeaturizeText() | Optional, adds insight from comments |

| Combine features | Concatenate() | Required for model input |

| Cache result | AppendCacheCheckpoint() | Speeds up model training |

📌 Key Takeaways

- You can prep real-world business data with ML.NET using a fluent, readable C# pipeline

- The pipeline handles data cleaning, encoding, scaling, and combination — all inside your .NET application

- You can preview, test, and export the pipeline just like any other production code

- The result is portable, repeatable, and perfectly aligned with your model’s expectations

In the next section, we’ll compare this approach to SSIS, Azure Data Factory, and custom ETL tools — exploring where ML.NET fits best in the broader enterprise data strategy.

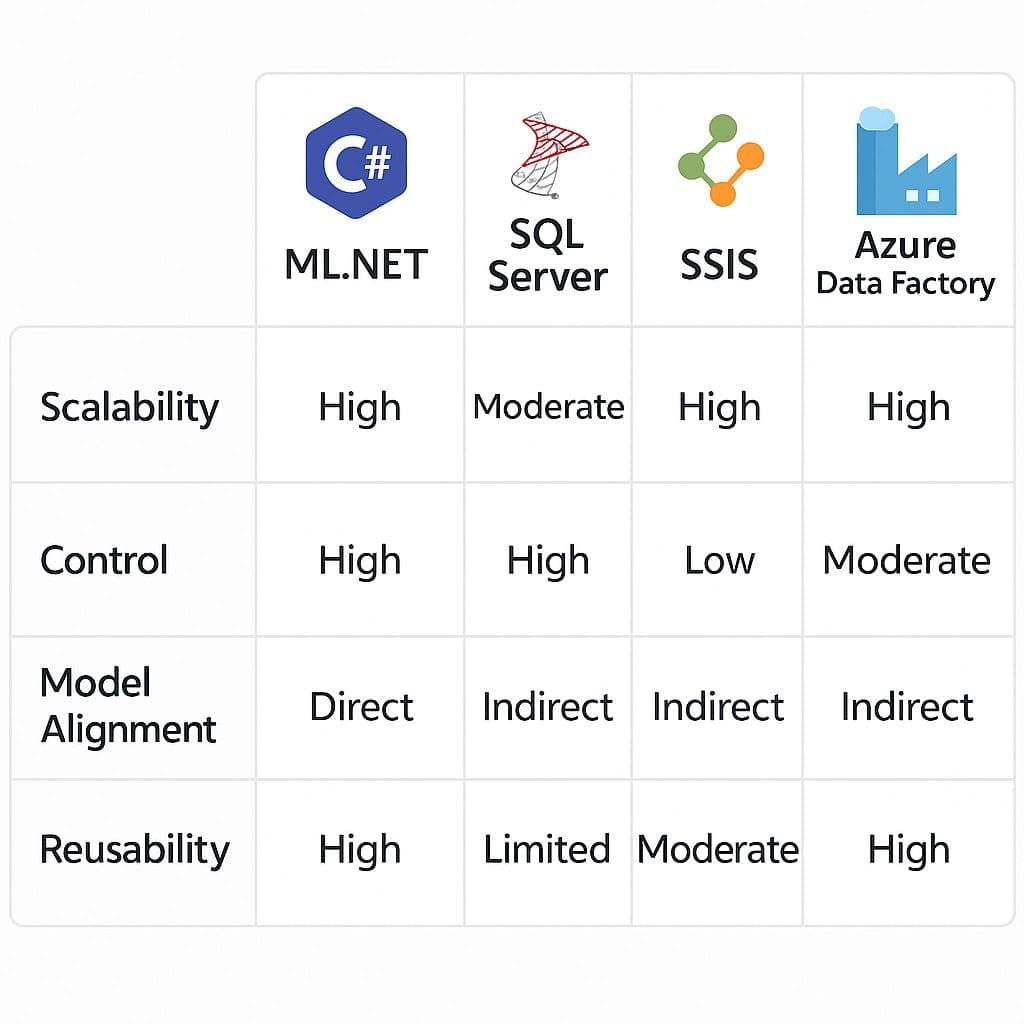

🔄 Section 7: Comparing ML.NET with Traditional ETL Tools

Where ML.NET Fits — and Where It Doesn’t

Let’s be clear: ML.NET is not trying to replace your enterprise ETL stack. Tools like SSIS, Azure Data Factory, and custom SQL Server + C# pipelines are mature, robust systems built for massive-scale data movement, orchestration, and warehousing.

ML.NET is focused on a specific niche:

✅ Transforming data for machine learning models, inside .NET applications

But where do you draw the line? When should you stick to SSIS or your trusted DBA workflows? When does it make sense to use ML.NET instead? This section breaks it down.

🧠 Core Differences: ETL vs. ML Data Prep

| Feature | Traditional ETL Tools | ML.NET Pipelines |

|---|---|---|

| Goal | Move, clean, reshape data for storage/reporting | Transform data for ML training & inference |

| Tooling | SSIS, Data Factory, T-SQL, C# utils | ML.NET Estimators & Transformers |

| Skillset | DBA / IT Ops / BI Team | .NET developer or ML engineer |

| Use Case | Warehousing, reports, dashboards | AI model input/output, preprocessing |

| Target Format | Tables, rows, cubes | Feature vectors, label matrices |

| Runtime Context | Batch or scheduled jobs | Real-time or in-app prediction pipelines |

| Production Use | ETL pipelines or BI dashboards | Integrated model pipelines in services or APIs |

| Auditing | Logs, SSIS reports, triggers | Model versioning and saved pipeline artifacts |

🛠️ Use Case Comparison: Which Tool to Use When?

| Scenario | Best Tool | Why |

|---|---|---|

| Importing large CSVs into a database | SSIS or ADF | Designed for bulk data ingestion |

| Building a model to predict customer churn | ML.NET | Tight C# integration and built-in transforms |

| Cleaning financial data nightly for reports | SQL + SSIS | Well-established and fast for known schema |

| Creating a predictive model pipeline inside an ASP.NET API | ML.NET | Declarative, reusable, model-aligned logic |

| Tokenizing millions of text comments from customers | ML.NET | Has optimized built-in FeaturizeText() |

| Aggregating daily metrics into cubes | Azure Data Factory | Designed for long-term, large-scale ETL |

🧩 Hybrid Strategy: ML.NET + ETL Together

In real-world systems, you don’t choose one tool — you compose them.

Example:

- Azure Data Factory extracts data from SQL, CRM, or blob storage

- SSIS or SQL scripts clean up missing rows, basic formatting

- ML.NET handles:

  - Final normalization

  - Text vectorization

  - Feature engineering

  - Model inference or export

This division of labor keeps the right tools doing what they do best.

🧱 Strengths of ML.NET for Preprocessing

ML.NET offers capabilities traditional ETL tools don’t:

| Capability | Benefit |

|---|---|

| Text Featurization | Built-in support for NLP-style transformations |

| Model-Aware Pipelines | Ensures the same prep logic is used during training and production |

| Serialization of Transforms | Save data prep logic alongside model binary |

| Dynamic Column Handling | Supports transformations on unseen or runtime-defined columns |

| API-Friendly | Ideal for use in microservices or backend APIs that include AI |

| Streaming-Compatible | Can process rows one at a time with low memory use |

🚧 When NOT to Use ML.NET for Data Prep

ML.NET is not a silver bullet. It’s not great at:

- Orchestrating multi-source joins across disparate systems

- Monitoring pipeline performance across ETL stages

- Handling petabyte-scale movement between warehouses

- Managing permissions, triggers, or database policy enforcement

- Coordinating data scheduling, retries, and failure handling

If you’re moving 20 million rows between SAP, Oracle, and Azure Synapse every night… ML.NET is not your tool.

⚖️ Summary Table: ML.NET vs. Traditional ETL Tools

| Feature | ML.NET | SSIS / ADF / SQL |

|---|---|---|

| Native to .NET | ✅ | ❌ |

| Declarative pipelines | ✅ | ❌ |

| Reusability in inference | ✅ | ❌ |

| Easy joins across systems | ❌ | ✅ |

| Designed for massive batch jobs | ⚠️ | ✅ |

| Text, NLP, encoding support | ✅ | ❌ |

| Graphical authoring tools | ❌ | ✅ |

| DevOps-friendly (code-first) | ✅ | ⚠️ (more config-driven) |

| Target output | ML-ready features | Structured reports, cubes |

🧩 Bottom Line

Use SSIS, ADF, or SQL Server when:

- You’re moving large volumes of data between systems

- You’re building operational data pipelines or reports

- You need time-based scheduling, monitoring, or alerts

Use ML.NET when:

- You’re preparing data for training or scoring models

- You want prep logic embedded directly in your .NET apps

- You need guaranteed consistency from training to production

- You’re doing lightweight, modular transformations at runtime

Use both when:

- Your raw data pipeline needs scale and structure (ETL)

- Your AI pipeline needs precision, alignment, and reusability (ML.NET)

In the next section, we’ll tackle performance — showing how ML.NET handles speed, memory, and scale, and how you can optimize data prep pipelines for enterprise-grade systems.

📈 Section 8: Performance Considerations

How to Scale ML.NET Data Prep for Enterprise Workloads

When it comes to AI data pipelines, performance matters — not just in training, but in preprocessing. Poorly optimized data prep can bottleneck your entire system, especially when:

- Processing large datasets

- Operating in production with real-time APIs

- Training repeatedly during hyperparameter tuning

- Serving hundreds of concurrent inference requests

ML.NET is designed with performance in mind — but like any framework, it rewards those who understand what’s fast, what’s lazy, and what needs to be cached.

This section shows you how to make ML.NET’s data prep work efficiently at scale, both during training and inference.

⚙️ ML.NET Performance Philosophy

ML.NET is lazy and memory-efficient by default.

This means:

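- Data is streamed row-by-row via IDataView

- Transform() only builds a lazy view; rows are computed when something reads them (a trainer, Preview(), or CreateEnumerable(), for example), whereas Fit() scans the data to learn its parameters

- Pipelines don’t copy entire datasets into memory unless explicitly forced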

These defaults are great for handling millions of rows without blowing up RAM — especially useful in environments with constrained compute or shared infrastructure.

🚀 Key Optimization Techniques

✅ 1. Use AppendCacheCheckpoint()

Caching improves performance when the same data is reused across multiple training or validation steps.

```csharp
var pipeline = mlContext.Transforms
    .NormalizeMinMax("Income")
    .AppendCacheCheckpoint(mlContext);
```

📌 When to use:

- During iterative model training

- When your pipeline does expensive transformations (e.g., FeaturizeText())

- When you’ll call Fit() or Evaluate() multiple times

📌 When NOT to use:

- On real-time inference paths (adds overhead)

- When memory is extremely limited

✅ 2. Select Only Required Columns Early

Avoid passing unnecessary data downstream.

```csharp
mlContext.Transforms.SelectColumns("Age", "Income", "JobTitle")
```

Every column adds memory and transform overhead — especially with wide tables. Prune early, prune often.

✅ 3. Use Batching for Large Files

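For massive CSVs or streaming datasets, avoid memory spikes by letting the loader stream the file rather than reading it into memory first. The call below takes no batch-size argument; it only sets parsing options, and the rows are still read lazily:

```csharp
mlContext.Data.LoadFromTextFile<ModelInput>(
    path: "large.csv",
    hasHeader: true,
    separatorChar: ',',
    allowQuoting: true,     // honor quoted fields that contain separators
    trimWhitespace: true);  // strip trailing whitespace from lines
```

Because ML.NET processes rows lazily, the loader itself needs no manual chunking. Very large source files can still benefit from being split into batches upstream and from line-level prevalidation before they reach the pipeline.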

✅ 4. Use Parallelization for Custom Transforms

If you write your own ITransformer, make sure its Transform() logic is safe to call from multiple threads, and use vectorized operations where they help.

Do not assume ML.NET will parallelize your custom code for you: any threading inside a custom transform is your responsibility.

✅ 5. Cache Static Metadata or Lookup Tables

When joining external tables or enriching data:

- Do the join before ML.NET if possible (e.g., in SQL)

- Or cache lookup tables as static dictionaries in your transform class

This avoids per-record I/O or repeated calls to databases and APIs.
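As a sketch of the cached-lookup idea, the example below keeps a small dictionary in a static field and applies it through ML.NET's CustomMapping transform, so no I/O happens per row. The TitleRow and TitleFamilyRow types, the JobFamily column, and the dictionary contents are hypothetical; in a real system the table would be loaded once at startup from SQL or a file.

```csharp
using System;
using System.Collections.Generic;
using Microsoft.ML;

// Hypothetical input and output shapes for the enrichment step.
public class TitleRow { public string JobTitle { get; set; } }
public class TitleFamilyRow { public string JobFamily { get; set; } }

public static class JobFamilyLookup
{
    // Built once and shared by every row; never queried per record.
    private static readonly Dictionary<string, string> Families =
        new Dictionary<string, string>(StringComparer.OrdinalIgnoreCase)
        {
            ["Software Engineer"] = "Engineering",
            ["Project Manager"] = "Management",
        };

    // Row-level mapping: pure in-memory lookup with a fallback value.
    public static void Map(TitleRow src, TitleFamilyRow dst)
    {
        dst.JobFamily =
            src.JobTitle != null && Families.TryGetValue(src.JobTitle, out var family)
                ? family
                : "Unknown";
    }

    // contractName is null because this sketch does not save the transform.
    public static IEstimator<ITransformer> CreateEstimator(MLContext mlContext) =>
        mlContext.Transforms.CustomMapping<TitleRow, TitleFamilyRow>(
            Map, contractName: null);
}
```

The resulting JobFamily column can then be one-hot encoded like any other categorical field.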

✅ 6. Be Strategic with Text Featurization

FeaturizeText() is powerful — but expensive.

Options to improve performance:

- Truncate long fields before featurization

- Avoid n-gram extraction unless truly useful

- If using word embeddings, prefer a lower-dimensional pretrained embedding and cap the number of tokens per document

✅ 7. Benchmark Preprocessing Time

ML.NET doesn’t offer built-in performance tracing, but simple Stopwatch usage around Fit() or Transform() calls gives visibility:

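```csharp
using System.Diagnostics;

var sw = Stopwatch.StartNew();
// Fit() scans the data; Transform() is lazy, so this mostly measures Fit().
var transformedData = pipeline.Fit(rawData).Transform(rawData);
sw.Stop();
Console.WriteLine($"Fit + Transform took: {sw.ElapsedMilliseconds}ms");
```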

Use this during development to track regressions.
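Because Transform() returns a lazy view, timing it alone can look misleadingly fast. The sketch below separates the three phases and forces the transformed rows to be computed by reading the Features column. The PrepBenchmark class is hypothetical, and it assumes the pipeline produces a float vector column named Features.

```csharp
using System;
using System.Diagnostics;
using Microsoft.ML;
using Microsoft.ML.Data;

public static class PrepBenchmark
{
    public static void Measure(IEstimator<ITransformer> pipeline, IDataView rawData)
    {
        var sw = Stopwatch.StartNew();
        ITransformer fitted = pipeline.Fit(rawData);       // eager: scans the data
        Console.WriteLine($"Fit: {sw.ElapsedMilliseconds} ms");

        sw.Restart();
        IDataView transformed = fitted.Transform(rawData); // lazy: no rows computed yet
        Console.WriteLine($"Transform (lazy): {sw.ElapsedMilliseconds} ms");

        sw.Restart();
        long rows = 0;
        // Reading the column pulls every row through the upstream transforms.
        foreach (var features in transformed.GetColumn<float[]>("Features"))
            rows++;
        Console.WriteLine($"Materialize {rows} rows: {sw.ElapsedMilliseconds} ms");
    }
}
```

The third number is usually the one that matters for preprocessing cost, since it reflects the work a trainer or scorer would trigger.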

🔍 Memory Footprint Considerations

ML.NET typically keeps its memory footprint low due to IDataView, but memory spikes can occur when:

- Using Preview() on large datasets (avoid in production)

- Loading large in-memory List<T> with LoadFromEnumerable()

- Calling .ToList() or Enumerable.ToArray() on transformed data

Stick with streaming interfaces where possible.

🧠 Real-World Example: Scaling a Prediction API

Let’s say you’ve built a .NET Core API to predict housing prices using a saved ML.NET model and prep pipeline.

To keep inference under 100ms:

| Action | Optimization |

|---|---|

| Load model + pipeline once at startup | Cache with ITransformer singleton |

| Validate input columns before transform | Early column projection |

| Drop unused columns from payload | Saves memory and CPU cycles |

| Avoid caching in production inference | Skip AppendCacheCheckpoint() |

| Use pooled memory buffers | Avoids frequent allocations on each request |

You don’t need GPU acceleration for fast inference — just tight prep logic and good software hygiene.

📊 Summary: ML.NET Prep Optimization Cheat Sheet

| Optimization | When to Use |
|---|---|
| AppendCacheCheckpoint() | Repeated training on same dataset |
| SelectColumns() early | Datasets with 20+ columns |
| Avoid Preview() on large sets | Production use |
| Use batching for file input | 10M+ rows |
| Reduce text featurization size | Free-text inputs or NLP |
| Avoid loading entire datasets into memory | Use IDataView, not List<T> |
| Measure with Stopwatch | Local benchmarking or A/B testing |

🏁 Bottom Line

ML.NET offers a balanced mix of performance and control — especially for mid-to-large datasets common in enterprise apps. But like any tool, it rewards developers who optimize thoughtfully.

In the next section, we’ll explore how to decide whether to use ML.NET, C#, SQL, or external tools — and how to create a decision matrix to guide your data prep strategy.

🧩 Section 9: When to Use ML.NET for Data Prep (and When Not To)

Making Smart, Strategic Choices About Tools and Control

By now, you’ve seen that ML.NET offers a powerful, C#-native way to prepare data for machine learning — but also that it’s not a silver bullet.

This section is about making strategic decisions.

The truth is: some data prep is better done in SQL. Some in C#. Some in ML.NET. And sometimes in Azure or with tools like Power BI.

The best developers and architects don’t pick one tool — they build the right toolchain for the job. Here’s how to think through when ML.NET is the right choice — and when it’s not.

✅ When ML.NET Data Prep is a Great Fit

ML.NET shines in very specific scenarios, especially for mid-to-senior .NET developers building production-grade AI applications.

🎯 Use ML.NET When:

| Scenario | Why ML.NET Works |

|---|---|

| You’re preparing data for a .NET-based ML model | Ensures training/inference parity |

| Your model runs inside a .NET API or application | Pipeline can be reused in production with no changes |

| You want versioned, testable data prep logic | Pipelines are code, not config |

| You need categorical encoding or text featurization | Built-in, optimized transformers |

| You want to avoid switching to Python or R | Stay 100% in C# |

| You want to experiment quickly with preprocessing variations | Pipelines are easy to modify and rerun |

| You’re building a prototype with a small to medium-sized dataset | Easy to iterate without separate infrastructure |

| You need to save the model and prep steps together | Supports full pipeline serialization |

🧠 Think of ML.NET as:

A self-contained preprocessing lab for .NET teams that need full control, consistency, and repeatability — especially when the same logic must run in both dev and prod.

⚠️ When ML.NET Is Not the Best Fit

There are scenarios where ML.NET becomes overkill, inefficient, or too narrow in scope.

🚫 Avoid ML.NET When:

| Scenario | Better Alternative |

|---|---|

| You’re doing high-volume ETL across systems | Use Azure Data Factory, SSIS, or Spark |

| You need joins across dozens of tables and systems | Use SQL Server or dedicated data lake tools |

| You’re not doing AI — just cleansing/reporting | Use your existing ETL or Power BI flows |

| You’re preparing multi-terabyte datasets | Use Python, Spark, or Databricks |

| Your organization has a data science team already using Python | Let them handle prep in pandas or scikit-learn |

| You need built-in visualization or dashboards | Use Power BI or Excel |

| You don’t need to reuse the data prep logic later | Quick scripts in SQL or C# may suffice |

ML.NET is not a replacement for mature, high-scale data engineering stacks. It’s a precision tool for AI workflows — not a general-purpose hammer.

🔧 Hybrid Pipelines Are Often Best

Real enterprise systems combine tools, like:

- SQL for joins, filtering, and base cleaning

- ML.NET for scaling, encoding, text vectorization, and model-specific prep

- Azure Data Factory for orchestration and movement between systems

- C# functions for custom logic or transformations not natively supported

Example Flow:

- Pull data from SQL Server with basic filters and null handling

- Use ML.NET to:

  - Normalize numerical fields

  - Encode job titles and departments

  - Featurize optional notes/comments

- Train model or run inference

- Write result back to a database or serve via API

ML.NET fits neatly between your existing data warehouse and your prediction layer.
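The example flow could be sketched as follows, using ML.NET's DatabaseLoader so that SQL handles filtering and null handling and ML.NET handles the model-specific prep. The table name dbo.Employees, its columns, the EmployeeRow class, and the Sdca trainer are all assumptions made for illustration. The SELECT list is kept in the same order as the properties, and numeric columns are cast to REAL so they arrive as float values.

```csharp
using System.Data.SqlClient;
using Microsoft.ML;
using Microsoft.ML.Data;

// Hypothetical row shape; property order mirrors the SELECT list below.
public class EmployeeRow
{
    public float YearsExperience { get; set; }
    public string JobTitle { get; set; }
    public string Notes { get; set; }
    public float Salary { get; set; }
}

public static class HybridFlow
{
    public static ITransformer Train(MLContext mlContext, string connectionString)
    {
        // Step 1: SQL does the base filtering and null handling.
        const string sql =
            "SELECT CAST(YearsExperience AS REAL) AS YearsExperience, " +
            "JobTitle, ISNULL(Notes, '') AS Notes, " +
            "CAST(Salary AS REAL) AS Salary " +
            "FROM dbo.Employees WHERE Salary IS NOT NULL";

        var loader = mlContext.Data.CreateDatabaseLoader<EmployeeRow>();
        var source = new DatabaseSource(SqlClientFactory.Instance, connectionString, sql);
        IDataView data = loader.Load(source);

        // Step 2: ML.NET does normalization, encoding, and text featurization.
        var pipeline = mlContext.Transforms.NormalizeMinMax("YearsExperience")
            .Append(mlContext.Transforms.Categorical.OneHotEncoding("JobTitle"))
            .Append(mlContext.Transforms.Text.FeaturizeText("Notes"))
            .Append(mlContext.Transforms.Concatenate(
                "Features", "YearsExperience", "JobTitle", "Notes"))
            // Step 3: train on the prepared features.
            .Append(mlContext.Regression.Trainers.Sdca(labelColumnName: "Salary"));

        return pipeline.Fit(data);
    }
}
```

The returned transformer can then be saved or used for scoring, and the predictions written back to the database by ordinary data-access code.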

🧭 Decision Matrix: Which Tool Should You Use?

| Question | Recommended Tool |

|---|---|

| Do you need to train and serve a model inside .NET? | ML.NET |

| Do you need to move millions of rows across systems? | Azure Data Factory / SSIS |

| Are you doing basic filtering and column cleanup? | SQL Server / EF Core |

| Do you need reusable, serialized pipelines? | ML.NET |

| Are you training in Python and serving via REST? | scikit-learn or TensorFlow pipelines |

| Do you need explainability and versioning for compliance? | ML.NET + serialization + Git |

| Are you building a data lake or MLOps system? | Azure Synapse / Spark / Databricks |

🧠 Real-World Guidance for .NET Devs and DBAs

| Role | Suggested Approach |

|---|---|

| .NET Developer | Use ML.NET for full data prep, especially for prototypes and internal tools |

| DBA or ETL Engineer | Clean and prep core data in SQL or SSIS, then hand off to ML.NET |

| Team Lead / Architect | Standardize around reusable ML.NET pipelines for AI workloads, and use traditional tools for everything else |

| DevOps / Infra | Ensure model + pipeline binaries are version-controlled and environment-consistent |

✅ Summary

Use ML.NET for data prep when:

- You’re working with ML models inside .NET

- You need encoding, scaling, or NLP features

- You want consistency between training and inference

- You need pipelines you can test, version, and serialize

Avoid ML.NET for:

- Massive ETL jobs across systems

- Reporting/dashboard pipelines

- Non-AI workflows

The smartest teams don’t pick one tool — they pick the right mix.

ML.NET is a scalpel in a toolbelt full of hammers. Know when to reach for it.

In the next section, we’ll look at how to extend ML.NET with custom transformations, so you’re not limited by the built-in feature set.

🔌 Section 10: Extending ML.NET with Custom Transforms

Building Reusable, Domain-Specific Preprocessing for Unique Business Needs

ML.NET provides a powerful library of built-in transformers for most common preprocessing tasks — scaling, encoding, tokenization, missing value replacement, and so on. But what if your business needs something very specific?

Maybe you need to:

- Mask personally identifiable information (PII)

- Apply domain-specific scaling (e.g., logarithmic transformation)

- Inject metadata from external sources (e.g., enrichment via lookup tables)

- Filter or transform based on conditional logic not covered by existing transformers

The good news? ML.NET is extensible.

In this section, you’ll learn how to write custom data transformations that plug seamlessly into the ML.NET pipeline architecture — giving you the power to shape data exactly how your use case demands.

🧱 The ML.NET Transformer Model: Recap

To extend ML.NET, you’ll build a custom transformer and a corresponding estimator.

- Estimator: Defines how the transformer is trained or initialized

- Transformer: Applies the actual transformation logic during Transform()

This mirrors ML.NET’s internal architecture — and ensures your custom code is fully reusable, testable, and serializable.

🛠 Use Case: Masking PII in Free-Text Fields

Let’s say you want to scan a Notes field and redact phone numbers or emails before vectorizing the text for model training.

This is not built into ML.NET — but it’s easy to implement.

✅ Step 1: Create the Transformer Class

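```csharp
using System;
using System.Linq;
using System.Text.RegularExpressions;
using Microsoft.ML;
using Microsoft.ML.Data;

public class PiiMaskingTransformer : ITransformer
{
    private readonly MLContext _mlContext;

    public PiiMaskingTransformer(MLContext mlContext)
    {
        _mlContext = mlContext;
    }

    public IDataView Transform(IDataView input)
    {
        // Rebuild each row with Notes masked; all other fields pass through unchanged.
        var maskedRows = _mlContext.Data
            .CreateEnumerable<ModelInput>(input, reuseRowObject: false)
            .Select(row => new ModelInput
            {
                Notes = MaskPii(row.Notes),
                JobTitle = row.JobTitle,
                EducationLevel = row.EducationLevel,
                YearsExperience = row.YearsExperience,
                Salary = row.Salary
            });

        // Wrap the masked rows back into an IDataView.
        return _mlContext.Data.LoadFromEnumerable(maskedRows);
    }

    private static string MaskPii(string text)
    {
        if (string.IsNullOrWhiteSpace(text)) return text;

        // Very basic email regex, for illustration only
        var emailPattern = @"\b[\w\.-]+@[\w\.-]+\.\w{2,4}\b";
        return Regex.Replace(text, emailPattern, "[EMAIL]");
    }

    // This transform rebuilds the whole view, so it is not a row-to-row mapper.
    public bool IsRowToRowMapper => false;

    public DataViewSchema GetOutputSchema(DataViewSchema inputSchema) => inputSchema;

    public IRowToRowMapper GetRowToRowMapper(DataViewSchema inputSchema) =>
        throw new NotSupportedException("This transformer is not a row-to-row mapper.");

    // Required by the interface; serialization is not implemented in this sketch.
    public void Save(ModelSaveContext ctx) =>
        throw new NotSupportedException("Saving is not implemented for this example.");
}
```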

✅ Step 2: Create a Wrapper Estimator

```csharp
public class PiiMaskingEstimator : IEstimator<ITransformer>
{
    private readonly MLContext _mlContext;

    public PiiMaskingEstimator(MLContext mlContext)
    {
        _mlContext = mlContext;
    }

    // Nothing is learned from the data, so Fit() just returns the transformer.
    public ITransformer Fit(IDataView input)
    {
        return new PiiMaskingTransformer(_mlContext);
    }

    // Masking changes values, not columns, so the schema passes through unchanged.
    public SchemaShape GetOutputSchema(SchemaShape inputSchema) => inputSchema;
}
```

✅ Step 3: Add Your Custom Estimator to the Pipeline

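```csharp
var pipeline = new PiiMaskingEstimator(mlContext)
    .Append(mlContext.Transforms.Text.FeaturizeText("Notes"))
    // JobTitle is a string, so it must be encoded before it can be concatenated
    .Append(mlContext.Transforms.Categorical.OneHotEncoding("JobTitle"))
    .Append(mlContext.Transforms.Concatenate("Features", "Notes", "JobTitle", "YearsExperience"));
```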

Now you’ve seamlessly added custom preprocessing logic before ML.NET’s native transformers — without disrupting the pipeline model.
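As an alternative to hand-writing ITransformer, ML.NET ships a CustomMapping transform that applies a row-level function inside the pipeline and streams rows instead of rebuilding the dataset. The sketch below expresses the same masking idea that way; it is a swapped-in technique, not the approach shown above. The NotesIn and NotesOut types and the MaskedNotes and NotesFeatures column names are hypothetical.

```csharp
using System.Text.RegularExpressions;
using Microsoft.ML;

// Hypothetical shapes: only the columns the mapping reads and writes.
public class NotesIn { public string Notes { get; set; } }
public class NotesOut { public string MaskedNotes { get; set; } }

public static class PiiMasking
{
    // Same basic email pattern as the example above; illustration only.
    private static readonly Regex Email =
        new Regex(@"\b[\w\.-]+@[\w\.-]+\.\w{2,4}\b", RegexOptions.Compiled);

    public static string Mask(string text) =>
        string.IsNullOrWhiteSpace(text) ? text : Email.Replace(text, "[EMAIL]");

    // Row-level mapping invoked by ML.NET for each row that is read.
    public static void Map(NotesIn src, NotesOut dst) =>
        dst.MaskedNotes = Mask(src.Notes);

    // Mask first, then featurize the masked text into a new vector column.
    public static IEstimator<ITransformer> BuildPipeline(MLContext mlContext) =>
        mlContext.Transforms
            .CustomMapping<NotesIn, NotesOut>(Map, contractName: "PiiMasking")
            .Append(mlContext.Transforms.Text.FeaturizeText(
                "NotesFeatures", "MaskedNotes"));
}
```

Because Mask is a plain static method, it can be unit tested without ML.NET: for example, Mask("mail bob@example.com now") yields "mail [EMAIL] now".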

🔍 Tips for Writing Efficient Custom Transformers

| Tip | Reason |
|---|---|
| Use CreateEnumerable() carefully | Creates a .NET object per row, and calling ToList() on the result holds everything in memory, so it is better suited to small/medium datasets |
| Avoid async or I/O in transforms | Keep logic CPU-bound and deterministic |
| Implement IsRowToRowMapper if applicable | Required for use in real-time prediction |
| Use caching where possible | Speed up performance on repeated calls |
| Implement schema validation | Optional, but helps with debugging and tooling |

🧪 When to Extend ML.NET

| Good Reasons to Extend | Poor Reasons to Extend |

|---|---|

| Redacting sensitive data | Replacing a built-in transformer out of curiosity |

| Domain-specific transformations (e.g., score conversions, thresholds) | Wrapping simple logic you could do in SQL |

| Integrating rules from external services (e.g., business logic APIs) | Reinventing encoding/scaling from scratch |

| Legacy data translation (e.g., old label mappings) | Avoiding ETL steps better handled upstream |

Extending ML.NET is powerful — but only when needed. Use it to embed business knowledge, not to bypass existing tools.

🔁 Can Custom Transformers Be Reused?

Yes — if you follow the ITransformer and IEstimator interfaces, your custom logic:

- Can be unit tested

- Can be chained into ML.NET pipelines

- Can be saved and loaded with models, provided you also implement serialization for the custom step

- Can be used at inference time in APIs

This ensures consistency across training and production environments — one of the biggest challenges in AI deployment.
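Saving and loading is the part that needs extra care. For transforms built with CustomMapping, ML.NET expects a factory class marked with a contract name and registered before the model is loaded. The sketch below shows that pattern with a deliberately trivial mapping; the RawNotes and CleanNotes types, the "NotesCleaner" contract name, and the helper class are hypothetical.

```csharp
using System;
using Microsoft.ML;
using Microsoft.ML.Transforms;

public class RawNotes { public string Notes { get; set; } }
public class CleanNotes { public string CleanedNotes { get; set; } }

// The contract name ties the saved model to this factory at load time.
[CustomMappingFactoryAttribute("NotesCleaner")]
public class NotesCleanerFactory : CustomMappingFactory<RawNotes, CleanNotes>
{
    public static void Map(RawNotes src, CleanNotes dst) =>
        dst.CleanedNotes = (src.Notes ?? string.Empty).Trim();

    public override Action<RawNotes, CleanNotes> GetMapping() => Map;
}

public static class NotesCleanerModel
{
    public static void Save(MLContext mlContext, IDataView sample, string path)
    {
        // The contractName here must match the attribute on the factory.
        var estimator = mlContext.Transforms.CustomMapping<RawNotes, CleanNotes>(
            NotesCleanerFactory.Map, contractName: "NotesCleaner");

        mlContext.Model.Save(estimator.Fit(sample), sample.Schema, path);
    }

    public static ITransformer Load(MLContext mlContext, string path)
    {
        // Register the assembly that contains the factory before loading.
        mlContext.ComponentCatalog.RegisterAssembly(typeof(NotesCleanerFactory).Assembly);
        return mlContext.Model.Load(path, out _);
    }
}
```

The saved file stores only the contract name, so the assembly containing the factory must be deployed alongside the model.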

🧠 Summary

| Feature | Value |

|---|---|

| Custom transformers | Let you inject business-specific logic into ML.NET |

| Follows ML.NET design | Estimator + Transformer pattern |

| Testable and serializable | Can be used in training and inference |

| Great for edge cases | Masking, enrichment, external integration |

| Should be used judiciously | Avoid reinventing common logic or bloating pipelines |

In the next section, we’ll explore how to use ML.NET pipelines in production, including saving models, deploying them as APIs, and ensuring consistent prep at inference time.

🛠️ Section 11: Integrating ML.NET Prep into Production Systems

How to Operationalize Data Preparation for Real-World Use Cases

Getting a machine learning model to work in development is one thing. Deploying that model — with reliable, consistent preprocessing — is another challenge entirely. Most AI projects fail not because of the model, but because the data pipeline breaks when moved to production.

ML.NET solves this problem by treating data prep as a first-class citizen, enabling the same logic used during training to be reused when serving predictions.

This section explains how to:

- Save and load models with preprocessing logic embedded

- Expose models via APIs or services

- Handle dynamic or runtime data

- Maintain versioning and rollback safety

🎯 Why Integration Matters

Most enterprise applications are not experiments — they’re systems that:

- Must serve predictions consistently

- Operate with real-world, messy data

- Require traceability and governance

- Need to evolve without breaking downstream consumers

You need confidence that:

✅ Preprocessing is applied the same way in training and production

✅ Models behave predictably, even when inputs change

✅ Changes to data logic can be tested and rolled back

💾 Saving the Full Model + Preprocessing Pipeline

In ML.NET, when you call .Fit(), the trained model includes the entire data pipeline — not just the model weights.

Example:

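```csharp
var pipeline = mlContext.Transforms
    .NormalizeMinMax("YearsExperience")
    .Append(mlContext.Transforms.Categorical.OneHotEncoding("JobTitle"))
    .Append(mlContext.Transforms.Concatenate("Features", "YearsExperience", "JobTitle"))
    // FastTree requires the Microsoft.ML.FastTree package. The label is named
    // explicitly because the input column is "Salary", not the default "Label".
    .Append(mlContext.Regression.Trainers.FastTree(labelColumnName: "Salary"));

// Fit() trains the preprocessing steps and the model as one unit.
var model = pipeline.Fit(trainingData);

mlContext.Model.Save(model, trainingData.Schema, "trained_model.zip");
```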

✅ The .zip file contains:

- The preprocessing steps

- The schema

- The model itself

You can deploy this file as-is into a .NET application or API.

📥 Loading and Using the Model in Production

```csharp
ITransformer trainedModel;
DataViewSchema inputSchema;

// Load the fitted pipeline and model, plus the schema it was saved with.
using var stream = new FileStream("trained_model.zip", FileMode.Open, FileAccess.Read);
trainedModel = mlContext.Model.Load(stream, out inputSchema);
```

Now you can create a prediction engine:

```csharp
var predictionEngine = mlContext.Model.CreatePredictionEngine<ModelInput, ModelOutput>(trainedModel);
```

Then run predictions with automatic preprocessing:

```csharp
var result = predictionEngine.Predict(new ModelInput
{
    YearsExperience = 5,
    JobTitle = "Software Engineer"
});
```

There’s no need to duplicate or reimplement data prep logic — it’s already baked into the model.
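The ModelOutput type used in these calls is not defined in this guide. A minimal sketch, assuming a regression model, could look like the class below; the PredictedSalary property name is a hypothetical choice.

```csharp
using Microsoft.ML.Data;

public class ModelOutput
{
    // Regression trainers write their prediction to a column named "Score";
    // ColumnName maps that column onto a more descriptive property.
    [ColumnName("Score")]
    public float PredictedSalary { get; set; }
}
```

With this class in place, the prediction is read as result.PredictedSalary.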

🌐 Deploying as an ASP.NET Core API

ML.NET pipelines fit naturally into ASP.NET Core apps.

Example API Controller

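```csharp
using Microsoft.AspNetCore.Mvc;
using Microsoft.Extensions.ML;

[ApiController]
[Route("predict")]
public class PredictionController : ControllerBase
{
    // PredictionEngine is not thread-safe, so the pooled variant is injected instead.
    private readonly PredictionEnginePool<ModelInput, ModelOutput> _enginePool;

    public PredictionController(PredictionEnginePool<ModelInput, ModelOutput> enginePool)
    {
        _enginePool = enginePool;
    }

    [HttpPost]
    public ActionResult<ModelOutput> Predict([FromBody] ModelInput input)
    {
        // The pool hands out an engine for this call and reclaims it afterwards.
        var prediction = _enginePool.Predict(input);
        return Ok(prediction);
    }
}
```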

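PredictionEngine itself is not thread-safe. Register a pool in Startup.cs with services.AddPredictionEnginePool<ModelInput, ModelOutput>() (from the Microsoft.Extensions.ML package) and inject PredictionEnginePool<ModelInput, ModelOutput> for thread-safe reuse.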
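A minimal registration sketch follows. It uses the newer Program.cs hosting style rather than Startup.cs, and it assumes the Microsoft.Extensions.ML package, a model file named trained_model.zip next to the application, and the ModelInput and ModelOutput shapes shown here (with a hypothetical PredictedSalary property).

```csharp
using Microsoft.AspNetCore.Builder;
using Microsoft.Extensions.DependencyInjection;
using Microsoft.Extensions.ML;
using Microsoft.ML.Data;

var builder = WebApplication.CreateBuilder(args);
builder.Services.AddControllers();

// Register a pool of prediction engines backed by the saved model file.
// watchForChanges reloads the model when the file is replaced on disk.
builder.Services
    .AddPredictionEnginePool<ModelInput, ModelOutput>()
    .FromFile(filePath: "trained_model.zip", watchForChanges: true);

var app = builder.Build();
app.MapControllers();
app.Run();

// Input and output shapes assumed by this sketch.
public class ModelInput
{
    public float YearsExperience { get; set; }
    public string JobTitle { get; set; }
}

public class ModelOutput
{
    [ColumnName("Score")]
    public float PredictedSalary { get; set; }
}
```

In a Startup.cs-based project the same AddPredictionEnginePool call goes into ConfigureServices.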

🧱 Building a Preprocessing Microservice

If your preprocessing is heavy or shared across teams/models, you can split it into its own microservice:

- Load and apply the saved pipeline

- Return clean feature vectors as JSON

- Let multiple models or systems use the same consistent logic

✅ This improves reusability, compliance, and traceability across the org.
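The core of such a service can be sketched as a small class that loads the saved pipeline once and returns the Features vector for a single input. The FeatureService and PrepInput names are hypothetical, and the sketch assumes the saved pipeline produces a float vector column named Features.

```csharp
using System.Linq;
using Microsoft.ML;
using Microsoft.ML.Data;

// Hypothetical request shape; it must match the columns the pipeline expects.
public class PrepInput
{
    public float YearsExperience { get; set; }
    public string JobTitle { get; set; }
}

public sealed class FeatureService
{
    private readonly MLContext _mlContext = new MLContext();
    private readonly ITransformer _pipeline;

    public FeatureService(string pipelinePath)
    {
        // Load the fitted preprocessing pipeline once, at construction time.
        _pipeline = _mlContext.Model.Load(pipelinePath, out _);
    }

    public float[] Featurize(PrepInput input)
    {
        // Wrap the single request in an IDataView and apply the saved pipeline.
        IDataView single = _mlContext.Data.LoadFromEnumerable(new[] { input });
        IDataView transformed = _pipeline.Transform(single);

        // Read back the one resulting feature vector.
        return transformed.GetColumn<float[]>("Features").First();
    }
}
```

An API endpoint can return the float[] directly, and the default JSON serializer will emit it as a JSON array.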

🧪 Versioning and Auditing Your Pipeline

A saved ML.NET model file captures the fitted pipeline as it existed when it was saved, but it does not track versions of your pipeline for you. For true enterprise-grade version control:

| Strategy | Benefit |
|---|---|
| Save pipeline + model as a versioned .zip | Enables rollback |
| Store in Git or model registry | Track changes over time |
| Log input/output schema to file | Enables postmortems and audits |
| Include AssemblyVersion or pipeline hash in metadata | Proves consistency across environments |
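The first and last rows of this table can be sketched with plain .NET file APIs: copy the saved model to a versioned filename and record its SHA-256 hash next to it. The ModelVersioning class, the naming scheme, and the .sha256 sidecar file are assumptions for illustration; Convert.ToHexString requires .NET 5 or later.

```csharp
using System;
using System.IO;
using System.Security.Cryptography;

public static class ModelVersioning
{
    // Copies the model to "<name>_<version>.zip" and writes its SHA-256
    // hash to "<name>_<version>.zip.sha256" in the same folder.
    public static (string VersionedPath, string Sha256) Stamp(string modelPath, string version)
    {
        string fullPath = Path.GetFullPath(modelPath);
        string directory = Path.GetDirectoryName(fullPath) ?? ".";
        string name = Path.GetFileNameWithoutExtension(fullPath);
        string versionedPath = Path.Combine(directory, $"{name}_{version}.zip");

        // Refuse to overwrite an existing version so history stays immutable.
        File.Copy(fullPath, versionedPath, overwrite: false);

        string hash;
        using (var sha = SHA256.Create())
        using (var stream = File.OpenRead(versionedPath))
        {
            hash = Convert.ToHexString(sha.ComputeHash(stream));
        }

        File.WriteAllText(versionedPath + ".sha256", hash);
        return (versionedPath, hash);
    }
}
```

A call such as ModelVersioning.Stamp("trained_model.zip", "2024-06-01") produces a file that can be checked into a model registry, and comparing hashes across environments shows whether the same artifact was deployed.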

🔁 Handling Changes Over Time

Let’s say your HR department adds a new JobTitle value that didn’t exist during training.

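If you used one-hot encoding, the fitted encoder has no slot for the new value. ML.NET’s key mapping generally treats an unseen category as missing, so the row is encoded as all zeros rather than raising an error. That can lead to:

- Feature vectors that silently drop the job-title signal

- Less reliable predictions for the new category

- Drift that goes unnoticed unless you check for it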

✅ Solution:

- Use OneHotHashEncoding() (hash-based)

- Or retrain the model and resave the pipeline

- Or catch input schema drift in code and return a validation error

ML.NET doesn’t fix schema drift for you — but it makes it detectable and testable, which is often all you need.
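Two of these options can be sketched briefly: a hash-based encoder that always has a slot for new values, and a simple check that flags job titles not seen during training. The CategoryDrift class, the JobTitleEncoded column name, and the choice of 10 hash bits are hypothetical, and the set of training titles is assumed to be captured at training time.

```csharp
using System.Collections.Generic;
using Microsoft.ML;

public static class CategoryDrift
{
    // Hash-based encoding: any string, seen or unseen, maps to one of
    // 2^numberOfBits slots, at the cost of possible collisions.
    public static IEstimator<ITransformer> HashEncodeJobTitle(MLContext mlContext) =>
        mlContext.Transforms.Categorical.OneHotHashEncoding(
            "JobTitleEncoded", "JobTitle", numberOfBits: 10);

    // Validation: returns false for values that were not present in training,
    // so the caller can log, alert, or reject the request.
    public static bool IsKnown(ISet<string> trainingTitles, string jobTitle) =>
        jobTitle != null && trainingTitles.Contains(jobTitle);
}
```

For example, with a training set containing only "Software Engineer" and "Project Manager", IsKnown returns false for "Data Steward", which is the signal to log the value or schedule a retrain.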

🧠 Summary: Integration Best Practices

| Task | ML.NET Support |

|---|---|

| Save pipeline + model together | ✅ |

| Load pipeline in production | ✅ |

| Serve via ASP.NET Core | ✅ |

| Use DI-friendly prediction engines | ✅ |

| Version and rollback pipelines | ✅ (manual versioning) |

| Detect schema changes | ✅ (with inspection tools) |

| Microservice architecture | ✅ (optional) |

💡 Pro Tip: Separation of Concerns

For cleaner architecture, split your responsibilities:

- Data ingestion & validation → Upstream (.NET controller or queue)

- Preprocessing & vectorization → ML.NET pipeline

- Prediction logic → Model + scoring service

- Logging & monitoring → Custom middleware or telemetry layer

This makes your AI system more modular, testable, and enterprise-ready.

In the next section, we’ll summarize everything with an Enterprise AI Prep Checklist — so your team knows exactly what to verify before deploying any data pipeline or model.

✅ Section 12: Enterprise Checklist for AI Data Prep in .NET

A Field-Tested Readiness Guide for Production-Grade AI Projects

Enterprise AI isn’t about hacks, experiments, or Jupyter notebooks with undocumented logic. It’s about repeatable systems, controlled inputs, compliant outputs, and production stability.

If you’re leading or supporting an AI project using ML.NET, this checklist helps ensure that your data preparation pipeline is:

- Secure

- Version-controlled

- Reusable

- Production-ready

- Maintainable by others

Here’s a pragmatic list that technical leaders, senior developers, and architects can walk through before deploying or approving any model.

🧾 Data Readiness

| Item | Details | Status |

|---|---|---|

| ✅ Data schema is well-defined | All columns typed and documented | ☐ |

| ✅ Missing values are explicitly handled | Via SQL, C#, or ReplaceMissingValues() | ☐ |

| ✅ Categorical variables are encoded | Prefer OneHotEncoding or OneHotHashEncoding | ☐ |

| ✅ Numeric variables are normalized or scaled | Use NormalizeMinMax() or NormalizeMeanVariance() | ☐ |

| ✅ Free-text fields are featurized | Use FeaturizeText() or custom vectorizers | ☐ |

| ✅ Feature columns are concatenated | Concatenate("Features", ...) used consistently | ☐ |

🧩 Pipeline Structure

| Item | Details | Status |

|---|---|---|

| ✅ Data prep steps are in an ML.NET pipeline | All logic is chainable and testable | ☐ |

| ✅ Training and inference pipelines match | No duplicated or diverged logic | ☐ |

| ✅ Pipeline is modular | Reusable in different projects or services | ☐ |

| ✅ Caching is applied appropriately | Use AppendCacheCheckpoint() for large training sets | ☐ |

💾 Model + Pipeline Versioning

| Item | Details | Status |

|---|---|---|

| ✅ Model + prep pipeline are saved together | Use mlContext.Model.Save() | ☐ |

| ✅ Each saved model has a unique version | Timestamp or Git hash in filename or metadata | ☐ |

| ✅ Previous models can be restored easily | Stored safely with rollback process defined | ☐ |

| ✅ Input/output schema is documented | For each model version | ☐ |

🚀 Deployment Readiness

| Item | Details | Status |

|---|---|---|

| ✅ Model is loaded once at app startup | Avoid per-request loading | ☐ |

| ✅ PredictionEngine or Transform() logic is DI-ready | PredictionEngine is not thread-safe: inject a PredictionEnginePool or a shared ITransformer into services | ☐ |

| ✅ Inputs are validated before transformation | Prevent runtime errors due to schema mismatch | ☐ |

| ✅ Outputs are logged or traced | For observability and compliance | ☐ |

🔁 Schema Change Monitoring

| Item | Details | Status |

|---|---|---|

| ✅ You’ve defined acceptable input ranges | Document min/max and valid categorical values | ☐ |

| ✅ Pipeline uses encoding tolerant of new values | Prefer hash encoding if category drift is common | ☐ |

| ✅ System logs or alerts on unseen inputs | E.g., new JobTitle not in training set | ☐ |
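
As a rough illustration of the last row, the sketch below flags category values that were not present at training time. The `knownJobTitles` set, the sample inputs, and the `FindUnseen` helper are all hypothetical; in a real service the known values would be exported alongside the model and the warning would go to your logger or alerting system.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical set of JobTitle values captured when the model was trained.
var knownJobTitles = new HashSet<string>(StringComparer.OrdinalIgnoreCase)
{
    "Engineer", "Analyst", "Manager"
};

// Hypothetical values arriving at prediction time.
var incoming = new[] { "Engineer", "Prompt Designer", "analyst", "Data Steward" };

List<string> unseen = FindUnseen(incoming, knownJobTitles);
foreach (string value in unseen)
{
    // Replace Console.WriteLine with your logging or alerting hook.
    Console.WriteLine($"WARN: unseen JobTitle '{value}' (not in training set)");
}

// Returns the distinct values that are not in the known set.
static List<string> FindUnseen(IEnumerable<string> values, ISet<string> known) =>
    values.Where(v => !known.Contains(v)).Distinct().ToList();
```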

🧠 Team and Process Hygiene

| Item | Details | Status |

|---|---|---|

| ✅ Pipeline logic is stored in Git | No hardcoded “notebook logic” | ☐ |

| ✅ All transformations are testable in isolation | Each step has test coverage or visual inspection | ☐ |

| ✅ Devs and DBAs agree on data boundaries | Split of responsibilities is documented | ☐ |

| ✅ Project has onboarding documentation | New team members can understand the prep pipeline | ☐ |

🛡️ Compliance, Privacy, and Risk

| Item | Details | Status |

|---|---|---|

| ✅ Sensitive fields are dropped or masked | SSNs, emails, PII not fed to model | ☐ |

| ✅ Data flow complies with industry standards | HIPAA, GDPR, or internal governance | ☐ |

| ✅ Feature importance is monitored | Prevents use of discriminatory variables | ☐ |

| ✅ Data lineage is traceable | You can reproduce input → features → prediction | ☐ |

📌 Executive Summary

A production-grade ML.NET pipeline should be:

✅ Deterministic — Same input always leads to the same output

✅ Versioned — Every change is logged, rollback-ready

✅ Modular — Reusable across projects

✅ Auditable — Logs, schema, and logic are all traceable

✅ Aligned with model use — Preprocessing perfectly matches the trained model

✅ Safe — Resilient to bad inputs, schema changes, and usage drift

💡 Pro Tip: Turn This Checklist Into a CI Gate

For larger teams, consider turning this checklist into a CI/CD gate, where:

- Pipelines must pass a test suite

- Schemas are validated on pull request

- Changes to data prep require code review

- Models are version-tagged and deployed via pipeline

This turns your AI system from “project” into “infrastructure.”

In the next section, we’ll give readers a curated list of resources and learning paths to continue leveling up their ML.NET data preparation skills.

📚 Section 13: Resources and Learning Path

Where to Go Next to Level Up Your ML.NET Data Preparation Skills

Mastering data preparation in ML.NET unlocks more than just a better model — it gives .NET developers and DBAs a seat at the AI table. But the learning never stops. The ecosystem is growing, tools evolve, and best practices deepen as more real-world applications come online.

This section provides a curated, battle-tested set of official documentation, practical tutorials, sample projects, and community resources that will help you and your team sharpen your edge.

📘 Official Microsoft Documentation

These are the authoritative sources straight from Microsoft:

| Resource | Why It’s Useful |

|---|---|

| ML.NET Documentation | The official hub for all things ML.NET |

| ML.NET API Reference | Full API docs with definitions and parameters |

| Model Builder | GUI tool that auto-generates pipelines you can reverse-engineer |

| ML.NET GitHub Repository | Source code, discussions, issues, and updates |

| ML.NET Roadmap | See what’s coming next from the dev team |

🧪 Sample Projects and Templates

Use these to experiment or bootstrap your own systems:

| Resource | Description |

|---|---|

| ML.NET Samples GitHub | Dozens of end-to-end projects: regression, classification, text, and more |

| Customer Segmentation Sample | Real-world scenario with full pipeline code |

| ML.NET CLI Tool | Auto-generates models and pipelines from the command line |

| .NET AI Templates | Create new projects with pre-built ML.NET structure via dotnet new |

🧠 Recommended Books

While ML.NET books are still rare, these resources help with adjacent topics:

| Book | Why It’s Helpful |

|---|---|

| Machine Learning with ML.NET by Jarred Capellman | One of the few ML.NET-specific books (intro-level, but practical) |

| AI Simplified: Harnessing Microsoft Technologies… by Keith Baldwin | Focused on .NET-first AI adoption and real-world applications |

| Programming ML.NET by Nish Anil (work-in-progress online) | Authoritative guidance from a Microsoft ML.NET engineer |

| Hands-On Machine Learning with C# | Great for .NET developers new to AI (often pairs ML.NET with custom logic) |

🗣️ Community and Support

ML.NET has a growing (if niche) community. Here’s where to plug in:

| Community | Why It Matters |

|---|---|

| .NET Machine Learning on Discord | Ask ML.NET-specific questions in real time |

| Stack Overflow – ML.NET | Browse common issues and solutions |

| ML.NET Community Standups (YouTube) | Monthly updates, demos, and roadmap previews |

| LinkedIn #mlnet and #dotnet | Follow practitioners, thought leaders, and real-world stories |

| Twitter/X #mlnet | Stay current on tool updates and releases |

🧭 Suggested Learning Path

Want to go from “aware” to “advanced”? Follow this progression:

Beginner

- ✅ Read Getting Started with ML.NET

- ✅ Run your first pipeline using Model Builder

- ✅ Load a CSV, normalize a column, and fit a regression model

Intermediate

- ✅ Manually create a pipeline with IDataView and transformers

- ✅ Apply one-hot encoding and text featurization

- ✅ Save and load a model with full pipeline

- ✅ Embed prediction into a .NET Core API

Advanced

- ✅ Build a custom ITransformer

- ✅ Create test coverage for preprocessing logic

- ✅ Track pipeline versions with Git and CI

- ✅ Architect multi-stage pipelines across teams or services

🧠 For Architects and Decision Makers

If you’re building AI centers of excellence, you’ll also want:

| Resource | Value |

|---|---|

| Azure MLOps + ML.NET Integration | Strategy for scaling across teams and environments |

| AI Governance and Fairness Tools (Microsoft) | Ethical and legal frameworks for enterprise AI |

| ML.NET in Enterprise Series (coming soon on AInDotNet.com) | Deep dives into production patterns for regulated industries |

🎁 Bonus: Free Tools and Helpers

| Tool | Description |

|---|---|

| ML.NET CLI | Command-line model trainer for rapid prototyping |

| Netron | Visualize ONNX/ML.NET models and pipeline graphs |

| ML.NET Notebooks | C# notebooks for inline experimentation (try in VS Code) |

In the next (and final) major section, we’ll tackle the FAQ — answering the most common and critical questions professionals have about ML.NET data prep, deployment, and scaling.

❓ Section 14: FAQ – ML.NET Data Preparation

15 Practical Questions Answered for Developers, Architects, and AI Leads

What’s the difference between ETL and ML data prep?

ETL (Extract, Transform, Load) is for storing, reporting, and normalizing business data.

ML data prep focuses on formatting data for machine learning algorithms. ML prep includes encoding, normalization, vectorization, and label mapping — tasks that traditional ETL tools aren’t built for.

How do I normalize numeric data in ML.NET?

Use .NormalizeMinMax("ColumnName") or .NormalizeMeanVariance("ColumnName") within a pipeline. These scale your numeric features so they don’t dominate others due to large magnitude.
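
A minimal sketch of both normalizers, assuming the Microsoft.ML NuGet package is referenced. The `HouseRow` class, its column names, and the sample values are made up for illustration.

```csharp
using System;
using System.Linq;
using Microsoft.ML;
using Microsoft.ML.Data;

var mlContext = new MLContext(seed: 0);

// Hypothetical sample rows; in practice these come from your data source.
var rows = new[]
{
    new HouseRow { SquareFeet = 800f,  Age = 5f },
    new HouseRow { SquareFeet = 1600f, Age = 20f },
    new HouseRow { SquareFeet = 2400f, Age = 35f },
};
IDataView data = mlContext.Data.LoadFromEnumerable(rows);

// Write scaled values to new columns so the raw values stay available.
var pipeline = mlContext.Transforms.NormalizeMinMax("SquareFeetScaled", "SquareFeet")
    .Append(mlContext.Transforms.NormalizeMeanVariance("AgeScaled", "Age"));

IDataView transformed = pipeline.Fit(data).Transform(data);

float[] scaled = transformed.GetColumn<float>("SquareFeetScaled").ToArray();
Console.WriteLine(string.Join(", ", scaled));

public class HouseRow
{
    public float SquareFeet { get; set; }
    public float Age { get; set; }
}
```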

Can I preprocess SQL Server data directly with ML.NET?

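Yes. You can pull data from SQL Server using ADO.NET, Entity Framework, or a CSV export. Once the rows are in memory or in a file, use mlContext.Data.LoadFromEnumerable() or LoadFromTextFile() to bring them into ML.NET. ML.NET also provides a DatabaseLoader, created with mlContext.Data.CreateDatabaseLoader<T>(), that reads rows through a standard ADO.NET provider.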
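
The sketch below shows one way the ADO.NET route could look. To stay runnable without a database, it uses an in-memory `DataTable` reader as a stand-in for a `SqlDataReader`; the `CustomerRow` class, column names, and `ReadCustomers` helper are assumptions for illustration. The rows are materialized into a list first because `LoadFromEnumerable` reads lazily, and the reader will already be closed by then.

```csharp
using System;
using System.Collections.Generic;
using System.Data;
using System.Linq;
using Microsoft.ML;

// Stand-in for a SQL Server result set (hypothetical columns and values).
var table = new DataTable();
table.Columns.Add("Age", typeof(int));
table.Columns.Add("Income", typeof(decimal));
table.Rows.Add(34, 52000m);
table.Rows.Add(51, 87000m);

// With a real database this would be the reader returned by ExecuteReader().
using IDataReader reader = table.CreateDataReader();
List<CustomerRow> rows = ReadCustomers(reader).ToList();

var mlContext = new MLContext();
IDataView data = mlContext.Data.LoadFromEnumerable(rows);
string columns = string.Join(", ", data.Schema.Select(c => c.Name));
Console.WriteLine($"Loaded {rows.Count} rows with columns: {columns}");

// Maps each record to a typed row, converting SQL numeric types to float.
static IEnumerable<CustomerRow> ReadCustomers(IDataReader reader)
{
    while (reader.Read())
    {
        yield return new CustomerRow
        {
            Age = Convert.ToSingle(reader["Age"]),
            Income = Convert.ToSingle(reader["Income"]),
        };
    }
}

public class CustomerRow
{
    public float Age { get; set; }
    public float Income { get; set; }
}
```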

Should I replace missing values before or after encoding?

Always replace missing values before encoding or scaling. Transformers like OneHotEncoding or NormalizeMinMax assume valid, non-null inputs.
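
A small sketch of that ordering, assuming missing numeric values arrive as `float.NaN`. The `IncomeRow` class and its values are hypothetical, and mean replacement is only one of the available replacement modes.

```csharp
using System;
using System.Linq;
using Microsoft.ML;
using Microsoft.ML.Data;
using Microsoft.ML.Transforms;

var mlContext = new MLContext(seed: 0);

// Hypothetical rows; the second one has a missing Income.
var rows = new[]
{
    new IncomeRow { Income = 40000f },
    new IncomeRow { Income = float.NaN },
    new IncomeRow { Income = 60000f },
};
IDataView data = mlContext.Data.LoadFromEnumerable(rows);

// Step 1: fill missing values. Step 2: scale the filled column.
var pipeline = mlContext.Transforms
    .ReplaceMissingValues("IncomeFilled", "Income",
        replacementMode: MissingValueReplacingEstimator.ReplacementMode.Mean)
    .Append(mlContext.Transforms.NormalizeMinMax("IncomeScaled", "IncomeFilled"));

IDataView transformed = pipeline.Fit(data).Transform(data);

float[] filled = transformed.GetColumn<float>("IncomeFilled").ToArray();
float[] scaled = transformed.GetColumn<float>("IncomeScaled").ToArray();
Console.WriteLine("Filled: " + string.Join(", ", filled));
Console.WriteLine("Scaled: " + string.Join(", ", scaled));

public class IncomeRow
{
    public float Income { get; set; }
}
```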

Can I use ML.NET data prep in an ASP.NET Core API?

Absolutely. Load your trained model with preprocessing steps baked in, and expose it via a controller using PredictionEngine<TInput, TOutput> or via Transform() in batch APIs.
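
One possible wiring, sketched as an ASP.NET Core minimal API. It assumes the Microsoft.Extensions.ML package, which provides `PredictionEnginePool` (a single `PredictionEngine` is not thread-safe, so the pool is the usual choice for web apps). The model name, the `model.zip` path, and the `ModelInput`/`ModelOutput` shapes are placeholders that must match your own trained model.

```csharp
using Microsoft.Extensions.ML;
using Microsoft.ML.Data;

var builder = WebApplication.CreateBuilder(args);

// Registers a pool of prediction engines backed by the saved model file.
// "ChurnModel" and "model.zip" are hypothetical names.
builder.Services.AddPredictionEnginePool<ModelInput, ModelOutput>()
    .FromFile(modelName: "ChurnModel", filePath: "model.zip", watchForChanges: true);

var app = builder.Build();

// The pool is resolved from DI; the input is bound from the JSON body.
app.MapPost("/predict", (PredictionEnginePool<ModelInput, ModelOutput> pool, ModelInput input) =>
    Results.Ok(pool.Predict(modelName: "ChurnModel", example: input)));

app.Run();

// Hypothetical input schema; property names must match the training columns.
public class ModelInput
{
    public float Age { get; set; }
    public string JobTitle { get; set; } = "";
}

// Hypothetical output schema for a binary classifier.
public class ModelOutput
{
    [ColumnName("PredictedLabel")]
    public bool Prediction { get; set; }
    public float Score { get; set; }
}
```

Because the saved model carries its preprocessing steps, the controller code never repeats the encoding or scaling logic.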

How do I handle unseen categories at prediction time?

Use OneHotHashEncoding() instead of traditional one-hot. Hash encoding gracefully handles new values without requiring model retraining, though it may introduce minor collision risks.
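
The sketch below fits a hash encoder on two job titles and then transforms a title it has never seen. The `Employee` class and the values are hypothetical. The point to notice is that the output vector width is set by `numberOfBits`, not by the categories present in training, so a new value still maps to a slot.

```csharp
using System;
using System.Linq;
using Microsoft.ML;
using Microsoft.ML.Data;

var mlContext = new MLContext(seed: 0);

// Hypothetical training data with two known categories.
IDataView training = mlContext.Data.LoadFromEnumerable(new[]
{
    new Employee { JobTitle = "Engineer" },
    new Employee { JobTitle = "Analyst" },
});

// Fewer bits means a smaller vector but a higher chance of collisions.
var pipeline = mlContext.Transforms.Categorical.OneHotHashEncoding(
    outputColumnName: "JobTitleEncoded",
    inputColumnName: "JobTitle",
    numberOfBits: 8);

ITransformer model = pipeline.Fit(training);

// A category that was not in the training set.
IDataView unseen = mlContext.Data.LoadFromEnumerable(new[]
{
    new Employee { JobTitle = "Prompt Designer" },
});

float[] encoded = model.Transform(unseen)
    .GetColumn<float[]>("JobTitleEncoded")
    .First();

Console.WriteLine($"Vector length: {encoded.Length}, non-zero slots: {encoded.Count(v => v != 0f)}");

public class Employee
{
    public string JobTitle { get; set; } = "";
}
```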

Can I featurize free text in ML.NET?

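Yes. Use mlContext.Transforms.Text.FeaturizeText("Features", "TextColumn") to convert text into a numeric feature vector. Under the hood it normalizes and tokenizes the text and extracts word and character n-grams. Stop word removal is configurable through TextFeaturizingEstimator.Options, so check the options if you rely on it rather than assuming it is on by default.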
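
A minimal sketch of text featurization with default settings. The `Review` class and its comments are invented sample data.

```csharp
using System;
using System.Linq;
using Microsoft.ML;
using Microsoft.ML.Data;

var mlContext = new MLContext(seed: 0);

// Hypothetical free-text rows.
IDataView data = mlContext.Data.LoadFromEnumerable(new[]
{
    new Review { Comment = "Great support and fast delivery" },
    new Review { Comment = "Delivery was slow and support never replied" },
});

// Turns the Comment column into a numeric vector column.
var pipeline = mlContext.Transforms.Text.FeaturizeText("CommentFeatures", "Comment");

IDataView transformed = pipeline.Fit(data).Transform(data);

float[] first = transformed.GetColumn<float[]>("CommentFeatures").First();
Console.WriteLine($"Feature vector length: {first.Length}");

public class Review
{
    public string Comment { get; set; } = "";
}
```

The vector length depends on the vocabulary seen during `Fit()`, which is one more reason to save the fitted pipeline rather than rebuild it at inference time.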

Can I reuse my data prep pipeline during inference?

Yes. When you call .Fit() and save the model with mlContext.Model.Save(), the entire pipeline — including data transforms — is preserved and can be reloaded in production.
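
A short round-trip sketch: fit a preprocessing pipeline, save it with its input schema, load it back, and apply it. The `Payment` class, the column names, and the temp-file path are assumptions for illustration; a real pipeline would usually end with a trainer as well.

```csharp
using System;
using System.IO;
using System.Linq;
using Microsoft.ML;
using Microsoft.ML.Data;

var mlContext = new MLContext(seed: 0);

// Hypothetical training rows.
IDataView data = mlContext.Data.LoadFromEnumerable(new[]
{
    new Payment { Amount = 10f },
    new Payment { Amount = 250f },
    new Payment { Amount = 1000f },
});

var pipeline = mlContext.Transforms.NormalizeMinMax("AmountScaled", "Amount");
ITransformer model = pipeline.Fit(data);

// Save the fitted transforms together with the input schema.
string path = Path.Combine(Path.GetTempPath(), "prep-pipeline.zip");
mlContext.Model.Save(model, data.Schema, path);

// Later (for example in a scoring service): load and reuse as-is.
ITransformer loaded = mlContext.Model.Load(path, out DataViewSchema inputSchema);
float[] scaled = loaded.Transform(data).GetColumn<float>("AmountScaled").ToArray();

Console.WriteLine("Expected input columns: " + string.Join(", ", inputSchema.Select(c => c.Name)));
Console.WriteLine("Scaled: " + string.Join(", ", scaled));

public class Payment
{
    public float Amount { get; set; }
}
```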

What if my input schema changes?

ML.NET will throw schema mismatch errors at runtime. To handle this gracefully:

- Prefer hash encoding when category growth is expected

- Use schema inspection before inference

- Write defensive code to detect column drift
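
Schema inspection can be as simple as comparing the schema the model was trained on with the schema of the incoming data before calling `Transform()`. The sketch below is one way to do that. `FindSchemaProblems`, `ExpectedRow`, and `DriftedRow` are hypothetical names, and in practice the expected schema would come from the `out DataViewSchema` parameter of `mlContext.Model.Load()`.

```csharp
using System;
using System.Collections.Generic;
using Microsoft.ML;
using Microsoft.ML.Data;

var mlContext = new MLContext();

// Schemas derived from two row types; no data is needed for the comparison.
DataViewSchema expected = mlContext.Data.LoadFromEnumerable(Array.Empty<ExpectedRow>()).Schema;
DataViewSchema actual = mlContext.Data.LoadFromEnumerable(Array.Empty<DriftedRow>()).Schema;

List<string> problems = FindSchemaProblems(expected, actual);
foreach (string problem in problems)
{
    Console.WriteLine("Schema drift: " + problem);
}

// Reports columns that are missing or whose type changed.
static List<string> FindSchemaProblems(DataViewSchema expected, DataViewSchema actual)
{
    var problems = new List<string>();
    foreach (DataViewSchema.Column column in expected)
    {
        DataViewSchema.Column? match = actual.GetColumnOrNull(column.Name);
        if (match is null)
        {
            problems.Add($"missing column '{column.Name}'");
        }
        else if (!match.Value.Type.Equals(column.Type))
        {
            problems.Add($"column '{column.Name}' is {match.Value.Type}, expected {column.Type}");
        }
    }
    return problems;
}

// The shape the model was trained on (hypothetical).
public class ExpectedRow
{
    public float Age { get; set; }
    public string JobTitle { get; set; } = "";
}

// An upstream change: Age became text and JobTitle disappeared.
public class DriftedRow
{
    public string Age { get; set; } = "";
}
```

Running a check like this at the service boundary turns a runtime exception deep inside the pipeline into a clear, loggable validation failure.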

Does ML.NET support batching or streaming?

Yes. ML.NET pipelines are lazy and row-based, supporting streaming large datasets without loading everything into memory. For batch inference, use Transform() on an IDataView. For streaming, use row mappers or prediction engines in APIs.
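
For the batch case, a sketch along these lines shows `Transform()` on an `IDataView` followed by row-by-row enumeration. The `Order` and `ScoredOrder` classes are hypothetical. `Transform()` itself does no work up front; rows are computed as the enumerable is consumed.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using Microsoft.ML;

var mlContext = new MLContext(seed: 0);

// Hypothetical batch of rows; this could equally be a large file loaded lazily.
IDataView batch = mlContext.Data.LoadFromEnumerable(new[]
{
    new Order { Amount = 20f },
    new Order { Amount = 80f },
    new Order { Amount = 200f },
});

ITransformer model = mlContext.Transforms
    .NormalizeMinMax("AmountScaled", "Amount")
    .Fit(batch);

// Lazy: nothing is computed until the rows are enumerated.
IDataView scored = model.Transform(batch);

IEnumerable<ScoredOrder> results =
    mlContext.Data.CreateEnumerable<ScoredOrder>(scored, reuseRowObject: false);

List<ScoredOrder> materialized = results.ToList();
foreach (ScoredOrder row in materialized)
{
    Console.WriteLine($"{row.Amount} -> {row.AmountScaled}");
}

public class Order
{
    public float Amount { get; set; }
}

// Output columns are matched to properties by name.
public class ScoredOrder
{
    public float Amount { get; set; }
    public float AmountScaled { get; set; }
}
```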

Is ML.NET fast enough for enterprise data?