Most enterprise AI initiatives don’t fail because the model is weak.

They fail because the organization wasn’t execution-ready.

“Execution readiness” comes up constantly in strategy meetings, vendor presentations, and AI roadmaps, but it is rarely defined with precision. In practice it becomes a vague signal that a team feels prepared, not a measurable structural condition.

In enterprise AI, execution readiness is not about enthusiasm, tooling, or even talent.

It is about operational definition.

And without it, AI initiatives drift from strategic intent into partial, underperforming systems that never deliver measurable impact.

The Enterprise AI Strategy Execution Gap

The enterprise AI strategy execution gap appears when:

- Strategy is approved.

- Budget is allocated.

- Tools are selected.

- A team is assigned.

But no explicit work definition exists.

Execution readiness is what closes that gap.

Without it, teams move directly from aspiration to implementation — skipping the architectural layer that makes AI systems testable, measurable, and aligned.

What Execution Readiness Is Not

Before defining what execution readiness means in AI, it helps to clarify what it is not.

Execution readiness is not:

- Purchasing an AI platform

- Hiring data scientists

- Running a successful demo

- Integrating an LLM into an application

- Announcing an AI roadmap

All of those can exist without structural clarity.

And structural clarity is what determines whether AI initiatives succeed in production.

The Core Components of AI Execution Readiness

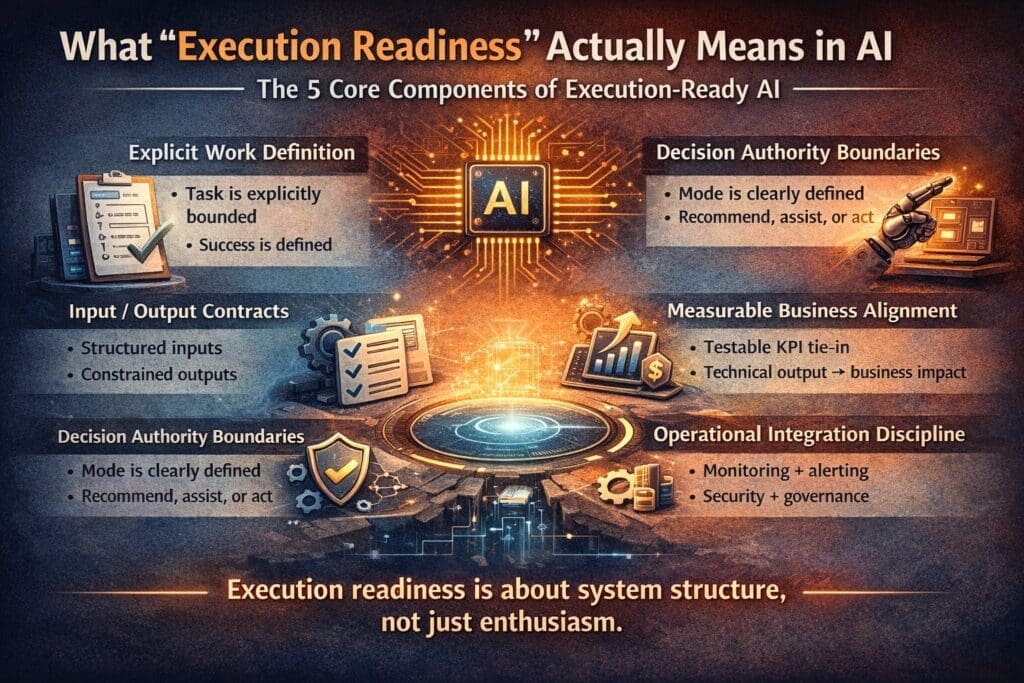

Execution readiness in enterprise AI consists of five structural elements.

If any one of these is missing, drift begins.

1. Explicit Work Definition

AI cannot execute abstract goals.

It can execute bounded tasks.

Execution readiness requires:

- A clearly defined operational task

- A known trigger condition

- A known output format

- A defined success threshold

For example:

Weak definition:

“Use AI to improve customer experience.”

Execution-ready definition:

“Classify inbound support emails into 8 defined categories with ≥90% precision and route automatically when confidence exceeds 0.8.”

The difference is not sophistication.

It is specificity.
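One way to make that specificity concrete is to write the definition down as data that code can check. The sketch below is illustrative only: the `WorkDefinition` type, the helper names, and the eight category names are assumptions, since the example above specifies only the count, the precision threshold, and the confidence cutoff.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical category names: the example only says "8 defined categories".
CATEGORIES = (
    "billing", "shipping", "returns", "account",
    "technical", "sales", "complaint", "other",
)


@dataclass(frozen=True)
class WorkDefinition:
    task: str                    # the bounded operational task
    trigger: str                 # the condition that starts the task
    categories: tuple[str, ...]  # the only outputs the task may produce
    min_precision: float         # success threshold
    route_confidence: float      # auto-route only above this confidence


EMAIL_TRIAGE = WorkDefinition(
    task="Classify inbound support emails",
    trigger="A new email arrives in the support inbox",  # assumed trigger
    categories=CATEGORIES,
    min_precision=0.90,
    route_confidence=0.8,
)


def should_auto_route(defn: WorkDefinition, category: str, confidence: float) -> bool:
    """Route automatically only when confidence exceeds the agreed cutoff."""
    if category not in defn.categories:
        raise ValueError(f"unknown category: {category!r}")
    return confidence > defn.route_confidence


def precision(predicted: list[str], actual: list[str], category: str) -> float | None:
    """Precision for one category: correct predictions / all predictions of it."""
    hits = [a for p, a in zip(predicted, actual) if p == category]
    if not hits:
        return None  # the category was never predicted, so precision is undefined
    return sum(a == category for a in hits) / len(hits)
```

With this in place, "are we meeting the definition?" becomes a comparison between `precision(...)` on a labeled sample and `EMAIL_TRIAGE.min_precision`, not a matter of opinion.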

2. Input and Output Contracts

Every production AI system must have:

- Structured inputs

- Constrained outputs

- Defined error states

- Confidence handling rules

Without contracts, AI outputs become subjective artifacts.

Subjective outputs cannot be reliably tested.

And untestable systems cannot be trusted.

Execution readiness means the system’s behavior is predictable within defined boundaries — even if the model itself is probabilistic.
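A minimal sketch of such a contract follows, assuming the model returns a dictionary with `label` and `confidence` keys. The type names, the label set, and the 0.8 cutoff are hypothetical choices for illustration, not a prescribed schema.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum

ALLOWED_LABELS = frozenset({"billing", "technical", "other"})  # hypothetical labels


class Outcome(Enum):
    OK = "ok"
    LOW_CONFIDENCE = "low_confidence"  # valid output, but below the cutoff
    INVALID_OUTPUT = "invalid_output"  # output violated the contract


@dataclass(frozen=True)
class ClassificationRequest:
    """Structured input: reject malformed requests before calling the model."""
    message_id: str
    body: str

    def __post_init__(self) -> None:
        if not self.message_id or not self.body.strip():
            raise ValueError("message_id and body must be non-empty")


@dataclass(frozen=True)
class ClassificationResult:
    outcome: Outcome
    label: str | None
    confidence: float | None


def parse_model_output(raw: dict, cutoff: float = 0.8) -> ClassificationResult:
    """Map raw model output onto one of the defined outcomes."""
    label = raw.get("label")
    confidence = raw.get("confidence")
    label_ok = isinstance(label, str) and label in ALLOWED_LABELS
    confidence_ok = (
        isinstance(confidence, (int, float))
        and not isinstance(confidence, bool)
        and 0.0 <= confidence <= 1.0
    )
    if not (label_ok and confidence_ok):
        return ClassificationResult(Outcome.INVALID_OUTPUT, None, None)
    if confidence <= cutoff:
        return ClassificationResult(Outcome.LOW_CONFIDENCE, label, float(confidence))
    return ClassificationResult(Outcome.OK, label, float(confidence))
```

The model stays probabilistic, but every caller sees exactly one of three outcomes, and each can be covered by an ordinary unit test.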

3. Decision Authority Boundaries

AI systems operate in one of three modes:

- Recommend

- Assist

- Act autonomously

Execution readiness requires explicit agreement on which mode applies.

If AI is intended to recommend but accidentally acts, trust erodes.

If AI is intended to act but requires constant manual override, value disappears.

Decision authority must be defined before deployment — not negotiated afterward.
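The agreed mode can be encoded as an explicit gate in code, so it cannot change by accident. This sketch assumes one plausible reading of the three modes: "recommend" never executes, "assist" executes only after human approval, and "act" executes directly. The names `AuthorityMode` and `dispatch` are hypothetical.

```python
from enum import Enum


class AuthorityMode(Enum):
    RECOMMEND = "recommend"  # assumed: surface a suggestion, never execute
    ASSIST = "assist"        # assumed: execute only after a human approves
    ACT = "act"              # assumed: execute without human approval


def dispatch(mode: AuthorityMode, action: str, human_approved: bool = False) -> str:
    """Return what the system is permitted to do with a proposed action."""
    if mode is AuthorityMode.RECOMMEND:
        return f"suggest:{action}"
    if mode is AuthorityMode.ASSIST:
        return f"execute:{action}" if human_approved else f"await_approval:{action}"
    if mode is AuthorityMode.ACT:
        return f"execute:{action}"
    raise ValueError(f"unsupported mode: {mode!r}")
```

Because the mode is a required argument, a system configured to recommend has no code path that executes, whatever the model returns.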

4. Measurable Business Alignment

Technical metrics are not business metrics.

Execution readiness requires direct linkage between:

- Model outputs

- Operational workflow impact

- Business KPI movement

If an AI system improves response draft quality but does not reduce handling time, improve resolution rate, or increase customer satisfaction, the initiative will eventually be questioned.

Execution-ready systems are built with measurement hooks from the start.
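A measurement hook can be as small as recording, for each unit of work, whether the AI was involved and what the operational outcome was. The sketch below assumes a support-ticket workflow. The `TicketRecord` fields and the `kpi_summary` helper are hypothetical, and a raw comparison like this is only a starting point, not a controlled experiment.

```python
from __future__ import annotations

from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class TicketRecord:
    ticket_id: str
    ai_assisted: bool        # did the AI capability touch this ticket?
    handling_minutes: float  # operational workflow metric
    resolved: bool           # business outcome


def summarize(group: list[TicketRecord]) -> dict | None:
    """Aggregate the operational metrics for one group of tickets."""
    if not group:
        return None
    return {
        "count": len(group),
        "avg_handling_minutes": mean(r.handling_minutes for r in group),
        "resolution_rate": sum(r.resolved for r in group) / len(group),
    }


def kpi_summary(records: list[TicketRecord]) -> dict:
    """Compare AI-assisted tickets against the baseline on the same KPIs."""
    return {
        "ai_assisted": summarize([r for r in records if r.ai_assisted]),
        "baseline": summarize([r for r in records if not r.ai_assisted]),
    }
```

If the two groups show the same handling time and resolution rate, better draft quality alone is not moving the KPI, which is exactly the signal the paragraph above warns about.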

5. Operational Integration Discipline

AI does not operate in isolation.

It must integrate into:

- Authentication flows

- Logging systems

- Monitoring frameworks

- Alerting mechanisms

- Governance controls

- Security reviews

Execution readiness means the AI capability is treated like any other production-grade component — not a novelty feature bolted onto an application.

In Microsoft / .NET enterprise environments, this includes:

- Structured logging

- Exception handling

- Version control discipline

- CI/CD integration

- Security scanning

- Observability instrumentation

If AI bypasses these standards, it creates operational risk.
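The same standards apply on any stack. As a rough illustration, here is a sketch in Python using only the standard library, wrapping a model call with structured logging and exception handling. The wrapper name, the logger name, and the log field names are assumptions, and `classify` stands in for whatever model client is actually used.

```python
import json
import logging
import time
from typing import Callable


class JsonFormatter(logging.Formatter):
    """Emit each log record as one JSON object so log pipelines can parse it."""

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "level": record.levelname,
            "event": record.getMessage(),
            **getattr(record, "fields", {}),  # structured fields passed via extra=
        }
        if record.exc_info:
            payload["exception"] = self.formatException(record.exc_info)
        return json.dumps(payload)


logger = logging.getLogger("ai.classifier")  # hypothetical logger name
_handler = logging.StreamHandler()
_handler.setFormatter(JsonFormatter())
logger.addHandler(_handler)
logger.setLevel(logging.INFO)


def classify_with_telemetry(
    classify: Callable[[str], str], text: str, model_version: str
) -> str:
    """Call the model, logging outcome, latency, and version for every request."""
    started = time.perf_counter()
    try:
        label = classify(text)
    except Exception:
        # Log with the stack trace, then re-raise so callers and alerting see it.
        logger.exception(
            "classification_failed", extra={"fields": {"model_version": model_version}}
        )
        raise
    latency_ms = round((time.perf_counter() - started) * 1000, 2)
    logger.info(
        "classification_completed",
        extra={"fields": {
            "model_version": model_version,
            "label": label,
            "latency_ms": latency_ms,
        }},
    )
    return label
```

Recording the model version on every event is a deliberate choice here: it lets monitoring tie a change in behavior back to a specific deployment.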

Why Demos Create False Confidence

Demos create the illusion of execution readiness.

A demo proves:

- The model can produce useful output.

- The integration works in a controlled setting.

- The idea is conceptually viable.

It does not prove:

- Edge-case resilience

- Data variability tolerance

- Production monitoring stability

- Organizational workflow alignment

Execution readiness is not proven in a demo.

It is proven under sustained operational conditions.

The Hidden Risk of Skipping Execution Readiness

When execution readiness is skipped, enterprise AI initiatives typically fail quietly.

Common outcomes:

- Feature exists but adoption is low

- Engineers distrust output reliability

- Technical metrics improve but business KPIs do not

- Stakeholders describe success differently

This type of failure does not cause a crisis.

It causes stagnation.

And stagnation reduces future executive appetite for AI investment.

Why Engineers Care About Execution Readiness

From an engineering perspective, execution readiness is not philosophical.

It is practical.

Engineers need:

- Defined boundaries

- Testable behavior

- Clear failure modes

- Deterministic integration contracts

When those are missing, the AI initiative feels structurally unstable.

This is often interpreted as “resistance to AI.”

In reality, it is resistance to ambiguity.

Execution readiness reduces that ambiguity.

Execution Readiness vs. Model Capability

Many organizations attempt to compensate for structural weakness with stronger models.

They upgrade:

- From one LLM to another

- From open-source models to enterprise-tier APIs

- From basic prompts to agent frameworks

But model capability rarely fixes structural gaps.

If the task is poorly defined, a better model simply produces better-formatted ambiguity.

Execution readiness multiplies model capability.

Without readiness, capability is wasted.

How to Assess AI Execution Readiness

Before launching any AI initiative, ask:

- Is the work unit explicitly defined?

- Are inputs and outputs governed by explicit contracts?

- Is decision authority agreed upon?

- Are business KPIs directly tied to the AI capability?

- Is the AI integrated into production governance standards?

If the answer to any of these is unclear, the organization is not execution-ready.

And proceeding anyway increases the probability of quiet failure.
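The five questions can also be run as a simple gate in a project checklist. In this sketch any answer other than an explicit yes counts as a gap, matching the rule that "unclear" means not ready. The question keys and the function name are hypothetical.

```python
from __future__ import annotations

# Hypothetical keys, one per question in the list above.
READINESS_QUESTIONS = (
    "work_unit_defined",
    "io_contracts_defined",
    "decision_authority_agreed",
    "business_kpis_linked",
    "production_governance_integrated",
)


def assess_readiness(answers: dict[str, bool | None]) -> tuple[bool, list[str]]:
    """Return (ready, gaps). A missing, None, or False answer is a gap."""
    gaps = [q for q in READINESS_QUESTIONS if answers.get(q) is not True]
    return (not gaps, gaps)
```

For example, answering yes to four questions and leaving `decision_authority_agreed` as `None` returns not ready, with that question listed as the single gap.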

Execution Readiness Is Architectural, Not Cultural

Organizations often attempt to solve AI underperformance with:

- More training

- More alignment meetings

- More enthusiasm

Those are cultural interventions.

Execution readiness is architectural.

It is about system structure.

Structure determines whether AI remains aligned with business intent from deployment through scale.

The Strategic Advantage of Execution Discipline

Organizations that treat AI with execution discipline gain:

- Faster iteration cycles

- Higher trust among engineers

- Clearer ROI visibility

- Reduced implementation risk

- Sustainable scaling patterns

Execution readiness does not slow innovation.

It prevents rework.

And in enterprise environments, avoiding rework is often the highest-leverage advantage.

Final Thought: AI Success Is Boring by Design

Enterprise AI success rarely looks dramatic.

It looks structured.

It looks measurable.

It looks predictable.

Execution readiness is not exciting.

It is disciplined.

And in enterprise AI, discipline is what carries strategic intent intact from strategy through execution.

Without it, even the strongest models cannot deliver lasting impact.

Related Reading

This article is part of the February series on why AI fails between strategy and execution.

For the broader framework behind these failure patterns, see:

👉 Why AI Fails Between Strategy and Execution (And Why Most Teams Never See It Coming)

Frequently Asked Questions

What does “execution readiness” mean in AI?

Execution readiness in AI refers to an organization’s structural preparedness to move from strategy to production. It includes explicit task definition, input/output contracts, defined decision authority, measurable business alignment, and proper operational integration. Without these elements, AI initiatives drift before delivering measurable impact.

Why do enterprise AI projects fail without execution readiness?

Enterprise AI projects fail when teams move directly from high-level goals to implementation without defining bounded work units and measurable outcomes. This creates ambiguity, metric drift, and mistrust between executives and engineers. The failure often appears gradual rather than catastrophic.

Is execution readiness about tools or architecture?

Execution readiness is architectural, not tool-based. Purchasing AI platforms or integrating LLM APIs does not ensure readiness. Structural clarity — how the AI system operates within defined boundaries — determines whether the initiative succeeds in production.

How can organizations assess AI execution readiness?

Organizations can assess readiness by asking:

- Is the task explicitly defined?

- Are inputs and outputs structured?

- Is AI decision authority clearly defined?

- Are business KPIs directly tied to the AI capability?

- Is the system integrated into monitoring and governance processes?

If any of these is unclear, execution readiness is incomplete.

What is the difference between a successful AI demo and execution readiness?

A demo proves conceptual viability in a controlled environment. Execution readiness proves operational durability in real-world conditions. Production AI systems must handle variability, edge cases, monitoring, governance, and sustained usage — factors that demos rarely test.

Why do engineers prioritize execution readiness?

Engineers prioritize execution readiness because production systems require predictable behavior, defined failure states, and testable outputs. When AI initiatives lack structural clarity, engineers perceive operational risk. Their skepticism often signals missing specification rather than resistance to AI itself.

Does better model performance solve execution problems?

No. Stronger models cannot compensate for poorly defined tasks or unclear decision boundaries. Model capability amplifies structural clarity; it does not replace it. Execution readiness multiplies model effectiveness.

How does execution readiness improve AI ROI?

Execution readiness improves ROI by:

- Reducing rework

- Preventing misalignment

- Increasing trust in outputs

- Linking technical metrics to business KPIs

- Enabling scalable iteration

It ensures AI systems deliver measurable business value instead of technical novelty.

What role does governance play in AI execution readiness?

Governance ensures AI systems operate within defined compliance, security, and monitoring standards. Logging, auditability, alerting, and version control are essential components of execution readiness in enterprise environments.

Is execution readiness different for generative AI compared to predictive AI?

The core principles remain the same. However, generative AI raises the stakes for boundary definition and output constraints, because its outputs are open-ended text rather than a fixed label or score. This makes explicit contracts and decision authority even more critical in LLM-based systems.
