Most enterprise teams think about governance too late.

They treat governance like a final review step. Something that happens after the AI demo works, after the business sponsor gets excited, after users start asking for access, and after the project team has already made most of the important design decisions.

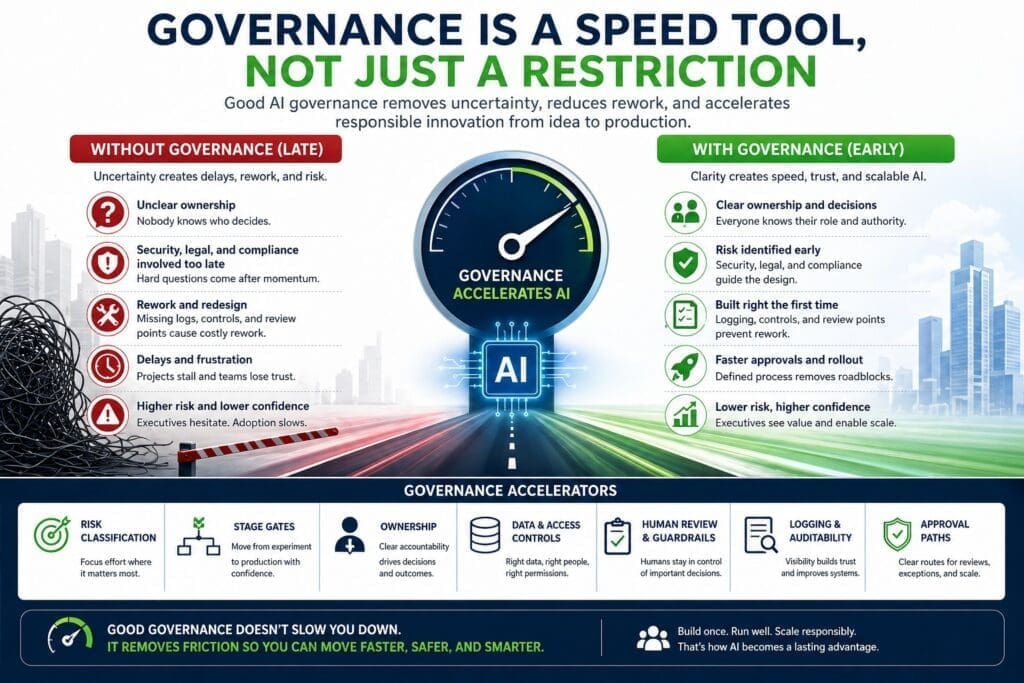

That is exactly why governance feels slow.

The real problem is not governance.

The real problem is late governance.

When governance shows up at the end, security, legal, compliance, infrastructure, and leadership are forced to review something they did not help shape. They have to ask hard questions after momentum already exists. That creates rework, delays, frustration, and internal politics.

But when governance is built into the AI process early, it does the opposite.

Good governance helps teams move faster because it defines the rules of the road before everyone is already arguing about direction.

For Microsoft-centric organizations adopting AI across Microsoft 365, Azure, Power Platform, SharePoint, Teams, SQL Server, custom .NET applications, and existing enterprise workflows, governance should not be treated as paperwork.

It should be treated as an operating system for moving AI from idea to production with less confusion, less rework, and more trust.

This is the key point:

AI governance is not just a restriction. Done correctly, governance is a speed tool.

That is why this topic fits the April 2026 content strategy for AInDotNet: start with the practical enterprise pain point, then introduce the framework, architecture, and operating model that solve it.

Why Governance Gets a Bad Reputation

Governance gets a bad reputation because many organizations experience it in the worst possible form.

Late.

Vague.

Reactive.

Political.

In many enterprise AI projects, the sequence looks like this:

- A department identifies an AI opportunity.

- A team builds a quick prototype.

- The demo looks promising.

- People get excited.

- The pilot expands.

- Security, legal, compliance, infrastructure, or enterprise architecture finally gets involved.

- The project gets slowed down by questions nobody answered earlier.

At that point, governance feels like a brake pedal.

The project team thinks:

“We already built this. Why are they blocking us now?”

Security thinks:

“Why were we not involved before this touched sensitive data?”

Legal thinks:

“Why are we reviewing risk after the use case was already announced?”

Compliance thinks:

“Why was recordkeeping not considered earlier?”

IT thinks:

“Who is supposed to support this when users rely on it?”

Executives think:

“Why did something that looked simple become complicated?”

That entire mess is predictable.

Governance gets blamed because governance is visible at the moment of delay.

But the delay was created earlier by the lack of governance.

Bad Governance Slows AI Down

Let’s be blunt.

Not all governance is good.

Bad governance absolutely slows organizations down.

Bad AI governance usually has several traits:

- It is unclear.

- It is inconsistent.

- It is overly centralized.

- It treats every AI use case as equally risky.

- It requires approval without defining approval criteria.

- It involves reviewers too late.

- It has no stage gates.

- It has no risk categories.

- It has no clear ownership model.

- It turns every project into a custom negotiation.

That kind of governance is not a speed tool.

It is bureaucracy.

A business unit should not need the same review process for a low-risk internal summarization assistant as it would need for an AI system that influences legal, financial, HR, medical, compliance, or customer-facing decisions.

If every project follows the same heavy process, low-risk innovation dies.

If every project follows no process, high-risk mistakes multiply.

Both approaches are broken.

The right answer is risk-based governance.

Good Governance Speeds AI Up

Good governance speeds AI adoption because it reduces uncertainty.

It answers common questions before every project has to rediscover them from scratch.

Questions like:

- Which AI use cases are acceptable for early experimentation?

- Which data sources can be used?

- Which data sources are restricted?

- Who must approve access to sensitive data?

- What must be logged?

- When is human review required?

- Who owns the workflow?

- Who owns technical support?

- What separates an experiment from a pilot?

- What separates a pilot from production?

- What evidence is needed for broader rollout?

- When do legal, security, and compliance need to be involved?

Without governance, every AI project has to negotiate those questions independently.

That is slow.

With governance, teams have a known path.

That is faster.

Good governance does not mean every team can do whatever it wants.

It means teams understand what they are allowed to do, what they are not allowed to do, and what they need to prove before they move forward.

That creates controlled speed.

Governance Creates Decision Clarity

Enterprise AI projects often stall because nobody knows who has the authority to say yes.

Or no.

Or not yet.

This creates one of the most common AI bottlenecks: decision ambiguity.

The project team is ready to move forward, but the organization has not defined:

- Who approves data access?

- Who approves user expansion?

- Who approves production deployment?

- Who accepts business risk?

- Who owns support after rollout?

- Who reviews legal exposure?

- Who determines whether human approval is required?

- Who decides whether an AI system can take action automatically?

When these decisions are undefined, teams wait.

Or worse, they move forward informally and create risk.

Governance solves this by assigning decision rights.

A practical AI governance model should define:

- Business owner

- Technical owner

- Data owner

- Security reviewer

- Compliance/legal reviewer when needed

- Support owner

- Executive sponsor for higher-risk systems

- Approval authority by risk level

Decision clarity is speed.

When people know who decides, projects move faster.

Governance Reduces Rework

Rework is one of the most expensive forms of enterprise AI waste.

It happens when a team builds something that later has to be redesigned because basic governance questions were not answered early.

Common examples include:

- The system accesses data it should not access.

- The workflow has no human review point.

- The architecture does not support logging.

- The model output is stored in a way that creates compliance concerns.

- The application has no rollback path.

- The pilot has no support owner.

- The system cannot explain what happened when a user challenges the output.

- The tool violates internal security or retention policies.

- The business sponsor wants production rollout before production criteria exist.

None of those problems are surprising.

They are foreseeable.

Governance prevents rework by forcing the right questions earlier.

That does not mean every answer must be perfect on day one.

It means the team designs with awareness of constraints before the project hardens around bad assumptions.

Early governance is cheaper than late redesign.

Governance Helps Teams Choose Better Use Cases

One of the fastest ways to slow down AI adoption is to start with the wrong use case.

Some AI use cases are politically risky, technically complex, data-sensitive, or hard to support. They may still be worth doing eventually, but they are poor starting points.

Good governance helps identify better starting points.

A strong early AI use case usually has:

- Clear business value

- Clear workflow ownership

- Limited data sensitivity

- Reversible outcomes

- Human review when needed

- Low regulatory exposure

- Measurable impact

- A realistic support path

- A path from pilot to production

These are bounded-risk use cases.

They help the organization learn, build trust, and create repeatable patterns.

Examples may include:

- Internal document summarization

- Draft generation with human approval

- Support ticket classification

- Meeting summary workflows

- Requirements analysis assistance

- Internal knowledge search

- Report drafting

- Workflow triage

- Data cleanup recommendations

These use cases are not risk-free.

But they are usually easier to govern than AI systems that autonomously affect customers, employees, contracts, finances, compliance decisions, or production data.

Governance speeds AI adoption by helping teams avoid starting with the hardest, riskiest, most politically fragile project.

Governance Separates Experiments From Production Systems

One of the biggest enterprise AI mistakes is treating all AI work as if it belongs in one category.

It does not.

An AI experiment is not a pilot.

A pilot is not an MVP.

An MVP is not a production system.

Each stage has different expectations.

| Stage | Purpose | Governance Focus |
|---|---|---|
| Experiment | Learn what is possible | Keep scope limited, avoid sensitive data, document assumptions |
| Pilot | Test with limited users | Define owner, usage boundaries, feedback loop, basic risk review |
| MVP | Validate real workflow value | Add logging, human review, support path, security review, success metrics |
| Production | Operational deployment | Formal approval, monitoring, escalation, change control, lifecycle management |

This kind of stage-gate model is one of the best ways to make governance practical.

It allows teams to move quickly during early learning while still requiring stronger controls before broader rollout.

Without stage gates, organizations tend to make one of two mistakes:

- They over-govern every experiment and kill learning.

- They under-govern serious systems and create operational risk.

Stage gates solve that problem.

They define what must be true before an AI effort graduates to the next level.

That is not bureaucracy.

That is construction order.

Governance Builds Executive Confidence

Executives do not want AI chaos.

They want progress they can defend.

That distinction matters.

A business leader may support AI experimentation, but once AI touches customers, regulated data, employee workflows, financial processes, legal risk, or operational decisions, the questions change.

Executives need to know:

- Are we using AI responsibly?

- Are we protecting sensitive data?

- Are we creating legal exposure?

- Are we improving productivity or just creating demos?

- Can we support these systems?

- Can we audit what happened?

- Do we know which use cases are safe to scale?

- Are we creating business value?

- Do we have a repeatable operating model?

Good governance gives leadership a reason to say yes.

Without governance, executives are asked to trust enthusiasm.

With governance, executives can trust a process.

That makes approvals easier.

It also makes funding easier because AI becomes less of a gamble and more of a managed business capability.

Governance Helps Security and Legal Become Partners Instead of Blockers

Security, legal, and compliance teams are often treated as obstacles in enterprise AI.

That is usually a symptom of bad process.

When those teams are involved late, they are forced to challenge decisions that have already been made.

That creates conflict.

When they are involved early, they can help define safer paths forward.

For example:

- Security can define acceptable data access patterns.

- Legal can identify use cases that require caution.

- Compliance can define retention and audit requirements.

- IT can define support and monitoring expectations.

- Enterprise architecture can guide integration patterns.

- Business leaders can define ownership and accountability.

This does not mean every AI experiment needs a full legal review.

That would be overkill.

It means governance should define when those teams need to be involved and what they are expected to review.

That changes the relationship from:

“Please approve this thing we already built.”

to:

“Help us define the safest and fastest path to build this correctly.”

That is a very different conversation.

Governance Improves User Trust

AI adoption depends on trust.

Users need to trust that the system is useful.

Managers need to trust that the workflow is controlled.

Executives need to trust that the risk is understood.

IT needs to trust that the system can be supported.

Security needs to trust that data exposure is controlled.

Legal and compliance need to trust that obligations are being respected.

Governance supports that trust by making the system inspectable.

A trustworthy AI system should have clear answers to questions like:

- What is the system supposed to do?

- What is it not supposed to do?

- What data does it use?

- Who can access it?

- How are outputs reviewed?

- What is logged?

- Who monitors errors?

- How are user complaints handled?

- How are bad outputs escalated?

- Who is accountable?

When those answers exist, adoption becomes easier.

When they do not, every mistake becomes evidence that AI is unsafe, unreliable, or poorly managed.

Logging Is a Governance Accelerator

Logging is often treated as a technical detail.

It is not.

For enterprise AI, logging is a governance accelerator.

If you cannot inspect what happened, you cannot govern the system.

Depending on the use case, logs may include:

- User request

- Input data references

- Prompt or instruction version

- AI response

- Model or service used

- System configuration

- Exceptions

- Latency

- User feedback

- Human override

- Escalation

- Approval action

- Version history

Logs help answer critical questions:

- Did the system behave as expected?

- Did the user provide bad input?

- Did the AI produce a risky response?

- Was the output reviewed?

- Was the issue escalated?

- Did a recent configuration change cause the problem?

- Are users trying to use the system outside its intended purpose?

Without logging, teams argue from memory.

With logging, teams can inspect evidence.

That speeds support, compliance review, debugging, improvement, and executive reporting.

The blunt rule is simple:

No logging, no trust. No trust, no scale.

Governance Makes AI More Scalable

A single AI pilot can survive on heroics.

A mature enterprise AI program cannot.

Early AI projects often depend on a few motivated people who understand the use case, the prototype, the data, and the risks.

That works temporarily.

It does not scale.

At some point, the organization needs repeatable patterns:

- Repeatable use case intake

- Repeatable risk classification

- Repeatable data review

- Repeatable approval rules

- Repeatable logging standards

- Repeatable human review patterns

- Repeatable support models

- Repeatable production criteria

- Repeatable rollback plans

- Repeatable measurement

Governance is how AI moves from isolated effort to organizational capability.

Without governance, every project is custom.

Custom does not scale well.

Governance creates templates, patterns, and decision rules that allow more teams to move faster without reinventing the entire process.

How Microsoft-Centric Organizations Should Think About AI Governance

For Microsoft-centric organizations, AI governance should not be abstract.

It should connect to the systems already running the business.

That may include:

- Microsoft 365

- SharePoint

- Teams

- OneDrive

- Azure

- Power Platform

- Power BI

- SQL Server

- Entra ID

- Custom .NET applications

- Existing DevOps pipelines

- Existing security policies

- Existing data governance practices

- Existing business workflows

The practical question is not simply:

“Are we using AI responsibly?”

The better question is:

“How do we make AI work safely inside our existing Microsoft enterprise environment?”

That means governance should address:

- Identity and access control

- Role-based permissions

- Data sensitivity

- SharePoint and Teams content exposure

- Azure resource ownership

- Power Platform environment strategy

- Custom .NET integration

- Logging and telemetry

- Auditability

- Human review

- Approval routing

- Support ownership

- Change control

- Monitoring

- Production promotion criteria

The governance model should match the environment.

Generic AI policy is not enough.

Enterprise AI needs operational governance.

A Practical AI Governance Speed Model

If your organization wants AI governance to accelerate progress instead of slow it down, start with a simple operating model.

1. Create an AI use case intake process

Every AI idea should be captured in a consistent format:

- Business problem

- Workflow owner

- Target users

- Expected value

- Data required

- Risk level

- Human review needs

- Production intent

- Success metrics

This prevents vague ideas from turning into random pilots.
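
One way to make the intake format concrete is a small record type with a completeness check. The sketch below is illustrative only: the class name `UseCaseIntake`, the helper `missing_fields`, and the sample values are assumptions, and the field names are simply the intake items listed above turned into identifiers.

```python
from dataclasses import dataclass, fields


@dataclass
class UseCaseIntake:
    """One AI idea, captured with the intake fields listed above."""
    business_problem: str
    workflow_owner: str
    target_users: str
    expected_value: str
    data_required: str
    risk_level: str
    human_review_needs: str
    production_intent: str
    success_metrics: str


def missing_fields(intake: UseCaseIntake) -> list[str]:
    """Return the names of intake fields that were left blank."""
    return [f.name for f in fields(intake) if not getattr(intake, f.name).strip()]


# Hypothetical idea with two questions still unanswered.
idea = UseCaseIntake(
    business_problem="Support tickets are routed by hand",
    workflow_owner="",
    target_users="Service desk analysts",
    expected_value="Faster first response",
    data_required="Ticket subject and body",
    risk_level="low",
    human_review_needs="Analyst can re-route any ticket",
    production_intent="Pilot first",
    success_metrics="   ",
)
print(missing_fields(idea))
```

An intake form that refuses to move forward while `missing_fields` is non-empty is a lightweight way to keep vague ideas from becoming pilots by default.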

2. Classify risk early

Define risk levels before teams build too much.

For example:

- Low risk: internal productivity support, no sensitive data, human-reviewed output

- Medium risk: department workflow support, some internal data, limited user group

- High risk: sensitive data, customer impact, regulated workflows, financial/legal/HR implications

- Critical risk: autonomous decisions, external consequences, high compliance exposure

Each risk level should have different review requirements.
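
The example tiers above can be expressed as a simple rule-based classifier. This is a minimal sketch under stated assumptions: the trait names are hypothetical, and the rule that any single trait from a tier is enough to place a use case in that tier is an assumption, not something the tiers themselves prescribe. Each organization would tune both.

```python
from dataclasses import dataclass


@dataclass
class UseCaseTraits:
    """Hypothetical yes/no traits drawn from the example risk levels."""
    department_workflow: bool = False        # goes beyond personal productivity
    uses_sensitive_data: bool = False
    affects_customers: bool = False
    regulated_workflow: bool = False
    financial_legal_hr_impact: bool = False
    acts_autonomously: bool = False
    external_consequences: bool = False
    high_compliance_exposure: bool = False


def classify_risk(traits: UseCaseTraits) -> str:
    """Return the highest tier whose traits are present (assumed rule)."""
    if (traits.acts_autonomously or traits.external_consequences
            or traits.high_compliance_exposure):
        return "critical"
    if (traits.uses_sensitive_data or traits.affects_customers
            or traits.regulated_workflow or traits.financial_legal_hr_impact):
        return "high"
    if traits.department_workflow:
        return "medium"
    return "low"


print(classify_risk(UseCaseTraits()))
print(classify_risk(UseCaseTraits(department_workflow=True, uses_sensitive_data=True)))
```

Checking the most severe tier first means a use case can never be classified lower than its riskiest trait.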

3. Define stage gates

Do not let projects drift from experiment to production informally.

Define gates for:

- Experiment

- Pilot

- MVP

- Production

Each gate should specify what evidence is required before moving forward.
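
A gate can be as simple as a checklist lookup. The sketch below assumes that the governance focus described earlier for each stage doubles as the evidence needed to enter that stage; the evidence names and the `check_gate` function are hypothetical.

```python
STAGES = ["experiment", "pilot", "mvp", "production"]

# Assumed evidence required to ENTER each stage (names are illustrative).
GATE_EVIDENCE = {
    "pilot": {"named_owner", "usage_boundaries", "feedback_loop", "basic_risk_review"},
    "mvp": {"logging", "human_review", "support_path", "security_review", "success_metrics"},
    "production": {"formal_approval", "monitoring", "escalation", "change_control",
                   "lifecycle_management"},
}


def check_gate(current_stage: str, evidence: set[str]) -> tuple[str, list[str]]:
    """Return the next stage and the evidence still missing to enter it."""
    position = STAGES.index(current_stage)
    if position == len(STAGES) - 1:
        raise ValueError("production is the final stage")
    next_stage = STAGES[position + 1]
    missing = sorted(GATE_EVIDENCE[next_stage] - evidence)
    return next_stage, missing


next_stage, missing = check_gate("experiment", {"named_owner", "feedback_loop"})
print(next_stage, missing)
```

An empty `missing` list means the project may graduate; anything else is the to-do list for the team, which keeps the gate conversation about evidence instead of opinion.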

4. Define ownership

Every AI initiative should have named owners:

- Business owner

- Technical owner

- Data owner

- Support owner

- Security reviewer

- Compliance/legal reviewer when needed

- Executive sponsor for higher-risk initiatives

No owner, no scale.
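
The ownership rule is easy to check mechanically. In this sketch the role keys mirror the list above, while the function name and the choice to treat "higher-risk" as the high and critical tiers are assumptions made for illustration.

```python
ALWAYS_REQUIRED = [
    "business_owner",
    "technical_owner",
    "data_owner",
    "support_owner",
    "security_reviewer",
]


def unfilled_roles(owners: dict[str, str], risk_level: str,
                   needs_compliance_review: bool = False) -> list[str]:
    """Return the required roles that have no named person yet."""
    required = list(ALWAYS_REQUIRED)
    if needs_compliance_review:
        required.append("compliance_legal_reviewer")
    if risk_level in ("high", "critical"):  # assumed meaning of "higher-risk"
        required.append("executive_sponsor")
    return [role for role in required if not owners.get(role)]


# Hypothetical initiative with only two owners named so far.
owners = {"business_owner": "A. Rivera", "technical_owner": "J. Chen"}
print(unfilled_roles(owners, risk_level="high"))
```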

5. Define logging requirements

Before rollout, decide what must be captured.

The higher the risk, the stronger the logging requirements.

At minimum, serious systems should capture enough information to troubleshoot, audit, improve, and defend the system.
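
As a rough illustration of what "enough information" can look like, the sketch below defines one structured log record per AI interaction and serializes it as a JSON line. The field selection, the class name `AiInteractionLog`, and the sample values are assumptions; a real system would pick fields based on its risk level and retention rules.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AiInteractionLog:
    """One auditable record of a single AI request and response."""
    user_id: str
    request: str
    input_refs: list[str]          # references to input data, not the data itself
    prompt_version: str
    model: str
    response: str
    latency_ms: int
    human_override: bool = False
    user_feedback: Optional[str] = None
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_log_line(record: AiInteractionLog) -> str:
    """Serialize the record as one JSON line with stable key order."""
    return json.dumps(asdict(record), sort_keys=True)


# Hypothetical values for illustration only.
entry = AiInteractionLog(
    user_id="u-1042",
    request="Summarize ticket 88213",
    input_refs=["ticket:88213"],
    prompt_version="triage-v3",
    model="example-model",
    response="Customer reports login failure after password reset.",
    latency_ms=840,
)
print(to_log_line(entry))
```

Storing the prompt version and model alongside each response is what later lets a team answer whether a configuration change caused a problem.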

6. Define human review rules

Be explicit about what AI can do independently and where humans remain accountable.

Common patterns include:

- AI drafts, human approves

- AI recommends, human decides

- AI classifies, human can override

- AI summarizes, human validates

- AI acts automatically only within narrow, approved boundaries
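
These patterns can be encoded so the application, not individual judgment, decides when output is released. The sketch below is one possible reading: it assumes that the draft, recommend, and summarize patterns hold output until a human signs off, that classifications apply immediately but stay overridable, and that automatic action depends on an approved-boundary check. All names are hypothetical.

```python
from enum import Enum


class ReviewPattern(Enum):
    AI_DRAFTS_HUMAN_APPROVES = "AI drafts, human approves"
    AI_RECOMMENDS_HUMAN_DECIDES = "AI recommends, human decides"
    AI_CLASSIFIES_HUMAN_CAN_OVERRIDE = "AI classifies, human can override"
    AI_SUMMARIZES_HUMAN_VALIDATES = "AI summarizes, human validates"
    AI_ACTS_WITHIN_BOUNDARIES = "AI acts automatically within approved boundaries"


# Assumption: these patterns hold the output until a human signs off.
NEEDS_SIGN_OFF = {
    ReviewPattern.AI_DRAFTS_HUMAN_APPROVES,
    ReviewPattern.AI_RECOMMENDS_HUMAN_DECIDES,
    ReviewPattern.AI_SUMMARIZES_HUMAN_VALIDATES,
}


def may_release(pattern: ReviewPattern, human_signed_off: bool = False,
                within_approved_boundaries: bool = False) -> bool:
    """Decide whether AI output may take effect under the given pattern."""
    if pattern in NEEDS_SIGN_OFF:
        return human_signed_off
    if pattern is ReviewPattern.AI_ACTS_WITHIN_BOUNDARIES:
        return within_approved_boundaries
    # Remaining pattern: the classification applies now; a human may override later.
    return True


print(may_release(ReviewPattern.AI_DRAFTS_HUMAN_APPROVES))
print(may_release(ReviewPattern.AI_ACTS_WITHIN_BOUNDARIES, within_approved_boundaries=True))
```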

7. Define approval paths

Make it obvious who approves what.

For example:

- Low-risk experiment: department or technical lead approval

- Pilot with internal users: business owner plus technical owner

- MVP using sensitive data: security and data owner review

- Production system: formal approval, support plan, monitoring, escalation, and executive sponsor

Approval rules should be visible before the project begins.
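
Making approval rules visible can be as simple as publishing them as data. The sketch below turns the example paths above into a lookup table; the scenario keys, the split between approvers and supporting artifacts, and the `formal_approver` role are assumptions made for illustration.

```python
APPROVAL_RULES = {
    "low_risk_experiment": {
        "approvers": {"department_or_technical_lead"},
        "artifacts": set(),
    },
    "internal_pilot": {
        "approvers": {"business_owner", "technical_owner"},
        "artifacts": set(),
    },
    "mvp_sensitive_data": {
        "approvers": {"security_reviewer", "data_owner"},
        "artifacts": set(),
    },
    "production": {
        "approvers": {"formal_approver", "executive_sponsor"},
        "artifacts": {"support_plan", "monitoring", "escalation"},
    },
}


def outstanding(scenario: str, approvals: set[str],
                artifacts: set[str]) -> dict[str, list[str]]:
    """Return the approvers and artifacts still missing for a scenario."""
    rule = APPROVAL_RULES[scenario]
    return {
        "approvers": sorted(rule["approvers"] - approvals),
        "artifacts": sorted(rule["artifacts"] - artifacts),
    }


print(outstanding("production", {"executive_sponsor"}, {"monitoring"}))
```

Because the table is plain data, it can be reviewed by security and legal once and then reused by every project team.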

What Happens When Governance Works

When governance works, the organization changes its AI behavior.

Instead of random AI enthusiasm, it gets structured AI progress.

Instead of scattered pilots, it gets a pipeline.

Instead of security surprises, it gets early risk classification.

Instead of legal blockers, it gets defined review triggers.

Instead of unsupported tools, it gets ownership.

Instead of unlogged AI output, it gets evidence.

Instead of executive hesitation, it gets confidence.

Instead of slow adoption, it gets controlled speed.

That is the real value of governance.

It does not just reduce risk.

It increases throughput.

Common Objection: “We Do Not Want to Slow Down Experimentation”

This objection is valid.

AI experimentation needs speed.

Teams need freedom to learn.

Not every idea should require a committee.

But that is exactly why governance needs tiers.

A good AI governance model should make low-risk experimentation easier, not harder.

For example:

- Allow sandbox experiments with no sensitive data.

- Provide pre-approved tools and environments.

- Offer standard prompt and data handling guidance.

- Define lightweight documentation requirements.

- Use clear thresholds for when additional review is required.

- Provide templates for pilots and production candidates.

The goal is not to make every experiment heavy.

The goal is to prevent experiments from quietly becoming production systems without review.

Governance should protect experimentation by making the boundaries clear.

Common Objection: “Our People Already Know What They Are Doing”

Maybe they do.

But enterprise AI is not just a developer competence issue.

It involves:

- Business process ownership

- Data access

- User behavior

- Security

- Compliance

- Legal exposure

- Production support

- Monitoring

- Change control

- Cost management

- Executive accountability

A skilled developer can still build a system with unclear ownership.

A strong business sponsor can still underestimate data risk.

A good prototype can still fail in production.

Governance is not an insult to the team.

It is how the organization coordinates across roles.

Common Objection: “We Can Add Governance Later”

You can.

But it will usually be more expensive.

Adding governance later often means:

- Reworking architecture

- Restricting data access

- Rewriting workflows

- Adding missing logs

- Creating support paths after users already depend on the system

- Re-negotiating approvals

- Reducing scope

- Delaying rollout

- Damaging trust

Late governance is retrofit governance.

Retrofit governance is expensive.

Early governance is design governance.

Design governance is faster.

The Bottom Line

Governance is often treated as the thing that slows AI down.

That is true when governance is late, vague, political, or one-size-fits-all.

But that is not what good governance should be.

Good AI governance helps enterprise teams move faster by creating decision clarity, reducing rework, classifying risk, defining ownership, setting stage gates, requiring the right logging, involving the right reviewers at the right time, and giving executives confidence that AI adoption is being managed responsibly.

For Microsoft-centric organizations, this matters because AI is not floating in isolation. It often touches Microsoft 365, Azure, Power Platform, SharePoint, Teams, SQL Server, custom .NET systems, sensitive data, and real business workflows.

The practical message is simple:

Governance is not the enemy of enterprise AI progress.

Bad governance slows AI down.

Late governance slows AI down.

Good governance speeds AI up.

Frequently Asked Questions

How can governance make AI projects move faster?

Governance makes AI projects move faster by removing uncertainty early. When teams already know the rules for data access, risk classification, approvals, logging, human review, ownership, and production readiness, they spend less time waiting, arguing, redesigning, or guessing.

The speed comes from clarity. Good governance gives AI teams a known path from idea to experiment, pilot, MVP, and production.

Why does AI governance usually feel like a restriction?

AI governance feels restrictive when it shows up late, after the team has already built a prototype, gotten users excited, and made key architecture decisions.

At that point, security, legal, compliance, and infrastructure teams are forced to review something they did not help design. Their questions create delays and rework, so governance gets blamed. The real issue is not governance itself. The issue is late governance.

What is the difference between good governance and bad governance?

Bad governance is vague, slow, inconsistent, overly centralized, and one-size-fits-all. It treats every AI project as equally risky and forces every team into custom approval battles.

Good governance is clear, risk-based, repeatable, and practical. It defines which AI use cases can move quickly, which require review, which need stronger controls, and what must be true before a project can move from experiment to production.

What does “governance is a speed tool” mean?

It means governance should help teams move faster by defining the rules of the road before the project is blocked by unanswered questions.

A good AI governance model tells teams:

- what they can test quickly

- what data they can use

- who owns the decision

- what must be logged

- when human review is required

- who approves each stage

- what is required before production rollout

That reduces friction and increases delivery confidence.

What are AI stage gates?

AI stage gates are checkpoints that separate different levels of AI maturity, such as experiment, pilot, MVP, and production.

Each stage should have different requirements. A quick experiment may only need a limited scope and no sensitive data. A production AI system may need logging, monitoring, support ownership, risk review, human review rules, security approval, and change control.

Stage gates prevent teams from accidentally treating a demo like a production system.

Why is risk classification important for AI governance?

Risk classification helps organizations avoid over-governing simple use cases and under-governing dangerous ones.

A low-risk internal summarization tool should not require the same review process as an AI system that affects customers, employees, legal matters, financial decisions, regulated workflows, or production data.

Risk classification lets teams match the level of governance to the level of risk.

What should every enterprise AI governance model include?

A practical enterprise AI governance model should include:

- use case intake

- risk classification

- data access rules

- ownership assignments

- human review requirements

- logging and auditability

- approval paths

- stage gates

- support expectations

- production readiness criteria

Without these pieces, AI projects are more likely to stall, create rework, or become unsupported experiments.

Why is logging so important in AI governance?

Logging is important because an enterprise cannot govern what it cannot inspect.

For AI systems, logs may need to capture user requests, input references, prompts, responses, model versions, exceptions, latency, user feedback, human overrides, approval actions, and configuration changes.

Logging supports debugging, compliance, auditing, quality improvement, support, and trust. The blunt rule is: no logging, no trust. No trust, no scale.

When should security, legal, and compliance teams get involved?

Security, legal, and compliance teams should be involved before the solution direction is locked in, especially if the AI system touches sensitive data, regulated workflows, customer information, HR records, financial data, legal documents, or production systems.

They do not need to control every early experiment. But the governance model should define when their review is required and what evidence they need to approve the next stage.

How should Microsoft-centric organizations approach AI governance?

Microsoft-centric organizations should connect AI governance to the systems they already use, such as Microsoft 365, SharePoint, Teams, Azure, Power Platform, SQL Server, Entra ID, Power BI, DevOps pipelines, and custom .NET applications.

The governance model should address identity, permissions, data access, workflow ownership, logging, human review, approval routing, support responsibility, monitoring, and production promotion criteria.

Generic AI policy is not enough. Enterprise AI needs governance that fits the actual Microsoft technology environment.