Artificial intelligence architecture diagrams look clean.

Layered boxes.

Agents at the top.

LLMs in the middle.

Data pipelines below.

They look complete.

They look transferable.

They look modern.

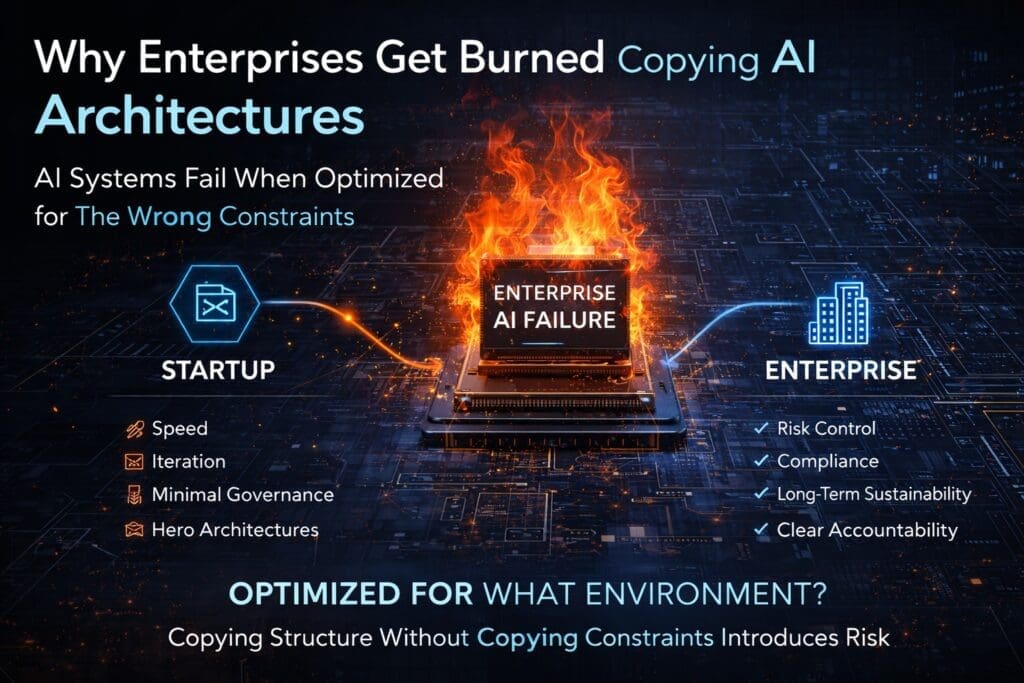

And that is exactly why enterprises get burned copying them.

The failure is rarely technical incompetence.

It is constraint mismatch.

AI Architectures Are Built for Specific Constraints

No AI architecture is universal.

Each one is optimized for a specific environment:

- Startups optimize for speed and survival.

- Research teams optimize for experimentation.

- Cloud vendors optimize for platform integration.

- Agent-first systems optimize for autonomy.

- Government systems optimize for risk containment.

- LLM-centric designs optimize for conversational interaction.

When enterprises copy an architecture without sharing the constraints it was built for, they inherit misalignment.

And misalignment compounds at scale.

The Enterprise Constraint Reality

Medium and large enterprises operate under pressures that many popular AI architectures do not assume:

- Regulatory compliance

- Auditability

- Cross-department approval chains

- Budget governance

- Security policies

- Long-term maintainability

- Accountability for automated decisions

These are not optional considerations.

They are structural requirements.

An architecture optimized for rapid iteration may not survive in a regulated healthcare, finance, or government environment.

An agent-first design optimized for autonomy may introduce unacceptable risk in a defense or public sector setting.

The Constraint Mismatch Pattern

Enterprise AI failures typically follow this sequence:

- Architecture is selected based on industry visibility.

- A pilot succeeds in a controlled environment.

- Governance and compliance teams are engaged late.

- Integration friction increases.

- Workflows turn out to be undefined.

- Accountability boundaries become unclear.

- Deployment stalls or is heavily redesigned.

The architecture did not “fail.”

It was misapplied.

Common Architectures That Don’t Transfer Cleanly

1. Startup AI Architectures

Startups optimize for:

- Rapid iteration

- Tight feedback loops

- Minimal bureaucracy

- Survival runway

Enterprises optimize for:

- Risk reduction

- Sustainability

- Governance

- Stability

A startup architecture that works with five engineers cannot be assumed to scale across five departments.

2. Agent-First Architectures

Agent-first designs prioritize autonomy.

In enterprise environments, autonomy requires:

- Clearly defined work

- Explicit decision boundaries

- Human override controls

- Logged execution trails

Without these layers, agent autonomy becomes governance exposure.

Autonomy must follow discipline — not replace it.
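The four requirements above can be sketched as a thin guard around whatever the agent wants to do. This is a minimal illustration, not a reference implementation: `GuardedExecutor`, `ActionRequest`, the `amount` risk measure, and the outcome strings are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable


@dataclass
class ActionRequest:
    action: str    # name of the capability the agent wants to invoke
    amount: float  # illustrative risk measure (assumption), e.g. a refund value


@dataclass
class GuardedExecutor:
    allowed_actions: set[str]                        # clearly defined work
    auto_approve_limit: float                        # explicit decision boundary
    human_approver: Callable[[ActionRequest], bool]  # human override control
    audit_log: list[dict] = field(default_factory=list)  # logged execution trail

    def execute(self, request: ActionRequest) -> str:
        if request.action not in self.allowed_actions:
            outcome = "rejected"                 # outside the defined work
        elif request.amount <= self.auto_approve_limit:
            outcome = "executed"                 # inside the agent's own authority
        elif self.human_approver(request):
            outcome = "executed_with_approval"   # a human approved the escalation
        else:
            outcome = "denied_by_human"          # a human refused the escalation
        # Every request is recorded, including the ones that were refused.
        self.audit_log.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "action": request.action,
            "amount": request.amount,
            "outcome": outcome,
        })
        return outcome
```

In this sketch the agent never decides its own limits: the allowed actions, the approval threshold, and the approver are supplied from outside, and every attempt leaves a log entry.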

3. LLM-Centric Architectures

Large language model (LLM) systems are often positioned as “the system.”

But LLMs are probabilistic components.

Enterprise systems require deterministic controls.

When business logic is embedded in prompts rather than structured capabilities:

- Auditability weakens

- Testing becomes difficult

- Accountability blurs

- Risk surfaces expand

LLMs are powerful tools — not enterprise backbones.
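One way to picture the difference is to keep the business rule in code and let the model only propose a value. The sketch below is illustrative: `approve_discount`, the 15 percent cap, and `fake_model` are assumptions made for the example, and `fake_model` stands in for a real LLM call.

```python
from typing import Callable

# Hypothetical business rule, kept in code rather than in a prompt.
MAX_DISCOUNT_PERCENT = 15.0


def approve_discount(suggest: Callable[[str], float], customer_note: str) -> float:
    """Return a discount that satisfies the coded policy, whatever the model proposes."""
    # Probabilistic step: the proposal may vary between calls.
    proposed = suggest(customer_note)
    # Deterministic step: the rule is enforced here and can be tested without the model.
    return max(0.0, min(proposed, MAX_DISCOUNT_PERCENT))


def fake_model(note: str) -> float:
    """Stand-in for an LLM call (assumption); it proposes an out-of-policy discount."""
    return 40.0
```

Because the cap lives in `approve_discount`, an auditor can read the rule and a unit test can verify it, neither of which is straightforward when the same rule exists only as prompt wording.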

4. Vendor Reference Architectures

Cloud provider reference diagrams often assume:

- Full platform adoption

- Centralized cloud strategy

- High data maturity

- Advanced DevOps pipelines

Many enterprises operate in hybrid environments with legacy .NET systems and distributed ownership.

A vendor-optimized architecture may not align with these realities.

The Maturity Assumption Gap

Most AI architecture diagrams do not explicitly state their assumed maturity level.

They often assume:

- Clean data pipelines

- Strong engineering discipline

- Clear ownership models

- Cross-functional alignment

If your organization is still defining work processes, governance standards, or capability boundaries, adopting an advanced architecture prematurely introduces instability.

Architecture cannot compensate for organizational immaturity.

The Work Definition Problem

Before AI can automate anything, work must be defined.

That includes:

- Task boundaries

- Decision authority

- Inputs and outputs

- Exception paths

- Escalation procedures

Many copied AI architectures assume work clarity already exists.

In enterprise reality, work is often informal, tribal, and inconsistent.

Automating undefined work produces unpredictable outcomes.

And unpredictable outcomes create compliance risk.
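A simple way to test whether work is defined is to try writing it down as a structured record and see which fields stay empty. The following is a rough sketch under that assumption; `WorkDefinition` and `undefined_elements` are hypothetical names, and the fields mirror the list above.

```python
from dataclasses import dataclass, field, fields


@dataclass
class WorkDefinition:
    task_boundary: str = ""
    decision_authority: str = ""
    inputs: list[str] = field(default_factory=list)
    outputs: list[str] = field(default_factory=list)
    exception_paths: list[str] = field(default_factory=list)
    escalation_procedure: str = ""


def undefined_elements(work: WorkDefinition) -> list[str]:
    """List the parts of the work definition that are still empty."""
    return [f.name for f in fields(work) if not getattr(work, f.name)]
```

A definition that still returns items from `undefined_elements` is a candidate for process work first and automation later.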

Governance Cannot Be Retrofitted Cheaply

One of the most expensive enterprise AI mistakes is retrofitting governance after implementation.

If an architecture does not explicitly define:

- Logging standards

- Decision traceability

- Human override

- Security boundaries

- Data access control

then those capabilities must be bolted on later.

Retrofits cost more than disciplined upfront design.
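As a rough illustration of designing governance in from the start, the sketch below wraps a capability so that access control and decision tracing happen on every call. The `governed` decorator, the in-memory `TRACE` list, the role names, and `score_claim` with its threshold are all assumptions made for the example; a real system would write to its existing audit store.

```python
import functools
from datetime import datetime, timezone

TRACE: list[dict] = []  # in-memory stand-in for an audit store (assumption)


def governed(allowed_roles: set[str]):
    """Wrap a capability with a role check and a decision trace."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(role: str, *args, **kwargs):
            permitted = role in allowed_roles            # data access control
            result = func(*args, **kwargs) if permitted else None
            TRACE.append({                               # decision traceability
                "timestamp": datetime.now(timezone.utc).isoformat(),
                "capability": func.__name__,
                "role": role,
                "permitted": permitted,
                "inputs": {"args": args, "kwargs": kwargs},
                "result": result,
            })
            if not permitted:
                raise PermissionError(f"{role} may not call {func.__name__}")
            return result
        return wrapper
    return decorator


@governed(allowed_roles={"claims_adjuster"})
def score_claim(claim_amount: float) -> str:
    # Hypothetical capability with a hypothetical threshold.
    return "manual_review" if claim_amount > 10_000 else "fast_track"
```

Calling `score_claim("claims_adjuster", 500.0)` returns a result and leaves a trace entry; a call with a role outside the allowed set is traced and then refused.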

The Real Question Enterprises Should Ask

Instead of asking:

“Is this architecture good?”

Enterprises should ask:

“What problem was this architecture built to solve?”

Then follow with:

- Do we share that optimization target?

- Do we meet the assumed maturity level?

- Are we comfortable with the risk surface?

- Does this align with our governance obligations?

If the answers are unclear, adoption should pause.
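The four questions can be treated as an explicit gate rather than an informal discussion. The sketch below is one possible encoding: the question keys and the `adoption_decision` function are hypothetical, and returning "reconsider" for a clear "no" is an assumption that goes beyond the text, which only says that unclear answers should pause adoption.

```python
from typing import Optional

QUESTIONS = (
    "shares_optimization_target",
    "meets_assumed_maturity",
    "accepts_risk_surface",
    "fits_governance_obligations",
)


def adoption_decision(answers: dict[str, Optional[bool]]) -> str:
    """Map yes/no/unknown answers to a next step; a missing answer counts as unknown."""
    values = [answers.get(question) for question in QUESTIONS]
    if any(value is None for value in values):
        return "pause"        # unclear answers: adoption should pause
    if all(values):
        return "proceed"
    return "reconsider"       # assumption: a clear "no" sends the choice back for review
```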

Enterprise AI Requires Structural Alignment

AI architecture in enterprise environments must account for:

- Strategy alignment

- Defined work systems

- Capability-first backend design

- AI core application containment

- Clear interface boundaries

- Guarded agent orchestration

- Horizontal governance layers

Copying structure without aligning these layers leads to friction.

Alignment precedes automation.

Why This Matters More in Microsoft and .NET Environments

Many enterprises operate complex, long-lived .NET ecosystems.

These systems often include:

- Layered service architectures

- Established DevOps pipelines

- Audit-integrated logging

- Role-based access models

- Hybrid cloud infrastructure

Introducing AI without respecting these layers destabilizes existing systems.

AI must integrate into structured enterprise foundations — not bypass them.

A More Disciplined Approach

Before adopting any AI architecture:

- Identify its optimization target.

- Assess maturity assumptions.

- Map governance placement.

- Evaluate AI placement within system layers.

- Define work before automation.

- Measure the expanded risk surface.

- Confirm regulatory portability.

Architecture evaluation should precede implementation funding, not follow deployment friction.

Conclusion: Copying Is Easy. Alignment Is Hard.

Most enterprises do not fail because AI is impossible.

They fail because they adopt an architecture designed for someone else’s constraints.

Architecture must align with:

- Organizational maturity

- Governance requirements

- Risk tolerance

- Existing technical foundations

AI does not remove structural responsibility.

It amplifies it.

And enterprises that understand this build systems that scale.

Enterprises that copy blindly inherit instability.

Judgment must precede adoption.

Always.

Frequently Asked Questions

Why do enterprises fail when copying AI architectures?

Enterprises fail when copying AI architectures because they copy structure without copying constraints.

Most AI architectures are optimized for specific environments such as startups, research labs, or cloud-native ecosystems. Enterprises operate under governance, compliance, and long-term maintainability requirements. When those constraints are ignored, friction appears during production deployment.

What is constraint mismatch in AI architecture?

Constraint mismatch occurs when an organization adopts an AI architecture optimized for a different operational environment.

For example:

- Startup architectures optimize for speed.

- Enterprise environments optimize for risk control and sustainability.

If optimization targets differ, the architecture will introduce instability or governance gaps.

What is the most common mistake enterprises make with AI architecture?

The most common mistake is adopting agent-first or LLM-centric designs before defining work, governance boundaries, and backend capabilities.

Automation introduced before structure increases risk and accountability exposure.

Why don’t startup AI architectures transfer well to enterprises?

Startup AI architectures assume:

- Small teams

- Minimal approval layers

- Rapid iteration cycles

- Low regulatory burden

Enterprises require:

- Auditability

- Compliance

- Defined ownership

- Cross-department coordination

- Long system lifecycles

The operating conditions are fundamentally different.

Can AI governance be added after implementation?

AI governance can be added later — but at significantly higher cost and risk.

Retrofitting logging, audit trails, access controls, and accountability boundaries is more complex than embedding them into the architecture from the beginning.

Governance should be structural, not reactive.

Are AI agents appropriate for enterprise environments?

AI agents can be appropriate in enterprise environments if:

- Work is clearly defined

- Capabilities are modular and controlled

- Decision boundaries are explicit

- Human override mechanisms exist

- Execution is logged and auditable

Agents should orchestrate defined capabilities, not invent workflows.

Why are LLM-centric architectures risky in enterprises?

Large language models are probabilistic systems.

When LLMs become the primary logic layer:

- Testing becomes difficult

- Business rules become embedded in prompts

- Auditability weakens

- Accountability blurs

LLMs should be contained within structured architectural layers.

What does “optimization under constraint” mean in AI architecture?

Optimization under constraint means that every architecture prioritizes certain goals (speed, autonomy, platform centralization, risk containment) over others.

Before adopting an architecture, enterprises must confirm that its optimization target aligns with their operational requirements.

How should Microsoft and .NET enterprises approach AI architecture adoption?

Microsoft and .NET enterprises should:

- Preserve layered backend service architectures

- Keep business logic in deterministic capability layers

- Integrate AI as a service consumer, not system owner

- Ensure Azure governance and security policies apply consistently

- Maintain DevOps logging and monitoring discipline

AI should integrate into enterprise foundations — not bypass them.

What should enterprises evaluate before copying an AI architecture?

Enterprises should evaluate:

- The architecture’s optimization target

- Assumed maturity level

- Governance placement

- AI structural placement

- Risk surface expansion

- Portability to regulated environments

Adoption should follow structured evaluation, not industry visibility.

When should an enterprise redesign its AI architecture?

Redesign should be considered when:

- AI components own core business logic

- Agents operate without defined boundaries

- Governance is unclear or retrofitted

- Audit trails are incomplete

- Interfaces control workflows

- Compliance concerns delay deployment

Early architectural correction is significantly less expensive than post-failure remediation.

Is there a “safe” universal AI architecture for enterprises?

No universal architecture exists.

Safe enterprise AI architecture depends on:

- Organizational maturity

- Risk tolerance

- Regulatory exposure

- Existing system complexity

- Governance discipline

Alignment matters more than trend adoption.
