Government and defense organizations approach artificial intelligence very differently from startups and commercial tech companies.

While the private sector often prioritizes speed, experimentation, and rapid iteration, government and defense AI systems are designed under a completely different set of constraints.

These environments must operate with:

- Very low tolerance for failure (in many cases, failure is unacceptable)

- Strict accountability requirements

- Detailed audit trails

- Security-first architecture

- Long operational lifecycles

Because of these constraints, government and defense AI architectures emphasize discipline over speed.

Enterprise organizations can learn a great deal from these systems, even if they operate in very different industries.

The key is understanding which elements transfer well to enterprise environments and which do not.

Why Government and Defense AI Architectures Exist

Government and military organizations deploy AI systems in environments where the cost of failure can be catastrophic.

Examples include:

- National security systems

- Critical infrastructure monitoring

- Intelligence analysis

- Public safety operations

- Defense logistics and planning

In these contexts, systems must be:

- Explainable

- Auditable

- Governable

- Secure

- Accountable

This leads to architectural patterns that emphasize risk containment and operational control.

Frameworks and guidance developed by organizations such as NIST, DARPA, MITRE, and the U.S. Department of Defense reflect this mindset.

Their architectures often prioritize:

- Clear responsibility layers

- Data governance

- Controlled automation

- Human oversight

- Continuous monitoring

For enterprises operating under regulatory pressure, these design priorities are highly relevant.

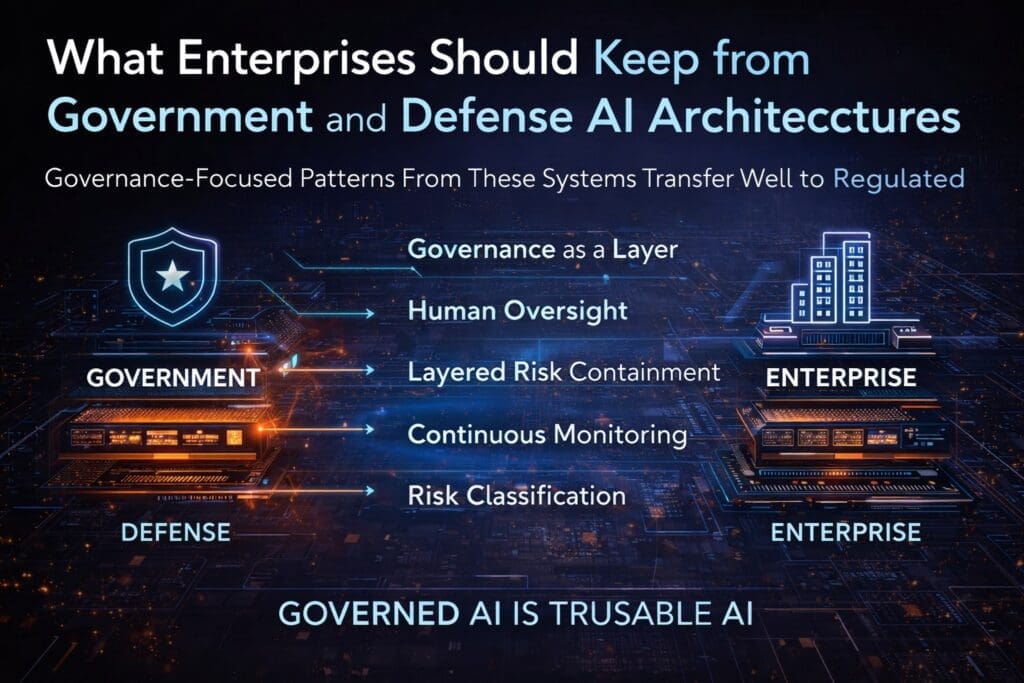

Architectural Principles That Transfer Well to Enterprises

While enterprise organizations are not defense agencies, many architectural principles from government AI systems translate directly into enterprise environments.

Below are several patterns enterprises should strongly consider adopting.

1. Governance as a Structural Layer

In many commercial AI projects, governance is treated as a policy discussion rather than an architectural component.

Government AI systems treat governance differently.

Governance is embedded directly into the architecture through:

- logging systems

- access controls

- decision traceability

- audit mechanisms

- approval workflows

These elements are not optional additions; they are structural requirements.

Enterprise AI systems increasingly face similar pressures from:

- financial regulators

- healthcare compliance frameworks

- privacy laws

- internal audit departments

Embedding governance early prevents expensive retrofits later.
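To make "governance as a structural layer" concrete, here is a minimal Python sketch in which no decision function can run without passing an access check and leaving an audit record. The names `governed`, `ROLE_PERMISSIONS`, `AUDIT_LOG`, and `score_loan` are hypothetical, and the in-memory list stands in for whatever append-only audit store an organization actually uses.

```python
import functools
import json
from datetime import datetime, timezone

# Assumption: an in-memory list standing in for an append-only audit store.
AUDIT_LOG = []

# Assumption: a hypothetical role-to-action permission table.
ROLE_PERMISSIONS = {"analyst": {"score_loan"}, "auditor": set()}


def governed(action):
    """Wrap a decision function with an access check and an audit record."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(user, role, *args, **kwargs):
            allowed = action in ROLE_PERMISSIONS.get(role, set())
            record = {
                "timestamp": datetime.now(timezone.utc).isoformat(),
                "user": user,
                "role": role,
                "action": action,
                "inputs": json.dumps({"args": args, "kwargs": kwargs}, default=str),
                "allowed": allowed,
                "result": None,
            }
            # Record the attempt before anything runs, so denials are traceable too.
            AUDIT_LOG.append(record)
            if not allowed:
                raise PermissionError(f"role '{role}' may not perform '{action}'")
            result = func(*args, **kwargs)
            record["result"] = result
            return result
        return wrapper
    return decorator


@governed("score_loan")
def score_loan(income, debt):
    """Toy decision logic; a real system would call a model here."""
    return "approve" if debt / income < 0.4 else "refer"
```

Because the check and the log entry live in the wrapper rather than in each function, a new decision function picks up both by default instead of relying on each team to remember them.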

2. Human Oversight and Decision Accountability

Defense AI architectures almost always assume that automated decisions require human accountability.

Instead of fully autonomous systems, many government designs implement:

- human-in-the-loop models

- human-on-the-loop supervision

- escalation procedures

- decision override capabilities

This pattern ensures that automated systems remain accountable.

Enterprises benefit from similar safeguards when deploying AI for areas such as:

- financial approvals

- hiring recommendations

- fraud detection

- operational decisions

Human oversight does not eliminate automation.

It ensures responsible automation.
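One way to picture a human-in-the-loop gate with an override path is the sketch below. It is illustrative only: `Decision`, `OversightGate`, and the 0.9 confidence threshold are assumptions, and a real deployment would likely route on impact and policy as well as model confidence.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Decision:
    case_id: str
    model_output: str
    confidence: float
    final_output: Optional[str] = None
    decided_by: Optional[str] = None  # "model" or a reviewer identifier


@dataclass
class OversightGate:
    # Assumption: hypothetical threshold below which a human must decide.
    confidence_threshold: float = 0.9
    review_queue: List[Decision] = field(default_factory=list)

    def submit(self, decision: Decision) -> Decision:
        """Auto-finalize confident decisions; hold the rest for review."""
        if decision.confidence >= self.confidence_threshold:
            decision.final_output = decision.model_output
            decision.decided_by = "model"
        else:
            self.review_queue.append(decision)
        return decision

    def review(self, case_id: str, reviewer: str,
               override: Optional[str] = None) -> Decision:
        """Finalize a held decision; the reviewer may override the model."""
        for i, held in enumerate(self.review_queue):
            if held.case_id == case_id:
                decision = self.review_queue.pop(i)
                decision.final_output = (
                    override if override is not None else decision.model_output
                )
                decision.decided_by = reviewer
                return decision
        raise KeyError(f"no pending review for case '{case_id}'")
```

Every finalized decision carries a `decided_by` value, so accountability is recorded whether the model or a person made the final call.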

3. Layered Risk Containment

Government architectures often isolate high-risk components behind controlled layers.

Examples include:

- model containment zones

- controlled data pipelines

- staged deployment environments

- operational approval gates

This layered design reduces the likelihood that a single model or component can cause systemic disruption.

Enterprise environments can apply similar patterns by separating:

- AI experimentation environments

- production decision systems

- data ingestion pipelines

- automated action layers

Layering reduces blast radius when systems behave unpredictably.
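Staged environments with approval gates can be sketched as a small promotion function: a model moves one stage at a time, and only when the evidence required by the next stage is present. The stage names and gate checks below are hypothetical placeholders, not requirements taken from any specific framework.

```python
from enum import IntEnum


class Stage(IntEnum):
    EXPERIMENT = 0
    STAGING = 1
    PRODUCTION = 2


# Assumption: hypothetical evidence each stage requires before entry.
GATES = {
    Stage.STAGING: ["offline_eval_passed"],
    Stage.PRODUCTION: ["offline_eval_passed", "security_review", "owner_approval"],
}


def promote(current, evidence):
    """Return the next stage if its gate is satisfied; otherwise raise."""
    if current == Stage.PRODUCTION:
        raise ValueError("already in production")
    target = Stage(current + 1)  # stages cannot be skipped
    missing = [check for check in GATES[target] if not evidence.get(check, False)]
    if missing:
        raise PermissionError(f"cannot promote to {target.name}: missing {missing}")
    return target
```

Since `promote` only ever advances one stage, an experimental model cannot reach the production decision layer without passing through every intermediate gate.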

4. Continuous Monitoring and Observability

Government AI systems are rarely deployed without extensive monitoring.

Architectures typically include:

- system performance monitoring

- model drift detection

- anomaly detection

- audit logging

- operational telemetry

These monitoring systems allow operators to detect issues early and intervene before problems escalate.

Enterprise organizations should adopt the same philosophy.

AI systems that influence decisions should be observable just like financial systems or network infrastructure.

Monitoring is not optional in production AI environments.
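As one illustration of drift detection, the sketch below computes the Population Stability Index (PSI), a widely used statistic for comparing a feature's current distribution against a baseline. It is a swapped-in example technique, not a method the frameworks above prescribe. The bin edges are assumptions, and the often-quoted alert threshold of roughly 0.2 is a rule of thumb that should be tuned per system.

```python
import bisect
import math


def psi(baseline, current, edges, eps=1e-6):
    """Population Stability Index between two samples over shared bins.

    `edges` are ascending bin boundaries; values outside them are ignored.
    `eps` keeps empty bins from producing log(0) or division by zero.
    """
    def proportions(values):
        counts = [0] * (len(edges) - 1)
        for v in values:
            idx = bisect.bisect_right(edges, v) - 1
            if v == edges[-1]:
                idx = len(counts) - 1  # include the right-most boundary
            if 0 <= idx < len(counts):
                counts[idx] += 1
        total = max(sum(counts), 1)
        return [max(c / total, eps) for c in counts]

    p = proportions(baseline)
    q = proportions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


def drift_alert(baseline, current, edges, threshold=0.2):
    """Return True when PSI exceeds the (assumed) alert threshold."""
    return psi(baseline, current, edges) > threshold
```

A scheduled job could call `drift_alert` on each monitored feature or model score and raise the result through the same alerting channels used for other production telemetry.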

5. Explicit Risk Classification

Government AI frameworks often categorize AI systems by risk level.

For example:

- low-risk decision support systems

- moderate-risk automation systems

- high-risk operational decision systems

Each classification triggers different requirements for:

- testing

- governance

- oversight

- deployment approval

Enterprises can apply a similar classification model internally.

Not all AI systems require the same level of scrutiny.

By classifying AI applications according to risk, organizations can allocate governance resources more effectively.
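A risk classification scheme can be expressed as a small lookup from tier to required controls. The sketch below is purely illustrative: the three questions in `classify` and the control values in `CONTROLS` are hypothetical, and an organization would substitute criteria from its own risk policy.

```python
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MODERATE = "moderate"
    HIGH = "high"


# Assumption: hypothetical controls per tier, covering the four areas above.
CONTROLS = {
    RiskTier.LOW: {
        "testing": "standard unit and regression tests",
        "governance": "registered in model inventory",
        "oversight": "periodic review",
        "deployment_approval": "team lead",
    },
    RiskTier.MODERATE: {
        "testing": "bias and robustness evaluation",
        "governance": "documented data lineage",
        "oversight": "human-on-the-loop",
        "deployment_approval": "risk committee",
    },
    RiskTier.HIGH: {
        "testing": "independent validation",
        "governance": "full decision traceability",
        "oversight": "human-in-the-loop",
        "deployment_approval": "executive sign-off",
    },
}


def classify(affects_individuals, automated_action, reversible):
    """Assign a tier from three illustrative yes/no questions."""
    if automated_action and (affects_individuals or not reversible):
        return RiskTier.HIGH
    if automated_action or affects_individuals:
        return RiskTier.MODERATE
    return RiskTier.LOW


def required_controls(tier):
    """Look up the controls a system in this tier must satisfy."""
    return CONTROLS[tier]
```

Keeping the tier-to-controls mapping in one table makes the policy easy to review and change without touching the systems being classified.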

Where Government AI Architectures May Create Friction

Although many government AI patterns are valuable, some aspects of these architectures may introduce challenges for commercial enterprises.

1. Slower Innovation Cycles

Government AI development often includes lengthy approval processes and documentation requirements.

While this rigor supports accountability, it can slow innovation.

Enterprises operating in competitive markets must balance governance with agility.

Excessively bureaucratic processes may reduce the speed of experimentation and product development.

2. Heavy Documentation Requirements

Government programs frequently require extensive documentation at every stage of development.

While documentation improves traceability, it can also increase operational overhead.

Enterprises should adopt documentation standards that support governance without unnecessarily slowing development teams.

3. Resource-Intensive Compliance Processes

Defense AI systems often involve specialized compliance teams and dedicated oversight structures.

Smaller enterprises may not have the resources to replicate these processes fully.

Instead, organizations should focus on adopting core governance principles rather than attempting to mirror government bureaucracy.

When Government AI Architecture Is the Right Model

Despite these potential challenges, government-style architecture is particularly valuable in enterprise environments where risk tolerance is low.

Industries that benefit most include:

- healthcare

- financial services

- insurance

- transportation

- utilities

- defense contracting

- government services

These sectors often face strict compliance requirements and cannot tolerate unpredictable AI behavior.

In such environments, governance-driven architecture provides long-term stability.

Applying These Lessons in Enterprise AI Systems

Enterprise organizations do not need to replicate defense architecture entirely.

However, several lessons should be incorporated into enterprise AI strategies:

- embed governance into architecture

- maintain human oversight for critical decisions

- implement layered system containment

- monitor AI systems continuously

- classify AI systems by operational risk

These principles allow organizations to adopt AI responsibly while maintaining operational agility.

Enterprise AI Requires Discipline, Not Just Innovation

Many AI discussions focus on cutting-edge capabilities.

But enterprise AI success depends less on novelty and more on discipline.

Government and defense AI architectures remind us that:

- accountability matters

- risk containment matters

- observability matters

- governance matters

Enterprises that adopt these principles build AI systems that scale responsibly.

Organizations that ignore them often discover the consequences after deployment.

Conclusion

Government and defense AI architectures were not designed for startups or rapid consumer innovation.

They were designed for environments where reliability, accountability, and safety matter more than speed.

For enterprises operating under similar pressures, these architectural patterns provide valuable guidance.

The goal is not to copy government systems wholesale.

It is to extract the principles that align with enterprise constraints.

When organizations focus on governance, oversight, monitoring, and risk classification, AI systems become more stable, trustworthy, and sustainable.

Enterprise AI is not just about building intelligent systems.

It is about building systems that can be trusted.

Frequently Asked Questions

Why do government and defense organizations design AI systems differently from commercial companies?

Government and defense organizations design AI systems under strict operational constraints such as national security, regulatory compliance, and mission-critical reliability. These systems prioritize governance, auditability, risk containment, and human oversight rather than rapid experimentation or feature velocity.

What lessons can enterprises learn from government AI architectures?

Enterprises can adopt several proven practices from government AI systems, including:

- embedding governance into architecture

- maintaining human oversight for high-risk decisions

- implementing layered risk containment

- monitoring AI systems continuously

- classifying AI applications by operational risk

These practices help organizations deploy AI safely and sustainably.

What is governance-driven AI architecture?

Governance-driven AI architecture is an approach where accountability, security, monitoring, and compliance are embedded directly into the system design rather than added after deployment.

This typically includes:

- audit logging

- access controls

- decision traceability

- approval workflows

- operational monitoring

Embedding governance early reduces regulatory and operational risk.

What is human-in-the-loop AI?

Human-in-the-loop AI refers to systems where automated processes include human review or approval before final decisions are executed.

This approach ensures that:

- critical decisions remain accountable

- errors can be corrected quickly

- organizations maintain oversight over automated systems

It is commonly used in financial services, healthcare, and government systems.

Why is risk classification important in enterprise AI systems?

Risk classification helps organizations apply appropriate governance controls based on the impact of an AI system.

For example:

- low-risk decision support systems may require minimal oversight

- moderate-risk automation systems require moderate governance

- high-risk operational decision systems require strict monitoring and human oversight

This approach helps enterprises allocate governance resources effectively.

What is layered risk containment in AI architecture?

Layered risk containment separates AI components into controlled architectural layers to limit the impact of failures or unexpected behavior.

Typical layers include:

- data ingestion pipelines

- model evaluation environments

- production decision services

- automated execution systems

This structure prevents a single AI component from disrupting an entire enterprise system.

Are government AI architectures too restrictive for private companies?

Government architectures are often more restrictive because they prioritize accountability and risk control. However, many of their core principles (governance, monitoring, and oversight) translate well to enterprises, especially in regulated industries.

The goal is not to replicate government bureaucracy but to adopt the architectural discipline behind it.

Which industries benefit most from government-style AI architecture?

Industries with strict compliance requirements often benefit from governance-driven AI architecture, including:

- healthcare

- financial services

- insurance

- utilities

- transportation

- government contracting

- defense suppliers

These sectors require high reliability and accountability in automated decision systems.

Why is continuous monitoring important for enterprise AI systems?

AI systems can change behavior over time due to:

- data drift

- model degradation

- changing business conditions

Continuous monitoring allows organizations to detect these issues early and intervene before they create operational or compliance risks.

What is the biggest architectural mistake enterprises make with AI?

One of the most common mistakes is deploying AI capabilities without building governance and monitoring into the architecture.

When oversight mechanisms are added later, organizations often face costly redesigns and compliance challenges.

Embedding governance from the beginning avoids these problems.
