Artificial intelligence architectures are everywhere.

Vendor reference diagrams.

Consulting frameworks.

Startup blueprints.

Agent-first stacks.

LLM-centric systems.

Each promises acceleration.

Each claims scalability.

Each appears complete.

Yet enterprise AI projects continue to fail.

Why?

Because most organizations do not evaluate AI architectures.

They copy them.

And copying architecture without copying the constraints it was designed for is one of the fastest paths to systemic failure.

This article provides a practical evaluation framework enterprise teams can use before adopting any AI architecture — whether from Big Tech, a consulting firm, a startup, or internal innovation teams.

Architecture Is Optimization Under Constraint

Every architecture optimizes for something.

Not everything.

Something.

Examples:

- Startup AI architectures optimize for speed and survival.

- Research architectures optimize for experimentation.

- Cloud vendor architectures optimize for platform consumption.

- Agent-first architectures optimize for autonomy.

- Government architectures optimize for risk containment.

- LLM-centric systems optimize for conversational interaction.

When enterprises copy these architectures, they often copy structure without copying the optimization target.

That is the mismatch.

Before adopting any AI architecture, the first question must be:

What problem was this architecture built to solve?

If the answer is unclear, do not adopt it.

Why Enterprises Get Burned

Enterprise environments operate under different constraints:

- Regulatory compliance

- Audit requirements

- Budget governance

- Security boundaries

- Cross-functional dependencies

- Long system lifecycles

- Accountability for decisions

Many popular AI architectures assume:

- High data maturity

- Short decision loops

- Minimal approval layers

- Platform centralization

- Tolerance for rapid iteration

These assumptions do not transfer cleanly into regulated, multi-department environments.

Architecture misalignment is rarely visible in the demo phase.

It appears in production.

The Enterprise AI Architecture Evaluation Framework

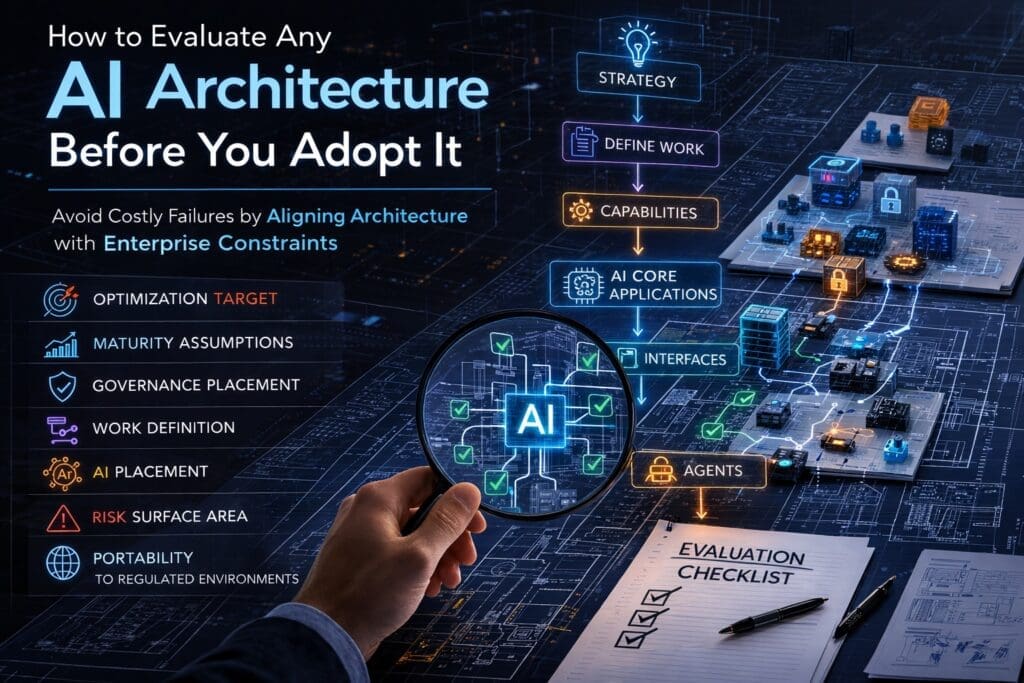

Below is a practical rubric you can apply to any AI architecture before adoption.

Use it in architecture reviews.

Use it in executive discussions.

Use it before funding approval.

1. Optimization Target

What is this architecture optimized for?

- Speed?

- Autonomy?

- Research?

- Platform integration?

- Risk containment?

- Conversational UX?

Architectures are tradeoff engines.

If the optimization target conflicts with your organizational priorities, friction is inevitable.

2. Assumed Organizational Maturity

Every architecture assumes a certain level of:

- Data quality

- Engineering discipline

- DevOps maturity

- Governance clarity

- Cross-team coordination

Ask:

What maturity level does this model require to function safely?

If your organization is below that level, you are adopting risk.

3. Governance Placement

Where does governance live in this architecture?

- Is it embedded?

- Is it an afterthought?

- Is it external?

- Is it assumed?

Enterprise AI systems must define:

- Accountability boundaries

- Decision authority

- Logging standards

- Audit traceability

- Human override mechanisms

If governance is not structurally visible in the architecture, it will become expensive to retrofit later.
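
One way to make governance structurally visible is to route every AI-assisted decision through a single function that writes an audit record. The sketch below is illustrative only: `DecisionRecord`, `decide`, and the field names are assumptions made for this example, not part of any specific product or standard, and the in-memory list stands in for whatever durable audit store an organization already uses.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass(frozen=True)
class DecisionRecord:
    """One auditable decision: who owns it, what the AI proposed, what was done."""
    task: str
    accountable_owner: str                # accountability boundary
    ai_recommendation: str
    final_decision: str
    overridden_by: Optional[str] = None   # set only when a human overrides the AI
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def decide(
    log: List[DecisionRecord],
    task: str,
    owner: str,
    ai_recommendation: str,
    override: Optional[str] = None,
    reviewer: Optional[str] = None,
) -> DecisionRecord:
    """Record every decision; an override must name the human who made it."""
    if override is not None and not reviewer:
        raise ValueError("an override must name the human reviewer")
    record = DecisionRecord(
        task=task,
        accountable_owner=owner,
        ai_recommendation=ai_recommendation,
        final_decision=override if override is not None else ai_recommendation,
        overridden_by=reviewer if override is not None else None,
    )
    log.append(record)  # append-only here; durable storage is out of scope
    return record


# Hypothetical usage: one accepted recommendation, one human override.
audit_log: List[DecisionRecord] = []
decide(audit_log, "refund-review", "claims-team", "approve")
decide(audit_log, "refund-review", "claims-team", "approve",
       override="deny", reviewer="j.doe")
```

The point of the design is that the AI recommendation and the final decision are stored separately, so an auditor can see where humans intervened.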

4. Work Definition Discipline

Before AI can automate or assist, work must be clearly defined.

Does this architecture require:

- Formal task modeling?

- Explicit decision boundaries?

- Defined inputs and outputs?

- Exception handling paths?

Or does it assume AI can infer structure dynamically?

Enterprises cannot rely on inferred structure where accountability is required.

If work is undefined, automation becomes unpredictable.
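
As a rough illustration of what "defined work" can look like in code, the sketch below declares a task with explicit inputs, an explicit set of allowed outputs, and an exception path. The names (`TaskDefinition`, `run_task`, the `ap-review-queue` route) are hypothetical and chosen only for this example.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, Tuple


@dataclass(frozen=True)
class TaskDefinition:
    name: str
    required_inputs: Tuple[str, ...]   # defined inputs
    allowed_outputs: Tuple[str, ...]   # explicit decision boundary
    exception_path: str                # where out-of-bounds cases are routed


def run_task(
    task: TaskDefinition,
    inputs: Dict[str, Any],
    decide: Callable[[Dict[str, Any]], str],
) -> Dict[str, Any]:
    """Run `decide` only inside the boundaries the task declares."""
    missing = [key for key in task.required_inputs if key not in inputs]
    if missing:
        return {"status": "escalated", "route": task.exception_path,
                "reason": f"missing inputs: {missing}"}
    output = decide(inputs)
    if output not in task.allowed_outputs:
        return {"status": "escalated", "route": task.exception_path,
                "reason": f"output {output!r} is outside the decision boundary"}
    return {"status": "completed", "output": output}


# Hypothetical task; `decide` would normally call a model or a rules engine.
invoice_task = TaskDefinition(
    name="classify-invoice",
    required_inputs=("vendor", "amount"),
    allowed_outputs=("approve", "reject"),
    exception_path="ap-review-queue",
)
ok = run_task(invoice_task, {"vendor": "Acme", "amount": 120}, lambda _: "approve")
bad = run_task(invoice_task, {"vendor": "Acme", "amount": 120}, lambda _: "pay twice")
```

Here `ok` completes normally, while `bad` is escalated to the exception path because its output falls outside the declared boundary.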

5. AI Placement

Where does AI sit in the system?

Is AI:

- A core application?

- An interface layer?

- An orchestration agent?

- A decision service?

- A probabilistic component wrapped in deterministic controls?

Architectures fail when AI is placed in the wrong structural position.

Common failure patterns include:

- LLM as the system of record

- Interface owning business logic

- Agents executing undefined workflows

Placement determines stability.
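
The "probabilistic component wrapped in deterministic controls" option can be sketched as follows. This is a minimal example under stated assumptions: `model_call` is a stand-in for any model client that returns text, and the refund scenario, the `MAX_AUTO_REFUND` limit, and the JSON shape are invented for illustration.

```python
import json
from typing import Any, Callable, Dict

MAX_AUTO_REFUND = 100.0  # hypothetical business rule, owned by the backend


def _review(reason: str) -> Dict[str, Any]:
    """Deterministic fallback: hand the case to a human."""
    return {"action": "human_review", "reason": reason}


def guarded_refund_decision(
    model_call: Callable[[str], str], request_text: str
) -> Dict[str, Any]:
    """Treat model output as untrusted input and validate it before acting."""
    raw = model_call(request_text)
    try:
        parsed = json.loads(raw)
    except (TypeError, ValueError):
        return _review("unparseable model output")
    if not isinstance(parsed, dict):
        return _review("model output is not a JSON object")

    action = parsed.get("action")
    if action == "deny":
        return {"action": "deny"}
    if action != "refund":
        return _review("unknown action")

    amount = parsed.get("amount")
    is_number = isinstance(amount, (int, float)) and not isinstance(amount, bool)
    if not is_number or amount <= 0:
        return _review("invalid amount")
    if amount > MAX_AUTO_REFUND:
        return _review("amount exceeds automatic refund limit")
    return {"action": "refund", "amount": float(amount)}


# Stub "models" standing in for a real client.
small = guarded_refund_decision(lambda _: '{"action": "refund", "amount": 40}', "...")
large = guarded_refund_decision(lambda _: '{"action": "refund", "amount": 5000}', "...")
garbled = guarded_refund_decision(lambda _: "Sure! I refunded it.", "...")
```

The model proposes; the wrapper decides. The business rule lives in ordinary code, not in a prompt.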

6. Risk Surface Area

What new risks does this architecture introduce?

Examples:

- Autonomous decision risk

- Prompt injection exposure

- Data leakage risk

- Vendor lock-in

- Audit opacity

- Unbounded workflow execution

Every architectural decision expands or contracts risk surfaces.

If risk is not explicitly mapped, it is silently expanding.

7. Portability to Regulated Environments

Can this architecture function under:

- Government compliance frameworks?

- Healthcare regulations?

- Financial audit standards?

- Defense procurement requirements?

If not, it may be optimized for a different environment entirely.

Portability matters for long-term survivability.

Common Enterprise Failure Patterns

Across industries, failures tend to cluster around the same mistakes:

Agent-First Adoption

Autonomy introduced before structure.

LLM-as-the-System

Language models embedded as core business logic.

Interface-Driven Architecture

Chat interfaces owning workflows.

Governance Retrofit

Compliance added after deployment.

Capability Entanglement

Business logic buried inside prompts.

None of these failures begin maliciously.

They begin enthusiastically.

A Practical Way to Use This Framework

Before approving or adopting any AI architecture:

- Conduct a structured architecture review using the seven dimensions.

- Score alignment across:

  - Business intent

  - Risk tolerance

  - Engineering maturity

  - Governance capacity

- Identify structural mismatches early.

- Redesign before implementation.

Architecture evaluation should occur before procurement.

Not after deployment.
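
For teams that want to make the review repeatable, the seven dimensions can be captured in a small scoring helper. The sketch below is one possible shape, not a prescribed method: the 1 to 5 scale, the threshold of 3, and the rule that any single low score blocks adoption are assumptions made for this example.

```python
from typing import Any, Dict

DIMENSIONS = (
    "optimization_target",
    "organizational_maturity",
    "governance_placement",
    "work_definition",
    "ai_placement",
    "risk_surface",
    "regulated_portability",
)


def review(scores: Dict[str, int], threshold: int = 3) -> Dict[str, Any]:
    """Score alignment per dimension (1 = poor fit, 5 = strong fit)."""
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"unscored dimensions: {missing}")
    for name in DIMENSIONS:
        if not 1 <= scores[name] <= 5:
            raise ValueError(f"{name} must be scored between 1 and 5")
    mismatches = [d for d in DIMENSIONS if scores[d] < threshold]
    average = sum(scores[d] for d in DIMENSIONS) / len(DIMENSIONS)
    return {
        "average": round(average, 2),
        "mismatches": mismatches,      # structural mismatches to resolve first
        "proceed": not mismatches,     # one weak dimension is enough to pause
    }


# Hypothetical review of a candidate architecture.
result = review({
    "optimization_target": 4,
    "organizational_maturity": 4,
    "governance_placement": 2,
    "work_definition": 4,
    "ai_placement": 4,
    "risk_surface": 4,
    "regulated_portability": 4,
})
```

A high average does not rescue a weak dimension in this sketch. That mirrors the argument above: a single structural mismatch is reason enough to redesign before implementation.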

Enterprise AI Is Not About Templates

There is no universal AI architecture.

There are optimization patterns.

Mature organizations do not worship frameworks.

They extract what transfers under constraint.

The correct question is never:

“Is this architecture good?”

The correct question is:

“Is this architecture aligned with our constraints, maturity, and governance requirements?”

When enterprises begin asking that question consistently, AI projects become:

- Predictable

- Governable

- Auditable

- Maintainable

- Scalable

Architecture is not about diagrams.

It is about disciplined placement under constraint.

And that discipline begins before adoption.

If your organization is currently evaluating AI architectures, this framework can serve as a starting point for structured discussion across executives, architects, and engineering teams.

Judgment precedes implementation.

Always.

Frequently Asked Questions

What is an AI architecture?

An AI architecture is the structural design that defines how AI components interact within a system. It specifies where models operate, how data flows, how decisions are made, and how governance, logging, and security are enforced.

In enterprise environments, AI architecture must also define accountability boundaries, audit mechanisms, and integration with existing systems.

Why do AI architectures fail in enterprises?

AI architectures often fail in enterprises due to constraint mismatch.

Many popular AI architectures are optimized for startups, research environments, or vendor ecosystems. Enterprises operate under governance, compliance, budget oversight, and long lifecycle requirements. When those constraints are not accounted for, systems break down during production deployment.

What should enterprises evaluate before adopting an AI architecture?

Enterprises should evaluate:

- Optimization target

- Organizational maturity assumptions

- Governance placement

- Work definition discipline

- AI placement (core vs. interface vs. agent)

- Risk surface expansion

- Portability to regulated environments

Architectures must align with enterprise constraints before adoption.

What is the most common mistake when adopting AI architecture?

The most common mistake is copying structure without understanding the optimization target.

Organizations often adopt agent-first or LLM-centric architectures without defining work, governance, and capability boundaries first. This creates accountability gaps and unstable automation.

Should AI agents be part of enterprise architecture?

AI agents can be part of enterprise architecture — but only after foundational layers are defined.

Agents should orchestrate well-defined capabilities. They should not invent workflows, override governance, or act without bounded authority.

Autonomy must follow structure.
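
A minimal sketch of bounded authority, assuming capabilities are plain functions registered ahead of time: the agent may only call names on an allow-list, within a step budget, and the whole plan is checked before anything runs. `BoundedAgent` and the capability names are hypothetical, not taken from any agent framework.

```python
from typing import Any, Callable, Dict, Iterable, List, Tuple

Step = Tuple[str, Dict[str, Any]]  # (capability name, keyword arguments)


class BoundedAgent:
    """Orchestrates registered capabilities; it cannot invent new ones."""

    def __init__(
        self,
        capabilities: Dict[str, Callable[..., Any]],
        allowed: Iterable[str],
        max_steps: int,
    ) -> None:
        self._capabilities = dict(capabilities)
        self._allowed = frozenset(allowed)
        self._max_steps = max_steps

    def execute(self, plan: List[Step]) -> List[Any]:
        if len(plan) > self._max_steps:
            raise PermissionError("plan exceeds the step budget")
        # Validate the whole plan first so nothing runs if any step is out of bounds.
        for name, _ in plan:
            if name not in self._capabilities:
                raise LookupError(f"unknown capability: {name}")
            if name not in self._allowed:
                raise PermissionError(f"capability not authorized: {name}")
        return [self._capabilities[name](**kwargs) for name, kwargs in plan]


# Hypothetical capabilities owned by backend services, not by the agent.
capabilities = {
    "lookup_order": lambda order_id: {"order_id": order_id, "status": "shipped"},
    "issue_refund": lambda order_id: f"refund issued for {order_id}",
}
agent = BoundedAgent(capabilities, allowed={"lookup_order"}, max_steps=3)
results = agent.execute([("lookup_order", {"order_id": "A-17"})])
```

In this sketch a plan that includes `issue_refund` is rejected before any step executes, because that capability exists but is not on this agent's allow-list.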

What is the difference between AI architecture and AI tools?

AI tools are individual technologies such as LLMs, vector databases, or orchestration frameworks.

AI architecture defines how those tools are structured, governed, integrated, and controlled within an enterprise system.

Tools can be replaced. Architecture determines long-term survivability.

Can large language models (LLMs) serve as enterprise systems?

LLMs should not serve as the core system of record in enterprise environments.

They are probabilistic components and must be wrapped in deterministic layers that enforce validation, logging, access control, and business rules.

LLMs are powerful — but they require containment.

How does governance fit into AI architecture?

Governance must be structurally embedded in the architecture, not added later.

This includes:

- Logging and observability

- Decision accountability

- Human override mechanisms

- Access controls

- Audit trails

If governance is not visible in architectural diagrams, it is likely insufficient.

What is “optimization under constraint” in AI architecture?

Every architecture is optimized for something: speed, autonomy, research flexibility, platform centralization, or risk containment.

Optimization under constraint means evaluating whether that priority aligns with enterprise needs. Misalignment leads to systemic friction.

How can Microsoft/.NET enterprises evaluate AI architecture fit?

Microsoft and .NET enterprises should assess:

- How AI integrates with existing service layers

- Whether business logic remains in backend capabilities

- How Azure governance policies apply

- Whether logging and monitoring integrate with existing DevOps pipelines

- Whether AI interfaces are separated from core services

Integration discipline is more important than novelty.

Is there a universal best AI architecture?

No.

There are optimization patterns. There is no universal template.

The correct architecture depends on:

- Organizational maturity

- Risk tolerance

- Regulatory exposure

- Engineering discipline

- Strategic objectives

Evaluation must precede adoption.

When should an enterprise redesign its AI architecture?

Redesign should occur when:

- AI components own business logic

- Agents operate without defined task boundaries

- Governance is being retrofitted

- LLMs function as systems of record

- Auditability is unclear

Early redesign is significantly less expensive than post-deployment remediation.
