Over the past decade, major technology companies such as Microsoft, Google, Amazon, and Meta have developed sophisticated AI architectures designed to support large-scale machine learning systems.

These “reference architectures” are often used as models for organizations beginning their own AI initiatives. They demonstrate how AI systems can be integrated into large digital platforms, data ecosystems, and cloud infrastructure.

However, enterprise organizations must be careful when adopting these architectures.

Big Tech companies operate under very different constraints than most enterprises. Their systems are optimized for global-scale platforms, massive datasets, and specialized engineering teams.

The goal for enterprise leaders should not be to copy Big Tech architectures wholesale.

Instead, organizations should identify the architectural principles that transfer well to enterprise environments while avoiding patterns that assume hyperscale infrastructure.

Why Big Tech AI Architectures Exist

Technology platforms such as Microsoft Azure, Google Cloud, and Amazon Web Services must support AI workloads across thousands of customers, industries, and use cases.

To accomplish this, their architectures emphasize:

- massive scalability

- distributed computing

- automated model training pipelines

- high-performance data processing

- platform-based service delivery

The same architectures also power these companies' own products, including:

- recommendation systems

- search engines

- advertising platforms

- real-time personalization systems

- large-scale machine learning training pipelines

For Big Tech companies, AI is deeply integrated into their digital platforms and infrastructure.

Enterprises can benefit from many of these architectural patterns, particularly those that improve scalability, modularity, and data accessibility.

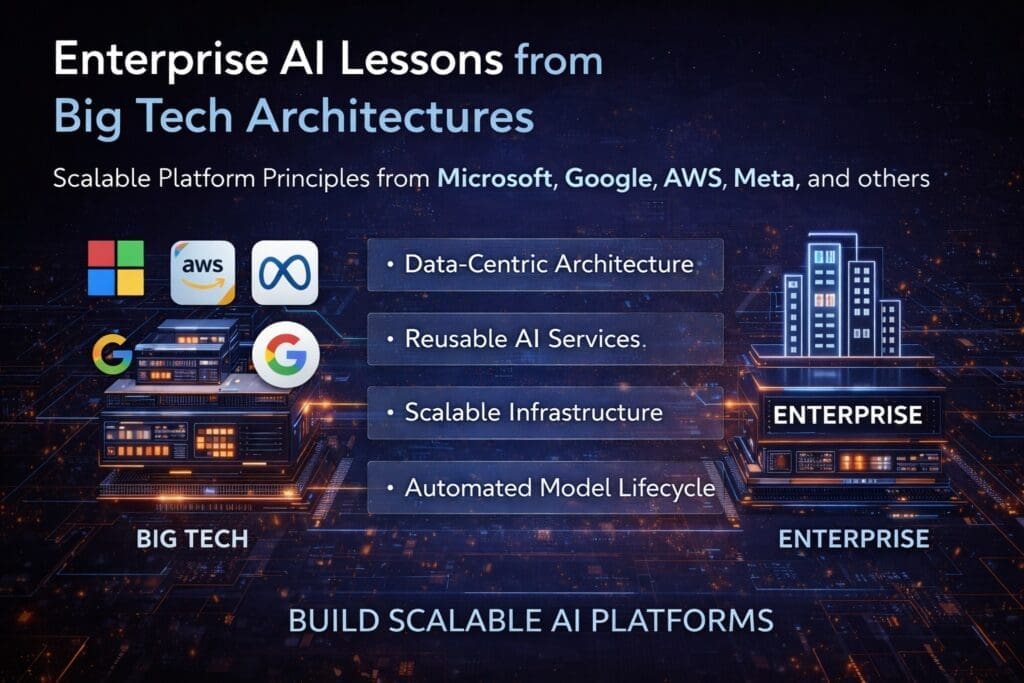

Architectural Patterns Enterprises Should Adopt

Although enterprise organizations operate at a different scale than technology platforms, several architectural principles from Big Tech AI systems translate well to enterprise environments.

1. Data-Centric Architecture

Big Tech AI architectures treat data as the central asset of the entire system.

Rather than building AI systems around individual models, these architectures emphasize:

- robust data pipelines

- centralized data platforms

- consistent data governance

- reusable data services

This approach enables organizations to reuse data across multiple applications and AI systems.

For enterprises, adopting a data-centric architecture improves:

- analytics capabilities

- machine learning model performance

- operational insights

- cross-department collaboration

Without strong data infrastructure, AI initiatives often stall due to poor data quality and fragmented systems.
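As a rough illustration of what consistent data governance can look like in practice, the sketch below checks incoming records against a shared set of field rules before any model or report consumes them. The names `FieldRule`, `validate_record`, and `CUSTOMER_RULES`, along with the customer fields, are hypothetical and chosen only for this example; a real data platform would typically rely on a dedicated schema or data quality tool.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class FieldRule:
    """One governance rule for a field in a shared dataset (hypothetical)."""
    name: str
    expected_type: type
    required: bool = True


def validate_record(record: dict[str, Any], rules: list[FieldRule]) -> list[str]:
    """Return human-readable problems; an empty list means the record passes."""
    problems: list[str] = []
    for rule in rules:
        value = record.get(rule.name)
        if value is None:
            # Missing values only matter for required fields.
            if rule.required:
                problems.append(f"missing required field: {rule.name}")
            continue
        if not isinstance(value, rule.expected_type):
            problems.append(
                f"{rule.name}: expected {rule.expected_type.__name__}, "
                f"got {type(value).__name__}"
            )
    return problems


# Hypothetical rules for a customer dataset reused by several teams.
CUSTOMER_RULES = [
    FieldRule("customer_id", str),
    FieldRule("lifetime_value", float),
    FieldRule("segment", str, required=False),
]

if __name__ == "__main__":
    print(validate_record({"customer_id": "C-1001", "lifetime_value": 1250.0}, CUSTOMER_RULES))
    print(validate_record({"lifetime_value": "unknown"}, CUSTOMER_RULES))
```

Because the rules live in one place, every pipeline that reads the dataset applies the same checks, which is the point of treating data rather than any single model as the central asset.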

2. Modular AI Services

Big Tech platforms often expose AI capabilities as modular services.

Examples include:

- language processing APIs

- recommendation services

- prediction services

- anomaly detection services

- computer vision services

These capabilities can be integrated into multiple applications across the organization.

Enterprises can benefit from this model by developing reusable AI services rather than embedding AI logic directly inside individual applications.

This improves maintainability and allows AI capabilities to scale across departments.
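One way to picture a reusable AI service is a small shared interface that applications call instead of embedding model logic themselves. The sketch below is a minimal, in-process illustration under that assumption. `ScoringService`, `ThresholdAnomalyDetector`, and `ServiceRegistry` are hypothetical names, and the "model" is a simple threshold standing in for a real one. In production the registry would more likely be a network API or service catalog.

```python
from typing import Protocol


class ScoringService(Protocol):
    """Contract that every reusable AI service implements (hypothetical)."""

    def score(self, features: dict[str, float]) -> float: ...


class ThresholdAnomalyDetector:
    """Stand-in for a real model: flags any feature value above a fixed limit."""

    def __init__(self, limit: float) -> None:
        self.limit = limit

    def score(self, features: dict[str, float]) -> float:
        return 1.0 if any(v > self.limit for v in features.values()) else 0.0


class ServiceRegistry:
    """Lets applications look up a shared service by name."""

    def __init__(self) -> None:
        self._services: dict[str, ScoringService] = {}

    def register(self, name: str, service: ScoringService) -> None:
        self._services[name] = service

    def get(self, name: str) -> ScoringService:
        if name not in self._services:
            raise KeyError(f"no AI service registered under {name!r}")
        return self._services[name]


registry = ServiceRegistry()
registry.register("anomaly-detection", ThresholdAnomalyDetector(limit=100.0))

# Two different applications reuse the same service through the registry.
billing_flag = registry.get("anomaly-detection").score({"invoice_total": 250.0})
sensor_flag = registry.get("anomaly-detection").score({"temperature": 21.5})
```

Swapping the threshold detector for a trained model would not require changes in the billing or sensor applications, since both depend only on the `score` contract.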

3. Scalable Infrastructure Design

Technology companies build AI architectures that scale automatically as workloads increase.

This often involves:

- distributed computing environments

- containerized services

- scalable storage systems

- automated resource allocation

Enterprise organizations may not require hyperscale infrastructure, but they should design systems that can grow as AI adoption increases.

A scalable architecture prevents early design decisions from limiting future growth.
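Automated resource allocation can start very simply. The sketch below shows one possible sizing rule: divide the current load by the load a single service instance can handle, round up, and keep the result within fixed bounds. The function name `desired_replicas`, its parameters, and the default limits are assumptions made for illustration, not the behavior of any particular cloud platform or orchestrator.

```python
import math


def desired_replicas(
    current_load: float,
    target_load_per_replica: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
) -> int:
    """Return how many service instances to run for the current load.

    current_load and target_load_per_replica must use the same unit,
    for example inference requests per second.
    """
    if target_load_per_replica <= 0:
        raise ValueError("target_load_per_replica must be positive")
    needed = math.ceil(current_load / target_load_per_replica)
    # Clamp so the system never scales to zero or beyond its budget.
    return max(min_replicas, min(max_replicas, needed))


if __name__ == "__main__":
    for load in (0, 450, 5000):
        print(load, desired_replicas(load, target_load_per_replica=100))
```

The upper bound matters for enterprises in particular: it keeps a growing workload from silently turning into hyperscale-style cost.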

4. Automated Model Lifecycle Management

Big Tech AI systems rely heavily on automation to manage machine learning models.

Typical lifecycle components include:

- model training pipelines

- automated evaluation

- model versioning

- deployment automation

- monitoring and retraining

This process is often referred to as MLOps.

Enterprises deploying multiple AI models should implement structured model lifecycle management to ensure:

- reproducibility

- reliability

- consistent model performance

Automation reduces operational risk and improves development efficiency.
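To make model versioning and automated evaluation more concrete, the sketch below keeps a list of model versions and promotes a candidate to production only if its evaluation metric beats the current production model. `ModelVersion`, `ModelRegistry`, and `promote_if_better` are hypothetical names, and accuracy is used as the metric purely as an assumption. Dedicated MLOps tooling would add artifact storage, audit trails, and monitoring on top of this idea.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class ModelVersion:
    version: int
    accuracy: float  # metric on a held-out evaluation set; higher is better


@dataclass
class ModelRegistry:
    """Tracks every trained version and which one currently serves production."""
    versions: list[ModelVersion] = field(default_factory=list)
    production: Optional[ModelVersion] = None

    def register(self, accuracy: float) -> ModelVersion:
        """Record a newly trained model and assign it the next version number."""
        candidate = ModelVersion(version=len(self.versions) + 1, accuracy=accuracy)
        self.versions.append(candidate)
        return candidate

    def promote_if_better(self, candidate: ModelVersion, min_gain: float = 0.0) -> bool:
        """Deploy the candidate only if it beats production by more than min_gain."""
        current = self.production
        if current is None or candidate.accuracy > current.accuracy + min_gain:
            self.production = candidate
            return True
        return False


if __name__ == "__main__":
    registry = ModelRegistry()
    for accuracy in (0.81, 0.79, 0.85):
        candidate = registry.register(accuracy)
        promoted = registry.promote_if_better(candidate)
        print(f"v{candidate.version} accuracy={accuracy} promoted={promoted}")
```

Because rejected versions stay in the registry, earlier results remain reproducible and a rollback target is always available.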

5. Platform Thinking Instead of Project Thinking

One of the most important lessons from Big Tech AI architecture is the concept of platform thinking.

Technology companies do not treat AI as isolated projects.

Instead, they build AI platforms that support many applications across the organization.

This approach allows:

- shared infrastructure

- reusable models

- centralized governance

- faster innovation

Enterprise organizations that adopt platform thinking often see greater long-term value from their AI investments.

Where Big Tech Architectures May Create Challenges for Enterprises

While Big Tech architectures offer valuable insights, some aspects of these systems can create difficulties when applied directly to enterprise environments.

1. Hyperscale Infrastructure Assumptions

Many Big Tech architectures assume massive infrastructure resources.

These systems may rely on:

- thousands of compute nodes

- distributed data centers

- highly specialized engineering teams

Most enterprise environments operate at a much smaller scale.

Attempting to replicate hyperscale infrastructure can create unnecessary complexity and cost.

2. Extremely Specialized Engineering Roles

Technology platforms often employ large teams of specialists, including:

- machine learning engineers

- platform engineers

- data infrastructure engineers

- AI research scientists

Many enterprise organizations rely on smaller, cross-functional teams.

Architectures should be designed so they can be operated and maintained by the available workforce.

3. Platform-Level Complexity

Big Tech AI systems often involve complex internal platforms that take years to develop.

Enterprises should focus on practical architecture patterns rather than attempting to replicate the entire platform ecosystem.

Starting with manageable systems allows organizations to scale gradually.

When Big Tech AI Architecture Works Best for Enterprises

Big Tech architectural principles work particularly well in enterprises that:

- operate large digital platforms

- manage extensive data ecosystems

- deploy AI across many applications

- have mature engineering teams

Examples include:

- large financial institutions

- global retail platforms

- digital marketplaces

- telecommunications providers

- technology-driven enterprises

In these environments, platform-based AI architecture can significantly improve scalability and operational efficiency.

Applying Big Tech Lessons in Enterprise AI Systems

Enterprise organizations can adopt the most useful aspects of Big Tech architecture without copying the entire system.

Key principles include:

- building strong data infrastructure

- creating reusable AI services

- designing scalable systems

- implementing structured model lifecycle management

- adopting platform-oriented thinking

These principles allow organizations to build AI systems that grow alongside the business.

Enterprise AI Success Comes from Selective Adoption

Many organizations assume that successful AI architectures must mirror those used by major technology companies.

In reality, success comes from selective adoption.

Enterprises should extract the architectural patterns that support their goals while ignoring components designed for hyperscale environments.

This balanced approach allows organizations to benefit from Big Tech innovation without inheriting unnecessary complexity.

Conclusion

Big Tech companies have developed some of the most advanced AI architectures in the world.

Their systems demonstrate how artificial intelligence can operate at enormous scale and power global digital platforms.

For enterprise organizations, these architectures provide valuable insights into:

- scalable data systems

- modular AI services

- automated model lifecycle management

- platform-oriented design

However, enterprises should adopt these patterns thoughtfully.

The goal is not to replicate hyperscale technology platforms but to apply the principles that align with enterprise constraints.

When organizations adopt these lessons strategically, they can build AI systems that are scalable, maintainable, and capable of supporting long-term innovation.

Frequently Asked Questions

What are Big Tech AI reference architectures?

Big Tech AI reference architectures are architectural frameworks developed by companies like Microsoft, Google, Amazon, and Meta to support large-scale artificial intelligence systems. These architectures typically include scalable data platforms, automated machine learning pipelines, modular AI services, and infrastructure designed to handle massive datasets and global workloads.

Why do enterprises look to Big Tech for AI architecture guidance?

Enterprises often look to Big Tech because these companies operate some of the most advanced AI systems in the world. Their architectures demonstrate how AI can scale across large platforms, manage complex data ecosystems, and support thousands or millions of users simultaneously.

Should enterprises copy Big Tech AI architectures directly?

Enterprises should not copy Big Tech architectures directly. These systems are designed for hyperscale environments with massive infrastructure and specialized engineering teams. Instead, enterprises should adopt the architectural principles that align with their operational constraints and business goals.

What architectural patterns from Big Tech work well for enterprises?

Several patterns transfer well to enterprise environments, including:

- data-centric architecture

- modular AI services

- scalable infrastructure design

- automated model lifecycle management (MLOps)

- platform-based AI capabilities

These approaches help organizations build sustainable AI systems that can grow over time.

What is a data-centric AI architecture?

A data-centric AI architecture focuses on building strong data infrastructure as the foundation for AI systems. Instead of designing systems around individual models, organizations prioritize reliable data pipelines, governance frameworks, and centralized data platforms to ensure consistent and high-quality data for machine learning applications.

What is MLOps and why is it important for enterprises?

MLOps (Machine Learning Operations) is a framework for managing the lifecycle of machine learning models. It includes processes for training, testing, deploying, monitoring, and updating models.

For enterprises, MLOps helps ensure that AI systems remain reliable and auditable and that they maintain consistent performance over time.

What does “platform thinking” mean in AI architecture?

Platform thinking means building shared AI infrastructure and services that support multiple applications across an organization. Instead of creating isolated AI projects, companies develop reusable components such as prediction services, data pipelines, and model management tools that can be used by many teams.

Why can hyperscale AI architectures create problems for enterprises?

Hyperscale architectures often assume enormous infrastructure resources, specialized engineering teams, and extremely large datasets. Enterprises that attempt to replicate these environments may introduce unnecessary complexity and operational costs.

Most organizations benefit from simpler architectures that can scale gradually.

How can enterprises adopt Big Tech AI principles without adding complexity?

Enterprises can selectively adopt Big Tech principles by focusing on:

- strong data infrastructure

- reusable AI services

- scalable but manageable infrastructure

- structured model lifecycle management

- platform-oriented thinking

This allows organizations to benefit from proven architectural ideas while maintaining practical systems.

What is the biggest mistake enterprises make when adopting Big Tech AI patterns?

The most common mistake is attempting to replicate the full architecture used by hyperscale technology companies. Successful enterprise AI initiatives focus on adapting the principles behind these systems rather than copying the entire architecture.
