AI projects rarely fail because of a lack of tools.

They fail because of a lack of structure.

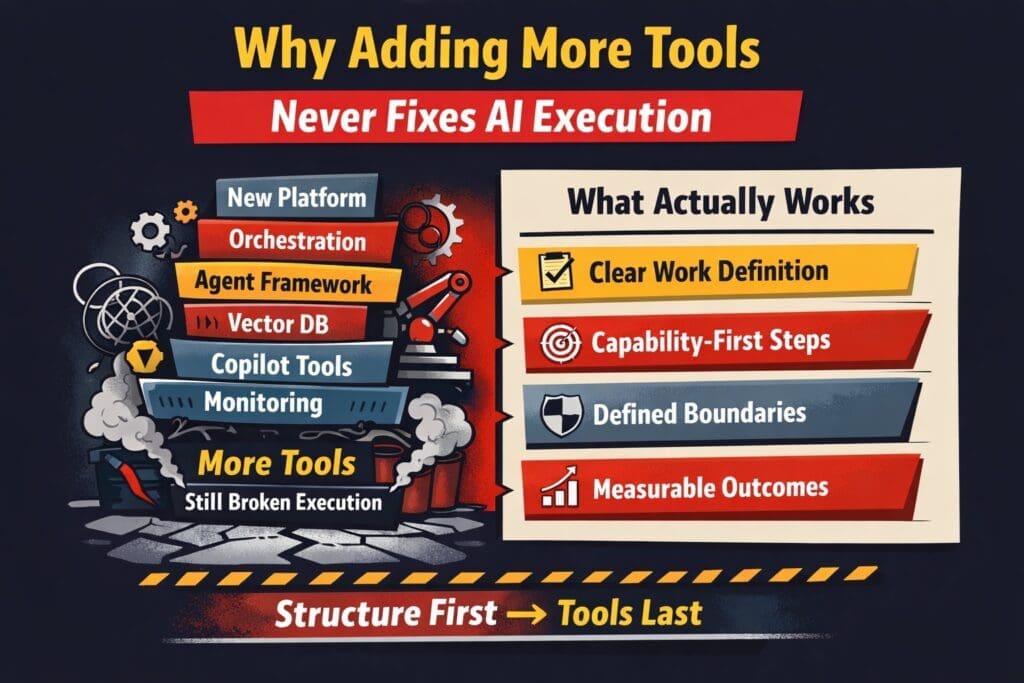

When execution stalls, most organizations respond predictably:

- Add a new AI platform

- Add orchestration tooling

- Add monitoring software

- Add vector databases

- Add agent frameworks

- Add Copilot-style layers

The stack grows.

Execution does not.

If your AI initiative isn’t delivering measurable business capability, adding more tools will not fix it. It will amplify the confusion.

Let’s break down why.

The Tool Reflex: Why Organizations Default to Buying More

When AI execution struggles, leadership sees one of three symptoms:

- Output quality is inconsistent

- Delivery timelines slip

- Teams appear blocked

The assumption becomes:

“We must be missing something in our stack.”

This is a natural reaction. It feels concrete. Buying a tool is action.

But AI execution failure is rarely a tooling gap. It is almost always one of these:

- Undefined work boundaries

- Ambiguous decision authority

- No input/output contracts

- No measurable success criteria

- No operational testing model

Tools cannot compensate for structural ambiguity.

They simply automate it.

The Hidden Cost of Tool Accumulation

Every new AI tool introduces:

- New abstractions

- New integration points

- New configuration layers

- New failure modes

- New vendor dependencies

If the underlying work is poorly defined, the complexity multiplies.

In enterprise .NET environments especially, you’ve already seen this pattern:

A poorly specified feature wrapped in more frameworks does not become clearer.

It becomes harder to debug.

The same rule applies to AI.

Why Tools Feel Like Progress (But Aren’t)

Tools create visible movement:

- New dashboards

- New demos

- New vendor presentations

- New architecture diagrams

This creates executive confidence.

But execution confidence comes from something different:

- Deterministic boundaries

- Clear ownership

- Testable capability slices

- Measurable output consistency

Those are structural artifacts — not software purchases.

The Real Failure Mode: Undefined Capability

Most AI initiatives start with a goal:

“We want AI to help customer service.”

“We want AI to improve internal productivity.”

“We want AI to assist analysts.”

These are not capabilities.

They are aspirations.

A capability must be defined as:

- A bounded task

- With defined inputs

- With expected outputs

- With measurable success criteria

- With clear exception handling

If this layer is missing, tools cannot compensate.

They only execute confusion faster.
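One way to make the difference between an aspiration and a capability concrete is to write the definition down as a typed record and check which parts are still empty. The sketch below is illustrative only: `CapabilitySpec`, its field names, and the sample email-triage task are assumptions, not part of any framework. It is written in Python for brevity, and the same shape could be expressed in whatever language your stack already uses.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class CapabilitySpec:
    """Hypothetical record describing one bounded AI capability."""
    task: str                        # the bounded task, stated in one sentence
    inputs: tuple[str, ...]          # named inputs the task requires
    outputs: tuple[str, ...]         # named fields the task must return
    success_criteria: dict[str, float] = field(default_factory=dict)  # metric -> target
    exception_rule: str = ""         # what happens when the task cannot complete

    def missing_parts(self) -> list[str]:
        """Return the names of the parts that are still undefined."""
        parts = {
            "task": self.task.strip(),
            "inputs": self.inputs,
            "outputs": self.outputs,
            "success_criteria": self.success_criteria,
            "exception_rule": self.exception_rule.strip(),
        }
        return [name for name, value in parts.items() if not value]


# An aspiration: it has a sentence, but nothing else is defined.
aspiration = CapabilitySpec(
    task="We want AI to help customer service.",
    inputs=(),
    outputs=(),
)

# A capability: every part of the definition is filled in (sample values are assumed).
capability = CapabilitySpec(
    task="Classify an inbound support email into one of five queues.",
    inputs=("email_subject", "email_body"),
    outputs=("queue", "confidence"),
    success_criteria={"accuracy": 0.95},
    exception_rule="Route to the general queue and flag for human triage.",
)

print(aspiration.missing_parts())  # everything except the task sentence
print(capability.missing_parts())  # empty list
```

If `missing_parts()` returns anything, the work is not yet defined well enough for a tool to help.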

What Actually Fixes AI Execution

If tools aren’t the answer, what is?

1. Explicit Work Specification

Before choosing tooling, define:

- What decision is being automated?

- What data is required?

- What output format is mandatory?

- What confidence threshold is acceptable?

- What triggers human review?

If you cannot answer these clearly, do not add tools.

Refine the work definition first.
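The five questions above can be captured as data and enforced in a few lines, with no new tooling. The following is a minimal sketch under stated assumptions: `WorkSpec`, `route`, the expense-claim example, and the 0.9 threshold are all hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkSpec:
    """Hypothetical answers to the specification questions."""
    decision: str                      # what decision is being automated
    required_fields: frozenset[str]    # what data is required
    allowed_outputs: frozenset[str]    # what output values are acceptable
    min_confidence: float              # what confidence threshold is acceptable


def route(spec: WorkSpec, record: dict, label: str, confidence: float) -> tuple[str, str]:
    """Decide whether an AI result can be used directly or needs human review."""
    missing = spec.required_fields - set(record)
    if missing:
        return "human_review", f"missing input: {sorted(missing)}"
    if label not in spec.allowed_outputs:
        return "human_review", f"unexpected output: {label!r}"
    if confidence < spec.min_confidence:
        return "human_review", "confidence below threshold"
    return "auto", "ok"


spec = WorkSpec(
    decision="Approve or escalate an expense claim",
    required_fields=frozenset({"amount", "category"}),
    allowed_outputs=frozenset({"approve", "escalate"}),
    min_confidence=0.9,  # assumed value; set it from your own risk tolerance
)

complete = {"amount": 120, "category": "travel"}
print(route(spec, complete, "approve", 0.97))         # used automatically
print(route(spec, {"amount": 120}, "approve", 0.97))  # missing data triggers review
print(route(spec, complete, "approve", 0.60))         # low confidence triggers review
```

Each branch that returns `human_review` corresponds to one of the questions. If a question has no answer yet, the matching branch cannot be written.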

2. Capability-First Architecture

Large AI platforms promise end-to-end transformation.

Execution succeeds through small, proven capabilities.

Instead of:

“Deploy enterprise AI.”

Start with:

- A single report generation workflow

- A bounded document classification task

- A structured summarization capability

- A narrow internal Copilot-style assistant

Prove it. Measure it. Harden it. Then expand.

Scale amplifies structure.

If the structure is weak, scale amplifies failure.
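"Prove it. Measure it." can start as a small evaluation harness over labeled examples. In the sketch below, `keyword_classifier` is a stand-in for a real model call so that the example runs offline. The examples and the 0.9 target are assumptions made up for illustration, not measured results.

```python
from typing import Callable


def evaluate(classify: Callable[[str], str], labeled: list[tuple[str, str]]) -> float:
    """Return the fraction of labeled examples the capability gets right."""
    if not labeled:
        raise ValueError("need at least one labeled example")
    correct = sum(1 for text, expected in labeled if classify(text) == expected)
    return correct / len(labeled)


def keyword_classifier(text: str) -> str:
    """Hypothetical stand-in for a model call; replace with the real capability."""
    return "invoice" if "invoice" in text.lower() else "other"


# Hand-labeled examples for one bounded document classification task (made up).
EXAMPLES = [
    ("Invoice #1042 attached", "invoice"),
    ("Lunch on Friday?", "other"),
    ("Please pay the attached bill", "invoice"),
    ("INVOICE overdue", "invoice"),
]

accuracy = evaluate(keyword_classifier, EXAMPLES)
ready_to_expand = accuracy >= 0.9  # assumed target; take it from your success criteria

print(accuracy, ready_to_expand)
```

The stand-in misses the third example, so it falls short of the target and the capability is not ready to expand. That is the kind of signal a demo or a dashboard does not give you.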

3. Deterministic Core + AI Layer Separation

In enterprise systems, especially within Microsoft stacks:

- Domain logic must remain deterministic

- AI should sit at defined integration boundaries

- AI outputs must be validated before system mutation

If AI logic leaks into domain rules, failures stop being reproducible and debugging becomes far harder.

More tools won’t fix that.

Architectural discipline will.
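A minimal sketch of that boundary, assuming the AI layer returns raw text that is supposed to be a JSON object: the domain rule (`ALLOWED_STATUSES`) stays deterministic, and the ticket is mutated only after the output passes validation. The function names and the ticket shape are hypothetical.

```python
import json

ALLOWED_STATUSES = {"open", "pending", "closed"}  # deterministic domain rule


def parse_ai_suggestion(raw: str) -> dict:
    """Validate raw model text at the boundary; raise ValueError if unusable."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    status = data.get("status")
    if not isinstance(status, str) or status not in ALLOWED_STATUSES:
        raise ValueError(f"status {status!r} is not allowed")
    return {"status": status}  # only validated fields cross the boundary


def apply_status(ticket: dict, raw_ai_output: str) -> bool:
    """Mutate the ticket only when the AI output passes validation."""
    try:
        suggestion = parse_ai_suggestion(raw_ai_output)
    except ValueError:
        return False  # the ticket is left untouched
    ticket["status"] = suggestion["status"]
    return True


ticket = {"id": 1, "status": "open"}
print(apply_status(ticket, '{"status": "closed"}'), ticket["status"])
print(apply_status(ticket, '{"status": "deleted"}'), ticket["status"])
print(apply_status(ticket, "Sure, closing it now."), ticket["status"])
```

The second and third calls are rejected at the boundary, so an invalid or free-text response never reaches domain state.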

4. Execution Readiness Criteria

Before scaling AI, confirm:

- Is the workflow documented?

- Are failure modes known?

- Is logging implemented?

- Are outputs testable?

- Is rollback possible?

If not, you are not execution-ready.

Buying tools before readiness increases blast radius.
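The readiness questions can be kept as an explicit gate rather than a slide. This is a small sketch only. The check names mirror the list above, and the function names are assumptions.

```python
READINESS_CHECKS = (
    "workflow_documented",
    "failure_modes_known",
    "logging_implemented",
    "outputs_testable",
    "rollback_possible",
)


def readiness_gaps(answers: dict[str, bool]) -> list[str]:
    """Return the checks that are unanswered or answered 'no', in list order."""
    return [check for check in READINESS_CHECKS if not answers.get(check, False)]


def is_execution_ready(answers: dict[str, bool]) -> bool:
    """Ready only when every check has an explicit 'yes'."""
    return not readiness_gaps(answers)


answers = {
    "workflow_documented": True,
    "failure_modes_known": True,
    "logging_implemented": True,
    "outputs_testable": False,
    # rollback_possible was never answered, so it counts as a gap
}

print(readiness_gaps(answers))
print(is_execution_ready(answers))
```

Treating an unanswered question as "no" is a deliberate choice here: a check nobody has looked at is not a check that passed.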

5. Measurement Over Novelty

AI maturity is not measured by:

- Number of tools

- Number of models

- Number of vendors

It is measured by:

- Repeatable performance

- Reduced operational friction

- Measurable time savings

- Controlled error rates

- Business-aligned outcomes

Tools don’t create those.

Execution discipline does.
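These measures can be computed from ordinary run logs. The sketch below assumes a hypothetical `Run` record per execution. The field names and sample values are made up for illustration, and "consistency" is defined here as the share of inputs whose repeated runs all produced the same output.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Run:
    """Hypothetical log record for one execution of a capability."""
    input_id: str
    output: str
    ok: bool              # did the output pass validation or review?
    minutes_saved: float  # estimated against the manual baseline


def summarize(runs: list[Run]) -> dict[str, float]:
    """Compute error rate, output consistency, and average time saved."""
    if not runs:
        raise ValueError("need at least one run")
    outputs_by_input: dict[str, set[str]] = {}
    for run in runs:
        outputs_by_input.setdefault(run.input_id, set()).add(run.output)
    stable_inputs = sum(1 for outputs in outputs_by_input.values() if len(outputs) == 1)
    return {
        "error_rate": sum(1 for run in runs if not run.ok) / len(runs),
        "consistency": stable_inputs / len(outputs_by_input),
        "avg_minutes_saved": mean(run.minutes_saved for run in runs),
    }


runs = [
    Run("claim-a", "approve", True, 4.0),
    Run("claim-a", "approve", True, 6.0),
    Run("claim-b", "approve", True, 5.0),
    Run("claim-b", "escalate", False, 5.0),  # same input, different output
]

summary = summarize(runs)
print(summary)
```

None of these numbers depends on how many tools, models, or vendors are involved, only on whether runs are logged in a form that can be counted.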

Why This Matters in Microsoft-Centric Enterprises

Organizations already invested in the following do not need more AI tooling to begin:

- Azure

- Power Platform

- .NET applications

- Microsoft Copilot

- Enterprise data platforms

They need:

- Work boundary clarity

- Structured integration points

- Explicit capability slicing

- Governance artifacts

- Test harnesses

You can build highly functional AI systems inside existing .NET environments without expanding your stack at all.

Execution breaks because structure breaks — not because tooling is insufficient.

The Tool Threshold Rule

Here is a practical guideline:

Only add a new tool when:

- The work is clearly defined

- The limitation is proven

- The performance gap is measurable

- The integration cost is justified

- The new tool solves a specific constraint

If you cannot articulate the constraint precisely, do not add the tool.

The Hard Truth

Most AI execution problems are not technology problems.

They are specification problems.

They are architecture problems.

They are ownership problems.

And those problems are less exciting than buying something new.

But they are fixable.

Final Perspective

If your AI initiative is struggling, ask this before approving another platform:

- Is the work explicitly defined?

- Are outputs measurable?

- Are responsibilities clear?

- Is the AI layer architecturally bounded?

If the answer is no, adding tools will not fix execution.

It will only make the failure harder to see.

Structure first.

Capabilities second.

Tools last.

That is what actually fixes AI execution.

Frequently Asked Questions

Why do AI projects fail even when organizations invest in more tools?

AI projects fail because tools do not solve structural problems. Most execution failures stem from unclear work definition, missing input/output contracts, undefined ownership, and lack of measurable success criteria. Adding more platforms increases complexity but does not fix ambiguity in execution.

Is tool sprawl a common issue in enterprise AI initiatives?

Yes. Tool sprawl is common in medium to large organizations where different teams adopt orchestration frameworks, agent platforms, monitoring tools, and AI services independently. Without a clearly defined execution architecture, these tools create integration friction and multiply failure points.

How can organizations determine whether they truly need a new AI tool?

A new tool should only be added when:

- The business capability is clearly defined

- The performance limitation is measurable

- The constraint cannot be solved within the current stack

- The integration cost is justified

- The tool addresses a specific architectural gap

If the constraint cannot be articulated precisely, the issue is likely structural rather than technical.

What does “capability-first AI architecture” mean?

Capability-first architecture means defining and proving small, bounded AI tasks before scaling. Each capability must include:

- Defined inputs

- Expected outputs

- Measurable performance criteria

- Exception handling rules

Instead of deploying enterprise-wide AI platforms, teams validate narrowly scoped capabilities and expand incrementally.

Why doesn’t adding an AI agent fix broken systems?

Agents orchestrate tasks — they do not repair poorly defined workflows. If the underlying business logic, data contracts, and decision boundaries are unclear, an agent simply coordinates flawed components more efficiently. This amplifies system instability rather than resolving it.

What is “execution readiness” in AI projects?

Execution readiness means the organization has:

- Documented workflows

- Explicit decision logic

- Testable AI outputs

- Logging and monitoring

- Clear rollback procedures

- Defined ownership

Without these elements, AI initiatives remain experimental rather than operational.

How does this apply to Microsoft and .NET environments?

Organizations using Azure, .NET, Power Platform, and Microsoft Copilot already have sufficient tooling to build AI capabilities. Success depends on architectural discipline — separating deterministic domain logic from AI integration layers and validating outputs before system mutation.

Execution problems in these environments are rarely stack limitations. They are work-definition failures.

What is the biggest misconception about scaling AI?

The biggest misconception is that scale fixes instability. In reality, scale amplifies whatever structure exists. If the foundation is weak, scaling increases inconsistency, cost, and operational risk.

Stable AI execution requires small, validated capabilities before enterprise rollout.

How can executives avoid the “tool reflex” when AI initiatives stall?

Executives should ask:

- Is the work explicitly defined?

- Are outputs measurable?

- Is ownership clear?

- Are failure modes known?

- Is performance testable?

If these questions cannot be answered confidently, purchasing another platform will not solve the problem.

What actually fixes AI execution failures?

AI execution improves through:

- Explicit work specification

- Capability-first design

- Deterministic core architecture

- Clear input/output contracts

- Measurable performance standards

- Governance and logging discipline

Structure first. Tools last.
