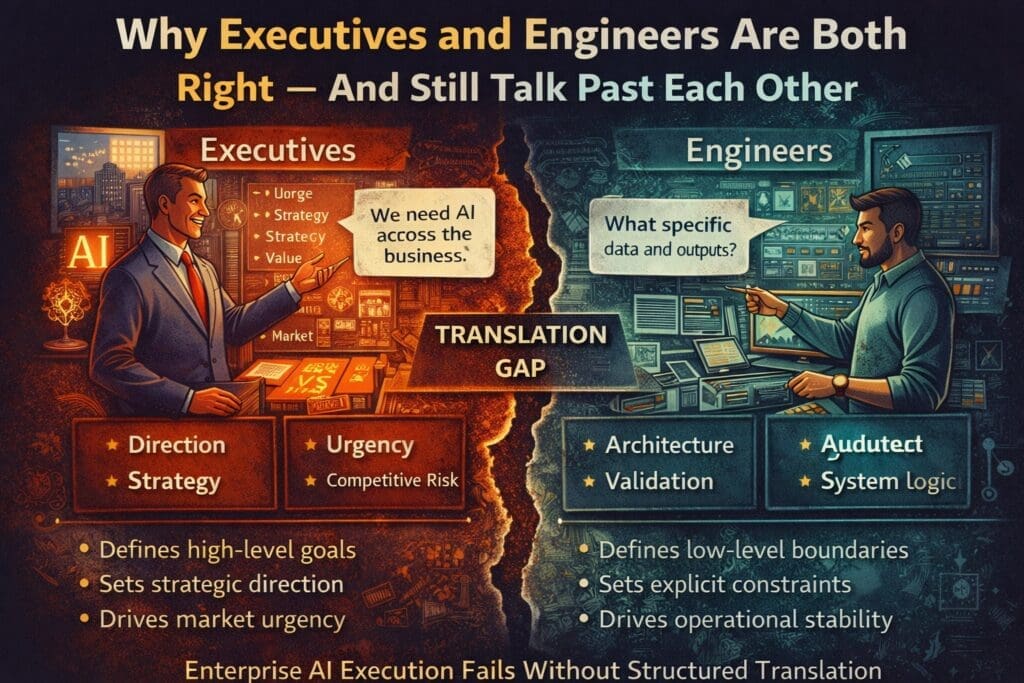

In most enterprise AI initiatives, there is tension.

Executives push for speed, transformation, and competitive urgency.

Engineers push for architecture, constraints, and risk control.

From the outside, it looks like disagreement.

In reality, both sides are usually correct.

They are just solving different problems.

And because they are solving different problems, they often talk past each other.

This disconnect is one of the primary reasons AI initiatives break down between strategy and execution.

Executives Are Solving a Market Problem

Executives operate at the level of:

- Competitive pressure

- Market positioning

- Revenue growth

- Cost control

- Strategic differentiation

When they say:

“We need AI across the organization.”

They are expressing:

- Urgency

- Strategic direction

- Resource commitment

- Organizational intent

They are not attempting to define:

- Input schemas

- Output contracts

- Exception paths

- Logging models

That is not their role.

Their problem is direction and timing.

Engineers Are Solving a Systems Problem

Engineers operate at the level of:

- Deterministic behavior

- Integration boundaries

- Data contracts

- Performance thresholds

- Failure handling

When they say:

“This isn’t defined enough.”

They are expressing:

- Risk exposure

- Architectural ambiguity

- Operational instability

They are not resisting innovation.

They are attempting to prevent fragility.

Their problem is execution integrity.

The Translation Gap

The disconnect occurs in the layer between strategic intent and operational definition.

Executives speak in:

- Outcomes

- Impact

- Transformation

Engineers speak in:

- Constraints

- Dependencies

- Edge cases

Both languages are valid.

Neither automatically translates into the other.

When this translation layer is missing, AI initiatives stall.

Why AI Amplifies the Gap

Traditional software projects already require translation between business and engineering.

AI magnifies this tension for three reasons:

1. AI Is Probabilistic

Most enterprise systems are built to behave deterministically: the same input produces the same output.

AI introduces variability.

Engineers need boundaries and validation layers.

Executives see opportunity in flexibility.

Both perspectives are correct.
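One way to picture the boundaries and validation layers engineers ask for is a small gate between the model and the downstream system. The sketch below is illustrative only: the `Prediction` type, the `ALLOWED_LABELS` set, and the 0.8 threshold are assumptions, not values from any particular product.

```python
from dataclasses import dataclass

# Hypothetical contract values; real ones would come from the capability definition.
ALLOWED_LABELS = {"invoice", "purchase_order", "receipt"}
MIN_CONFIDENCE = 0.8


@dataclass(frozen=True)
class Prediction:
    label: str
    confidence: float


def gate(prediction: Prediction) -> str:
    """Return 'accept' or 'human_review' for a single model output."""
    if prediction.label not in ALLOWED_LABELS:
        return "human_review"  # output falls outside the agreed contract
    if not 0.0 <= prediction.confidence <= 1.0:
        return "human_review"  # malformed score
    if prediction.confidence < MIN_CONFIDENCE:
        return "human_review"  # below the agreed threshold
    return "accept"
```

The model stays flexible, but the system around it only ever sees two well-defined outcomes, which is the property a deterministic workflow needs.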

2. AI Is Market-Visible

AI carries competitive urgency.

Boards and shareholders expect movement.

This increases pressure for visible progress.

Speed becomes prioritized over specification.

3. AI Is Conceptually Abstract

AI discussions often revolve around:

- Intelligence

- Automation

- Copilots

- Agents

These are high-level constructs.

Engineering requires:

- Defined capabilities

- Input/output schemas

- Measurable thresholds

Conceptual clarity does not equal operational clarity.

How the Miscommunication Manifests

The pattern is predictable.

Executive Statement:

“We need AI to improve productivity across departments.”

Engineering Interpretation:

“What specific task? What data? What format? What constraints?”

Without structured translation, the result is:

- Scope drift

- Rework

- Frustration

- Delayed delivery

- Quiet erosion of trust

Not because either side is wrong.

Because the middle layer is missing.

The Missing Middle Layer

Between strategy and code is a discipline many organizations underinvest in:

Explicit work definition.

This includes:

- Clear capability boundaries

- Defined decision points

- Required inputs

- Mandatory output formats

- Performance thresholds

- Human review triggers

- Logging and monitoring rules

This layer translates intent into structure.

Without it, executives believe progress is happening while engineers see instability forming.
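As a rough illustration, the elements of an explicit work definition can be captured in a single typed record that both sides can read. This is a minimal sketch, not a standard format: `CapabilityDefinition`, its field names, and the ticket-triage example are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CapabilityDefinition:
    name: str
    in_scope: list[str]               # capability boundaries
    decision_points: list[str]
    required_inputs: dict[str, str]   # field name -> type description
    output_format: dict[str, str]     # field name -> type description
    min_accuracy: float               # performance threshold
    human_review_triggers: list[str]
    logged_fields: list[str]          # logging and monitoring rules

    def gaps(self) -> list[str]:
        """Names of sections that are still empty."""
        return [
            field_name
            for field_name, value in vars(self).items()
            if isinstance(value, (str, list, dict)) and not value
        ]


# Hypothetical example: a ticket-triage capability with one section left blank.
triage = CapabilityDefinition(
    name="support_ticket_triage",
    in_scope=["classify inbound tickets by queue"],
    decision_points=["route automatically or escalate"],
    required_inputs={"subject": "string", "body": "string"},
    output_format={"queue": "string", "confidence": "float 0-1"},
    min_accuracy=0.9,
    human_review_triggers=[],
    logged_fields=["ticket_id", "queue", "confidence"],
)
print(triage.gaps())  # ['human_review_triggers']
```

An empty section is visible to both executives and engineers before any code is written, which is the point of the middle layer.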

Microsoft and .NET Context: Where It Becomes Visible

In enterprise Microsoft environments:

- Business logic is structured.

- Data flows are defined.

- Integrations are explicit.

AI must be inserted carefully:

- At defined integration points

- With validation layers

- With logging discipline

- With rollback mechanisms

If executive intent is not translated into bounded capabilities, AI becomes:

- Unpredictable in structured workflows

- Difficult to debug

- Hard to govern

The tension becomes operational.
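The insertion rules above can be sketched as a thin wrapper around the model call. Python is used here for brevity; in a .NET codebase the same shape would typically live in a service class. Everything named below (`classify`, `ai_call`, `rule_based`, the `ai_enabled` switch) is hypothetical, and "rollback" is simplified to falling back to the existing deterministic path.

```python
import logging
from typing import Callable

logger = logging.getLogger("ai_integration")


def classify(
    text: str,
    ai_call: Callable[[str], str],
    rule_based: Callable[[str], str],
    allowed: set[str],
    ai_enabled: bool = True,
) -> str:
    """Single integration point: validate AI output, log it, fall back if needed."""
    if not ai_enabled:
        # Rollback path: the switch is off, so behave exactly as before AI was added.
        return rule_based(text)
    try:
        result = ai_call(text)
    except Exception:
        logger.exception("AI call failed; using rule-based path")
        return rule_based(text)
    if result not in allowed:
        logger.warning("AI output %r rejected by validation", result)
        return rule_based(text)
    logger.info("AI output %r accepted", result)
    return result
```

Because every AI result passes through one function, there is one place to debug, one place to audit, and one switch to turn off.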

Why Both Sides Feel Frustrated

Executives feel:

- Engineering is slow.

- Teams are overcomplicating things.

- Innovation is being resisted.

Engineers feel:

- Requirements are vague.

- Risk is being ignored.

- Systems are being destabilized.

Both are observing real problems.

Neither sees the full structural gap.

Turning Conflict into Structure

The solution is not more meetings.

It is structured translation.

After any strategic AI directive, require:

- A written capability definition.

- A documented input schema.

- A required output format.

- Measurable performance criteria.

- Defined ownership.

- Monitoring and logging plans.

- Clear rollback procedures.

When these artifacts exist:

Executives gain confidence in measurable progress.

Engineers gain clarity in implementation boundaries.

Tension decreases because ambiguity decreases.
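The artifact list can also act as a simple review gate. The following is a sketch under assumptions: the artifact keys and the `missing_artifacts` helper are invented for illustration, and a real process would likely check document quality, not just presence.

```python
# Hypothetical keys mirroring the seven required artifacts listed above.
REQUIRED_ARTIFACTS = (
    "capability_definition",
    "input_schema",
    "output_format",
    "performance_criteria",
    "owner",
    "monitoring_plan",
    "rollback_procedure",
)


def missing_artifacts(submitted: dict[str, str]) -> list[str]:
    """Return the required artifacts that are absent or blank."""
    return [
        name
        for name in REQUIRED_ARTIFACTS
        if not submitted.get(name, "").strip()
    ]
```

A directive is ready for engineering when this list comes back empty; until then, the output is a concrete agenda for the next conversation rather than a vague objection.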

The Real Alignment Model

Healthy enterprise AI execution looks like this:

Executive Layer

→ Defines direction, urgency, and value.

Translation Layer

→ Converts intent into bounded, measurable capabilities.

Engineering Layer

→ Implements, tests, validates, and monitors.

If the translation layer is skipped, executives and engineers will continue talking past each other — even when they agree on the goal.

The Cost of Ignoring the Gap

When the disconnect persists:

- AI initiatives become politically fragile.

- Engineers disengage.

- Executives lose confidence in technical delivery.

- Tool purchases increase in an attempt to compensate.

The result is not explosive failure.

It is slow stagnation.

Final Perspective

Executives are right to demand urgency.

Engineers are right to demand structure.

AI fails when urgency bypasses structure.

It also fails when structure ignores urgency.

The goal is not to eliminate tension.

It is to formalize translation.

When intent becomes explicit capability, strategy becomes executable.

Without that step, both sides remain correct, and execution remains unstable.

Frequently Asked Questions

Why do executives and engineers disagree on AI initiatives?

Executives focus on strategic urgency, competitive positioning, and business outcomes. Engineers focus on system stability, architectural boundaries, and operational risk. Both perspectives are valid, but they operate at different layers of abstraction, which creates communication gaps.

What causes executives and engineers to “talk past each other”?

They often use different languages. Executives discuss impact, value, and transformation. Engineers discuss constraints, schemas, and validation rules. Without a structured translation layer, strategic intent does not become executable specification.

What is the “translation gap” in enterprise AI?

The translation gap is the missing middle layer between business strategy and technical implementation. It includes explicit work definition, input/output contracts, measurable thresholds, and governance controls. When this layer is absent, AI execution becomes unstable.

Why does AI amplify the disconnect between leadership and engineering?

AI introduces probabilistic behavior into deterministic enterprise systems. Executives see opportunity in flexibility and scale. Engineers see risk in variability and integration complexity. Without clear boundaries, this tension increases.

How can organizations reduce friction between executives and engineers?

Organizations can reduce friction by formalizing structured translation:

- Define bounded AI capabilities

- Document required inputs and outputs

- Establish measurable performance criteria

- Assign clear ownership

- Define monitoring and rollback procedures

When these artifacts exist, strategic intent becomes operationally executable.

Is the tension between urgency and structure avoidable?

No. Healthy organizations require both urgency and structure. The goal is not to eliminate tension but to formalize how strategic goals are translated into bounded, measurable technical capabilities.

How does this issue show up in Microsoft and .NET environments?

In enterprise Microsoft environments, systems are structured and deterministic. AI must integrate at clearly defined boundaries with validation and logging controls. Vague executive directives without technical definition can destabilize otherwise predictable systems.

What happens if the translation layer is ignored?

Ignoring the translation layer leads to:

- Scope drift

- Rework

- Misaligned expectations

- Tool sprawl

- Loss of executive confidence

- Engineering disengagement

AI initiatives may not fail immediately, but they often stagnate.

Why do executives feel engineers are slowing progress?

Engineers often require detailed constraints before implementation. This can appear slow, but it reduces downstream instability and rework. Without those constraints, early speed often turns into later delays.

What is the key to sustainable enterprise AI execution?

Sustainable AI execution requires:

- Clear strategic direction

- Explicit capability definition

- Defined architectural boundaries

- Measurable performance thresholds

- Governance and monitoring

When intent is translated into structured capability, strategy becomes executable.
