When AI initiatives fail, the explanation is almost always wrong.

“It’s too new.”

“The models aren’t mature.”

“The technology isn’t stable yet.”

That narrative is convenient.

It protects teams from a harder truth:

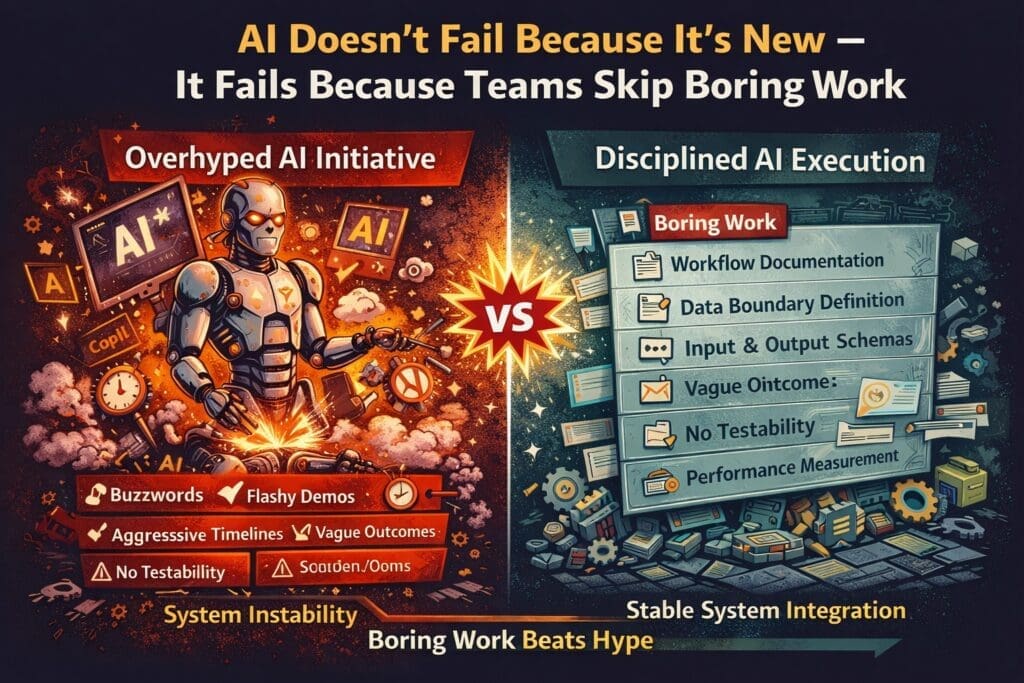

AI usually fails because organizations skip the boring work required to make it executable.

The failure is rarely innovation-related.

It is discipline-related.

The Myth of “New Technology Risk”

Every transformative technology is labeled unstable in its early years:

- Cloud computing

- Microservices

- DevOps

- Containerization

Yet adoptions of these technologies didn’t fail because the technologies were new.

They failed when teams implemented them without:

- Clear architecture

- Defined ownership

- Testing discipline

- Operational boundaries

AI is no different.

The models are powerful.

The APIs are mature.

The tooling is widely available.

What’s often missing is structure.

What Is the “Boring Work”?

The boring work is not glamorous.

It does not appear in vendor demos.

It does not generate keynote slides.

It includes:

- Workflow documentation

- Data boundary definition

- Input/output contracts

- Exception mapping

- Logging design

- Governance controls

- Test harness creation

- Performance measurement

This work feels incremental.

It feels procedural.

It feels unexciting.

But it is what turns AI from a demo into a system.

Why Teams Skip It

There are predictable reasons.

1. Executive Pressure for Speed

Leadership wants visible AI progress.

Boring work does not look like progress.

It produces:

- Documents

- Diagrams

- Definitions

- Specifications

Those artifacts feel slow compared to deploying a platform.

2. Vendor Messaging Emphasizes Capability, Not Discipline

AI platforms are marketed as:

- End-to-end transformation

- Intelligent automation ecosystems

- Enterprise copilots

Very little marketing discusses:

- Failure isolation

- Input validation

- Deterministic boundaries

- Exception escalation

Yet these are the foundations of durable AI execution.

3. Engineers Want to Build, Not Specify

Even technical teams prefer:

- Integrating APIs

- Building agent workflows

- Experimenting with orchestration

Specification work can feel like bureaucracy.

But skipping it creates fragile systems.

What Actually Breaks When Boring Work Is Skipped

When teams move straight to implementation:

Undefined Inputs

The AI receives inconsistent data.

Output quality fluctuates.

Debugging becomes guesswork.
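An input contract can be as small as one validation function at the boundary. The sketch below is a minimal illustration, not a prescribed design: the ticket-summarization capability, the field names, the allowed channels, and the length limit are all assumptions made up for the example.

```python
from dataclasses import dataclass

# Hypothetical input contract for a ticket-summarization capability.
ALLOWED_CHANNELS = {"email", "chat", "phone"}
MAX_BODY_CHARS = 4000


@dataclass(frozen=True)
class TicketInput:
    ticket_id: str
    channel: str
    body: str


def parse_ticket_input(raw: dict) -> TicketInput:
    """Return a validated TicketInput or raise ValueError listing every problem."""
    errors = []
    ticket_id = raw.get("ticket_id")
    channel = raw.get("channel")
    body = raw.get("body")

    if not isinstance(ticket_id, str) or not ticket_id.strip():
        errors.append("ticket_id must be a non-empty string")
    if not isinstance(channel, str) or channel not in ALLOWED_CHANNELS:
        errors.append(f"channel must be one of {sorted(ALLOWED_CHANNELS)}")
    if not isinstance(body, str) or not body.strip():
        errors.append("body must be a non-empty string")
    elif len(body) > MAX_BODY_CHARS:
        errors.append(f"body exceeds {MAX_BODY_CHARS} characters")

    if errors:
        # Reject before the model is ever called, so bad data is visible here.
        raise ValueError("; ".join(errors))
    return TicketInput(ticket_id.strip(), channel, body.strip())
```

Rejecting bad input before the model call means a quality problem can be traced to a specific rule instead of being guessed at from the output.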

Unbounded Outputs

The AI generates responses that:

- Don’t match required format

- Break downstream systems

- Introduce compliance risk

Without output contracts, systems become unstable.
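One way to picture an output contract is a function that sits between the model and everything downstream. This is a sketch under stated assumptions: the three fields, the allowed priorities, and the `ContractViolation` name are hypothetical, and the model is assumed to have been asked to reply with a JSON object.

```python
import json

# Hypothetical output contract: exactly these fields, with these types.
REQUIRED_FIELDS = {"category": str, "priority": str, "summary": str}
ALLOWED_PRIORITIES = {"low", "medium", "high"}
MAX_SUMMARY_CHARS = 500


class ContractViolation(Exception):
    """Raised when model output does not satisfy the output contract."""


def enforce_output_contract(model_text: str) -> dict:
    """Parse raw model text and return it only if it satisfies the contract."""
    try:
        data = json.loads(model_text)
    except json.JSONDecodeError as exc:
        raise ContractViolation(f"not valid JSON: {exc.msg}") from exc
    if not isinstance(data, dict):
        raise ContractViolation("top-level value must be a JSON object")
    if set(data) != set(REQUIRED_FIELDS):
        raise ContractViolation(
            f"expected fields {sorted(REQUIRED_FIELDS)}, got {sorted(data)}"
        )
    for name, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(data[name], expected_type):
            raise ContractViolation(f"{name} must be {expected_type.__name__}")
    if data["priority"] not in ALLOWED_PRIORITIES:
        raise ContractViolation(f"priority must be one of {sorted(ALLOWED_PRIORITIES)}")
    if len(data["summary"]) > MAX_SUMMARY_CHARS:
        raise ContractViolation(f"summary exceeds {MAX_SUMMARY_CHARS} characters")
    return data
```

Downstream code then only ever sees a dictionary that passed every check. What happens on a violation (retry, fallback, human review) is a separate decision the contract deliberately does not make.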

No Failure Model

When performance degrades:

- No thresholds are defined

- No escalation path exists

- No rollback strategy is documented

Small errors become systemic issues.
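A failure model does not need to be elaborate to be explicit. The following sketch assumes each AI call is scored as a success or failure (for example, by whether the output passed validation) and maps a rolling failure rate to a predefined action. The class name, window size, and threshold values are illustrative assumptions, not recommendations.

```python
from collections import deque
from enum import Enum


class Action(Enum):
    CONTINUE = "continue"
    ESCALATE = "escalate"  # notify the named owner
    ROLLBACK = "rollback"  # revert to the last known-good prompt or config


class FailureMonitor:
    """Tracks a rolling failure rate and maps it to a predefined action."""

    def __init__(self, window=50, min_samples=10, escalate_at=0.10, rollback_at=0.25):
        self.results = deque(maxlen=window)  # oldest results drop off automatically
        self.min_samples = min_samples
        self.escalate_at = escalate_at
        self.rollback_at = rollback_at

    def record(self, success: bool) -> Action:
        self.results.append(success)
        if len(self.results) < self.min_samples:
            return Action.CONTINUE  # too little data to judge
        failure_rate = self.results.count(False) / len(self.results)
        if failure_rate >= self.rollback_at:
            return Action.ROLLBACK
        if failure_rate >= self.escalate_at:
            return Action.ESCALATE
        return Action.CONTINUE
```

The thresholds are written down before anything goes wrong, so the response to degradation is a lookup rather than a debate.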

Blurred Ownership

When AI behavior is inconsistent:

- Is it a prompt issue?

- A data issue?

- A model issue?

- A workflow issue?

Without clear boundaries, accountability dissolves.

AI Is Not Fragile. Architecture Is.

AI models are probabilistic by design.

Enterprise systems are deterministic by necessity.

The integration between those two worlds requires:

- Explicit boundaries

- Validation layers

- Measurable criteria

- Controlled mutation (AI output changes system state only through validated paths)

If these are absent, instability is guaranteed.
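To make the boundary concrete, here is a minimal sketch in which the model only proposes a change and a deterministic function decides whether it is applied. The refund scenario, the `RefundProposal` fields, the auto-approve limit, and the assumption that the model reports a confidence score are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical business rule: refunds above this amount always need a human.
AUTO_APPROVE_LIMIT = 100.00


@dataclass(frozen=True)
class RefundProposal:
    order_id: str
    amount: float
    confidence: float  # assumed model-reported score in [0, 1]


def decide(proposal: RefundProposal, order_totals: dict, min_confidence: float = 0.9) -> str:
    """Deterministic gate: returns 'apply', 'review', or 'reject'."""
    total = order_totals.get(proposal.order_id)
    # Hard invariants: the order must exist and the refund must fit within it.
    if total is None or proposal.amount <= 0 or proposal.amount > total:
        return "reject"
    # Measurable criteria decide when a human must look at the proposal.
    if proposal.amount > AUTO_APPROVE_LIMIT or proposal.confidence < min_confidence:
        return "review"
    return "apply"
```

The probabilistic part never writes to the system directly. Given the same proposal and the same order data, `decide` always returns the same answer, which is what makes the behavior testable.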

The technology isn’t the problem.

The skipped architectural work is.

The Discipline Layer Most Teams Ignore

Successful AI execution in enterprise environments typically includes:

- Defined business capability

- Explicit input schema

- Required output format

- Measurable performance criteria

- Logging of all interactions

- Monitoring thresholds

- Human review triggers

- Version control for prompts and logic

None of this is glamorous.

All of it is necessary.
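Two items on that list, interaction logging and prompt versioning, can be sketched together. The example below assumes prompts are stored under versioned identifiers and builds one JSON log line per interaction. The registry contents, the identifier format, and the field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

# Hypothetical prompt registry; in practice the templates would live in version control.
PROMPTS = {
    "summarize_ticket@v3": "Summarize the support ticket below in two sentences:\n{body}",
}


def build_interaction_record(prompt_id: str, model_name: str, variables: dict, output: str) -> str:
    """Return one JSON log line tying an output to the exact prompt and model used."""
    template = PROMPTS[prompt_id]
    rendered = template.format(**variables)
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prompt_id": prompt_id,
        # Hash of the template detects edits that did not bump the version.
        "prompt_sha256": hashlib.sha256(template.encode("utf-8")).hexdigest(),
        "model": model_name,
        # Hash of the rendered input allows matching without storing raw text.
        "input_sha256": hashlib.sha256(rendered.encode("utf-8")).hexdigest(),
        "output": output,
    }
    return json.dumps(record, sort_keys=True)
```

With a record like this, the question "was it the prompt, the data, or the model?" becomes a comparison of fields rather than a guess. Hashing the input instead of storing it is one possible choice for keeping sensitive data out of logs; whether it fits depends on your audit and retention requirements.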

Why This Matters in Microsoft and .NET Environments

Organizations already operating within the following environments do not lack tooling:

- Azure

- .NET applications

- SQL-backed systems

- Power Platform

- Microsoft Copilot

They often lack:

- Boundary enforcement

- Deterministic core separation

- Explicit AI integration layers

- Structured validation

These are architectural responsibilities, not platform purchases.

Skipping them creates instability regardless of model quality.

The Scale Multiplier Effect

AI amplifies whatever structure exists.

If structure is:

- Clear → AI increases productivity.

- Weak → AI increases inconsistency.

Scale does not solve fragility.

It magnifies it.

This is why organizations that rush to enterprise-wide AI deployments often experience:

- Inconsistent output

- Eroding trust

- Quiet abandonment

The system wasn’t flawed because AI was new.

It was flawed because discipline was skipped.

What the Boring Work Actually Produces

Done correctly, the “boring work” produces:

- Predictable output ranges

- Clear failure handling

- Measurable ROI

- Reduced operational risk

- Executive confidence

- Engineering trust

It creates the conditions for AI to operate safely inside enterprise systems.

A Practical Reset Framework

If your AI initiative is unstable, pause expansion and ask:

- Is the capability clearly defined?

- Are inputs standardized?

- Is output format enforced?

- Are error thresholds defined?

- Is logging complete?

- Is ownership clear?

If any answer is unclear, the solution is not another tool.

It is finishing the boring work.

Final Perspective

AI is not failing because it is new.

It fails when teams:

- Skip specification

- Ignore boundaries

- Avoid governance

- Confuse speed with execution

- Scale before stabilizing

The irony is simple:

The less exciting the work feels,

the more likely the AI system is to succeed.

Discipline beats novelty.

Structure beats hype.

Boring work builds durable systems.

Frequently Asked Questions

Why do AI projects fail even when the technology is mature?

Most AI failures are not caused by immature models. They happen because organizations skip foundational work such as workflow definition, input/output contracts, performance measurement, and governance controls. Without these structural elements, AI systems produce inconsistent and unstable results.

What is the “boring work” required for successful AI execution?

The “boring work” includes:

- Workflow documentation

- Data boundary definition

- Input and output schema design

- Exception handling rules

- Logging and monitoring setup

- Performance measurement criteria

- Governance and compliance controls

These activities create the stability required for AI to operate reliably in production environments.

Why do teams avoid specification and documentation in AI initiatives?

Teams often skip specification work due to executive pressure for speed, vendor-driven hype, or the belief that AI experimentation should precede structure. However, skipping foundational discipline increases instability and slows long-term progress.

How does skipping boring work affect enterprise AI systems?

When foundational work is skipped, organizations experience:

- Inconsistent AI outputs

- Integration failures

- Debugging difficulties

- Compliance risks

- Eroded stakeholder trust

Small structural gaps expand rapidly when AI systems are scaled.

Is AI inherently unstable because it is probabilistic?

AI models are probabilistic by design, but enterprise systems are deterministic. Stability comes from clearly defined integration boundaries, validation layers, measurable thresholds, and controlled system mutation. Instability typically results from poor architecture, not from the probabilistic nature of AI itself.

How can organizations assess whether they have skipped critical groundwork?

Organizations should ask:

- Is the capability clearly defined?

- Are inputs standardized and validated?

- Is output format enforced?

- Are performance thresholds documented?

- Is logging comprehensive?

- Is ownership clearly assigned?

If any of these are unclear, foundational work may have been skipped.

Why is “boring work” especially important in Microsoft and .NET environments?

Enterprise environments built on Azure, .NET, SQL, and Power Platform rely on deterministic logic and structured integration. AI must be architecturally isolated, validated, and monitored within these systems. Skipping foundational structure introduces instability into otherwise predictable enterprise applications.

Can AI scale successfully without strong governance?

No. Scaling AI without governance increases operational risk. Effective governance includes logging, monitoring, auditability, rollback procedures, and version control for prompts and integration logic. Scale magnifies weaknesses in governance.

What is the biggest misconception about AI execution speed?

The biggest misconception is that faster deployment equals progress. In reality, speed without structure leads to rework, instability, and erosion of trust. Sustainable AI execution depends on disciplined preparation before expansion.

What is the most important factor in long-term AI success?

The most important factor is disciplined execution. Clear work definition, measurable outcomes, architectural boundaries, and governance controls determine whether AI systems remain stable, scalable, and trusted over time.
