Enterprise AI initiatives rarely fail in public.

They fail quietly — after months of meetings, workshops, slide decks, and “alignment sessions.”

Everyone agrees.

Everyone nods.

Everyone leaves the room believing progress has been made.

Then execution begins.

And everything unravels.

The uncomfortable truth is this:

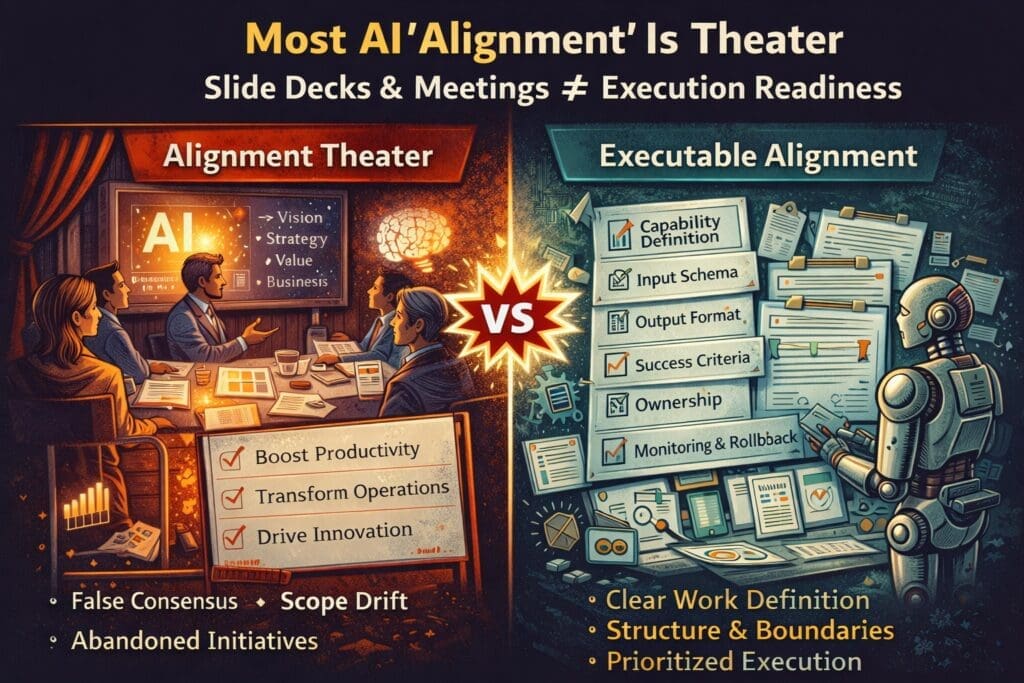

Most AI “alignment” is theater.

It looks productive.

It sounds strategic.

It produces slides.

But it does not produce executable structure.

What Alignment Is Supposed to Mean

In theory, AI alignment should produce:

- A shared definition of the business capability

- Clear ownership

- Defined inputs and outputs

- Measurable success criteria

- Explicit architectural boundaries

- Agreed failure thresholds

Alignment should reduce ambiguity.

In practice, it often preserves it.

The Symptoms of Alignment Theater

You can recognize performative alignment by these patterns:

1. Agreement Without Artifacts

The team agrees that:

- “AI will improve efficiency.”

- “We’ll automate decision support.”

- “We want enterprise copilots.”

But there are no documented:

- Input schemas

- Output contracts

- Decision rules

- Exception paths

Agreement without artifacts is not alignment.

It is consensus theater.

2. Strategy Without Work Definition

Executive alignment sessions often define:

- Vision

- Value statements

- Strategic themes

- Budget allocation

What they rarely define:

- The exact task AI will perform

- The boundaries of that task

- The measurable success conditions

Without work definition, execution becomes guesswork.

3. Engineers Interpreting Intent

After alignment meetings, engineering teams must translate:

“Improve productivity”

into

“Build feature X with constraints Y and performance Z.”

That translation layer is where most AI initiatives break.

Not because engineers are resistant.

Not because executives are unrealistic.

Because the work was never explicitly specified.

Why Alignment Theater Happens

There are structural reasons.

1. Alignment Feels Like Progress

Meetings create movement.

Slides create the appearance of clarity.

Budget approval creates momentum.

But none of those create executable architecture.

Alignment without specification is motion without traction.

2. AI Is Abstract by Nature

AI discussions often revolve around:

- Capabilities

- Potential

- Transformation

- Intelligence

These are conceptual topics.

Execution requires:

- Schemas

- Thresholds

- Constraints

- Failure handling

Conceptual alignment does not automatically translate into operational definition.

3. Nobody Owns the “Middle Layer”

Between strategy and code is a layer most organizations underinvest in:

Explicit work specification.

This includes:

- Task boundaries

- Decision logic

- Input/output contracts

- Performance metrics

- Governance rules

Without this layer, alignment collapses under execution pressure.

The Cost of Performative Alignment

When alignment is theater, the organization experiences:

- Scope drift

- Conflicting interpretations

- Frustrated engineers

- Executive confusion

- Inconsistent AI output

- Quiet abandonment of initiatives

The failure rarely explodes.

It erodes trust slowly.

Real Alignment Produces Constraints

True alignment produces artifacts.

For AI initiatives, that means documented answers to questions like:

- What specific decision is AI supporting or automating?

- What data inputs are required and validated?

- What output format is mandatory?

- What accuracy threshold is acceptable?

- When does human review trigger?

- What happens when performance degrades?

If these are not written down, alignment is incomplete.
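Writing the answers down can be as simple as a structured record that executives and engineers both sign off on. The sketch below is a minimal Python illustration under stated assumptions. The `CapabilitySpec` type, its field names, the invoice-triage example, and the threshold values are all hypothetical. It also assumes the model returns a confidence score between 0 and 1.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class CapabilitySpec:
    """Hypothetical record of the answers an alignment session should produce."""

    decision: str                     # the specific decision AI supports or automates
    required_inputs: tuple[str, ...]  # input fields that must be present and validated
    output_fields: tuple[str, ...]    # mandatory output format
    min_accuracy: float               # acceptable accuracy on agreed evaluation data
    review_below_confidence: float    # confidence below which human review triggers
    on_degradation: str               # agreed response when performance degrades


def needs_human_review(spec: CapabilitySpec, confidence: float) -> bool:
    """Route a prediction to a person when it falls below the agreed confidence threshold."""
    return confidence < spec.review_below_confidence


# Illustrative values only; real thresholds come out of the alignment work itself.
invoice_triage = CapabilitySpec(
    decision="Flag supplier invoices for manual audit",
    required_inputs=("invoice_id", "supplier_id", "amount", "currency"),
    output_fields=("invoice_id", "flag", "confidence", "reason"),
    min_accuracy=0.95,
    review_below_confidence=0.85,
    on_degradation="Disable auto-flagging and route every invoice to reviewers",
)

print(needs_human_review(invoice_triage, 0.72))  # True
print(needs_human_review(invoice_triage, 0.91))  # False
```

The data structure matters less than the fact that each field forces a decision. An empty or disputed field is an alignment question that has not yet been answered.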

Alignment in Microsoft and .NET Environments

In enterprise Microsoft-centric systems:

Domain logic is typically deterministic.

- Integration points must be defined.

- Outputs often feed structured workflows.

AI alignment must account for:

- Architectural boundaries

- Validation layers

- Logging and monitoring

- Rollback procedures

If alignment discussions stay at the conceptual level, they fail when they hit structured enterprise systems.
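A validation layer is one place where a written output contract becomes enforceable before AI output reaches a structured workflow. The minimal sketch below is written in Python for readability. In a .NET system, the equivalent check would usually live in C#. The contract fields and the `validate_output` helper are hypothetical, and the sketch assumes the model has been asked to return a JSON object.

```python
from __future__ import annotations

import json
from typing import Any, Callable


def _is_number(value: Any) -> bool:
    # bool is a subclass of int in Python, so exclude it explicitly
    return isinstance(value, (int, float)) and not isinstance(value, bool)


# Hypothetical output contract: field name -> check the value must pass
OUTPUT_CONTRACT: dict[str, Callable[[Any], bool]] = {
    "invoice_id": lambda v: isinstance(v, str) and v != "",
    "flag": lambda v: isinstance(v, bool),
    "confidence": lambda v: _is_number(v) and 0.0 <= v <= 1.0,
    "reason": lambda v: isinstance(v, str),
}


def validate_output(raw: str) -> tuple[dict | None, list[str]]:
    """Parse a model response and check it against the output contract.

    Returns (parsed_output, errors). A non-empty error list means the response
    should take the exception path instead of entering the downstream workflow.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return None, [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return None, ["response must be a JSON object"]

    errors = []
    for field, check in OUTPUT_CONTRACT.items():
        if field not in data:
            errors.append(f"missing field: {field}")
        elif not check(data[field]):
            errors.append(f"invalid value for field: {field}")
    return (None if errors else data), errors


parsed, errors = validate_output('{"invoice_id": "INV-2", "flag": "yes"}')
print(errors)  # ['invalid value for field: flag', 'missing field: confidence', 'missing field: reason']
```

Rejected responses go to the exception path (human review, retry, or logging) rather than into the downstream system. This keeps AI variability from leaking into the otherwise predictable parts of the application.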

How to Convert Alignment Theater into Execution Readiness

Here is a practical reset model.

After any AI alignment session, require:

- A written capability definition.

- A documented input schema.

- A required output format.

- Measurable success criteria.

- Defined ownership.

- A monitoring and logging plan.

- An exception and rollback model.

If these artifacts do not exist, alignment is incomplete.

Do not scale.

Do not add tools.

Finish the specification.
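The reset model above can also be applied mechanically, as a gate before any scaling decision. The sketch below assumes, hypothetically, that session outcomes are captured as named text artifacts. The artifact keys mirror the checklist but are illustrative names, not a standard.

```python
from __future__ import annotations

# Illustrative keys mirroring the reset checklist; not a standard schema.
REQUIRED_ARTIFACTS = (
    "capability_definition",
    "input_schema",
    "output_format",
    "success_criteria",
    "owner",
    "monitoring_plan",
    "rollback_model",
)


def missing_artifacts(artifacts: dict[str, str | None]) -> list[str]:
    """Return required artifacts that are absent or left blank."""
    return [name for name in REQUIRED_ARTIFACTS if not (artifacts.get(name) or "").strip()]


# Hypothetical output of an alignment session
session_output = {
    "capability_definition": "Flag supplier invoices for manual audit",
    "owner": "Accounts payable lead",
    "success_criteria": "",
}

gaps = missing_artifacts(session_output)
if gaps:
    print("Alignment incomplete; do not scale. Missing:", ", ".join(gaps))
```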

Why This Feels Slower — But Isn’t

Specification work feels slow because:

- It exposes ambiguity.

- It forces tradeoffs.

- It requires decisions.

But the alternative is slower:

- Rework

- Scope expansion

- Architectural drift

- Loss of stakeholder trust

Alignment that produces constraints accelerates execution.

Alignment that produces consensus without structure delays it.

The Executive-Engineer Disconnect

Executives are often correct about:

- Strategic direction

- Market urgency

- Competitive pressure

Engineers are often correct about:

- Technical feasibility

- System constraints

- Operational risk

The disconnect occurs because:

Intent is aligned.

Execution details are not.

Bridging that gap requires disciplined work definition — not more meetings.

Final Perspective

AI alignment should reduce ambiguity.

If it doesn’t produce:

- Defined capabilities

- Measurable thresholds

- Architectural boundaries

- Explicit ownership

It is performance, not preparation.

AI does not fail because teams disagree.

It fails because alignment never became executable.

Structure turns agreement into systems.

Without structure, alignment is theater.

Frequently Asked Questions

What does “AI alignment” mean in enterprise environments?

AI alignment refers to ensuring that business goals, technical implementation, governance controls, and measurable outcomes are clearly defined and agreed upon before execution. True alignment produces documented capabilities, boundaries, and performance criteria — not just strategic agreement.

Why is most AI alignment described as “theater”?

AI alignment becomes “theater” when meetings and strategy sessions create consensus but fail to produce executable artifacts. If alignment results only in slide decks, high-level vision statements, or budget approvals — without input/output definitions and measurable criteria — execution will likely fail.

What artifacts should real AI alignment produce?

Effective AI alignment should produce:

- A clearly defined business capability

- Documented input schemas

- Required output formats

- Measurable success thresholds

- Defined ownership

- Monitoring and logging plans

- Exception and rollback procedures

Without these artifacts, alignment is incomplete.

Why does alignment often break down during implementation?

Alignment breaks down because conceptual agreement does not automatically translate into operational definition. Engineers must interpret vague strategic intent into structured system logic. If work boundaries and constraints are not explicitly defined, inconsistent interpretations emerge.

How does alignment theater impact AI project success?

Alignment theater leads to:

- Scope drift

- Conflicting implementation decisions

- Eroded trust in AI outputs

- Rework and delays

- Quiet abandonment of initiatives

The failure is gradual and often difficult to diagnose.

How can organizations detect performative alignment early?

Warning signs include:

- Broad agreement without documented specifications

- No measurable success criteria

- Undefined failure thresholds

- Unclear ownership of AI decisions

- Lack of logging and monitoring plans

If these elements are missing, alignment is likely superficial.

Why is structured alignment critical in Microsoft and .NET environments?

Enterprise Microsoft environments rely on deterministic system behavior and structured integration points. AI must be integrated with clearly defined boundaries, validation layers, and governance controls. Conceptual alignment without technical definition creates instability within otherwise predictable systems.

What is the “middle layer” between strategy and execution?

The middle layer is explicit work specification. It translates strategic goals into defined capabilities with measurable criteria and architectural constraints. Many organizations underinvest in this layer, causing misalignment during implementation.

Can alignment meetings still be valuable?

Yes, but only if they produce documented constraints and executable definitions. Alignment meetings should end with artifacts that engineers can implement, test, and measure — not just shared enthusiasm.

What is the key difference between agreement and execution readiness?

Agreement reflects shared intent.

Execution readiness reflects documented structure.

AI initiatives succeed when alignment produces structure that can be tested, monitored, and governed — not merely consensus.
