“Prompt engineer” is one of the fastest-spreading titles in AI.

It is also one of the most misleading.

Prompts matter.

Good prompts help.

But treating prompt engineering as a standalone job role is how organizations confuse tooling with engineering—and eventually ship fragile systems into production.

This article explains why prompt engineering is a skill, not a role—and what enterprises should do instead.

Where the Prompt Engineering Myth Came From

Prompt engineering emerged during a specific moment:

- Early large language models were brittle

- Small wording changes caused big behavior changes

- Few people understood model behavior

- Quick wins were possible without deep system changes

In that context, prompts looked powerful.

But temporary leverage is not structural responsibility.

Enterprises mistook an interface trick for an architectural foundation.

Prompts Are Inputs, Not Infrastructure

In production systems, prompts are:

- Configuration

- Context

- Parameters

- Inputs

They are not:

- Business logic

- Validation rules

- Authorization mechanisms

- Error handling

- Accountability systems

Calling prompt engineering a job role is like calling query writing a database architecture role.

It confuses usage with ownership.
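
To make the split concrete, here is a minimal sketch in Python of a refund flow where the model's output is only a suggestion and the rules live in code. Everything named here (`REFUND_AUTO_APPROVE_LIMIT`, `RefundDecision`, `decide_refund`, the 100.00 threshold) is a hypothetical illustration, not a reference to any real system.

```python
from dataclasses import dataclass

# Hypothetical business rule: refunds above this amount need a human.
REFUND_AUTO_APPROVE_LIMIT = 100.00


@dataclass(frozen=True)
class RefundDecision:
    approved: bool
    reason: str


def decide_refund(model_suggestion: str, amount: float, user_can_refund: bool) -> RefundDecision:
    """Treat the model's text as an input; authorization and limits are enforced in code."""
    if not user_can_refund:
        return RefundDecision(False, "caller is not authorized to issue refunds")
    if amount <= 0:
        return RefundDecision(False, "amount must be positive")
    if amount > REFUND_AUTO_APPROVE_LIMIT:
        return RefundDecision(False, "amount exceeds auto-approve limit; escalate to a human")
    if model_suggestion.strip().lower() != "approve":
        return RefundDecision(False, "model did not recommend approval")
    return RefundDecision(True, "within limit and recommended by model")
```

No wording change in the prompt can raise the limit or bypass the authorization check, because neither lives in the prompt.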

Why Enterprises Get Burned by This Title

When “prompt engineer” becomes a role, organizations accidentally assign responsibility to the wrong layer.

This leads to:

- Business rules embedded in prompts

- Undocumented decision logic

- No version control for behavior

- Fragile systems tied to wording

- Impossible debugging after failures

When something breaks, no one can answer:

Why did the system behave this way?

Because the “logic” lived in text, not systems.

Prompts Change. Systems Must Endure.

Prompts are inherently unstable:

- Models change

- Context shifts

- Token limits evolve

- Safety layers update

- Vendors modify behavior

Enterprise systems must be resilient to prompt drift.

That requires:

- Clear separation of concerns

- Versioned capabilities

- Explicit business logic

- Observability and auditability

Prompts can assist systems.

They cannot replace them.
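
One way to build that resilience, sketched here under assumptions, is to hold model output to an explicit contract and fall back to a safe default when it drifts. The category names, the `needs_human_review` fallback, and the `parse_category` function are all hypothetical; the expected JSON shape is an assumption for illustration.

```python
import json

# Hypothetical contract: the model is asked to reply with {"category": "<one of these>"}.
ALLOWED_CATEGORIES = {"billing", "technical", "other"}
FALLBACK = "needs_human_review"


def parse_category(raw_output: str) -> str:
    """Accept only output that matches the contract; anything else goes to a human."""
    try:
        payload = json.loads(raw_output)
    except json.JSONDecodeError:
        return FALLBACK  # e.g. the model started answering in free text
    if not isinstance(payload, dict):
        return FALLBACK
    category = payload.get("category")
    if isinstance(category, str) and category.lower() in ALLOWED_CATEGORIES:
        return category.lower()
    return FALLBACK  # unknown or missing category
```

If a model or vendor update changes how the prompt is answered, the system degrades to review instead of silently acting on unexpected output.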

What Prompt Engineering Actually Is

Prompt engineering is best understood as:

- A technique

- A communication skill

- A tool usage pattern

It belongs inside roles such as:

- Software engineers

- ML engineers

- Architects

- Product engineers

- Domain experts working with engineers

It does not belong alone at the center of a production system.

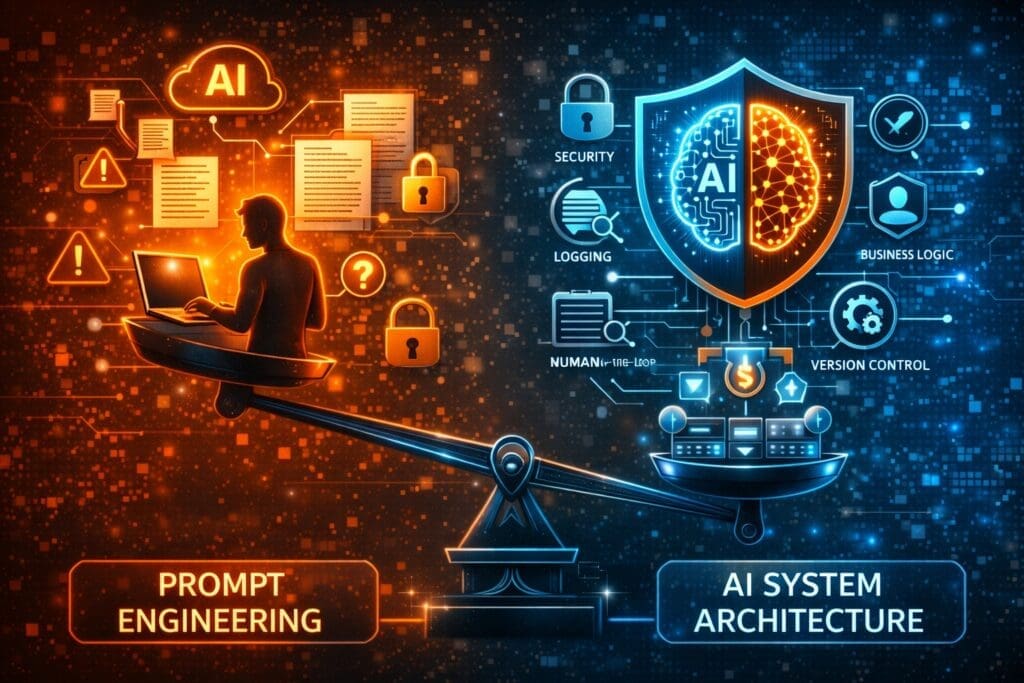

The Real Enterprise Risk No One Talks About

The danger isn’t that prompt engineers exist.

The danger is that leadership believes:

If we get the prompt right, the system is done.

This belief causes organizations to skip:

- Architecture

- Security

- Logging

- Cost controls

- Human-in-the-loop safeguards

And those omissions don’t show up in demos.

They show up in incidents.

Why Engineers Push Back on Prompt-First Thinking

Experienced engineers resist prompt-centric systems because they’ve learned a hard lesson:

Anything that can’t be tested, versioned, audited, and reasoned about will fail in production.

Prompts alone fail every one of those tests.

This isn’t stubbornness.

It’s operational realism.

So What Should Enterprises Do Instead?

Treat prompts as:

- Configurable inputs

- Testable artifacts

- Versioned resources

- Replaceable components

And treat systems as:

- Owners of business logic

- Enforcers of constraints

- Sources of truth

- Guardians of accountability

That’s how AI becomes a capability, not a liability.
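
As a rough sketch of what "versioned resources" and "replaceable components" could look like in Python, a prompt can be stored as a named, immutable artifact with a content fingerprint. The `PromptArtifact` class, the `REGISTRY` dictionary, and the prompt names and versions below are hypothetical; a real setup might keep these in source control or a configuration store.

```python
import hashlib
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class PromptArtifact:
    """A prompt stored as a named, versioned, immutable resource."""
    name: str
    version: str
    template: str  # uses $placeholder syntax from string.Template

    @property
    def fingerprint(self) -> str:
        # Short content hash, so logs can show exactly which text was used.
        return hashlib.sha256(self.template.encode("utf-8")).hexdigest()[:12]

    def render(self, **values: str) -> str:
        # substitute() raises KeyError if a placeholder has no value.
        return Template(self.template).substitute(**values)


# Hypothetical registry keyed by (name, version).
REGISTRY = {
    ("summarize_ticket", "1.0.0"): PromptArtifact(
        "summarize_ticket", "1.0.0", "Summarize this ticket in one sentence: $ticket"
    ),
    ("summarize_ticket", "1.1.0"): PromptArtifact(
        "summarize_ticket", "1.1.0", "Summarize this support ticket in one short sentence: $ticket"
    ),
}


def get_prompt(name: str, version: str) -> PromptArtifact:
    """Callers pin an explicit version; swapping prompts is a reviewed change."""
    return REGISTRY[(name, version)]
```

Because callers request a specific version, changing the wording becomes a visible, reviewable change rather than an edit buried in application code.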

Conversation Starters: Engineering ↔ Leadership

For Leadership to Ask Engineering

(to understand system risk)

- Where do prompts currently encode business logic?

- What would break if a model update changed prompt behavior?

- How do we audit decisions made through prompts today?

For Engineering to Ask Leadership

(to align expectations)

- What decisions must remain explainable under audit?

- Where is flexibility acceptable—and where is it not?

- Who owns outcomes when AI behavior changes unexpectedly?

These are not purely technical questions.

They are governance questions.

The Bottom Line

Prompt engineering matters.

But writing prompts is not a profession.

Prompts are a tool: powerful, useful, and dangerous when misunderstood.

Enterprise AI systems don’t succeed because someone wrote clever prompts.

They succeed because organizations built systems that don’t depend on cleverness to stay safe.

And that distinction matters more than any job title.

Frequently Asked Questions

What is prompt engineering?

Prompt engineering is the practice of crafting inputs, instructions, and context to guide AI model behavior. It is a skill and technique, not a complete system or standalone engineering discipline.

Is prompt engineering a real job role?

Not in enterprise production systems.

Prompt engineering is a supporting skill used within roles like software engineering, AI engineering, architecture, and product development. Treating it as a standalone job role creates fragile systems and misplaced responsibility.

Why do companies hire “prompt engineers”?

Early AI systems were highly sensitive to wording, and small prompt changes produced large behavior shifts. This created short-term value—but that value does not scale or replace proper system design.

What is the risk of relying too much on prompts?

Over-reliance on prompts leads to:

- Business logic embedded in text

- No version control for behavior

- Poor auditability

- Fragile systems that break when models change

- Difficult debugging after failures

These risks only appear in production, not in demos.

Are prompts considered business logic?

They shouldn’t be.

In enterprise systems, prompts should be treated as inputs or configuration, while business logic must live in versioned, testable, and auditable systems.

Can prompts be versioned and tested?

Yes—but only when treated as artifacts within a larger system:

- Stored and versioned explicitly

- Tested against known scenarios

- Observed through logging

- Decoupled from core decision logic

Prompts alone do not provide these guarantees.
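
A minimal sketch of "tested against known scenarios" in Python might look like the following. The prompt text, the scenarios, and `fake_model` are hypothetical; `fake_model` stands in for a real model client so the example runs offline.

```python
from typing import Callable

# Hypothetical prompt under test.
PROMPT_TEMPLATE = (
    "Classify the support ticket as 'billing' or 'technical'.\n"
    "Ticket: {ticket}\n"
    "Answer:"
)

# Known scenarios with the answer the business expects.
SCENARIOS = [
    ("I was charged twice this month.", "billing"),
    ("The app crashes when I log in.", "technical"),
]


def run_scenarios(call_model: Callable[[str], str]) -> list[str]:
    """Run every scenario through the model and return a list of failure messages."""
    failures = []
    for ticket, expected in SCENARIOS:
        output = call_model(PROMPT_TEMPLATE.format(ticket=ticket)).strip().lower()
        if output != expected:
            failures.append(f"{ticket!r}: expected {expected!r}, got {output!r}")
    return failures


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call; replace with an actual client in practice."""
    return "billing" if "charged" in prompt else "technical"


if __name__ == "__main__":
    print(run_scenarios(fake_model) or "all scenarios passed")
```

Running the same scenarios after every prompt edit or model upgrade turns "the wording changed" into a detectable regression.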

Why do engineers push back on prompt-first AI designs?

Because prompts:

- Are fragile

- Change with model updates

- Cannot enforce constraints reliably

- Cannot own accountability

Experienced engineers prioritize systems that survive change, not clever shortcuts.

Does prompt engineering still matter?

Absolutely.

Prompt engineering matters as a tactical skill for:

- Improving AI output quality

- Reducing ambiguity

- Enhancing usability

It just doesn’t replace architecture, governance, or engineering discipline.

What roles should handle prompt design in enterprises?

Prompt design typically belongs within:

- Software engineering teams

- AI/ML engineering teams

- Architects

- Product teams working with engineers

Ownership should remain with teams responsible for system outcomes.

What happens when AI behavior changes due to a prompt?

If systems are poorly designed, no one can explain or audit the decision.

Enterprise-grade systems ensure:

- Prompt usage is logged

- Decisions are traceable

- Responsibility is clearly assigned

This protects both the business and its leaders.
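
The three guarantees above can be sketched as a single audit record written for every model call. This is an illustration under assumptions: the field names, the `build_audit_record` function, and the example values are hypothetical, and a real system would send the record to its own logging pipeline.

```python
import hashlib
import json
from datetime import datetime, timezone


def build_audit_record(
    prompt_name: str,
    prompt_version: str,
    rendered_prompt: str,
    model_id: str,
    output: str,
    owner: str,
) -> dict:
    """Capture what was asked, of which model, what came back, and who owns the outcome."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prompt_name": prompt_name,
        "prompt_version": prompt_version,
        # Hash of the exact text sent, so the record is traceable without storing it twice.
        "prompt_sha256": hashlib.sha256(rendered_prompt.encode("utf-8")).hexdigest(),
        "model_id": model_id,
        "output": output,
        "owner": owner,
    }


if __name__ == "__main__":
    record = build_audit_record(
        prompt_name="classify_ticket",      # hypothetical prompt name
        prompt_version="1.0.0",
        rendered_prompt="Classify: I was charged twice.",
        model_id="example-model-2024-01",   # hypothetical model identifier
        output="billing",
        owner="support-platform-team",      # hypothetical owning team
    )
    print(json.dumps(record, sort_keys=True))
```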

What is the biggest misconception about prompt engineering?

That writing better prompts is enough to make AI production-ready.

In reality, success depends on:

- Architecture

- Security

- Observability

- Cost control

- Human accountability

Prompts assist systems. They do not replace them.

How should enterprises think about prompts instead?

Prompts should be treated as:

- Configurable inputs

- Replaceable components

- Testable artifacts

- One layer within a governed system

That framing enables flexibility without sacrificing control.
