“Can we just add AI to this?”

It sounds harmless.

Optimistic, even.

In practice, it’s one of the fastest ways to destabilize a production system.

Most AI failures in enterprise environments don’t happen because the models are bad.

They happen because AI is treated like a feature instead of what it actually is:

A cross-cutting system that amplifies every existing weakness.

When organizations say “just add AI,” they’re usually underestimating what they’re really changing.

Why “Just Add AI” Feels Reasonable (At First)

From the outside, AI often looks modular.

- There’s an API

- There’s a prompt

- There’s a response

So the mental model becomes:

We’ll plug this in where the data already flows.

That assumption works right up until production.

Because AI doesn’t behave like traditional software components.

It doesn’t fail predictably, deterministically, or loudly.

It changes how systems behave under load, uncertainty, and edge cases — often without obvious signals.

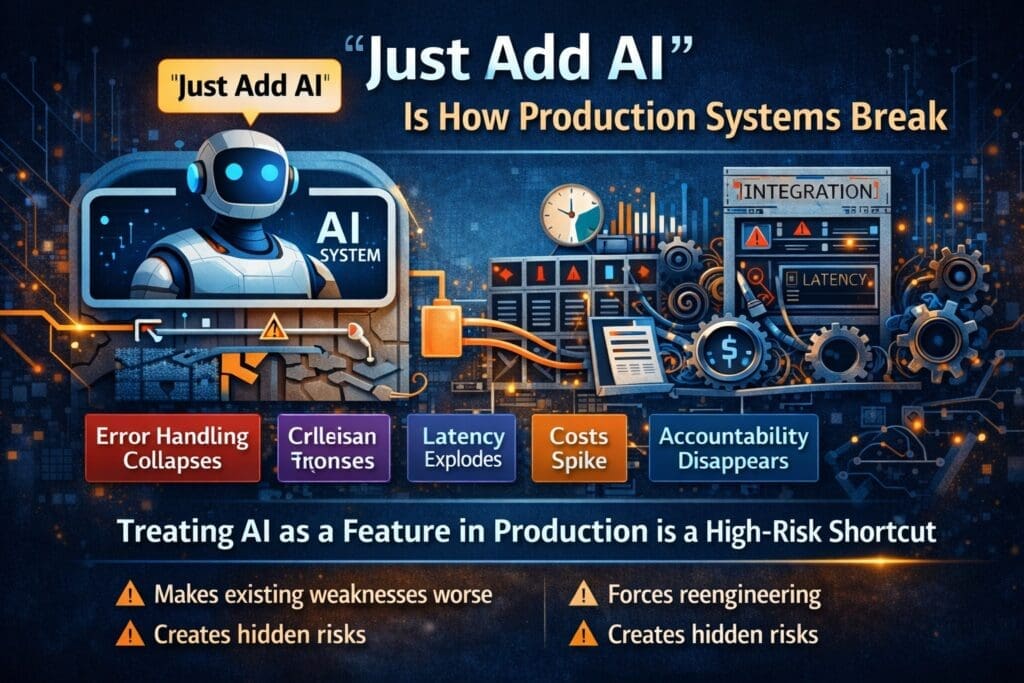

AI Is Not a Feature — It’s a System Multiplier

When you add AI to an existing system, you don’t just add capability.

You multiply:

- Latency

- Cost variability

- Error surface area

- Operational complexity

- Risk exposure

If the underlying system has weak logging, AI makes observability worse.

If error handling is inconsistent, AI makes failures harder to diagnose.

If ownership is unclear, AI makes accountability disappear.

AI doesn’t create these problems.

It exposes them.

The Hidden Assumption Behind “Just Add AI”

The phrase “just add AI” usually carries an unspoken belief:

Our system is already stable enough to absorb this.

That belief is often wrong.

Many production systems are:

- Barely observable

- Tightly coupled

- Held together by tribal knowledge

- Optimized for known behavior

AI introduces probabilistic behavior into environments designed for certainty.

That mismatch is where things start breaking.

What Actually Breaks First in Production

When AI is layered onto an existing system without architectural intent, failures follow a familiar pattern.

1. Error Handling Collapses

AI doesn’t return clean failures.

It returns:

- Partial answers

- Confident nonsense

- Incomplete outputs

- Responses that look “good enough”

Systems built for binary success/failure states don’t know what to do with that.

So errors slip through — quietly.
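One way to give a binary system a third state is to check every model response before anything downstream trusts it. The sketch below is a minimal illustration, not a prescribed implementation. It assumes the model was asked to return JSON, and the `Outcome` states, the `REQUIRED_FIELDS` contract, and the `check_model_output` helper are all hypothetical names chosen for this example.

```python
import json
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    OK = "ok"
    DEGRADED = "degraded"  # partly usable, should be reviewed
    FAILED = "failed"


@dataclass
class Checked:
    outcome: Outcome
    data: dict
    problems: list


# Hypothetical contract: the prompt asks for JSON with these string fields.
REQUIRED_FIELDS = {"category": str, "summary": str}


def check_model_output(raw: str) -> Checked:
    """Classify a raw model response instead of assuming it succeeded."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return Checked(Outcome.FAILED, {}, [f"not valid JSON: {exc.msg}"])
    if not isinstance(data, dict):
        return Checked(Outcome.FAILED, {}, ["top-level value is not an object"])

    problems = []
    for name, expected_type in REQUIRED_FIELDS.items():
        value = data.get(name)
        if value is None:
            problems.append(f"missing field: {name}")
        elif not isinstance(value, expected_type):
            problems.append(f"wrong type for field: {name}")
        elif not value.strip():
            problems.append(f"empty field: {name}")

    if not problems:
        return Checked(Outcome.OK, data, [])
    if len(problems) < len(REQUIRED_FIELDS):
        # Some fields are usable: surface it as degraded, not as success.
        return Checked(Outcome.DEGRADED, data, problems)
    return Checked(Outcome.FAILED, data, problems)
```

A response such as `{"category": "billing"}` comes back as `DEGRADED` with the problem `missing field: summary`, rather than passing as a success. A structural check like this catches partial and incomplete outputs. It does not catch confident nonsense, which still needs domain validation or human review.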

2. Costs Spike in Non-Linear Ways

Traditional systems tend to scale in ways teams can forecast.

AI systems don’t.

- Retries amplify inference cost

- Timeouts trigger cascades

- Slight prompt changes alter token usage

- Edge cases generate expensive responses

Teams discover the problem only after invoices arrive.
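The retry effect is easy to underestimate because retries at different layers multiply rather than add. The sketch below works through that arithmetic and pairs it with a simple per-request ceiling. It is an illustration under assumptions: `worst_case_calls`, `TokenBudget`, and `BudgetExceeded` are hypothetical names, and tokens stand in for whatever unit a provider actually bills.

```python
from typing import Sequence


def worst_case_calls(attempts_per_layer: Sequence[int]) -> int:
    """Upper bound on model calls for one user request.

    Each entry is the maximum number of attempts a layer makes
    (for example: client, API gateway, worker). If every layer
    retries independently, the attempts multiply.
    """
    total = 1
    for attempts in attempts_per_layer:
        total *= attempts
    return total


class BudgetExceeded(RuntimeError):
    """Raised when a request would exceed its token ceiling."""


class TokenBudget:
    """Hard per-request ceiling on tokens spent, shared across retries."""

    def __init__(self, limit: int) -> None:
        self.limit = limit
        self.spent = 0

    def charge(self, tokens: int) -> None:
        # Refuse the call before it is made, not after the invoice arrives.
        if self.spent + tokens > self.limit:
            raise BudgetExceeded(
                f"would spend {self.spent + tokens} of {self.limit} tokens"
            )
        self.spent += tokens
```

With three layers that each allow three attempts, `worst_case_calls([3, 3, 3])` returns 27: one user request can turn into 27 inference calls. Charging a shared `TokenBudget` before each attempt turns that open-ended exposure into a bounded one.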

3. Latency Becomes Everyone’s Problem

AI introduces:

- Network dependency

- Variable response times

- Queue backpressure

A single slow AI call can ripple through an otherwise fast system, degrading user experience in places no one expected.
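A common containment pattern is to give every AI call a deadline and a fallback, so a slow response degrades one feature instead of the whole request path. The sketch below uses the standard library's `asyncio.wait_for`. The `call_with_deadline` helper and the two stand-in model calls are hypothetical, and the timeout values are placeholders, not recommendations.

```python
import asyncio


async def call_with_deadline(make_call, timeout_s: float, fallback):
    """Await make_call(); return fallback if it takes longer than timeout_s."""
    try:
        return await asyncio.wait_for(make_call(), timeout=timeout_s)
    except asyncio.TimeoutError:
        # wait_for cancels the pending call, so it stops consuming resources.
        return fallback


async def slow_model_call() -> str:
    # Stand-in for a real inference request that is having a bad day.
    await asyncio.sleep(0.5)
    return "model answer"


async def fast_model_call() -> str:
    # Stand-in for a request that returns promptly.
    await asyncio.sleep(0)
    return "model answer"


if __name__ == "__main__":
    print(asyncio.run(call_with_deadline(slow_model_call, 0.05, "fallback")))
    print(asyncio.run(call_with_deadline(fast_model_call, 1.0, "fallback")))
```

The design choice that matters here is the fallback itself. Someone has to decide what the product does when the model is too slow, and that decision belongs in the design conversation, not in an incident review.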

4. Accountability Gets Fuzzy

When something goes wrong, questions start flying:

- Was it the model?

- The prompt?

- The data?

- The integration?

- The user?

Without clear boundaries, AI failures turn into blame diffusion.

Production systems don’t survive long in that environment.
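Those five questions become answerable when every AI call leaves a record that names the model, the prompt version, the input, the integration, and the user. The sketch below shows one possible shape for such a record. The `InferenceRecord` fields and the helper names are assumptions made for illustration, not a standard schema.

```python
import hashlib
import json
import time
import uuid
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class InferenceRecord:
    request_id: str
    model: str           # was it the model?
    prompt_version: str  # the prompt?
    input_sha256: str    # the data? (a fingerprint, not the data itself)
    caller: str          # the integration?
    user_id: str         # the user?
    timestamp: float


def make_record(model: str, prompt_version: str, input_text: str,
                caller: str, user_id: str) -> InferenceRecord:
    """Build one audit record for a single AI call."""
    return InferenceRecord(
        request_id=str(uuid.uuid4()),
        model=model,
        prompt_version=prompt_version,
        input_sha256=hashlib.sha256(input_text.encode("utf-8")).hexdigest(),
        caller=caller,
        user_id=user_id,
        timestamp=time.time(),
    )


def to_log_line(record: InferenceRecord) -> str:
    """Serialize the record as one JSON line for a structured log."""
    return json.dumps(asdict(record), sort_keys=True)
```

Hashing the input instead of storing it is one option among several. It lets two incidents be matched to the same input without copying user data into logs, at the cost of not being able to replay the request from the log alone.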

Why Engineers Push Back (and Why They’re Right)

When engineers resist “just add AI,” it’s often misinterpreted as:

- Overengineering

- Fear of change

- Gold-plating

What they’re actually reacting to is unbounded risk.

Experienced engineers know that adding AI means revisiting:

- Architecture

- Observability

- Failure modes

- Escalation paths

- Ownership

They also know that skipping those conversations doesn’t save time — it just moves the cost downstream, where it’s more expensive and more visible.

AI Changes the Shape of the System

Even when AI is “only” an interface, it still reshapes the backend.

Production-ready AI systems require:

- Async processing and queues

- Idempotent operations

- Confidence scoring

- Human escalation paths

- Audit trails

- Cost controls

If the system wasn’t designed for these concerns, “adding AI” forces them in anyway — usually under pressure.

That’s not agility.

That’s reactive engineering.
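Three of the items on that list (idempotent operations, confidence scoring, and human escalation) can be sketched together in a few lines. This is a simplified illustration under assumptions: the `Router` class, its `threshold` of 0.8, and the in-memory stores are hypothetical, and it presumes a confidence score is available from somewhere, which many model APIs do not provide directly.

```python
from dataclasses import dataclass, field


@dataclass
class Router:
    threshold: float = 0.8  # hypothetical cutoff, to be tuned per use case
    # In-memory stand-ins for a durable store and a real review queue.
    decisions: dict = field(default_factory=dict)
    review_queue: list = field(default_factory=list)

    def route(self, idempotency_key: str, answer: str, confidence: float) -> str:
        """Decide whether an answer is applied automatically or escalated."""
        # Idempotent: a retried request gets the earlier decision back
        # and is not queued for review a second time.
        if idempotency_key in self.decisions:
            return self.decisions[idempotency_key]

        if confidence >= self.threshold:
            decision = "auto"
        else:
            decision = "human_review"
            self.review_queue.append((idempotency_key, answer, confidence))

        self.decisions[idempotency_key] = decision
        return decision
```

Routing the same low-confidence request twice yields `human_review` both times while leaving a single entry in `review_queue`. In a real system the two stores would need to be durable and shared across workers, which is exactly the backend reshaping described above.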

The Difference Between Prototypes and Production

Prototypes exist to answer one question:

Is this idea even possible?

Production systems answer different questions:

- Can we operate this safely?

- Can we explain decisions?

- Can we recover from failure?

- Can we afford it at scale?

- Can we support it for years?

“Just add AI” is a prototype mindset applied to production reality.

That mismatch is why so many promising demos collapse when real users arrive.

A Better Question Than “Can We Just Add AI?”

The productive question isn’t whether AI can be added.

It’s:

What parts of the system does AI force us to rethink?

That reframing leads to better conversations about:

- Risk tolerance

- Ownership

- Cost ceilings

- Failure acceptance

- Trust boundaries

Those conversations slow things down at the beginning and speed everything up later.

This Is Not an Anti-AI Argument

AI belongs in production systems.

But only when it’s treated with the same seriousness as:

- Payments

- Security

- Identity

- Compliance

No one says “just add payments.”

No one says “just add authentication.”

AI deserves the same respect.

Conversation Starters: Engineering ↔ Leadership

For Leadership to Ask Engineering

(to understand integration risk and system impact)

- Which parts of our system are most fragile if AI behavior changes?

- Where would failures be hardest to detect?

- What would we need to redesign to support AI safely?

For Engineering to Ask Leadership

(to understand pressure and priorities)

- What risks are acceptable to accelerate delivery?

- Where would failure be least tolerable?

- What outcomes matter more than speed right now?

These questions are not meant to be answered immediately.

They are meant to be discussed.

Closing Thought

“Just add AI” isn’t a strategy.

It’s a signal that the system hasn’t been examined closely enough.

AI doesn’t break production systems by itself.

It breaks the assumptions those systems were built on.

The organizations that succeed with AI aren’t the ones moving fastest.

They’re the ones willing to slow down long enough to understand what they’re actually changing.

Frequently Asked Questions

What does “just add AI” mean in practice?

“Just add AI” refers to treating AI like a simple feature or plug-in—something that can be layered onto an existing system without rethinking architecture, observability, cost controls, or failure modes. This mindset works in demos but often fails in production.

Why does “just add AI” break production systems?

Because AI changes how systems behave under uncertainty. It introduces probabilistic outputs, variable latency, non-linear costs, and new failure modes that most production systems were never designed to handle.

Is AI the reason production systems fail?

No. AI doesn’t cause failures by itself. It exposes weaknesses that already exist—such as poor logging, brittle error handling, unclear ownership, or tight coupling. AI amplifies these issues instead of hiding them.

Why do AI demos work but production systems fail?

Demos answer one question: Is this possible?

Production systems must answer many others, including:

- Can we monitor and explain this?

- Can we recover from failure?

- Can we afford it at scale?

- Can we support it long-term?

“Just add AI” applies prototype thinking to production reality.

What breaks first when AI is added without proper design?

Common early failures include:

- Silent or partial errors passing undetected

- Unexpected cost spikes from retries and edge cases

- Latency and performance degradation

- Confusion about ownership and accountability

These failures often surface only after real users are involved.

Why do engineers push back on “just add AI” requests?

Engineers aren’t resisting AI—they’re reacting to unbounded risk. Experienced teams know that adding AI requires rethinking architecture, observability, escalation paths, and cost controls. Skipping those steps increases downstream cost and failure.

Isn’t slowing down to redesign anti-agile?

No. Thoughtful design early on reduces long-term drag. Reactive engineering—fixing problems after they reach production—is slower, more expensive, and more disruptive than intentional system design upfront.

How should AI be treated in production systems?

AI should be treated like other critical infrastructure:

- Payments

- Security

- Identity

- Compliance

No one says “just add payments” or “just add authentication.” AI deserves the same level of seriousness and planning.

Can AI be safely added to an existing system?

Yes—but not casually. Safe AI integration requires:

- Clear boundaries and ownership

- Observability and logging

- Cost and latency controls

- Error handling and escalation paths

- Alignment between engineering and leadership on risk

The question isn’t whether AI can be added, but what must change to support it.

What’s a better question than “Can we just add AI?”

A more productive question is:

“What assumptions in our system does AI force us to re-examine?”

That question leads to better decisions about risk, cost, accountability, and long-term sustainability.

Is this an argument against using AI in production?

Not at all. It’s an argument against careless integration. AI belongs in production systems—but only when it’s treated as a system-level change, not a cosmetic upgrade.
