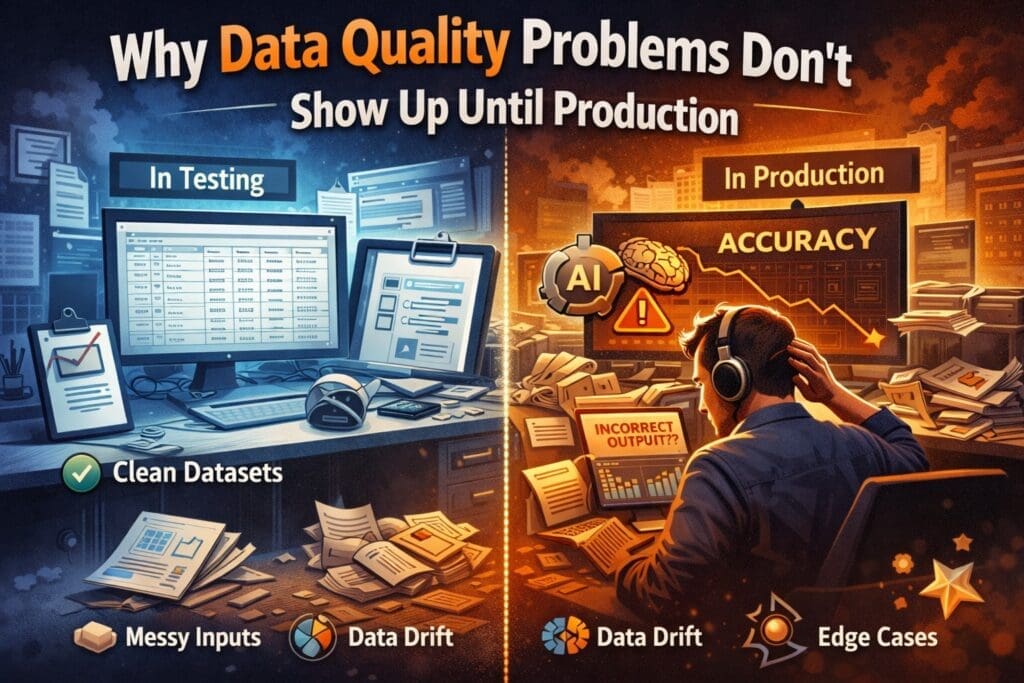

Data Looks Fine — Until It Doesn’t

Most AI systems don’t fail because the model is bad.

They fail because the data silently changes once real users, real workflows, and real edge cases appear.

In prototypes:

- Data is clean

- Inputs are curated

- Edge cases are rare

- Assumptions hold

In production:

- Inputs get messy

- Context changes

- Users behave creatively

- Reality leaks in

That’s when data quality problems finally surface — often too late, too publicly, and too expensively.

Why Prototypes Hide Data Quality Issues

Prototypes are designed to prove possibility, not durability.

They usually rely on:

- Historical datasets

- Manually selected examples

- Happy-path scenarios

- Known formats and structures

This creates a false sense of confidence.

If the AI works on your best data, it feels ready.

Production, however, introduces uncontrolled data, and that’s where assumptions collapse.

The Data Quality Traps That Only Appear in Production

1. Real Users Don’t Behave Like Test Data

Test data is polite.

Production data is not.

Users:

- Misspell

- Paste garbage

- Leave fields blank

- Use unexpected formats

- Change behavior over time

AI systems trained or tested on ideal inputs struggle when:

- Context is missing

- Intent is ambiguous

- Data is partially correct

Engineers expect this.

Prototypes rarely reveal it.
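Here is a minimal Python sketch of what validating messy user input before inference can look like. The record fields (`description`, `order_date`), the accepted date formats, and the 5,000-character limit are hypothetical assumptions chosen for illustration, not a standard.

```python
import re
from dataclasses import dataclass, field
from datetime import date, datetime
from typing import Optional

# Hypothetical formats this system agrees to accept; anything else is flagged.
ACCEPTED_DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%d.%m.%Y")
MAX_DESCRIPTION_CHARS = 5000  # hypothetical limit to catch pasted garbage


@dataclass
class ValidationResult:
    cleaned: dict
    problems: list = field(default_factory=list)

    @property
    def ok(self) -> bool:
        return not self.problems


def normalize_text(value) -> str:
    """Turn None into "", replace control characters, and collapse whitespace."""
    text = "" if value is None else str(value)
    text = re.sub(r"[\x00-\x1f\x7f]", " ", text)
    return re.sub(r"\s+", " ", text).strip()


def parse_date(raw: str) -> Optional[date]:
    """Try each accepted format; return None instead of guessing."""
    for fmt in ACCEPTED_DATE_FORMATS:
        try:
            return datetime.strptime(raw, fmt).date()
        except ValueError:
            continue
    return None


def validate_request(record: dict) -> ValidationResult:
    """Clean and check a hypothetical support-ticket record before inference."""
    cleaned, problems = {}, []

    description = normalize_text(record.get("description"))
    if not description:
        problems.append("description is blank")
    elif len(description) > MAX_DESCRIPTION_CHARS:
        problems.append("description is suspiciously long")
    cleaned["description"] = description

    raw_date = normalize_text(record.get("order_date"))
    order_date = parse_date(raw_date) if raw_date else None
    if raw_date and order_date is None:
        problems.append(f"unrecognized date format: {raw_date!r}")
    cleaned["order_date"] = order_date

    return ValidationResult(cleaned, problems)
```

Records that come back with problems can be rejected or routed to a person instead of being passed to the model as if they were clean.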

2. Data Drift Is Invisible at First

One of the most dangerous characteristics of data quality problems:

They start small.

Gradual changes include:

- New terminology

- Updated business processes

- Seasonal behavior

- Market or regulatory shifts

Models don’t “break” immediately.

They quietly degrade.

Without monitoring, teams only notice when:

- Accuracy complaints increase

- Manual overrides become common

- Trust erodes

By then, the root cause is harder to isolate.
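Drift doesn't have to be noticed through complaints; it can be measured. One common technique is the Population Stability Index (PSI), which compares how a numeric feature is distributed in a baseline window versus a recent one. This sketch uses only the Python standard library. The 10 quantile bins and the 0.1 / 0.25 cut-offs are a widely used rule of thumb, not a universal standard, so treat them as assumptions to tune.

```python
import bisect
import math


def quantile_edges(baseline, n_bins=10):
    """Interior bin edges at baseline quantiles, so each bin holds roughly equal baseline mass."""
    ordered = sorted(baseline)
    edges = {ordered[int(i * len(ordered) / n_bins)] for i in range(1, n_bins)}
    return sorted(edges)  # the set drops duplicate edges when values repeat a lot


def bin_proportions(values, edges, eps=1e-6):
    """Share of values in each bin; eps avoids log(0) for empty bins. Assumes values is non-empty."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect.bisect_right(edges, v)] += 1
    return [max(c / len(values), eps) for c in counts]


def population_stability_index(baseline, current, n_bins=10):
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    edges = quantile_edges(baseline, n_bins)
    expected = bin_proportions(baseline, edges)
    actual = bin_proportions(current, edges)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


def drift_level(psi):
    """Commonly cited rule of thumb; tune the cut-offs for your own data."""
    if psi < 0.1:
        return "stable"
    if psi < 0.25:
        return "moderate drift"
    return "significant drift"
```

The monitored feature can be something as simple as input length or the share of inputs mentioning a new product name. A PSI that creeps upward week after week is the kind of signal that can show up before accuracy complaints do.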

3. Training Data ≠ Operational Data

Training datasets are usually:

- Historical

- Cleaned

- Structured

- Reviewed

Operational data is:

- Live

- Incomplete

- Inconsistent

- Often user-generated

The gap between the two is where production failures live.

AI systems don’t adapt automatically.

They repeat learned patterns — even when those patterns no longer match reality.
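One way to make the gap between training and operational data visible is to profile both datasets the same way and compare the results. This sketch compares per-field missing-value rates and the share of categorical values never seen in training. The field names and the 10-point gap threshold are hypothetical.

```python
from collections import Counter


def missing_rates(records, fields):
    """Fraction of records where each field is absent, None, or an empty string."""
    missing = Counter()
    for rec in records:
        for f in fields:
            if rec.get(f) in (None, ""):
                missing[f] += 1
    n = max(len(records), 1)
    return {f: missing[f] / n for f in fields}


def unseen_category_rate(train_values, live_values):
    """Share of live values for a categorical field that never appeared in training."""
    known = set(train_values)
    live = list(live_values)
    if not live:
        return 0.0
    return sum(v not in known for v in live) / len(live)


def skew_report(train, live, fields, max_gap=0.10):
    """Fields whose live missing rate exceeds the training rate by more than max_gap."""
    train_rates = missing_rates(train, fields)
    live_rates = missing_rates(live, fields)
    return {
        f: (train_rates[f], live_rates[f])
        for f in fields
        if live_rates[f] - train_rates[f] > max_gap
    }
```

A field that was always filled in training but is often empty in live traffic is exactly the kind of gap a model won't adapt to on its own.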

4. Upstream Changes Break Downstream AI

AI systems depend on data pipelines:

- APIs

- Databases

- Documents

- Third-party feeds

When upstream systems change:

- Field names shift

- Formats evolve

- Optional fields disappear

- Semantics drift

Traditional software usually fails loudly, with an error.

AI systems often keep running — incorrectly.

This silent failure mode is especially dangerous.
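To keep upstream changes from failing silently, a data contract check can sit between the pipeline and the model and raise an error instead of passing a malformed record along. The schema below is a hypothetical example for an imagined payments feed; a schema-validation library could do the same job, but plain Python is enough to show the idea.

```python
import logging

logger = logging.getLogger("data_contract")

# Hypothetical contract for records arriving from an upstream payments feed.
EXPECTED_SCHEMA = {
    "customer_id": str,
    "amount": (int, float),
    "currency": str,
}


class SchemaViolation(ValueError):
    """Raised so a broken upstream record stops here instead of reaching the model."""


def check_schema(record: dict, schema: dict = EXPECTED_SCHEMA) -> dict:
    missing = [k for k in schema if k not in record]
    wrong_type = [
        k for k, expected in schema.items()
        if k in record and not isinstance(record[k], expected)
    ]
    unexpected = sorted(set(record) - set(schema))
    if unexpected:
        # New fields are often the first visible sign that an old one was renamed.
        logger.warning("unexpected fields from upstream: %s", unexpected)
    if missing or wrong_type:
        raise SchemaViolation(
            f"missing={missing} wrong_type={wrong_type} unexpected={unexpected}"
        )
    # Pass on only the fields the model was built to understand.
    return {k: record[k] for k in schema}
```

The design choice here is deliberate: missing or mistyped fields stop the record, while extra fields only produce a warning, so a renamed field (for example `customerId` arriving instead of `customer_id`) turns into a visible error rather than a quietly wrong prediction.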

5. Data Quality Problems Amplify at Scale

Small data issues are manageable in isolation.

At scale:

- Errors repeat thousands of times

- Incorrect outputs propagate

- Costs increase due to retries and reprocessing

- Human review becomes a bottleneck

What looked like a minor inconsistency becomes a systemic issue.
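The arithmetic of scale is simple but easy to underestimate. This back-of-the-envelope helper turns an error rate into daily workload; the parameter names and every number in the example are hypothetical.

```python
import math


def daily_error_impact(
    requests_per_day: int,
    error_rate: float,
    review_minutes_per_error: float,
    retries_per_error: float = 1.0,
    review_hours_per_person: float = 6.0,
) -> dict:
    """Rough daily cost of a 'small' error rate once it runs at production volume."""
    bad_outputs = requests_per_day * error_rate
    review_hours = bad_outputs * review_minutes_per_error / 60
    return {
        "bad_outputs": bad_outputs,
        "extra_model_calls": bad_outputs * retries_per_error,
        "review_hours": review_hours,
        "reviewers_needed": math.ceil(review_hours / review_hours_per_person),
    }
```

With 200,000 requests a day, a 0.5% error rate, and 3 minutes of review per bad output, `daily_error_impact(200_000, 0.005, 3)` works out to 1,000 bad outputs, 1,000 extra model calls, 50 review hours, and 9 people doing 6 hours of review every day.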

Why These Problems Are Hard to Catch Early

Data quality issues are elusive because:

- AI outputs are probabilistic

- Partial correctness feels acceptable

- Failures don’t always throw errors

- Users adapt instead of reporting issues

By the time leadership notices, engineering is already firefighting.
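Because users tend to work around bad outputs rather than report them, one signal that doesn't depend on reports is how often people override or edit what the AI produced. This sketch tracks that rate over a sliding window; the class name, window size, baseline rate, and alert ratio are hypothetical values to adjust per workflow.

```python
from collections import deque


class OverrideRateMonitor:
    """Tracks how often humans override or edit AI outputs over a sliding window."""

    def __init__(self, window=500, baseline_rate=0.05, alert_ratio=2.0, min_samples=100):
        self.events = deque(maxlen=window)  # oldest events fall off automatically
        self.baseline_rate = baseline_rate  # override rate considered normal
        self.alert_ratio = alert_ratio      # alert when the rate reaches this multiple
        self.min_samples = min_samples      # avoid alerting on a handful of events

    def record(self, overridden: bool) -> None:
        self.events.append(overridden)

    @property
    def rate(self) -> float:
        return sum(self.events) / len(self.events) if self.events else 0.0

    def should_alert(self) -> bool:
        return (
            len(self.events) >= self.min_samples
            and self.rate >= self.baseline_rate * self.alert_ratio
        )
```

A rising override rate is the quantitative version of "manual overrides become common," and it can be reported to leadership before trust visibly erodes.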

How Engineers Design for Data Reality

Production-ready teams assume data will degrade.

They design systems that:

- Validate inputs before inference

- Track confidence and anomaly signals

- Log data patterns over time

- Separate business logic from AI interpretation

- Enable human escalation when confidence drops

This isn’t pessimism.

It’s experience.
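Put together, these design principles can be sketched as a single request handler: validate first, call the model only on inputs that pass, send low-confidence results to a person, apply business rules in ordinary code rather than leaving them to the model's interpretation, and log every decision. `Prediction`, the 0.8 threshold, the `approve_refund` label, and the refund limit are hypothetical, and the confidence score is assumed to be reasonably calibrated, which is itself something to verify.

```python
import logging
from dataclasses import dataclass
from typing import Callable, List, Optional

logger = logging.getLogger("inference")

REFUND_LIMIT = 500.0  # hypothetical business rule, kept in plain code


@dataclass
class Prediction:
    label: str
    confidence: float  # assumed to be a calibrated score in [0, 1]


@dataclass
class Decision:
    route: str  # "auto", "human_review", or "rejected"
    label: Optional[str]
    reason: str


def business_check(record: dict, pred: Prediction) -> Optional[str]:
    """Deterministic rules the model is never trusted to interpret on its own."""
    if pred.label == "approve_refund" and record.get("amount", 0) > REFUND_LIMIT:
        return "refund above limit always needs a person"
    return None


def handle(
    record: dict,
    validate: Callable[[dict], List[str]],
    model: Callable[[dict], Prediction],
    confidence_threshold: float = 0.8,
) -> Decision:
    problems = validate(record)  # 1. validate before inference
    if problems:
        decision = Decision("rejected", None, "; ".join(problems))
    else:
        pred = model(record)  # 2. inference only on inputs that passed
        rule = business_check(record, pred)
        if pred.confidence < confidence_threshold:  # 3. escalate when unsure
            decision = Decision(
                "human_review", pred.label,
                f"confidence {pred.confidence:.2f} < {confidence_threshold}",
            )
        elif rule:  # 4. business logic overrides the model's answer
            decision = Decision("human_review", pred.label, rule)
        else:
            decision = Decision("auto", pred.label, "passed all checks")
    # 5. log every decision so data patterns can be tracked over time
    logger.info("route=%s reason=%s", decision.route, decision.reason)
    return decision
```

Keeping the refund rule outside the model means it still holds when the data drifts and the model's judgment gets worse.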

The Business Risk of Ignoring Data Quality

When data quality isn’t treated as an operational concern:

- AI trust declines

- Manual work increases

- Compliance risk rises

- Costs grow quietly

- Teams lose confidence in AI initiatives

Executives often ask:

Why did this work before?

The honest answer:

Because reality hadn’t arrived yet.

Conversation Starters: Engineering ↔ Leadership

For Leadership to Ask Engineering

(to understand risk and durability)

- How does the system detect changes in data quality over time?

- Which inputs are most sensitive to degradation?

- What signals tell us trust is declining before users complain?

For Engineering to Ask Leadership

(to align expectations and priorities)

- How much data variability is acceptable before intervention?

- Where is correctness more important than speed?

- Which workflows justify added validation or human review?

These conversations are meant to prevent surprises, not to explain them afterward.

Final Thought

Data quality problems don’t appear suddenly.

They arrive quietly, gradually, and convincingly.

AI systems don’t fail because teams ignore data.

They fail because data behaves differently in the real world than it does in controlled environments.

The best AI systems aren’t the ones with perfect data.

They’re the ones designed for imperfect reality.

And that’s not an AI problem.

That’s an engineering one.

Frequently Asked Questions

Why do data quality problems usually appear only in production?

Because prototypes rely on curated, historical, and well-formed data. In production, real users introduce variability, incomplete inputs, edge cases, and unexpected behavior. These conditions don’t exist in controlled testing environments, so issues remain hidden until the system is exposed to reality.

What is data drift, and why is it so hard to detect?

Data drift occurs when the statistical properties of input data change over time. It’s difficult to detect because it often happens gradually. AI systems may continue producing outputs that appear reasonable while accuracy and trust quietly degrade.

Why does training data differ so much from operational data?

Training data is usually cleaned, labeled, reviewed, and static. Operational data is live, inconsistent, and influenced by user behavior, process changes, and external systems. AI models don’t automatically adapt to these differences unless they’re explicitly designed to.

Can AI systems detect poor data quality on their own?

Not reliably. AI models generally assume inputs are valid. Without validation, monitoring, or confidence tracking, systems may continue operating while producing increasingly incorrect results.

Why don’t data quality issues always trigger errors?

Unlike traditional software, which usually fails with an explicit error, AI systems produce probabilistic outputs. A result can be partially correct or plausibly wrong without failing outright. This makes data quality problems harder to spot and easier to ignore until the impact becomes visible.

How does poor data quality affect AI costs?

Poor data quality increases retries, manual reviews, reprocessing, and human intervention. Over time, these effects amplify inference costs, operational overhead, and support burden — often without clear attribution.

What types of data issues are most common in production AI systems?

Common issues include:

- Missing or incomplete inputs

- Unexpected formats or structures

- Ambiguous user intent

- Changes in upstream systems

- Gradual shifts in behavior or terminology

These rarely show up in early testing.

How do experienced engineering teams manage data quality in production?

They assume data will degrade and design for it by:

- Validating inputs before inference

- Tracking confidence and anomaly signals

- Monitoring data patterns over time

- Separating business logic from AI interpretation

- Escalating to humans when uncertainty increases

Is data quality a data science problem or an engineering problem?

It’s both — but in production, it’s primarily an engineering responsibility. Data quality becomes an operational concern involving pipelines, monitoring, validation, and system design, not just model training.

What happens when data quality isn’t addressed early?

Organizations often experience:

- Declining trust in AI outputs

- Increased manual work

- Compliance and audit risks

- Quiet cost growth

- Eventually, stalled or abandoned AI initiatives

By the time leadership notices, remediation is much harder.

How early should teams plan for data quality issues?

Before the system reaches production. Data quality controls are far easier to design early than to retrofit later. Teams that wait often discover the problem only after user trust has already been damaged.
