In traditional software, errors are usually obvious.

A service throws an exception.

A request fails.

A user sees a broken screen.

In AI systems, the most dangerous errors don’t crash anything.

They look like success.

That’s why error handling matters more in AI than it ever did in traditional software—and why teams that reuse old assumptions quietly ship risk into production.

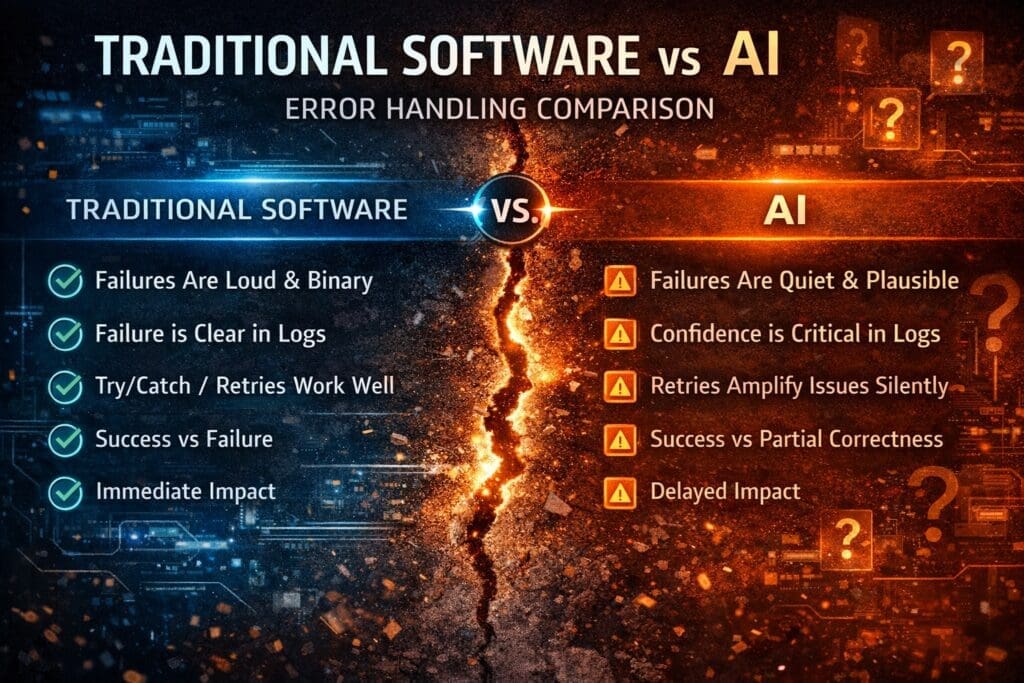

Traditional Software Fails Loudly

Most engineers were trained in environments where:

- Inputs are validated

- Outputs are deterministic

- Errors are explicit

- Failure is binary (it worked or it didn’t)

When something breaks, you know immediately.

Logs spike.

Alerts fire.

Customers complain.

This shaped decades of error-handling patterns:

- Try/catch blocks

- HTTP status codes

- Retries

- Circuit breakers

These still matter—but they’re not enough for AI.

AI Fails Quietly (and Convincingly)

AI introduces failure modes that traditional software never had to deal with:

- Partial correctness

- Plausible but wrong answers

- Confidence without accuracy

- Drift over time

- Context-dependent mistakes

An AI system can:

- Return something

- Sound confident

- Pass basic validation

- Be wrong in subtle, harmful ways

And nothing crashes.

From the system’s perspective, everything worked.

From the business’s perspective, damage is accumulating.

The Most Dangerous AI Error Is “Looks Fine”

Consider scenarios like these:

- A document extraction model misreads one clause in 3% of contracts

- A classification model routes edge cases to the wrong workflow

- A summarization model omits a critical exception

- An assistant provides outdated guidance with high confidence

No exception is thrown.

No alert fires.

No one notices—until consequences surface later.

Traditional error handling was never designed for this.

Error Handling in AI Is About Uncertainty, Not Exceptions

In AI systems, the key question is not:

Did it fail?

It’s:

How confident should we be in this result—and what do we do if we’re wrong?

That shifts error handling from:

- Exception-based → probability-based

- Binary outcomes → confidence thresholds

- Immediate failure → delayed impact

Which means error handling must be designed, not improvised.

Four AI Error-Handling Patterns That Matter in Production

1. Partial Correctness Must Be Treated as a First-Class Outcome

AI outputs are rarely 100% right or 100% wrong.

Systems must explicitly handle:

- “Mostly correct”

- “Correct but incomplete”

- “Correct structure, wrong substance”

If your system only understands success vs failure, it cannot see most of the ways AI actually goes wrong.
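
Here is a minimal sketch of partial correctness as an explicit outcome. It assumes a hypothetical contract-extraction step that returns a dictionary of fields. Instead of a boolean, the check returns a graded `Outcome`. `INCOMPLETE` corresponds to “correct but incomplete,” and `SUSPECT` corresponds to “correct structure, wrong substance.” The `assess` helper, the field names, and the validation rule are all illustrative, not a prescribed schema.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Any, Callable


class Outcome(Enum):
    COMPLETE = "complete"      # required fields present, checks pass
    INCOMPLETE = "incomplete"  # what is there looks right, but fields are missing
    SUSPECT = "suspect"        # right structure, but a value fails a check
    INVALID = "invalid"        # not the expected structure at all


@dataclass
class Assessment:
    outcome: Outcome
    missing: list[str] = field(default_factory=list)
    failed_checks: list[str] = field(default_factory=list)


def _present(value: Any) -> bool:
    return value not in (None, "")


def assess(output: Any, required: list[str],
           checks: dict[str, Callable[[Any], bool]]) -> Assessment:
    """Grade an extraction result instead of returning pass/fail."""
    if not isinstance(output, dict):
        return Assessment(Outcome.INVALID)
    missing = [name for name in required if not _present(output.get(name))]
    # Substantive checks run only on fields that are actually present.
    failed = [name for name, check in checks.items()
              if _present(output.get(name)) and not check(output[name])]
    if failed:
        return Assessment(Outcome.SUSPECT, missing, failed)
    if missing:
        return Assessment(Outcome.INCOMPLETE, missing)
    return Assessment(Outcome.COMPLETE)


# Hypothetical schema for a contract-extraction step.
REQUIRED = ["party", "effective_date", "notice_days"]
CHECKS = {"notice_days": lambda v: isinstance(v, int) and 0 < v <= 365}
```

Downstream code can then treat each outcome differently. For example, it can automate `COMPLETE`, request the missing fields for `INCOMPLETE`, and send `SUSPECT` to review.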

2. Confidence Thresholds Are Error Handling

Confidence scores are not just metadata.

They are control signals.

Disciplined systems use confidence to:

- Route outputs to humans

- Trigger secondary validation

- Block automation

- Log high-risk decisions

Ignoring confidence is equivalent to disabling error handling entirely.
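
This sketch shows confidence acting as a control signal rather than a logged afterthought. It assumes the model or pipeline exposes a score between 0 and 1 that is reasonably calibrated. Raw scores are not always calibrated, so verify this before relying on it. It also assumes a `high_risk` flag set by business rules. The `route` function, the `Thresholds` values, and the three routes are hypothetical.

```python
import logging
from dataclasses import dataclass
from enum import Enum

logger = logging.getLogger(__name__)


class Route(Enum):
    AUTOMATE = "automate"                # proceed without intervention
    SECONDARY_CHECK = "secondary_check"  # run extra validation before acting
    HUMAN_REVIEW = "human_review"        # block automation, send to a person


@dataclass(frozen=True)
class Thresholds:
    automate_at: float = 0.90   # hypothetical values; tune per use case
    review_below: float = 0.60


def route(confidence: float, high_risk: bool = False,
          t: Thresholds = Thresholds()) -> Route:
    """Turn a confidence score into a control decision, not just a log field."""
    if not 0.0 <= confidence <= 1.0:
        # An out-of-range or NaN score is itself an error signal.
        logger.warning("invalid confidence %r; routing to review", confidence)
        return Route.HUMAN_REVIEW
    if high_risk:
        # High-risk decisions always get a human and a log entry.
        logger.warning("high-risk decision at confidence %.2f; routing to review",
                       confidence)
        return Route.HUMAN_REVIEW
    if confidence < t.review_below:
        return Route.HUMAN_REVIEW
    if confidence >= t.automate_at:
        return Route.AUTOMATE
    return Route.SECONDARY_CHECK
```

Where the thresholds sit is a business decision as much as a technical one. Lowering `automate_at` reduces review cost but lets more uncertain outputs through.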

3. Retries Can Make Things Worse

In traditional software, retries often help.

In AI systems, retries can:

- Multiply cost

- Reinforce the same mistake

- Create inconsistent outputs

- Mask underlying data issues

Retry logic must be:

- Bounded

- Context-aware

- Cost-aware

“Just retry” is not an error strategy—it’s denial.
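
Bounded, context-aware, and cost-aware retries can be combined in a small wrapper. This is a sketch under stated assumptions. `call` is a hypothetical function that performs one AI request and reports three things: the request's cost, whether a failure looked transient, and whether the output passed validation. The wrapper applies three limits:

- An attempt count bounds the loop.
- A budget caps spend.
- A repeated identical invalid answer stops retries early, because that suggests the model is reinforcing the same mistake.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Attempt:
    """What one AI call reports back (all fields are illustrative)."""
    output: Optional[str]
    cost: float       # spend for this call, in whatever unit you budget
    retryable: bool   # did the failure look transient, e.g. a timeout?
    valid: bool       # did the output pass validation?


def bounded_retry(call: Callable[[int], Attempt], max_attempts: int = 3,
                  budget: float = 0.05) -> tuple[Optional[str], str]:
    """Retry with attempt, cost, and repetition limits.

    Returns (output, reason); output is None whenever the caller should
    escalate instead of trusting the result.
    """
    spent = 0.0
    seen: set[str] = set()
    for attempt_no in range(1, max_attempts + 1):
        result = call(attempt_no)  # caller may change prompt/context per attempt
        spent += result.cost
        if result.valid:
            return result.output, "ok"
        if result.output is not None:
            if result.output in seen:
                # Same invalid answer twice: more retries likely repeat the mistake.
                return None, "repeated_output"
            seen.add(result.output)
        if not result.retryable:
            # Not transient (bad input, data issue): retrying would only mask it.
            return None, "not_retryable"
        if spent >= budget:
            # Checked between calls, so a single call can still overshoot.
            return None, "budget_exhausted"
    return None, "max_attempts"
```

Every exit other than `"ok"` returns `None`, and the caller should treat that as a reason to escalate, not as an empty success. `call` receives the attempt number, so it can change context between attempts (for example, a revised prompt or a smaller input). That is one way to make retries context-aware.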

4. Human Escalation Is Not Failure

Human-in-the-loop is often framed as a compromise.

In reality, it is the final error handler.

Well-designed AI systems:

- Know when they are uncertain

- Hand off gracefully

- Preserve context for human review

- Learn from escalations

Poorly designed systems act as if they were fully autonomous until something breaks.
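
A graceful hand-off mostly comes down to two things: capturing context at the moment of escalation, and keeping the human's answer afterward. The sketch below uses an in-memory queue as a stand-in for whatever review tool a team actually uses. `Escalation`, `EscalationQueue`, and their fields are hypothetical names chosen for illustration.

```python
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Escalation:
    """Context captured at hand-off time so a reviewer does not start from zero."""
    request_id: str
    model_input: str
    model_output: Optional[str]
    confidence: Optional[float]
    reason: str  # why the system escalated, e.g. "low_confidence"
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())
    resolution: Optional[str] = None  # filled in by the human reviewer


class EscalationQueue:
    """In-memory stand-in for a real review queue or ticketing system."""

    def __init__(self) -> None:
        self._open: dict[str, Escalation] = {}
        self.feedback: list[dict] = []  # resolved cases, kept for later analysis

    def hand_off(self, item: Escalation) -> None:
        """Park the case for review instead of guessing an answer."""
        self._open[item.request_id] = item

    def pending(self) -> list[str]:
        return list(self._open)

    def resolve(self, request_id: str, human_output: str) -> Escalation:
        """Record the reviewer's answer alongside the original context."""
        item = self._open.pop(request_id)
        item.resolution = human_output
        self.feedback.append(asdict(item))
        return item
```

The `feedback` list is where “learn from escalations” can start. Each resolved case keeps its original input and output, so the cases can be reviewed for patterns or turned into evaluation examples.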

Why AI Error Handling Is a Business Risk Topic

This isn’t just an engineering concern.

Poor AI error handling leads to:

- Legal exposure (no audit trail)

- Regulatory violations (no explainability)

- Reputation damage (silent wrong answers)

- Cost overruns (uncontrolled retries)

- Loss of executive trust (“we don’t know what it’s doing”)

When leaders say “we don’t trust the AI,” they’re often reacting to missing error handling, not bad models.

Error Handling Belongs Around AI, Not Inside It

A critical architectural principle:

AI should not decide how errors are handled.

Error handling belongs in deterministic systems that:

- Control workflows

- Enforce policies

- Track accountability

- Trigger escalation

AI produces signals.

Engineering systems decide what those signals mean.

This separation is what keeps AI usable at scale.
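
Here is a minimal sketch of that separation, assuming a hypothetical classifier that returns a label and a confidence score. The model only produces a `Signal`. A deterministic `decide` function applies policy, and `handle` records every outcome in an audit log. The allowed labels, the threshold, and the rule that legal items always go to a person are placeholders for whatever a real policy would say.

```python
import logging
from dataclasses import dataclass
from typing import Callable

logger = logging.getLogger("ai_gateway")

ALLOWED_LABELS = {"billing", "support", "legal"}  # hypothetical policy
ALWAYS_HUMAN = {"legal"}                          # hypothetical: never automated
MIN_CONFIDENCE = 0.8                              # hypothetical threshold


@dataclass(frozen=True)
class Signal:
    """All the model contributes: a proposed label and a confidence score."""
    label: str
    confidence: float


@dataclass(frozen=True)
class Decision:
    action: str  # "apply", "escalate", or "reject"
    label: str
    reason: str


def decide(signal: Signal) -> Decision:
    """Deterministic policy: the same signal always yields the same decision."""
    if signal.label not in ALLOWED_LABELS:
        return Decision("reject", signal.label, "label_not_allowed")
    if not 0.0 <= signal.confidence <= 1.0:
        return Decision("escalate", signal.label, "invalid_confidence")
    if signal.label in ALWAYS_HUMAN:
        return Decision("escalate", signal.label, "policy_requires_human")
    if signal.confidence < MIN_CONFIDENCE:
        return Decision("escalate", signal.label, "low_confidence")
    return Decision("apply", signal.label, "policy_passed")


def handle(text: str, classify: Callable[[str], Signal],
           audit_log: list[dict]) -> Decision:
    """Call the model, let policy code decide, and record every outcome."""
    try:
        signal = classify(text)
    except Exception as exc:  # loud model failures still get classic handling
        decision = Decision("escalate", "", f"model_error:{type(exc).__name__}")
    else:
        decision = decide(signal)
    audit_log.append({"input": text, "action": decision.action,
                      "label": decision.label, "reason": decision.reason})
    logger.info("action=%s reason=%s", decision.action, decision.reason)
    return decision
```

`decide` is plain code with no model inside it, so the policy can be tested like any other function, and the audit log can explain every outcome. Exceptions from the model call are still caught. Classic error handling remains part of the picture; it just is no longer the whole picture.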

Why This Is Invisible When Done Right

Good AI error handling:

- Prevents incidents quietly

- Routes edge cases safely

- Reduces surprises

- Protects the business

No one notices.

Which is why it’s often underfunded, rushed, or skipped.

Until it isn’t.

Conversation Starters: Engineering ↔ Leadership

These questions are designed to surface assumptions before production does.

For Leadership to Ask Engineering

(to understand risk and operational exposure)

- How does the system handle “uncertain but plausible” AI outputs?

- Which errors would be invisible without explicit safeguards?

- Where do humans step in—and why there?

For Engineering to Ask Leadership

(to understand priorities and tolerance)

- Which mistakes are unacceptable even at low probability?

- Where is speed more important than certainty—and where isn’t it?

- How should we balance cost vs confidence?

These are not hypothetical questions.

They define how the system behaves under pressure.

Final Thought

Traditional software assumes correctness.

AI assumes uncertainty.

If your error handling hasn’t evolved to reflect that shift, the system hasn’t either.

AI doesn’t need perfection.

It needs discipline around imperfection.

That’s what keeps production systems—and businesses—safe.

Frequently Asked Questions

Why does error handling matter more in AI than traditional software?

Because AI failures are often silent and plausible. Traditional software usually fails loudly with exceptions or crashes. AI systems can return confident-sounding but incorrect results, making errors harder to detect and far more dangerous.

How do AI errors differ from traditional software errors?

Traditional software errors are binary—success or failure.

AI errors are probabilistic and nuanced, including:

- Partial correctness

- Context-dependent mistakes

- Confident but wrong outputs

- Gradual performance drift

These require different error-handling strategies.

What is the most dangerous type of AI error?

The most dangerous AI error is one that looks correct. Plausible outputs that are wrong can quietly influence decisions, workflows, and customers without triggering alerts or exceptions.

Why don’t standard try/catch and retry patterns work well for AI?

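Because try/catch only catches failures that raise an exception, and a plausible but wrong AI output raises none. Retries bring their own problems. They can: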

- Multiply inference costs

- Repeat the same mistake

- Mask data or prompt issues

- Create inconsistent results

AI retries must be bounded, contextual, and cost-aware, not automatic.

What role do confidence scores play in AI error handling?

Confidence scores are not just metadata. They are control signals.

They should determine:

- Whether automation proceeds

- When human review is required

- When additional validation is triggered

Ignoring confidence effectively disables AI error handling.

Is human-in-the-loop a sign of AI failure?

No. Human-in-the-loop is a safety mechanism, not a weakness. It acts as the final error handler when uncertainty is high and accountability matters.

How should AI systems handle partial correctness?

AI systems must explicitly model partial correctness as a valid outcome. Treating AI outputs as simply “right or wrong” ignores reality and leads to hidden risk in production.

Where should AI error handling live architecturally?

Error handling should live around AI, not inside it.

Deterministic systems should:

- Control workflows

- Enforce policies

- Handle escalation

- Preserve audit trails

AI should produce signals—not decide consequences.

Why don’t AI error-handling problems show up in prototypes?

Because prototypes operate under ideal conditions. Real-world data, edge cases, scale, cost pressure, and user behavior only appear in production—where weak error handling is exposed.

How does poor AI error handling impact the business?

It can lead to:

- Legal and compliance exposure

- Reputational damage

- Undetected data issues

- Cost overruns

- Loss of executive trust

These are business failures, not technical curiosities.

What’s the first step to improving AI error handling?

Start by asking:

- What happens when the AI is uncertain but still responds?

- Which mistakes are unacceptable, even at low probability?

- Where should humans intervene—and why there?

Good error handling begins with good questions.

Do AI systems require stricter engineering discipline than traditional software?

Yes. AI introduces uncertainty, ambiguity, and delayed consequences. That requires more rigor, not less—especially in error handling, observability, and escalation design.
