Many organizations want AI. Few are willing to do the foundational work that makes it successful.

Many also feel pressure to “add AI.”

Sometimes that pressure comes from leadership.

Sometimes from competitors.

Sometimes from board decks, annual reports, or vendor presentations.

The problem is not interest in AI.

The problem is jumping straight to tools and models before the organization has agreed on what “better” actually means.

There is a simpler, safer place to start.

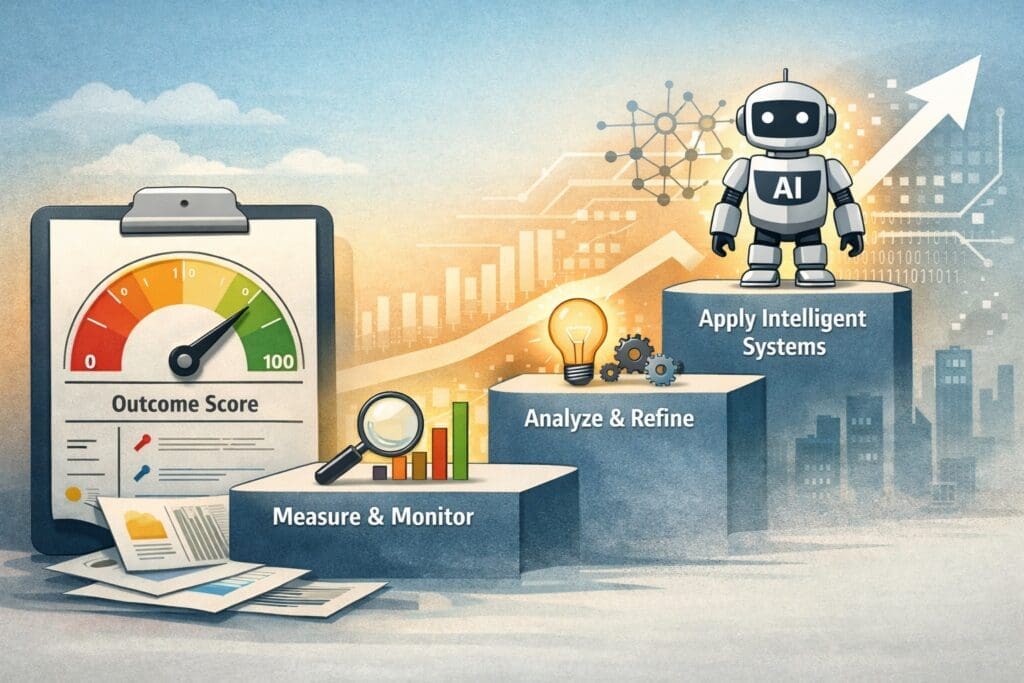

Start by Making Judgment Measurable

Before introducing machine learning models, large language models, or automation, organizations should ask a more basic question:

How do we know whether an outcome is improving or getting worse?

One practical approach is to define a single outcome score, typically on a 0–100 scale:

- 0 = unacceptable outcome

- 100 = ideal outcome

At first, the calculation behind this score will be imperfect, typically built from some mix of:

- Business rules

- Weighted factors

- Heuristics

- Subjective inputs

That’s expected.

The goal is not precision on day one.

The goal is visibility.
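To make the idea concrete, here is a minimal Python sketch of such a score. It assumes each factor has already been normalized to a 0–1 value (1 = best) and given a weight by the people who own the outcome. The `Factor` type, the factor names, and the single `hard_fail` business rule are hypothetical illustrations, not a prescribed formula.

```python
from dataclasses import dataclass


@dataclass
class Factor:
    """One hypothetical input to the outcome score."""
    name: str
    weight: float  # relative importance; weights need not sum to 1
    value: float   # normalized: 0.0 = worst, 1.0 = best


def outcome_score(factors: list[Factor], hard_fail: bool = False) -> float:
    """Combine weighted factors into a single 0-100 outcome score.

    `hard_fail` stands in for a business rule that forces an
    unacceptable outcome (score 0) regardless of the other factors.
    """
    if hard_fail:
        return 0.0
    total_weight = sum(f.weight for f in factors)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    # Clamp each value into [0, 1] so one bad input cannot push the score off-scale.
    weighted = sum(f.weight * min(max(f.value, 0.0), 1.0) for f in factors)
    return round(100.0 * weighted / total_weight, 1)


# Illustrative inputs only; real factors and weights come from the business.
factors = [
    Factor("on_time_delivery", weight=0.5, value=0.9),
    Factor("defect_free", weight=0.3, value=0.8),
    Factor("reviewer_rating", weight=0.2, value=0.6),
]
print(outcome_score(factors))  # 81.0
```

Keeping the formula this plain is a deliberate choice in the sketch: anyone reviewing it can see which inputs matter, question the weights, and point out where a value is a guess.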

Why an Imperfect Metric Is Better Than No Metric

Many AI initiatives fail not because the technology is weak, but because:

- Success was never clearly defined

- Judgment lived only in people’s heads

- Feedback loops were missing

- Improvement could not be measured

A visible metric — even a flawed one — forces important conversations:

- What does “good” actually mean?

- Which inputs matter most?

- Where are we guessing?

- What assumptions are we making?

- What would improvement look like?

Those discussions are foundational to applied AI.

This Is How Real Systems Are Built

In manufacturing, laboratory automation, and industrial systems, the pattern is well understood:

- Instrument first

- Measure something imperfect

- Observe trends

- Refine the signal

- Automate decisions only when confidence is earned

No responsible engineer installs a complex control system before sensors exist.

AI should follow the same discipline.

When the Business Needs Something to Report

Some organizations are under legitimate pressure to demonstrate AI progress:

- Board updates

- Executive reporting

- Annual disclosures

- Innovation initiatives

In those situations, something will be reported.

The responsible question becomes:

What can be reported honestly, defensibly, and without unnecessary risk?

An outcome-based metric provides a legitimate answer:

- The organization has defined an objective

- The objective is being measured consistently

- The measurement is improving over time

- A foundation for future intelligence exists

This is far more credible than claiming AI adoption based solely on tools, demos, or pilot projects that never reach production.

From Measurement to Real AI Capability

Once an organization is comfortable measuring outcomes, there are several responsible next steps.

1. Improve the Measurement

- Better data inputs

- Clearer weighting

- Human feedback loops

- Trend analysis

- Explainability
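Two of these improvements, explainability and trend analysis, can start very simply. The sketch below assumes the same kind of weighted factors normalized to 0–1. It breaks a score into the points each factor contributes and estimates the trend as an ordinary least-squares slope over equally spaced reporting periods. The function names and inputs are hypothetical.

```python
def contributions(factors: dict[str, tuple[float, float]]) -> dict[str, float]:
    """Explain a 0-100 score as the points contributed by each factor.

    `factors` maps a hypothetical factor name to (weight, normalized value 0-1).
    The returned points add up to the overall score.
    """
    total_weight = sum(w for w, _ in factors.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return {name: 100.0 * w * v / total_weight for name, (w, v) in factors.items()}


def trend_per_period(scores: list[float]) -> float:
    """Ordinary least-squares slope of scores over equally spaced periods.

    A positive slope means the outcome is improving; a negative one means
    it is getting worse. Units are score points per period.
    """
    n = len(scores)
    if n < 2:
        raise ValueError("need at least two periods to estimate a trend")
    x_mean = (n - 1) / 2
    y_mean = sum(scores) / n
    num = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(scores))
    den = sum((x - x_mean) ** 2 for x in range(n))
    return num / den


# Illustrative values only.
print(contributions({"on_time_delivery": (0.5, 0.9), "defect_free": (0.5, 0.6)}))
print(trend_per_period([60, 62, 64, 66]))  # 2.0
```

A per-factor breakdown answers "why is the score what it is?", and a single slope answers "is it getting better?", which are usually the first two questions a review raises.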

2. Introduce Intelligence Gradually

Only after the metric is trusted does it make sense to consider:

- Predictive models

- Anomaly detection

- Optimization

- Machine learning

- AI-assisted decision support

At this stage, AI becomes an incremental improvement, not a leap of faith.
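As an example of how modest that first increment can be, anomaly detection on the outcome score does not have to begin with machine learning. The sketch below uses a simple trailing z-score rule, an illustrative choice rather than a recommended method: it flags a period whose score sits more than `threshold` standard deviations from the mean of the previous `window` periods. The function name and default values are assumptions.

```python
from statistics import mean, stdev


def flag_anomalies(scores: list[float], window: int = 7, threshold: float = 3.0) -> list[int]:
    """Return the indices of scores that deviate sharply from recent history.

    A score is flagged when it lies more than `threshold` standard deviations
    from the mean of the previous `window` scores (a trailing z-score).
    """
    if window < 2:
        raise ValueError("window must be at least 2 to estimate spread")
    flagged = []
    for i in range(window, len(scores)):
        history = scores[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # Perfectly flat history: any change at all is unusual.
            if scores[i] != mu:
                flagged.append(i)
            continue
        if abs(scores[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


# Illustrative daily scores with one sharp drop.
print(flag_anomalies([80, 81, 79, 80, 82, 81, 80, 55, 80]))  # [7]
```

A rule this simple is easy to explain and easy to switch off, which is what makes it a reasonable stepping stone; a learned model could replace it later if the rule proves too noisy for a given process.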

Core AI Applications That Actually Work in Enterprises

When organizations are ready to move beyond early measurement, applied AI tends to succeed in a few well-defined areas:

- Decision support systems

- Risk and quality scoring

- Process monitoring and exception detection

- AI-assisted analysis for business and technical teams

These patterns work because:

- Risk is bounded

- Value is measurable

- Governance is enforceable

- Systems remain auditable and reversible

This is where AI stops being a reporting artifact and starts delivering real operational value.

Why This Approach Survives Reality

This staged approach to AI adoption:

- Works in Microsoft and .NET-centric environments

- Survives governance, legal, and security review

- Avoids catastrophic failure

- Reduces political risk

- Aligns technology with accountability

Most importantly, it respects how real organizations actually function.

A Quiet Truth About AI Adoption

If an organization cannot agree on how to measure improvement today, no AI model will save it tomorrow.

But if it can define, observe, and improve a single meaningful outcome, it has already done the hardest part of AI work, whether it calls it AI or not.

Final Thought

You don’t start by making systems intelligent.

You start by making judgment visible.

Everything else follows.

Frequently Asked Questions

What does “starting with a number” mean in AI adoption?

It means defining a measurable outcome — often on a 0–100 scale — that represents how well a process, decision, or result is performing. The calculation may be imperfect at first, but it makes judgment explicit and visible, which is a prerequisite for responsible AI adoption.

Is a simple score or metric really considered AI?

The metric itself is not advanced AI, but it is a legitimate foundation for applied AI. AI systems rely on measurable outcomes, feedback loops, and improvement over time. Without those, machine learning and automation tend to fail regardless of sophistication.

Why not start directly with machine learning or large language models?

Because models amplify whatever assumptions already exist. If an organization has not clearly defined what “better” means, AI models will optimize the wrong things faster. Starting with measurement reduces risk and improves long-term outcomes.

How does this approach help organizations that need to report AI progress?

Many organizations must show progress to executives, boards, or stakeholders. Defining and improving an outcome-based metric provides a defensible, honest way to report AI-related progress without overstating capability or introducing unnecessary risk.

Isn’t this just AI theater?

No — AI theater is claiming intelligence without substance. This approach explicitly acknowledges limitations, documents assumptions, and creates a path to real capability. It avoids pretending certainty exists where it does not.

When does it make sense to introduce “real” AI?

Once the metric is trusted and consistently used, organizations can responsibly introduce:

- Predictive models

- Anomaly detection

- Optimization algorithms

- Machine learning

- AI-assisted decision support

At that point, AI becomes an incremental enhancement rather than a gamble.

Does this approach work in enterprise and regulated environments?

Yes. This method aligns well with enterprise realities because it is:

- Explainable

- Auditable

- Reversible

- Compatible with governance, security, and compliance requirements

It is particularly effective in Microsoft and .NET-centric environments.

How does this differ for small businesses versus large enterprises?

Small businesses often need speed and leverage, while large enterprises need control, governance, and risk management. This approach scales to both, but the level of formality, documentation, and oversight differs significantly.

What are “core AI applications” in this context?

Core AI applications are applied patterns that consistently deliver value, such as:

- Decision support systems

- Risk and quality scoring

- Process monitoring

- Exception detection

- AI-assisted analysis

These focus on augmenting human judgment rather than replacing it.

What if leadership just wants to say “we have AI”?

If reporting pressure exists, the responsible approach is to define measurable outcomes and show continuous improvement. This is far more credible than deploying immature AI systems purely for optics.

What is the biggest mistake organizations make with AI?

Skipping measurement. Organizations often jump to tools and models without agreeing on outcomes, which leads to wasted effort, political conflict, and failed initiatives.

How do you determine whether an organization is ready for AI?

Readiness is less about technology and more about:

- Willingness to define outcomes

- Acceptance of imperfect metrics

- Openness to feedback and iteration

- Respect for governance and safeguards

Organizations unwilling to do these things are not ready for AI — regardless of budget.

How does this approach reduce risk?

By making assumptions explicit, keeping systems explainable, introducing intelligence gradually, and avoiding irreversible automation until confidence is earned.

Is this approach compatible with future AI advancements?

Yes. Starting with measurement creates a stable foundation that can absorb new AI capabilities over time without disrupting operations or increasing risk.

How can organizations move forward responsibly from here?

They can:

- Define a meaningful outcome metric

- Make it visible and inspectable

- Improve it incrementally

- Introduce intelligence only when justified

- Automate cautiously and reversibly

That sequence is what separates durable AI systems from failed experiments.
