Independent analysis based on the 2025 McKinsey AI Report. McKinsey & Company is not affiliated with and does not endorse AInDotNet.

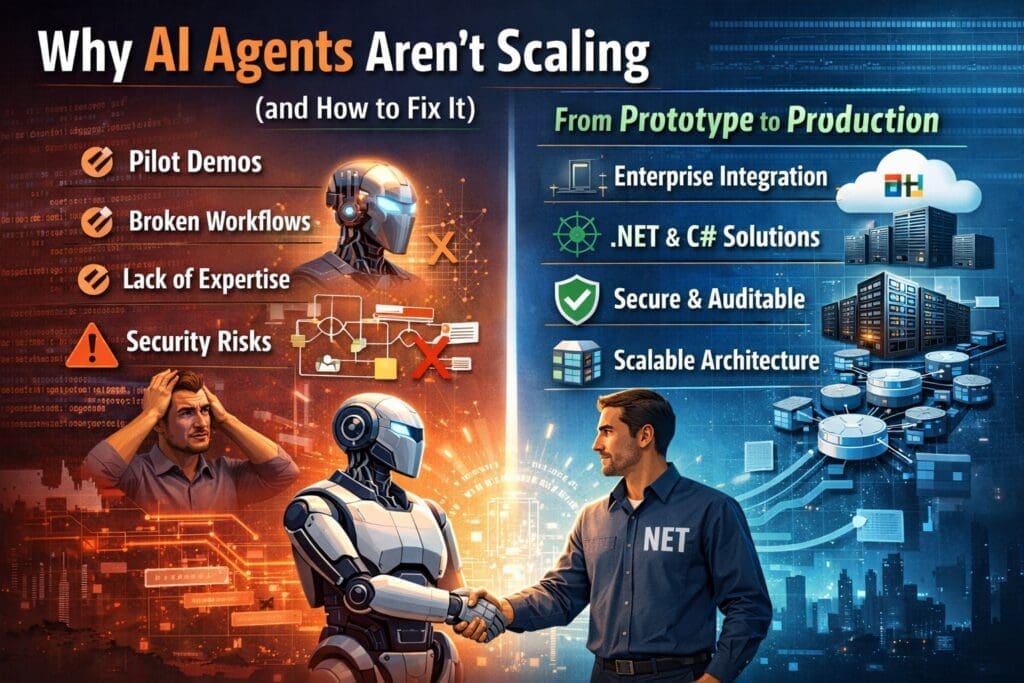

AI Agents Are Everywhere — But Almost Nowhere in Production

AI agents are one of the most talked-about trends in artificial intelligence.

According to McKinsey’s 2025 AI report, 62% of organizations are experimenting with AI agents, yet only 23% report scaling them anywhere in the enterprise, and in no single business function do more than 10% report scaling agents.

That gap is not a coincidence.

It’s a signal.

AI agents are not failing because the idea is wrong — they’re failing because most organizations are trying to scale them without enterprise-grade engineering discipline.

What Most Companies Get Wrong About AI Agents

In theory, AI agents sound simple:

- Give an AI a goal

- Let it reason

- Let it act

- Let it iterate

In practice, enterprise environments are far more complex.

Most AI agent initiatives fail for five core reasons.

1. AI Agents Are Built as Demos, Not Systems

Most AI agents today start life as:

- Jupyter notebooks

- Standalone Python scripts

- SaaS tools with limited configurability

- API-only experiments

These are fine for experimentation — but they are not production systems.

Enterprise AI agents must handle:

- Concurrency

- Async workflows

- Error handling

- Retries and fallbacks

- Load spikes

- Permission boundaries

- Logging and audit trails

Without these fundamentals, agents collapse under real-world usage.

A clever agent is not the same thing as a reliable system.
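To make "retries and fallbacks" concrete, here is a minimal sketch that wraps a model call in bounded retries with exponential backoff and returns a safe fallback when every attempt fails. It is written in Java, whose syntax maps almost line for line onto C#. The `ModelCall` interface, the attempt count, and the delay values are illustrative assumptions, not any particular SDK's API.

```java
import java.util.function.Supplier;

// Hypothetical abstraction over whatever model or agent endpoint is being called.
interface ModelCall {
    String invoke() throws Exception;
}

final class ResilientAgentCall {
    private final int maxAttempts;
    private final long baseDelayMillis;

    ResilientAgentCall(int maxAttempts, long baseDelayMillis) {
        if (maxAttempts < 1) {
            throw new IllegalArgumentException("maxAttempts must be at least 1");
        }
        this.maxAttempts = maxAttempts;
        this.baseDelayMillis = baseDelayMillis;
    }

    // Tries the primary call up to maxAttempts times, doubling the wait after each
    // failure. If every attempt fails, the fallback result is returned instead.
    String callWithFallback(ModelCall primary, Supplier<String> fallback) {
        long delayMillis = baseDelayMillis;
        for (int attempt = 1; attempt <= maxAttempts; attempt++) {
            try {
                return primary.invoke();
            } catch (Exception e) {
                // A production version would log the exception and attempt number here.
                if (attempt == maxAttempts) {
                    break;
                }
                try {
                    Thread.sleep(delayMillis);
                } catch (InterruptedException interrupted) {
                    Thread.currentThread().interrupt(); // preserve the interrupt flag
                    break;
                }
                delayMillis *= 2; // exponential backoff
            }
        }
        return fallback.get();
    }
}
```

A production version would normally retry only transient failures, such as timeouts or throttling. A permission error or a malformed request fails the same way on every attempt, so retrying it only adds load and latency.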

2. AI Agents Aren’t Integrated Into Real Workflows

Many agents live outside the business.

They:

- Don’t respect role-based access controls

- Ignore approval chains

- Bypass business rules

- Operate without accountability

As a result, they become interesting side tools instead of operational assets.

High-performing organizations do the opposite.

They embed AI agents directly into existing workflows:

- Line-of-business applications

- Internal dashboards

- Approval systems

- Document pipelines

- Customer service platforms

If an AI agent isn’t part of the workflow, it won’t scale.
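One way to keep an embedded agent inside existing permission boundaries is to let it act only on behalf of the signed-in user and to check that user's roles before every action. The sketch below (Java, structurally close to C#) assumes roles have already been resolved from the company's identity provider. The `AgentAction` values and role names are hypothetical placeholders.

```java
import java.util.Map;
import java.util.Set;

// Hypothetical actions an agent embedded in a line-of-business app might propose.
enum AgentAction { DRAFT_REPLY, ISSUE_REFUND, CLOSE_ACCOUNT }

final class AgentPermissionGuard {
    // Each action requires a role that the *calling user* must already hold.
    // Role names are placeholders for groups defined in the identity provider.
    private static final Map<AgentAction, String> REQUIRED_ROLE = Map.of(
            AgentAction.DRAFT_REPLY, "SupportAgent",
            AgentAction.ISSUE_REFUND, "RefundApprover",
            AgentAction.CLOSE_ACCOUNT, "AccountAdmin");

    private AgentPermissionGuard() { }

    // The agent never acts with more rights than the user it is working for;
    // actions without a mapped role are denied by default.
    static boolean isAllowed(Set<String> userRoles, AgentAction action) {
        String required = REQUIRED_ROLE.get(action);
        return required != null && userRoles.contains(required);
    }
}
```

In an ASP.NET Core application, the role set would typically come from the authenticated user's claims (for example, via `ClaimsPrincipal.IsInRole`). That way the agent enforces the same directory groups the rest of the application already relies on.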

3. Most Teams Building Agents Lack Distributed Systems Experience

This is the uncomfortable truth.

Many AI agents are built by:

- Data scientists

- AI researchers

- Prompt engineers

These roles are valuable, but they are rarely trained to build scalable enterprise systems.

AI agents are fundamentally distributed systems:

- Multiple services

- External APIs

- Event-driven logic

- Stateful and stateless components

- Failure scenarios

This is why many agents work beautifully in isolation — and fall apart in production.

Enterprise AI agents require software engineers who already understand scale.

This is where experienced .NET teams excel.

4. Trust, Security, and Governance Are Afterthoughts

McKinsey highlights that risk, accuracy, and trust are among the top blockers to enterprise AI adoption.

AI agents amplify this risk because they:

- Make decisions

- Trigger actions

- Interact with sensitive data

Yet many agent systems lack:

- Full request/response logging

- Human-in-the-loop controls

- Confidence thresholds

- Approval gates

- Auditability

Without these, organizations cannot deploy agents in regulated or mission-critical environments.

Trust isn’t optional — it’s architectural.
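Confidence thresholds, approval gates, and audit trails can be built as an explicit routing step between the model's proposal and any real action. This is a minimal sketch in Java (close to C# in structure). It assumes the model returns a confidence score between 0 and 1. The thresholds, the `Route` names, and the in-memory audit list are illustrative assumptions; a real system would write to durable, append-only storage.

```java
import java.time.Instant;
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

// Possible outcomes for a proposed agent action.
enum Route { AUTO_EXECUTE, REQUIRE_APPROVAL, ESCALATE_TO_HUMAN }

// One immutable audit record per routing decision.
final class AuditEntry {
    final Instant timestamp;
    final String request;
    final String proposedAction;
    final double confidence;
    final Route route;

    AuditEntry(Instant timestamp, String request, String proposedAction,
               double confidence, Route route) {
        this.timestamp = timestamp;
        this.request = request;
        this.proposedAction = proposedAction;
        this.confidence = confidence;
        this.route = route;
    }
}

final class GovernanceGate {
    private final double autoThreshold;   // at or above: may run without review
    private final double reviewThreshold; // below: too uncertain, escalate
    private final List<AuditEntry> auditLog = new ArrayList<>();

    GovernanceGate(double autoThreshold, double reviewThreshold) {
        if (reviewThreshold > autoThreshold) {
            throw new IllegalArgumentException("reviewThreshold must not exceed autoThreshold");
        }
        this.autoThreshold = autoThreshold;
        this.reviewThreshold = reviewThreshold;
    }

    // Decides what happens to a proposed action and records the decision.
    Route route(String request, String proposedAction, double confidence, boolean highImpact) {
        Route decision;
        if (confidence < reviewThreshold) {
            decision = Route.ESCALATE_TO_HUMAN;  // low confidence: a person takes over
        } else if (highImpact || confidence < autoThreshold) {
            decision = Route.REQUIRE_APPROVAL;   // a person signs off before anything runs
        } else {
            decision = Route.AUTO_EXECUTE;       // high confidence and low impact
        }
        auditLog.add(new AuditEntry(Instant.now(), request, proposedAction, confidence, decision));
        return decision;
    }

    List<AuditEntry> auditLog() {
        return Collections.unmodifiableList(auditLog);
    }
}
```

High-impact actions always go through approval, however confident the model is. This keeps accountability with a person for exactly the decisions regulators and auditors care about most.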

5. AI Agents Are Treated as “Magic,” Not Automation

This may be the biggest mistake.

AI agents are often presented as:

- Autonomous workers

- Digital employees

- Self-running systems

That framing sets unrealistic expectations.

In reality:

AI agents are automation with probabilities.

They must be:

- Scoped

- Constrained

- Measured

- Supervised

Organizations that treat agents as magic are disappointed.

Organizations that treat agents as decision-support automation succeed.

How to Fix AI Agent Scaling — The Practical Path Forward

The good news: this problem is solvable.

And enterprises already own most of the tools required.

1. Start With Microsoft Copilot as the “Starter Agent”

Copilot succeeds where many agents fail because it is:

- Secure

- Familiar

- Governed

- Often already licensed through Microsoft 365

- Already embedded in daily work

Copilot builds trust and fluency with AI-assisted workflows.

For many organizations, it is the on-ramp to agent-based thinking.

2. Extend Into Custom AI Agents Built in .NET

When Copilot isn’t enough, the next step is custom AI agents — built properly.

Enterprise-grade agents should be:

- Written in C#

- Embedded in existing applications

- Integrated with identity and permissions

- Fully logged and auditable

- Deployed via standard DevOps pipelines

This approach allows agents to inherit the company’s entire IT backbone:

- Active Directory

- Azure security

- Role-based access

- Existing data stores

- Monitoring and alerting

No parallel systems.

No shadow infrastructure.

3. Treat AI Agents Like Decision Engines, Not Employees

The most successful organizations define agents as:

- Assistive decision engines

- Task accelerators

- Recommendation systems

They:

- Require approvals for high-impact actions

- Escalate low-confidence results

- Track corrections and overrides

- Feed those corrections back into prompts, rules, and models over time

This keeps humans accountable — and AI valuable.
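Tracking corrections and overrides can start simply. For each recommendation, record whether the reviewer accepted it, then watch the override rate by task type. The `OverrideTracker` below is a hypothetical in-memory sketch in Java (readily ported to C#). In practice, these records would sit alongside the audit trail so they can be analyzed per agent, task, or model version.

```java
import java.util.ArrayList;
import java.util.List;

final class OverrideTracker {
    // One record per agent recommendation that a human reviewed.
    private static final class Review {
        final String taskType;
        final boolean overridden;

        Review(String taskType, boolean overridden) {
            this.taskType = taskType;
            this.overridden = overridden;
        }
    }

    private final List<Review> reviews = new ArrayList<>();

    // Records whether the reviewer's final decision differed from the agent's recommendation.
    void record(String taskType, String agentRecommendation, String humanDecision) {
        reviews.add(new Review(taskType, !agentRecommendation.equals(humanDecision)));
    }

    // Fraction (0.0 to 1.0) of reviewed recommendations for a task type that humans changed.
    double overrideRate(String taskType) {
        int total = 0;
        int overridden = 0;
        for (Review review : reviews) {
            if (review.taskType.equals(taskType)) {
                total++;
                if (review.overridden) {
                    overridden++;
                }
            }
        }
        return total == 0 ? 0.0 : (double) overridden / total;
    }
}
```

A rising override rate for one task type is an early signal that the agent's scope, prompts, or thresholds need attention. It surfaces well before users quietly stop trusting the tool.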

4. Build for Scale From Day One

If an agent might scale later, design it for scale now:

- Stateless services where possible

- Explicit failure handling

- Clear ownership boundaries

- Observability baked in

- Performance metrics tied to business outcomes

Retrofitting scale is far more expensive than designing for it.
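A stateless agent step receives everything it needs as input and returns an explicit result instead of throwing. Any instance behind a load balancer can then serve any request, and failures stay visible to callers. The `ConversationState`, `StepResult`, and `handle` names below are a hypothetical Java sketch of that shape (it translates directly to C#). Conversation state is assumed to live in an existing external store and to be passed in per request.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.function.Function;

// Immutable conversation snapshot, loaded from external storage for each request.
final class ConversationState {
    final List<String> turns;

    ConversationState(List<String> turns) {
        this.turns = Collections.unmodifiableList(new ArrayList<>(turns));
    }

    // Returns a new state; the original is never modified.
    ConversationState append(String turn) {
        List<String> next = new ArrayList<>(turns);
        next.add(turn);
        return new ConversationState(next);
    }
}

// Explicit outcome: either a new state or a failure reason the caller must handle.
final class StepResult {
    final ConversationState state; // null on failure
    final String error;            // null on success

    private StepResult(ConversationState state, String error) {
        this.state = state;
        this.error = error;
    }

    static StepResult ok(ConversationState state) { return new StepResult(state, null); }
    static StepResult failed(String reason) { return new StepResult(null, reason); }
    boolean isOk() { return error == null; }
}

final class StatelessAgentStep {
    private StatelessAgentStep() { }

    // Holds no conversation data between calls: everything arrives as arguments,
    // so any replica can handle any request.
    static StepResult handle(ConversationState state, String userMessage,
                             Function<ConversationState, String> model) {
        if (userMessage == null || userMessage.isBlank()) {
            return StepResult.failed("empty message");
        }
        ConversationState withUser = state.append("user: " + userMessage);
        try {
            String reply = model.apply(withUser);
            return StepResult.ok(withUser.append("agent: " + reply));
        } catch (RuntimeException e) {
            // Returning the reason (instead of throwing) lets the caller log and count failures.
            return StepResult.failed("model call failed: " + e.getMessage());
        }
    }
}
```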

Why AI Agents Will Separate Leaders From Laggards

McKinsey’s data makes one thing clear:

AI agents will matter — but only for organizations that can operationalize them.

The winners will be those who:

- Use existing enterprise platforms

- Leverage experienced software engineers

- Embed agents into workflows

- Treat AI as disciplined automation

- Build trust through governance and transparency

This is not about chasing the newest AI tools.

It’s about building systems that work — every day — at enterprise scale.

Final Thought

AI agents aren’t failing because the technology isn’t ready.

They’re failing because most organizations are trying to scale intelligence without scaling engineering discipline.

Fix that — and AI agents stop being experiments and start becoming assets.

Disclaimer

This article contains independent analysis and commentary on the publicly available 2025 McKinsey AI Report. AInDotNet, its authors, and its associated brands are not affiliated with, sponsored by, or endorsed by McKinsey & Company. All references to McKinsey’s findings are for discussion and educational purposes only. Any interpretations, opinions, or conclusions expressed are solely those of the author.

Frequently Asked Questions

What is an AI agent in an enterprise context?

An AI agent is a software component that uses AI models to assist with decisions, tasks, or workflows. In enterprise environments, AI agents typically support humans rather than operate autonomously, and are embedded into existing applications, workflows, and approval processes.

Why are AI agents so difficult to scale in large organizations?

AI agents struggle to scale because they are often built as experiments instead of production systems. Common issues include lack of integration with enterprise workflows, insufficient logging and governance, security concerns, and limited experience with distributed systems among development teams.

Are AI agents the same as autonomous AI systems?

No. Most enterprise-ready AI agents are not fully autonomous. They operate as decision-support or task-acceleration tools with defined boundaries, confidence thresholds, and human-in-the-loop controls. Treating AI agents as autonomous “digital employees” often leads to failure.

What does McKinsey say about AI agent adoption?

According to the 2025 McKinsey AI report, 62% of organizations are experimenting with AI agents, but only 23% report scaling them anywhere in the enterprise, and in no single business function do more than 10% report scaling agents. This highlights a significant gap between experimentation and operational deployment.

What role does software engineering play in scaling AI agents?

Scaling AI agents requires enterprise software engineering discipline, including experience with distributed systems, concurrency, error handling, security, logging, and DevOps. These skills are often found in experienced application development teams, such as .NET teams, rather than in experimental AI or research-focused roles.

Why do AI agents fail when built as standalone tools?

Standalone AI agents often fail because they are not connected to real business workflows. Without integration into systems like ERP, CRM, document management, or approval chains, agents remain isolated tools that lack accountability, governance, and operational relevance.

How does Microsoft Copilot fit into an AI agent strategy?

Microsoft Copilot serves as a safe and effective starting point for AI-assisted workflows. It is already embedded in Microsoft 365, governed by enterprise security, and familiar to users. Many organizations use Copilot to build trust and AI literacy before extending into custom AI agents.

Why is .NET a good platform for building scalable AI agents?

.NET is well-suited for enterprise AI agents because it supports:

- Scalable, distributed architectures

- Strong security and identity integration

- Robust logging and monitoring

- Mature DevOps pipelines

- Seamless integration with Microsoft data platforms

This allows AI agents to scale within existing enterprise infrastructure.

What governance controls should enterprise AI agents include?

Enterprise AI agents should include:

- Full request and response logging

- Human-in-the-loop approvals

- Confidence scoring and escalation rules

- Role-based access control

- Audit trails for compliance

These controls are essential for trust, regulatory compliance, and operational safety.

Can AI agents be used in regulated industries?

Yes, but only when they are designed with governance, transparency, and accountability from the start. Regulated industries such as finance, healthcare, and government require AI agents to be auditable, explainable, and secure — conditions that many experimental agent tools do not meet.

Should companies build AI agents from scratch or extend existing platforms?

In most cases, companies should extend existing platforms rather than build AI agents from scratch. Leveraging existing identity systems, security models, workflows, and DevOps pipelines significantly reduces risk and improves scalability.

What is the biggest mindset shift needed to scale AI agents?

The biggest shift is treating AI agents as automation with probabilities, not magic. Successful organizations scope agents carefully, measure performance, supervise outcomes, and continuously improve systems based on real-world feedback.

Want More?

- Check out all of our free blog articles

- Check out all of our free infographics

- We currently have two books published

- Check out our hub for social media links to stay updated on what we publish