Disclaimer: This article is an independent analysis and commentary on the 2025 McKinsey AI Report. McKinsey & Company is not affiliated with AInDotNet and does not endorse or sponsor AInDotNet or the viewpoints expressed here.

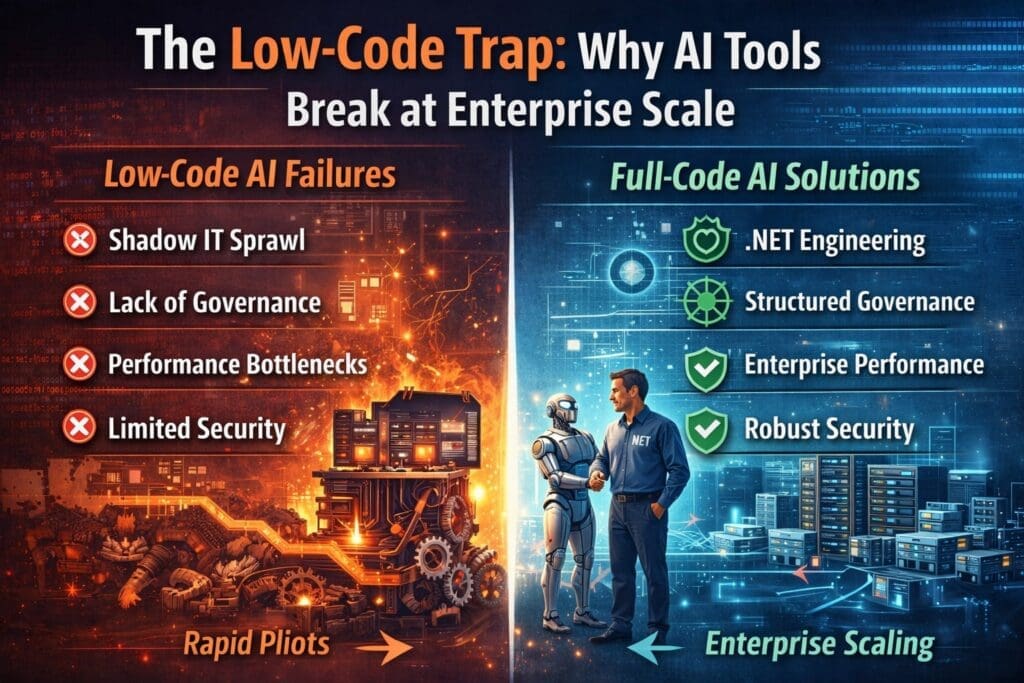

Low-Code AI Looks Like the Fastest Path — Until It Isn’t

Low-code and no-code AI tools are everywhere.

They promise:

- Faster development

- Lower cost

- Fewer engineers

- Rapid AI adoption

And at first, they deliver.

Teams build prototypes in days instead of months. Executives see quick demos. Momentum builds.

Then scale enters the picture.

According to McKinsey’s 2025 AI findings, most organizations fail to move AI from pilots into scaled production systems. While McKinsey doesn’t single out low-code platforms directly, enterprise experience makes the pattern clear:

Low-code AI tools are excellent for experimentation — and fragile at enterprise scale.

This is the low-code trap.

What Low-Code AI Tools Do Well (And Why They’re So Tempting)

Let’s be fair.

Low-code and no-code platforms succeed because they:

- Lower the barrier to entry

- Enable fast experimentation

- Empower non-developers

- Reduce initial friction

- Produce quick visual results

They are often the right starting point for:

- Individual productivity

- Department-level experiments

- Proofs of concept

- Learning AI capabilities

The problem begins when organizations mistake speed for scalability.

Why Low-Code AI Tools Break at Enterprise Scale

Enterprise systems fail differently than prototypes.

Here’s where low-code AI tools consistently collapse.

1. Low-Code AI Platforms Hide Complexity — Until It Explodes

Low-code platforms work by abstracting complexity away.

That’s helpful early on.

It’s dangerous later.

At scale, enterprises must manage:

- Concurrency

- Performance under load

- Failure modes

- Retry logic

- Partial outages

- Data contention

- Security boundaries

Low-code tools often:

- Obscure how logic executes

- Hide performance bottlenecks

- Limit control over execution paths

- Make debugging opaque

When something breaks, teams can’t see why.

Abstraction becomes a liability.
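To make one item on that list concrete, here is a minimal Python sketch of retry logic written out explicitly, the kind of behavior a visual workflow step may apply with settings you cannot inspect. The names `TransientError` and `call_with_retry`, and the default values, are illustrative assumptions and not any platform's API.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, throttling)."""


def call_with_retry(
    operation: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run operation, retrying transient failures with exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except TransientError:
            if attempt == max_attempts:
                raise  # give up loudly so the caller sees the real failure
            # The delay doubles on each attempt; a little jitter keeps many
            # clients from retrying at exactly the same moment.
            delay = base_delay * 2 ** (attempt - 1)
            sleep(delay + random.uniform(0, delay / 10))
    raise AssertionError("unreachable")
```

Because the attempt limit, the delays, and the final failure are all in plain view, they can be logged, tuned, and tested.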

2. Governance and Security Don’t Scale in Low-Code AI

Enterprise AI must comply with:

- Identity and access controls

- Role-based permissions

- Audit requirements

- Data residency rules

- Regulatory compliance

Many low-code AI tools:

- Operate outside core identity systems

- Rely on simplified permission models

- Lack granular logging

- Provide limited audit trails

This makes them risky choices in:

- Finance

- Healthcare

- Government

- Legal

- Other regulated industries

Security and governance cannot be bolted on later — they must be architectural.
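As a rough illustration of what "architectural" means here, the sketch below puts a role check and an audit entry in front of every AI action. The roles, actions, and the in-memory `AuditTrail` are hypothetical; a real system would read permissions from the organization's identity provider and write to a durable audit store.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Set

# Hypothetical role-to-action mapping, hard-coded only for the example.
ROLE_PERMISSIONS: Dict[str, Set[str]] = {
    "analyst": {"summarize_document"},
    "claims_manager": {"summarize_document", "approve_claim"},
}


@dataclass
class AuditTrail:
    """In-memory stand-in for an append-only audit store."""
    entries: List[dict] = field(default_factory=list)

    def record(self, user: str, role: str, action: str, allowed: bool) -> None:
        self.entries.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "action": action,
            "allowed": allowed,
        })


def authorize(user: str, role: str, action: str, audit: AuditTrail) -> bool:
    """Check the role's permissions and log the decision either way."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    audit.record(user, role, action, allowed)
    return allowed


audit = AuditTrail()
print(authorize("dana", "analyst", "summarize_document", audit))  # True
print(authorize("dana", "analyst", "approve_claim", audit))       # False
print(len(audit.entries))                                         # 2
```

Denied requests are logged as well as granted ones, since auditors usually care about both.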

3. Low-Code AI Creates Shadow IT and Fragmentation

One of the most damaging effects of low-code AI adoption is tool sprawl.

Departments independently create:

- Their own AI apps

- Their own workflows

- Their own data pipelines

- Their own logic

Over time, organizations inherit:

- Duplicate logic

- Conflicting rules

- Inconsistent outcomes

- Unmaintainable systems

IT teams are then asked to “make it production-ready” — without control over the foundation.

This is not innovation.

It’s technical debt with a user interface.

4. Performance and Cost Collapse Under Real Usage

Low-code AI platforms are optimized for:

- Ease of use

- Low initial volume

- Simple workflows

They are rarely optimized for:

- High throughput

- Sustained load

- Cost efficiency at scale

As usage grows:

- Latency increases

- Costs spike

- Limits are hit

- Performance becomes unpredictable

What looked inexpensive at pilot scale becomes expensive at production scale.
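A back-of-the-envelope calculation shows how quickly this can happen even under simple usage-based pricing. Every number below is a made-up placeholder rather than a vendor price, and `monthly_cost` is a hypothetical helper written for this example.

```python
def monthly_cost(
    requests_per_day: int,
    tokens_per_request: int,
    price_per_1k_tokens: float,
    days: int = 30,
) -> float:
    """Estimate monthly spend when every request is billed by token count."""
    tokens_per_day = requests_per_day * tokens_per_request
    return tokens_per_day / 1000 * price_per_1k_tokens * days


# Placeholder inputs: a small pilot versus company-wide usage.
pilot = monthly_cost(
    requests_per_day=200, tokens_per_request=2_000, price_per_1k_tokens=0.01
)
production = monthly_cost(
    requests_per_day=50_000, tokens_per_request=2_000, price_per_1k_tokens=0.01
)

print(f"pilot:      ${pilot:,.0f} per month")
print(f"production: ${production:,.0f} per month")
print(f"ratio:      {production / pilot:.0f}x")
```

With these placeholder inputs the estimate goes from roughly $120 a month to roughly $30,000 a month, a 250x increase that simply tracks request volume. Platform tiers, per-run fees, or rate-limit upgrades would come on top of that.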

5. Low-Code Tools Don’t Support Enterprise Software Lifecycle Discipline

Enterprise software requires:

- Source control

- Versioning

- Automated testing

- CI/CD pipelines

- Environment promotion

- Rollbacks

- Monitoring and alerting

Low-code AI tools often struggle to support:

- Proper DevOps practices

- Automated testing

- Controlled releases

- Environment parity

This creates risk every time a change is made.

At enterprise scale, process discipline matters more than speed.
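Automated testing is a good example of what full-code makes straightforward. In the sketch below, the model call is passed in as a function so tests can swap in a fake and run deterministically in a CI pipeline. `summarize_ticket` and the prompt text are hypothetical, and the tests are written as plain pytest-style functions.

```python
from typing import Callable


def summarize_ticket(text: str, complete: Callable[[str], str]) -> str:
    """Build the prompt, call the injected model client, and tidy the reply."""
    if not text.strip():
        raise ValueError("ticket text is empty")
    reply = complete(f"Summarize this support ticket in one sentence:\n{text}")
    return reply.strip()


def test_strips_whitespace_from_model_reply():
    # A fake model stands in for the real service, so no network is needed.
    fake_model = lambda prompt: "  Customer cannot log in.  \n"
    assert summarize_ticket("Login fails", fake_model) == "Customer cannot log in."


def test_rejects_empty_ticket_without_calling_model():
    def fail_if_called(prompt: str) -> str:
        raise AssertionError("model should not be called for empty input")

    try:
        summarize_ticket("   ", fail_if_called)
    except ValueError:
        return
    raise AssertionError("expected ValueError")
```

Tests like these cover the logic around the model rather than the model's answers, which is usually the part that breaks when someone changes a workflow.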

The Critical Misconception: Low-Code Replaces Engineering

The biggest myth behind the low-code trap is this: “Low-code lets us avoid engineering.”

In reality:

- Low-code defers engineering

- It does not eliminate it

Eventually, the system must be:

- Secured

- Governed

- Scaled

- Maintained

- Supported

When that moment arrives, organizations either:

- Rewrite the solution properly

- Or live with a fragile system

There is no third option.

The Right Way to Use Low-Code AI in Enterprises

Low-code is not the enemy.

Misuse is.

Here’s the pragmatic approach that works.

1. Use Low-Code AI for Discovery and Validation

Low-code tools are excellent for:

- Identifying use cases

- Validating value

- Prototyping workflows

- Building executive understanding

They help answer:

- “Is this useful?”

- “Does this create value?”

- “Is this worth scaling?”

That’s their role.

2. Transition Successful Use Cases Into Full-Code Systems

Once value is proven, the solution should transition into:

- Full-code implementation

- Enterprise architecture

- Proper DevOps pipelines

For Microsoft-centric organizations, this means:

- C# and .NET

- Azure AI services

- SQL Server, Dataverse, SharePoint

- Azure DevOps or GitHub

- Centralized logging and monitoring

This preserves innovation without sacrificing scale.

3. Embed AI Into Existing Systems — Don’t Create New Platforms

Enterprise AI scales best when it:

- Lives inside existing applications

- Uses existing identity systems

- Honors existing permissions

- Reuses existing data pipelines

This avoids:

- New platforms

- New governance models

- New security risks

AI becomes an enhancement — not a disruption.

4. Treat AI Like Automation, Not a Shortcut

AI should follow the same discipline as automation:

- Clear scope

- Defined inputs and outputs

- Error handling

- Human oversight

- Measurable ROI

When AI is treated as a shortcut around engineering rigor, it fails.

When it’s treated as part of the system, it succeeds.
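Here is one way that discipline might look in code: a minimal sketch in which the model's output is checked against a defined contract and anything doubtful is routed to a person. The categories, the 0.8 threshold, and the `triage` function are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical contract for a ticket-classification step.
ALLOWED_CATEGORIES = {"billing", "technical", "account"}
REVIEW_THRESHOLD = 0.8  # below this confidence, a human decides


@dataclass(frozen=True)
class Decision:
    category: Optional[str]
    needs_human_review: bool
    reason: str


def triage(model_output: dict) -> Decision:
    """Validate the model's output and decide whether a human must review it."""
    category = model_output.get("category")
    confidence = model_output.get("confidence")

    # Defined outputs: anything outside the agreed categories is rejected.
    if not isinstance(category, str) or category not in ALLOWED_CATEGORIES:
        return Decision(None, True, "unknown category")

    # Error handling: a missing or malformed confidence is never trusted.
    is_number = isinstance(confidence, (int, float)) and not isinstance(confidence, bool)
    if not is_number or not 0 <= confidence <= 1:
        return Decision(category, True, "invalid confidence")

    # Human oversight: low-confidence results go to a review queue.
    if confidence < REVIEW_THRESHOLD:
        return Decision(category, True, "low confidence")

    return Decision(category, False, "auto-approved")
```

Counting how many decisions end up auto-approved versus reviewed also gives a starting point for measuring ROI.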

Why Experienced .NET Teams Win Here

This is where experienced enterprise teams shine.

.NET teams already understand:

- Scalable architectures

- Long-lived systems

- Governance and compliance

- Performance tuning

- Operational support

They don’t fear AI.

They integrate it.

And that’s why enterprises that rely on their existing engineering teams tend to scale AI faster, and with less risk.

Final Thought

Low-code AI tools are not a strategy.

They are a phase.

They help organizations learn what to build — not how to run it at scale.

Enterprises that recognize this early avoid the low-code trap.

Those that don’t eventually pay for it — in rewrites, risk, and lost momentum.

AI doesn’t fail at scale because it’s too advanced.

It fails because it’s not engineered like everything else that already runs the business.

Disclaimer

This article contains independent analysis and commentary on the publicly available 2025 McKinsey AI Report. AInDotNet, its authors, and its associated brands are not affiliated with, sponsored by, or endorsed by McKinsey & Company. All references to McKinsey’s findings are for discussion and educational purposes only. Any interpretations, opinions, or conclusions expressed are solely those of the author.

Frequently Asked Questions

What are low-code AI tools?

Low-code AI tools are platforms that allow users to build AI-powered applications using visual designers, prebuilt components, and minimal programming. They are designed to speed up development and enable non-developers to create functional AI solutions.

Why do low-code AI tools struggle at enterprise scale?

Low-code AI tools often struggle at enterprise scale because they hide complexity that becomes unavoidable as usage grows. Challenges include performance bottlenecks, limited security controls, weak governance, poor observability, and difficulty integrating with enterprise workflows and DevOps pipelines.

Are low-code AI platforms bad for enterprises?

No. Low-code AI platforms are useful for experimentation, learning, and validating use cases. Problems arise when organizations attempt to run mission-critical, large-scale systems on tools that were never designed for long-term operational reliability.

What is the “low-code trap”?

The low-code trap occurs when organizations mistake rapid prototyping speed for production readiness. Early success leads to over-reliance on low-code tools, resulting in fragile systems that become difficult or expensive to scale, secure, and govern.

How do low-code AI tools affect security and compliance?

Many low-code AI tools offer simplified security models that do not fully integrate with enterprise identity, role-based access control, auditing, or regulatory requirements. This makes them risky in regulated industries such as finance, healthcare, government, and legal environments.

What is shadow IT, and how does low-code contribute to it?

Shadow IT occurs when departments build and operate systems outside central IT oversight. Low-code platforms can accelerate this by allowing teams to create AI tools independently, leading to duplicated logic, inconsistent outcomes, security gaps, and long-term technical debt.

When should enterprises move from low-code to full-code AI solutions?

Enterprises should transition to full-code AI solutions when:

- A use case proves real business value

- Usage grows beyond a single team

- Security, governance, or compliance become critical

- Performance and cost predictability matter

At that point, proper engineering discipline is required.

Why is full-code development better for scaling AI?

Full-code development allows teams to:

- Control architecture and performance

- Implement robust logging and monitoring

- Enforce security and governance policies

- Use standard DevOps practices

- Manage cost and scalability explicitly

This makes full-code systems more reliable at enterprise scale.

How does Microsoft’s ecosystem support scalable AI better than low-code tools alone?

Microsoft’s ecosystem allows AI to be embedded into existing enterprise systems using:

- .NET and C#

- Azure AI services

- Active Directory or Microsoft Entra ID (formerly Azure Active Directory), with role-based security

- Existing data platforms like SQL Server and SharePoint

This reduces risk by reusing tools organizations already trust and operate.

Can low-code and full-code AI coexist?

Yes — and they should.

A pragmatic enterprise strategy uses:

- Low-code AI for discovery and validation

- Full-code AI for production and scale

The key is knowing when to transition — and planning for it early.

Does low-code reduce the need for software engineers?

Low-code does not eliminate the need for software engineers — it delays it. Eventually, enterprise systems must be engineered, secured, tested, monitored, and supported. Organizations that plan for this early avoid costly rewrites later.

What is the biggest mistake enterprises make with low-code AI?

The biggest mistake is treating low-code AI tools as a long-term architecture rather than a temporary accelerator. AI succeeds at scale only when it follows the same engineering discipline as every other enterprise system.
