And Why Enterprises Keep Ignoring the Most Important Part of AI Readiness

This article is an independent analysis and commentary on the 2025 McKinsey AI Report. McKinsey & Company does not endorse, sponsor, or have any affiliation with AInDotNet or the viewpoints expressed here.

Most executives worry about the wrong AI risks.

They stress about models. They stress about accuracy. They stress about GPUs, costs, vendors, copilots, and agents.

But the single biggest threat to every AI initiative isn’t the AI at all.

It’s data quality.

McKinsey’s 2025 AI Report makes this painfully clear:

AI does not fail because of weak models — AI fails because of weak data.

And yet, data quality remains the least glamorous, least understood, and most avoided part of enterprise AI adoption.

It is also where a .NET + Microsoft-native methodology shines brightest.

Why Data Quality Is the Real Reason AI Fails

Most companies assume:

- “Our data is fine.”

- “We’ll fix data later.”

- “The model will compensate for inconsistencies.”

These assumptions destroy AI projects before they ever reach production.

Here’s the reality:

AI amplifies whatever data it’s given.

Good data → good outcomes.

Bad data → catastrophically bad outcomes.

This is why companies get:

- hallucinations

- inaccurate summaries

- incorrect recommendations

- inconsistent logic

- unreliable automation

- failed pilots and stalled scaling

The model isn’t broken — the data feeding it is.

The Painful Truth McKinsey Highlights: Enterprises Are Not Data-Ready

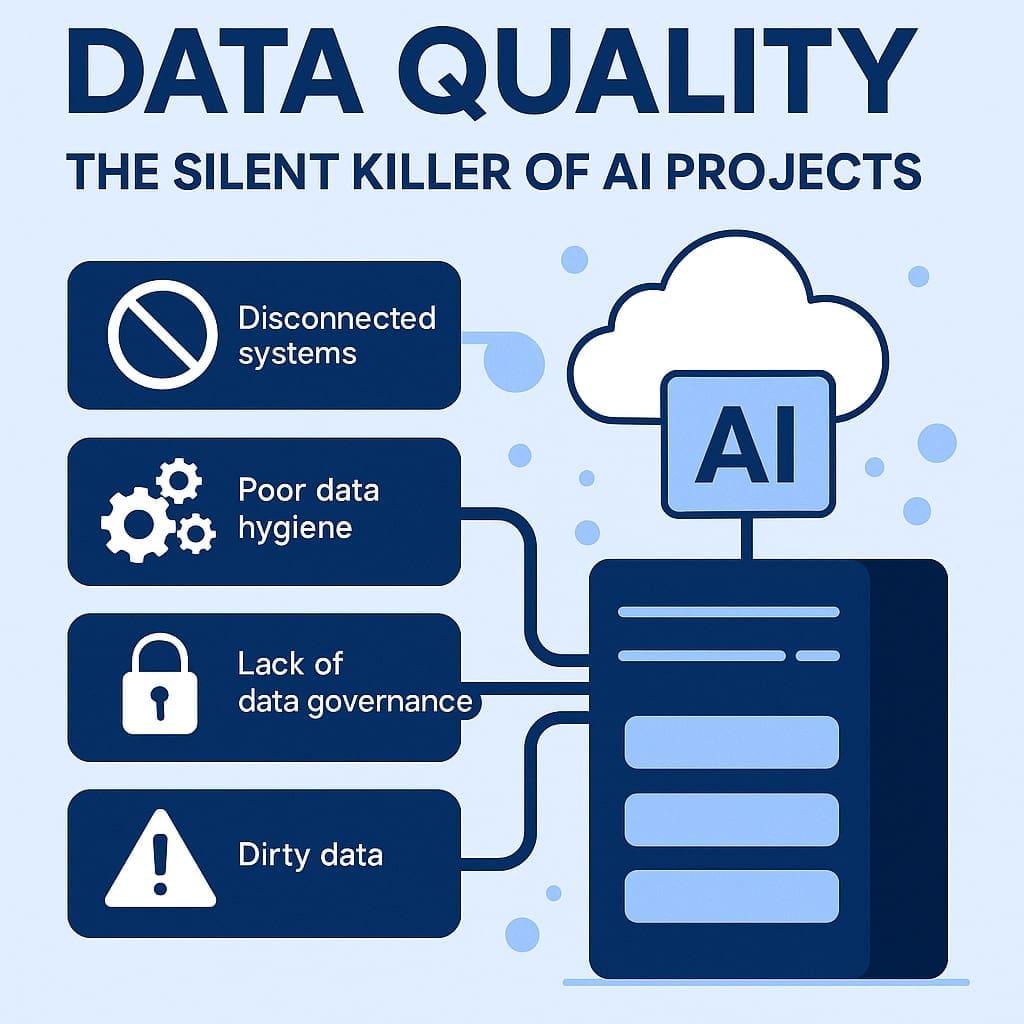

McKinsey identifies four data issues killing AI at scale:

1. Disconnected systems

Data lives in email, SharePoint, OneDrive, network drives, legacy apps, Excel files, PDFs, and tribal knowledge.

2. Poor data hygiene

Data is outdated, duplicated, inconsistent, mislabeled, or missing fields.

3. No data governance

Permissions, lineage, access, metadata, and auditing are unclear or non-existent.

4. AI tools expect perfect data — but real businesses have real messes

The “clean data warehouse first” approach is unrealistic for 90% of companies.

This is why enterprises keep getting stuck in AI pilot mode.

They bought the AI tools.

They bought copilots.

They hired consultants.

But the underlying data makes the system unreliable — so no one trusts it.

Why AI Graduates Struggle With Enterprise Data (and .NET Teams Do Not)

This is where experienced .NET teams stand apart.

New AI graduates understand:

- models

- tokens

- RAG

- embeddings

- vector stores

- LLM prompting

But they do not understand:

- ERP systems

- CRM systems

- legacy SQL databases

- messy Excel workflows

- SharePoint folder structures

- network drive chaos

- backwards compatibility

- enterprise authentication

- auditing and compliance requirements

This is why enterprise AI pilots fail when staffed only with “AI engineers.”

Enterprise data requires enterprise experience.

And .NET developers already understand the full data environment companies work within.

They can help companies clean, connect, and govern data incrementally, without a big-bang migration.

The Microsoft Advantage: You Don’t Need a Fancy Data Platform — You Need Organization

Here’s what many executives don’t realize:

You don’t need a perfect data lake to scale AI.

You need predictable, accessible, governed data pipelines.

A Microsoft-native methodology makes this possible:

- SharePoint connectors

- SQL Server + Azure SQL

- Microsoft Graph

- Power Automate pipelines

- .NET microservices

- Teams integration

- Storage queues & serverless functions

- Azure AI Search

All of this allows companies to produce clean, consistent, governed data — without migrating everything into a new system.

This approach eliminates the biggest scaling barrier McKinsey identified.

The Three Steps of Data Readiness

Most companies incorrectly start at Step 3.

This method starts at Step 1, and that is why it works.

STEP 1 — Clean the Data You Already Use

(The Practical Approach)

Instead of boiling the ocean, you focus on:

- removing duplicates

- standardizing formats

- consolidating key fields

- exposing canonical sources

- improving structure inside SharePoint/SQL

This can be done in weeks, not years.
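As a minimal sketch of what this first pass can look like, the snippet below standardizes a few fields and removes duplicates from a small in-memory dataset. The sample rows, the field names, and the two accepted date formats are all assumptions made for illustration. Python is used only for brevity; the same logic would fit equally well in a .NET service.

```python
from datetime import datetime

# Hypothetical customer rows, as they might come out of a spreadsheet export.
rows = [
    {"email": " Ann@Example.com ", "signup": "2024-03-01", "name": "Ann  Lee"},
    {"email": "ann@example.com", "signup": "03/01/2024", "name": "Ann Lee"},
    {"email": "bob@example.com", "signup": "2024-04-15", "name": "Bob Roy"},
]

DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y")  # assumed input formats


def normalize_date(text):
    """Return an ISO date string, or None if no known format matches."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    return None


def clean(rows):
    """Standardize fields, then keep the first row seen for each email."""
    seen = {}
    for row in rows:
        email = row["email"].strip().lower()
        record = {
            "email": email,
            "signup": normalize_date(row["signup"]),
            "name": " ".join(row["name"].split()),  # collapse stray whitespace
        }
        seen.setdefault(email, record)  # first occurrence wins
    return list(seen.values())


cleaned = clean(rows)
```

Here the two "Ann" rows collapse into one record because their emails match after trimming and lowercasing. Which row should win when duplicates disagree is a business decision; "first seen" is just the simplest placeholder.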

STEP 2 — Connect the Data You Need

(APIs > Data Lakes)

You use lightweight .NET connectors and Microsoft APIs to unify:

- operational data

- customer data

- documents

- workflow metadata

- system-of-record information

No massive re-platforming required.
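One way to picture "APIs over data lakes" is a thin layer that pulls records from each system and merges them by a shared key, without copying everything into a new platform. The Python sketch below is illustrative only: `fetch_crm` and `fetch_billing` are hypothetical stand-ins for real connectors, and the `customer_id` key is an assumption.

```python
def fetch_crm():
    """Stand-in for a CRM API call (hypothetical data)."""
    return [{"customer_id": "C1", "name": "Ann Lee", "tier": "gold"}]


def fetch_billing():
    """Stand-in for a billing database query (hypothetical data)."""
    return [
        {"customer_id": "C1", "balance": 120.0},
        {"customer_id": "C2", "balance": 0.0},
    ]


def unify(sources, key="customer_id"):
    """Merge records from several sources into one view per key.

    Each value is stored together with the name of the source it came
    from, so later steps can trace it back to its system of record.
    """
    unified = {}
    for source_name, fetch in sources.items():
        for record in fetch():
            entry = unified.setdefault(record[key], {})
            for name, value in record.items():
                if name == key:
                    continue
                entry[name] = {"value": value, "source": source_name}
    return unified


view = unify({"crm": fetch_crm, "billing": fetch_billing})
```

In this sketch a later source overwrites an earlier one when both supply the same field. A real connector layer would need an explicit rule for which system is authoritative for each field.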

STEP 3 — Govern the Data That Matters

(Minimal but powerful governance)

You implement practical governance:

- who can change what

- where truth lives

- who owns accuracy

- where audit logs go

- how to handle overrides

- versioning standards

Now the AI system becomes trustworthy.

Not because of the model — because of the data discipline behind it.
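The governance items above can start very small. The Python sketch below assumes a hypothetical ownership table (`FIELD_OWNERS`) and an in-memory audit log. It applies a change only when the caller's role owns the field, records every attempt, and bumps a version number on success. The names and the role model are illustrative, not a prescribed design.

```python
from datetime import datetime, timezone

# Hypothetical ownership table: which role may change which field.
FIELD_OWNERS = {"credit_limit": "finance", "shipping_address": "support"}

audit_log = []  # in practice this would be an append-only store


def update_field(record, field_name, new_value, user, role):
    """Apply a change only if the role owns the field; log every attempt."""
    allowed = FIELD_OWNERS.get(field_name) == role
    audit_log.append({
        "at": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "field": field_name,
        "old": record.get(field_name),
        "new": new_value,
        "allowed": allowed,
    })
    if not allowed:
        raise PermissionError(f"{role} may not change {field_name}")
    record[field_name] = new_value
    record["version"] = record.get("version", 0) + 1
    return record


customer = {"credit_limit": 1000, "version": 1}
update_field(customer, "credit_limit", 2500, user="dana", role="finance")
```

Rejected attempts are logged as well, which is often what an auditor asks about first.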

Why Data Is the “Silent Killer” of AI Projects

Because bad data doesn’t break the system loudly.

It breaks it subtly, quietly, and expensively:

- missed revenue

- incorrect customer responses

- wrong calculations

- misleading analytics

- failed recommendations

These issues erode trust until someone concludes:

“We tried AI, but it didn’t work for us.”

This is the most predictable — and preventable — outcome in enterprise AI.

The Moment Organizations Realize They Need Data Quality

It’s the moment someone asks:

“Why did the AI answer that way?”

If no one can trace:

- the source

- the logic

- the version

- the timestamp

- the transformation

- the permissions

…then the AI is not usable.

Data quality fixes this.

Governance fixes this.

A Microsoft-based architecture makes both practical.
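To make the traceability list concrete, here is a minimal Python sketch in which every AI answer carries the provenance of the content it was built from. The `Provenance` and `Answer` classes and the `trace_gaps` helper are hypothetical names invented for this example. The fields mirror the six items listed above.

```python
from dataclasses import dataclass, field, fields


@dataclass
class Provenance:
    source: str          # where the content came from
    version: str         # document or row version
    timestamp: str       # when the content was last modified
    transformation: str  # how it was processed before use
    permissions: str     # who is allowed to see it


@dataclass
class Answer:
    text: str
    logic: str = ""  # e.g. an identifier for the prompt or rule used
    evidence: list = field(default_factory=list)


def trace_gaps(answer):
    """List what is missing to explain an answer; empty means traceable."""
    gaps = []
    if not answer.logic:
        gaps.append("answer missing logic")
    if not answer.evidence:
        gaps.append("answer has no evidence")
    for i, prov in enumerate(answer.evidence):
        for f in fields(prov):
            if not getattr(prov, f.name):
                gaps.append(f"evidence[{i}] missing {f.name}")
    return gaps


good = Answer(
    text="Invoice 1042 is overdue.",
    logic="prompt:overdue-summary",
    evidence=[Provenance("sql:billing.invoices", "row-v7",
                         "2025-01-10T09:00:00Z", "none", "finance-readers")],
)
```

An answer for which `trace_gaps` returns a non-empty list is one that nobody will be able to explain later, which is a reasonable signal to withhold or flag it.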

The Key Insight

AI Doesn’t Make Bad Businesses Better — It Makes Everything Louder

A chaotic process becomes faster chaos.

Bad workflows become automated bad workflows.

Mislabeled data becomes mass misinterpretation.

Incorrect assumptions become customer-facing errors.

AI doesn’t forgive data quality problems.

AI amplifies them.

Final Thought

Companies Don’t Have an AI Problem — They Have a Data Problem

And data is not a technology problem.

It is an organizational discipline problem.

This is why the incremental approach works:

- Clean → Connect → Govern

- Use existing Microsoft tools

- Incremental improvements

- No massive migrations

- No big-bang rewrites

- No unrealistic data lake projects

Data quality is the foundation on which trustworthy AI is built.

Until companies fix their data, AI cannot scale, and McKinsey’s analysis points the same way.

Formal Disclaimer

This article contains independent analysis and commentary on the publicly available 2025 McKinsey AI Report. AInDotNet, its authors, and its associated brands are not affiliated with, sponsored by, or endorsed by McKinsey & Company. All references to McKinsey’s findings are for discussion and educational purposes only. Any interpretations, opinions, or conclusions expressed are solely those of the author.

Frequently Asked Questions

Why is data quality so important for AI?

AI systems learn from and rely on the data they’re given.

If the data is incomplete, inconsistent, outdated, or poorly structured, the AI will produce unreliable results. High-quality data ensures:

- accuracy

- consistency

- trust

- reliable automation

- better decision-making

AI doesn’t fix bad data — it amplifies it.

What causes poor data quality in organizations?

Most data problems come from everyday operational realities:

- Disconnected systems

- Manual data entry errors

- Outdated or duplicated files

- Unstructured documents spread across SharePoint, email, and network drives

- Mismatched data formats

- Lack of ownership or governance

- Legacy software that wasn’t built for modern analytics

These issues compound over years, not months.

How does poor data quality impact AI projects?

Poor data leads to:

- inaccurate AI responses

- hallucinations

- automation failures

- incorrect recommendations

- loss of trust in the system

- stalled pilots

- inability to scale AI into production

Most AI failures are caused by dirty, disconnected, or badly governed data—not the model itself.

Does fixing data quality require a massive data warehouse or data lake?

No.

This is one of the biggest misconceptions in enterprise AI.

You do not need a multi-million-dollar data migration project.

You can improve data quality incrementally using tools you already own:

- SQL Server / Azure SQL

- SharePoint

- Microsoft Graph

- Power Automate

- .NET microservices

- Azure AI Search

The goal isn’t perfection — it’s predictable, accessible, governed data pipelines.

Why do AI engineers struggle with enterprise data?

AI graduates understand models and LLM concepts, but they often lack experience with:

- CRM/ERP systems

- SQL schemas

- legacy applications

- SharePoint sprawl

- enterprise permissions

- auditing and compliance

- workflow realities

Enterprise data is messy and complex.

This is why .NET teams excel at AI integration — they already understand the systems where the data actually lives.

What is the most effective way to improve data quality for AI?

A three-step approach works well in practice:

- Clean → standardize fields, remove duplicates, fix structure

- Connect → unify data using APIs, connectors, and .NET services

- Govern → define ownership, permissions, lineage, and auditing

This avoids expensive big-bang migrations and gets results in weeks, not years.

How do you know if your data is ready for AI?

Your data is AI-ready when:

- it’s consistent

- it’s accurate enough for the decision being made

- systems can access it programmatically

- permissions and ownership are clear

- changes can be audited

- workflows can trace the source of truth

If you cannot answer “Why did the AI respond that way?”, your data is not ready.
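Some of these checks can be approximated with a small profiling script before any AI tooling is involved. The Python sketch below counts duplicate keys, rows with missing required fields, and stale rows. It assumes each row is a dictionary with an ISO-formatted `updated` date; the field names and the one-year staleness threshold are illustrative choices, not recommendations.

```python
from datetime import date


def readiness_report(rows, key, required, max_age_days, today):
    """Summarize basic quality signals for a list of dict rows."""
    total = len(rows)
    keys = [r.get(key) for r in rows]
    duplicates = total - len(set(keys))
    incomplete = sum(
        1 for r in rows if any(r.get(f) in (None, "") for f in required)
    )
    stale = sum(
        1 for r in rows
        if (today - date.fromisoformat(r["updated"])).days > max_age_days
    )
    return {"rows": total, "duplicates": duplicates,
            "incomplete": incomplete, "stale": stale}


# Hypothetical sample: one duplicate id, one missing owner, one old record.
sample = [
    {"id": 1, "owner": "ops", "updated": "2025-01-10"},
    {"id": 1, "owner": "ops", "updated": "2025-01-10"},
    {"id": 2, "owner": "", "updated": "2023-06-01"},
]

report = readiness_report(sample, key="id", required=["owner"],
                          max_age_days=365, today=date(2025, 2, 1))
```

A report like this does not prove that data is ready, but a non-zero count in any column gives a concrete place to start.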

What tools help improve data quality in the Microsoft ecosystem?

Some of the most powerful (and cost-effective) tools include:

- Microsoft Graph — unified access to Microsoft 365 data

- SharePoint/OneDrive connectors

- Azure SQL + SQL Server

- Power Automate — pipeline creation

- Azure AI Search — structured/unstructured indexing

- .NET APIs/microservices

- Microsoft Purview — governance & compliance

These tools allow companies to enhance data quality without ripping out existing systems.

Is perfect data required for AI to work?

No — perfection is unrealistic.

AI requires data that is structured and consistent enough, not data that is perfect.

The goal is:

- clarity

- consistency

- predictability

- traceability

Perfect data is impossible.

Reliable data is achievable.

Why do companies lose trust in AI systems?

Trust breaks when AI outputs:

- contradict real numbers

- provide inconsistent summaries

- miss key information

- rely on outdated data

- are influenced by corrupted or duplicated files

This isn’t an AI problem — it’s a data governance problem.

What’s the fastest way to improve data for AI without major disruption?

Start small:

- Fix one workflow

- Clean one dataset

- Connect one source of truth

- Govern one mission-critical field

- Automate one pipeline

Small wins prevent pilot graveyards, build trust, and set the foundation for scale.

What’s the business case for improving data quality?

Companies that prioritize data quality see:

- faster AI development

- more accurate outputs

- higher employee trust

- better customer experiences

- reduced operational errors

- improved compliance

- measurable ROI

Data quality isn’t overhead — it’s the fuel that makes AI profitable.
