For decades, developers were trained to obsess over optimization — crafting micro-efficient loops, shaving milliseconds from SQL queries, and squeezing every ounce of performance out of infrastructure. But in the AI-accelerated era of software development, that mindset can quietly sabotage enterprise progress.

Today, the teams who win aren’t the ones who write the fastest code first.

They’re the ones who ship working functionality early, validate business value, and then let data — not ego — guide what to optimize.

This is the foundation of a pragmatic AI-era strategy, and nowhere is it more effective than inside the modern .NET ecosystem.

Why Functionality Comes First in the Age of AI

1. AI accelerates development, but it is not clairvoyant

Thanks to GitHub Copilot, ChatGPT, and Microsoft’s Copilot ecosystem, generating optimized code snippets is easier than ever. But no AI tool can accurately predict:

- Which use cases the business will adopt fastest

- What workflows users will depend on

- Which features generate revenue

- Which modules will handle the highest load

Only real users and real usage data reveal that.

2. Premature optimization now costs more

In the AI era, writing hyper-optimized code early isn’t “smart engineering.”

It’s a risk multiplier:

- It increases upfront development cost

- It complicates the codebase

- It slows down feature delivery

- It makes AI-assisted scaffolding harder

- And worst of all… it often optimizes the wrong thing

3. Modern infrastructure already absorbs the early inefficiencies

Between:

- EF Core's built-in optimizations (query caching, statement batching)

- ASP.NET Core runtime improvements

- SQL Server intelligent query processing

- Azure’s autoscaling

…the platform already handles 80–90% of basic performance tuning.

You don’t hand-plane every board before building the house — you first assemble the structure.

The .NET Philosophy That Wins Now: Build It to Work First

The purpose of software is to deliver business outcomes, not technical elegance.

Functionality first means:

- Deliver core behavior quickly

- Capture the rules, workflows, and logic cleanly

- Validate that the business layer is correct

- Let real traffic prove what matters

- Optimize with evidence, not assumptions

This aligns perfectly with modern .NET architecture and Domain-Driven Design, where the business layer is the true asset — not the surrounding technical plumbing.

Where AI Fits into this Strategy

AI speeds up steps 2–5 of the workflow described below, the steps that come after the functionality is delivered.

Once your business logic works:

- AI can help profile the system

- AI can identify slow endpoints

- AI can suggest optimized SQL

- AI can rewrite loops, queries, and LINQ expressions

- AI can auto-generate performance tests

- AI can refactor inefficiencies without breaking behavior

AI becomes a post-functionality optimization engine — not a clairvoyant architect.

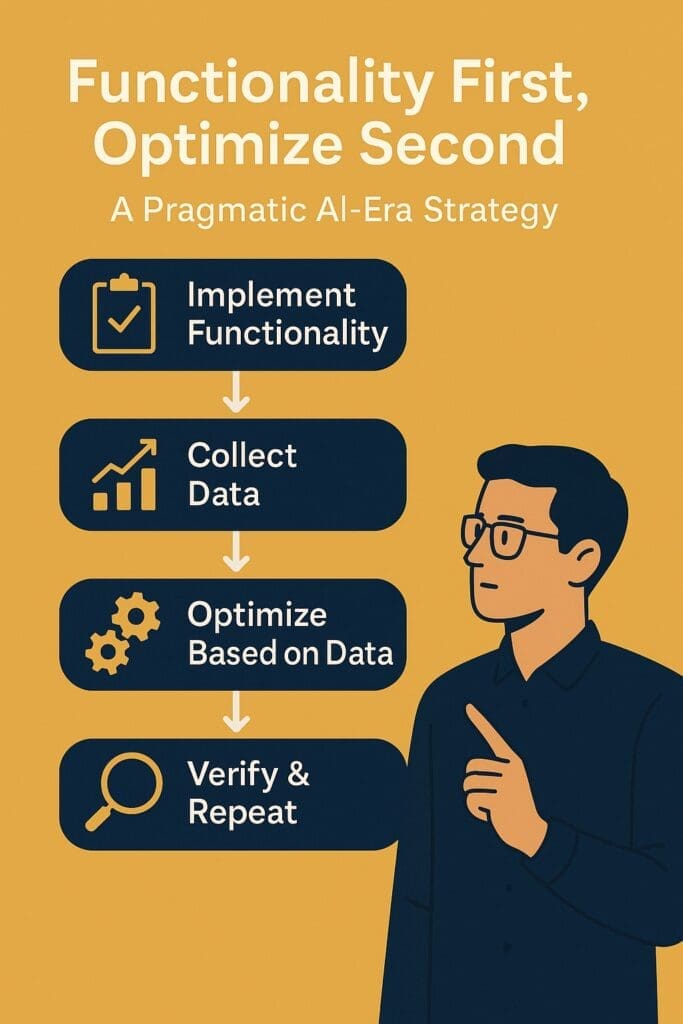

The Practical Workflow: A Functionality-First, Optimize-Second Blueprint

Below is the modern AI-accelerated .NET workflow your teams should adopt.

Step 1: Implement the Feature End-to-End (Even if Inefficient)

You prioritize:

- Clean domain models

- Correct business rules

- Clear workflows

- Proper separation of concerns

Let AI generate:

- Controllers

- DTOs

- Unit tests

- Data layer scaffolding

Don’t micro-optimize.

Don’t over-engineer.

Focus on correctness.
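
As an illustration of what "correct first" can look like, here is a minimal sketch of a framework-free domain class. The `Cart` and `CartLine` types and the 10% discount rule are hypothetical, invented only for this example. The point is that the business rule is explicit and easy to test, and that nothing has been tuned yet.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

var cart = new Cart();
cart.AddLine("Keyboard", unitPrice: 80m, quantity: 2);
cart.AddLine("Monitor", unitPrice: 900m, quantity: 1);
Console.WriteLine($"Total: {cart.Total()}");

public sealed record CartLine(string Product, decimal UnitPrice, int Quantity);

// Plain domain class: no EF Core, ASP.NET Core, or caching concerns leak in.
public sealed class Cart
{
    // Hypothetical business rule: 10% discount on subtotals of 1,000 or more.
    private const decimal DiscountThreshold = 1000m;
    private const decimal DiscountRate = 0.10m;

    private readonly List<CartLine> _lines = new();

    public void AddLine(string product, decimal unitPrice, int quantity)
    {
        if (quantity <= 0) throw new ArgumentOutOfRangeException(nameof(quantity));
        if (unitPrice < 0) throw new ArgumentOutOfRangeException(nameof(unitPrice));
        _lines.Add(new CartLine(product, unitPrice, quantity));
    }

    // Deliberately simple: recomputed on every call, no memoization.
    public decimal Total()
    {
        decimal subtotal = _lines.Sum(l => l.UnitPrice * l.Quantity);
        return subtotal >= DiscountThreshold ? subtotal * (1 - DiscountRate) : subtotal;
    }
}
```

If telemetry later shows `Total()` on a hot path, it can be tuned then, with tests already pinning down the rule.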

Step 2: Collect Usage and Performance Data

Use built-in and AI-enhanced telemetry:

- Application Insights

- EF Core logging

- ASP.NET Core request diagnostics

- SQL query stats

- Azure Monitor

- AI-assisted insights (Copilot or ChatGPT log analysis)

This tells you exactly:

- Which endpoints are slow

- Which queries degrade under load

- Which workflows matter most

- What users actually do (vs. what you thought they’d do)

This is the data that should drive optimization.
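
To make that concrete, here is a minimal sketch of turning raw request timings into a ranked list of endpoints. It assumes the timings have already been exported from a tool such as Application Insights into simple (endpoint, duration) rows. The `RequestSample`, `EndpointStats`, and `EndpointReport` names and the sample values are hypothetical.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical sample data; in practice these rows would come from telemetry exports.
var samples = new List<RequestSample>
{
    new("/orders", 120), new("/orders", 950), new("/orders", 140),
    new("/reports/monthly", 2300), new("/reports/monthly", 2100),
    new("/health", 3), new("/health", 4), new("/health", 2),
};

foreach (var stats in EndpointReport.Rank(samples))
{
    Console.WriteLine($"{stats.Endpoint}: {stats.Count} calls, p95 {stats.P95Ms} ms, total {stats.TotalMs} ms");
}

public record RequestSample(string Endpoint, double DurationMs);

public record EndpointStats(string Endpoint, int Count, double P95Ms, double TotalMs);

public static class EndpointReport
{
    // Groups samples by endpoint and orders them by total time spent,
    // so the endpoints that cost users the most time come first.
    public static IReadOnlyList<EndpointStats> Rank(IEnumerable<RequestSample> samples) =>
        samples
            .GroupBy(s => s.Endpoint)
            .Select(g =>
            {
                var sorted = g.Select(s => s.DurationMs).OrderBy(d => d).ToArray();
                return new EndpointStats(g.Key, sorted.Length, Percentile(sorted, 0.95), sorted.Sum());
            })
            .OrderByDescending(e => e.TotalMs)
            .ToList();

    // Nearest-rank percentile over an already sorted array.
    private static double Percentile(double[] sorted, double p)
    {
        int rank = (int)Math.Ceiling(p * sorted.Length);
        return sorted[Math.Clamp(rank - 1, 0, sorted.Length - 1)];
    }
}
```

With the sample rows, `/reports/monthly` ranks first even though it is called least often, which is the kind of finding that guessing tends to miss. Ranking by total time is one reasonable choice; ranking by p95 or by call count answers different questions.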

Step 3: Targeted Optimization — The 20% That Matters

With that data in hand, find your bottlenecks and spend your optimization time only there. Examples:

- Replace frequently executed EF Core LINQ queries with compiled queries

- Introduce caching

- Refactor expensive loops

- Fine-tune EF Core includes

- Move slow, non-urgent work off the request path and into background workers

- Introduce batching or pooling

- Add ML-driven anomaly detection for performance dips

AI becomes your assistant:

- “Optimize this query for SQL Server 2022.”

- “Rewrite this LINQ expression for speed.”

- “Analyze this memory dump.”

- “Profile this controller and summarize bottlenecks.”

This creates dramatic gains with minimal effort.
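
As one illustration of the caching item above, here is a minimal sketch of a time-limited cache placed in front of a slow lookup, using only the base class library. `TtlCache` and `LoadPriceFromDatabase` are hypothetical names for this example. In an ASP.NET Core application, the framework's `IMemoryCache` would usually be the first thing to reach for instead of a hand-rolled class.

```csharp
using System;
using System.Collections.Concurrent;

// Hypothetical slow lookup standing in for a database or remote call.
int calls = 0;
decimal LoadPriceFromDatabase(string sku)
{
    calls++;
    return sku.Length * 1.5m;
}

var cache = new TtlCache<string, decimal>(TimeSpan.FromMinutes(5));

decimal first = cache.GetOrAdd("SKU-1", LoadPriceFromDatabase);
decimal second = cache.GetOrAdd("SKU-1", LoadPriceFromDatabase); // served from the cache

Console.WriteLine($"first={first}, second={second}, database calls={calls}");

public sealed class TtlCache<TKey, TValue> where TKey : notnull
{
    private readonly ConcurrentDictionary<TKey, (TValue Value, DateTimeOffset ExpiresAt)> _entries = new();
    private readonly TimeSpan _ttl;
    private readonly Func<DateTimeOffset> _now;

    // The clock is injectable so expiry can be tested without waiting.
    public TtlCache(TimeSpan ttl, Func<DateTimeOffset>? now = null)
    {
        _ttl = ttl;
        _now = now ?? (() => DateTimeOffset.UtcNow);
    }

    // Returns the cached value while it is fresh; otherwise calls the factory
    // and stores the result. Two concurrent callers may both invoke the factory
    // for the same key, which is acceptable for idempotent lookups.
    public TValue GetOrAdd(TKey key, Func<TKey, TValue> factory)
    {
        var now = _now();
        if (_entries.TryGetValue(key, out var entry) && entry.ExpiresAt > now)
        {
            return entry.Value;
        }

        var value = factory(key);
        _entries[key] = (value, now + _ttl);
        return value;
    }
}
```

The sketch never evicts expired entries, so it only suits a bounded set of keys. That trade-off is easy to accept once telemetry has shown which lookup is worth caching.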

Step 4: Verify That Optimization Didn’t Break Business Logic

Because your business layer is clean, testable, and well isolated, verification becomes easy:

- Run your automated tests

- Let AI fill in missing tests

- Validate business consistency

- Compare outputs before & after

Your core logic remains intact, stable, and future-proof.
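
The "compare outputs before & after" check can be sketched as follows. This is a minimal example under stated assumptions: the `Customer` and `Order` records, the `Labels` class, and the generated data are all hypothetical. It keeps the original implementation next to the optimized one and requires identical output for the same input.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical data: each order references a customer by id.
var customers = Enumerable.Range(1, 200).Select(i => new Customer(i, $"Customer {i}")).ToList();
var orders = Enumerable.Range(1, 1000).Select(i => new Order(i, CustomerId: i % 200 + 1)).ToList();

var before = Labels.Baseline(orders, customers);
var after = Labels.Optimized(orders, customers);

// The optimization is only acceptable if the observable output is identical.
Console.WriteLine(before.SequenceEqual(after)
    ? "Outputs match: the optimized version preserves behavior for this input."
    : "Outputs differ: the optimization changed behavior.");

public record Customer(int Id, string Name);
public record Order(int Id, int CustomerId);

public static class Labels
{
    // Original version: scans the customer list once per order (O(orders * customers)).
    public static List<string> Baseline(IReadOnlyList<Order> orders, IReadOnlyList<Customer> customers) =>
        orders.Select(o => $"{o.Id}: {customers.First(c => c.Id == o.CustomerId).Name}").ToList();

    // Optimized version: builds a dictionary once, then does O(1) lookups per order.
    public static List<string> Optimized(IReadOnlyList<Order> orders, IReadOnlyList<Customer> customers)
    {
        var byId = customers.ToDictionary(c => c.Id);
        return orders.Select(o => $"{o.Id}: {byId[o.CustomerId].Name}").ToList();
    }
}
```

A match on one data set is evidence, not proof. In a real project the same comparison would typically live in the automated test suite and run against several representative inputs, including edge cases such as an empty order list.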

Step 5: Repeat Only When Needed

Optimization becomes a surgical process, not a guessing game.

Why This Strategy Is Perfect for Enterprise .NET Teams

✔ Predictable Delivery

You ship features on schedule instead of getting lost in endless optimization cycles.

✔ Higher Quality Business Logic

Your business layer becomes your enterprise asset — clean, testable, and independent of frameworks.

✔ Better Use of AI

AI works best once the functionality is correct, not during premature optimization.

✔ Lower Technical Debt

Complexity arrives only where data proves it is necessary.

✔ Faster Iteration

Your architecture supports rapid change — perfect for evolving AI capabilities.

A Real-World Example: The 95/5 Rule in Action

In one Fortune 500 system:

- 5 endpoints handled 92% of traffic

- Only 2 database queries caused 87% of performance complaints

- Optimizing just those 5% of operations improved performance by 400%

All without touching the remaining 95% of the system.

This is why you never optimize blindly.
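
A quick way to check whether your own system has the same shape is to compute the smallest set of endpoints that covers a target share of traffic. The sketch below assumes per-endpoint request counts are already available from telemetry. The `Pareto` class and the counts are hypothetical and unrelated to the system described above.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical request counts per endpoint, e.g. exported from telemetry.
var requestCounts = new Dictionary<string, long>
{
    ["/search"] = 52_000, ["/orders"] = 31_000, ["/login"] = 9_000,
    ["/profile"] = 4_000, ["/settings"] = 2_500, ["/export"] = 1_500,
};

var hot = Pareto.SmallestSetCovering(requestCounts, targetShare: 0.90);
Console.WriteLine($"{hot.Count} of {requestCounts.Count} endpoints cover at least 90% of traffic: {string.Join(", ", hot)}");

public static class Pareto
{
    // Takes endpoints from busiest to quietest until the running total
    // reaches the requested share of all requests.
    public static IReadOnlyList<string> SmallestSetCovering(
        IReadOnlyDictionary<string, long> counts, double targetShare)
    {
        long total = counts.Values.Sum();
        var selected = new List<string>();
        long covered = 0;

        foreach (var (endpoint, count) in counts.OrderByDescending(kv => kv.Value))
        {
            if (total == 0 || covered >= targetShare * total) break;
            selected.Add(endpoint);
            covered += count;
        }

        return selected;
    }
}
```

With the sample counts, three of the six endpoints are selected. Those are the candidates worth profiling first, and the rest can stay as they are until the data says otherwise.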

Conclusion: AI Rewards the Teams Who Optimize Smart, Not Early

AI has changed how software is built — and optimization is now a data-driven, post-implementation activity.

The winning .NET strategy is simple:

- Build functionality first

- Ship early

- Observe real-world usage, find your bottlenecks

- Optimize what matters

- Let AI do the heavy lifting when you optimize

This approach doesn’t just produce better software.

It produces better businesses, because you align development effort with real-world value — not assumptions.

Frequently Asked Questions

Why is “functionality first” the best strategy in modern .NET development?

Because AI has drastically reduced the cost of generating code, the biggest risk today isn’t slow performance — it’s building features that nobody uses. Delivering functionality early allows teams to validate business value, gather real usage data, and optimize only where it matters. This leads to faster delivery, lower technical debt, and better alignment with business goals.

Doesn’t early optimization save time later?

Not anymore. With EF Core, ASP.NET Core, SQL Server, autoscaling, and AI tooling, the .NET ecosystem automatically handles much of the early optimization for you. Premature optimization now often slows teams down, adds complexity, and optimizes the wrong things.

How does AI assist with software optimization?

AI tools like GitHub Copilot, ChatGPT, and Azure AI can:

- Refactor heavy code blocks

- Profile slow endpoints

- Rewrite inefficient LINQ queries

- Suggest SQL improvements

- Generate performance tests

- Highlight bottlenecks

AI is most effective after functionality is delivered and the system is measurable.

When should teams start optimizing performance?

After:

- The feature works end-to-end

- Users begin interacting with it

- Real telemetry identifies where performance truly matters

This ensures your optimization efforts are evidence-based, not guesswork.

How do I know which parts of my .NET application need optimization?

Use:

- Application Insights

- EF Core logging

- SQL Server query plans

- ASP.NET Core diagnostics

- Azure Monitor

- Load testing results

Look for:

- Hot endpoints

- High-latency operations

- Heavy database queries

- Methods with outsized CPU or memory usage

This data reveals the 5–10% of code worth optimizing.

Isn’t optimization important for scaling?

Yes — but only the right optimization.

Enterprises scale fastest by:

- Shipping features early

- Observing real production patterns

- Optimizing bottlenecks identified by real traffic

Scaling succeeds when optimization is targeted, not premature.

Won’t slow code frustrate users early on?

Modern .NET applications are surprisingly fast by default.

Even unoptimized code typically performs well enough for early adoption.

And if something is truly slow, telemetry will show it immediately — allowing for quick, AI-assisted fixes.

How does this strategy support clean architecture and Domain-Driven Design?

“Functionality first” emphasizes:

- Clear domain models

- Well-defined business rules

- Independent business layers

- Testable logic

By minimizing early micro-optimization, architects can focus on the long-term asset of the enterprise: the business logic.

Does this approach work for both AI and non-AI applications?

Absolutely.

While the strategy aligns perfectly with AI-assisted development, it’s equally effective for traditional applications. The core principle — build correctness first, tune later — has always been true. AI simply makes the “optimize later” phase faster and more powerful.

What are the biggest mistakes developers make when trying to optimize too early?

Common pitfalls include:

- Over-engineering solutions

- Creating overly complex abstractions

- Making the code harder for AI tools to scaffold

- Guessing at bottlenecks instead of measuring them

- Delaying real value delivery to the business

The result is a harder-to-maintain system with little real performance benefit.
