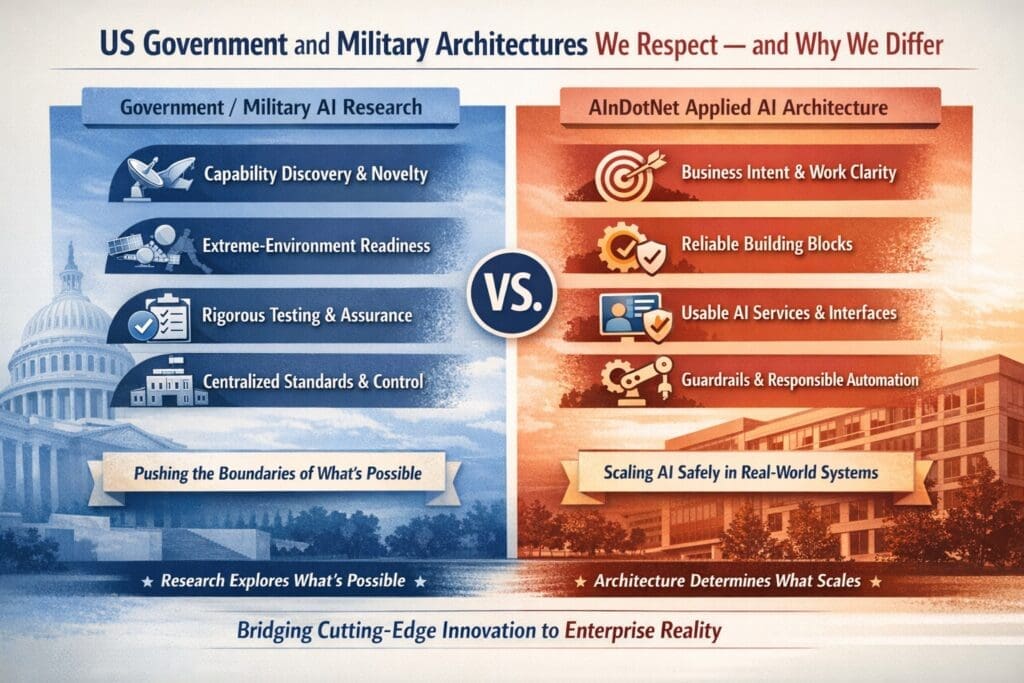

US Government and Military Architectures We Respect — and Why We Differ

America’s government and defense research ecosystem is world-class.

Organizations like DARPA, IARPA, AFRL, NIST, MITRE, and others have produced decades of foundational work in autonomy, testing and evaluation, assurance, edge compute, and trustworthy AI.

We respect that work deeply.

But our mission at AInDotNet is different.

We focus on applied AI architecture for medium to large businesses and operational government organizations, where AI must coexist with existing systems, real workflows, real constraints, and real accountability. In that setting, governance and scalability matter more than experimentation.

That difference in mission changes what an architecture must prioritize.

This page explains:

- What these government and defense architectures are often optimizing for

- Where AInDotNet aligns with them

- Why our architecture emphasizes different things (on purpose)

The Core Difference: Research Discovery vs Operational Execution

Many government and military AI efforts are structured around a research mandate:

- Discover new capabilities

- Push performance boundaries

- Operate in extreme or adversarial environments

- Prove what is possible

- Advance national strategic advantage

AInDotNet’s mandate is operational:

- Make AI adoptable inside existing enterprises

- Reduce risk and increase clarity

- Deliver reliable value under real-world constraints

- Make systems governable, explainable, and maintainable

- Scale responsibly

In short:

Research discovers what is possible.

Architecture determines what scales.

Architectures We Respect (and What They Typically Emphasize)

Below are common themes that appear across U.S. government and defense AI programs, labs, and standards bodies.

1) Capability Discovery and Novelty

Research organizations often prioritize:

- new algorithms and methods

- advanced autonomy

- cutting-edge performance and new capabilities

That makes sense when the goal is to expand the frontier.

2) Extreme-Environment Readiness

Defense-oriented architectures often assume:

- degraded networks

- constrained hardware

- contested or adversarial conditions

- incomplete information

These assumptions drive emphasis on:

- edge compute

- resilience

- robustness

- autonomy under uncertainty

3) Assurance, Testing, and Formal Evaluation

Government systems frequently require:

- strong auditability

- defensibility

- structured evaluation

- robust safety analysis

This shapes architectures toward:

- test-and-eval frameworks

- monitoring, provenance, and traceability

- risk management discipline

4) Centralized Standards and Control

Large defense ecosystems often emphasize:

- consistency across programs

- centralized standards

- governance frameworks

- interoperability

This helps manage huge multi-program complexity.

Why AInDotNet Emphasizes Different Things

AInDotNet targets organizations that usually have these realities:

- Legacy systems already running the business

- Work is already happening today

- Ownership and accountability matter more than novelty

- Success is measured in quarters, not decades

- Deployment failure has reputational, legal, and operational consequences

- Most constraints are organizational—not mathematical

So our architecture is designed to answer:

How do we apply AI safely, incrementally, and defensibly—without destabilizing operations?

That’s why AInDotNet prioritizes execution discipline over model-centric novelty.

The AInDotNet Architecture in One Sentence

AInDotNet treats AI as a system built in construction order: from business intent to work definition, to reliable capabilities, to reusable AI services, to interfaces, and only then to agents and autonomy.

This is not a marketing taxonomy. It’s an architecture and execution model built for enterprises and operational government teams.

Compare and Contrast: Why the Architectural Emphasis Diverges

Here’s the clearest way to see the difference:

| Dimension | Government / Military R&D (Often) | AInDotNet Applied AI Architecture |
|---|---|---|
| Primary Goal | Discover new capability | Operationalize proven capability |
| Time Horizon | Years to decades | Weeks to quarters |
| Risk Tolerance | High (research failure acceptable) | Low (operational failure is costly) |
| Starting Point | Models, methods, autonomy | Business intent + work clarity |
| Success Metric | Capability demonstrated | Value delivered safely + repeatably |
| System Boundary | Greenfield / controlled | Legacy enterprise systems + constraints |
| Autonomy Bias | Push autonomy forward | Earn autonomy last (guardrails-first) |
| Core Engineering Question | “Can this be done?” | “Can this be run reliably?” |

Where We Explicitly Align With Government / Defense Discipline

AInDotNet is not “startup AI.”

We share several architectural instincts common in serious mission systems:

Capability-First Execution

We treat work as capabilities with explicit contracts, validation, logging, and fallback behavior, proving execution before orchestration.
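As a rough illustration of what "explicit contracts, validation, logging, and fallback behavior" could look like in code, here is a minimal Python sketch. The `Capability` class, the `route_ticket` example, and the `classify_with_model` stand-in are hypothetical names invented for this illustration; they are not part of any published AInDotNet API.

```python
import logging
from dataclasses import dataclass
from typing import Callable, Generic, TypeVar

logger = logging.getLogger("capability")

In = TypeVar("In")
Out = TypeVar("Out")


@dataclass
class Capability(Generic[In, Out]):
    """A unit of work with an explicit contract (hypothetical illustration)."""
    name: str
    validate: Callable[[In], list]   # returns a list of contract violations
    execute: Callable[[In], Out]     # the primary execution path
    fallback: Callable[[In], Out]    # deterministic behavior when execute fails

    def run(self, request: In) -> Out:
        errors = self.validate(request)
        if errors:
            # Contract violations are rejected before any work is attempted.
            logger.warning("%s rejected input: %s", self.name, errors)
            raise ValueError(f"{self.name}: invalid input: {errors}")
        try:
            result = self.execute(request)
            logger.info("%s succeeded", self.name)
            return result
        except Exception:
            # Log the failure with a traceback, then degrade predictably.
            logger.exception("%s failed; using fallback", self.name)
            return self.fallback(request)


def classify_with_model(ticket: dict) -> str:
    # Stand-in for a model call; it always fails here to show the fallback path.
    raise TimeoutError("model endpoint unavailable")


route_ticket = Capability(
    name="route_ticket",
    validate=lambda t: [] if t.get("subject") else ["subject is required"],
    execute=classify_with_model,
    fallback=lambda t: "manual_review_queue",
)

print(route_ticket.run({"subject": "Invoice mismatch"}))  # manual_review_queue
```

In this sketch, invalid input fails loudly while a runtime failure degrades to a known safe path. That is one plausible reading of "proving execution before orchestration", since the capability behaves predictably before any agent is allowed to call it.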

Separation of Concerns

We separate:

- capabilities (execution)

- AI services (reusable intelligence)

- interfaces (human interaction)

- agents (orchestration and delegation)

This prevents the common failure mode where “chat becomes the architecture.”
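The four layers above might be sketched as follows. This is an assumption-laden illustration rather than a reference implementation: the function names, the `Agent` class, and the in-memory order data are all hypothetical, and the "AI service" is a plain template standing in for a model call.

```python
from typing import Callable


# Capability layer: deterministic execution against an existing system.
def lookup_order_status(order_id: str) -> dict:
    orders = {"A100": {"order_id": "A100", "status": "shipped"}}  # stand-in for a legacy system
    return orders.get(order_id, {"order_id": order_id, "status": "unknown"})


# AI service layer: reusable intelligence with no knowledge of UIs or workflows.
def summarize(record: dict) -> str:
    # Placeholder for a model call; a template keeps the sketch runnable.
    return f"Order {record['order_id']} is {record['status']}."


# Interface layer: human interaction; it composes lower layers but owns no business logic.
def handle_chat_message(order_id: str) -> str:
    return summarize(lookup_order_status(order_id))


# Agent layer: orchestration and delegation over capabilities that already exist.
class Agent:
    def __init__(self, capabilities: dict[str, Callable[[str], dict]]):
        self.capabilities = capabilities

    def delegate(self, capability_name: str, argument: str) -> dict:
        if capability_name not in self.capabilities:
            raise KeyError(f"no registered capability: {capability_name}")
        return self.capabilities[capability_name](argument)


agent = Agent({"lookup_order_status": lookup_order_status})
print(handle_chat_message("A100"))                               # Order A100 is shipped.
print(agent.delegate("lookup_order_status", "A100")["status"])   # shipped
```

The point of the sketch is the dependency direction: the chat handler and the agent both call the same capability, so replacing the chat interface (or removing the agent) leaves execution untouched.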

Guardrails and Accountability

We assume:

- traceability matters

- escalation paths matter

- ownership matters

- governance must be built into the system, not stapled on later

Where We Intentionally Differ (and Why That’s Responsible)

1) We De-Emphasize “Model-First” Thinking

In enterprise environments, model-first approaches often fail because:

- the work is undefined

- the process is unstable

- ownership is unclear

- the data and handoffs are messy

So we start with:

intent → work clarity → reliable capability building blocks.

2) We Delay Agents and Autonomy

Many research efforts push autonomy to explore what’s possible.

We delay autonomy because enterprises and operational agencies must answer:

- “Who is accountable?”

- “What happens when it fails?”

- “How do we audit decisions?”

- “How do we contain blast radius?”

So autonomy is introduced last, and only after reliable execution exists.
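One way to make "autonomy is introduced last" concrete is a gate that checks for an accountable owner and a demonstrated track record before an agent may act without human approval. The sketch below is hypothetical: `TrackRecord`, `autonomy_decision`, and the thresholds (100 runs, 99% success) are illustrative assumptions, not values taken from the AInDotNet model.

```python
from dataclasses import dataclass


@dataclass
class TrackRecord:
    """Observed history for one capability (hypothetical fields)."""
    runs: int = 0
    failures: int = 0
    owner: str = ""  # the accountable person or team

    def record(self, succeeded: bool) -> None:
        self.runs += 1
        if not succeeded:
            self.failures += 1

    @property
    def success_rate(self) -> float:
        return 0.0 if self.runs == 0 else (self.runs - self.failures) / self.runs


def autonomy_decision(record: TrackRecord, min_runs: int = 100,
                      min_success_rate: float = 0.99) -> str:
    """Return 'autonomous' only when ownership and demonstrated stability exist."""
    if not record.owner:
        return "blocked: no accountable owner"
    if record.runs < min_runs:
        return "human_approval: insufficient history"
    if record.success_rate < min_success_rate:
        return "human_approval: reliability below threshold"
    return "autonomous"


new_capability = TrackRecord(owner="claims-ops")
proven = TrackRecord(runs=500, failures=2, owner="claims-ops")
print(autonomy_decision(new_capability))  # human_approval: insufficient history
print(autonomy_decision(proven))          # autonomous
```

Each branch maps to one of the questions above: the owner check answers "Who is accountable?", and the human-approval paths limit blast radius until the history justifies delegation.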

3) We Optimize for Adoption and Maintainability

Enterprises don’t just need a system that works today.

They need a system that can:

- be maintained

- be monitored

- be improved safely

- survive staff turnover

- scale across departments

That’s why our architecture is explicitly designed to be explainable across roles: executives, engineers, operators, compliance, and security.

Why This Matters

If your organization is trying to scale AI responsibly, the biggest risk is not “picking the wrong model.”

The biggest risk is building AI systems without:

- clear work definitions

- disciplined execution components

- testable boundaries

- ownership and accountability

- safe paths to automation

AInDotNet exists to make those requirements explicit—so progress compounds instead of collapsing.

Practical Next Steps

If you’re exploring AI for a medium to large organization, start here:

- Enterprise AI Operating Model (how AI becomes operational muscle)

- Capability-First Backend Framework (how AI becomes reliable execution, not demos)

- Enterprise AI Architecture & Execution Model (our construction-order backbone)

References

The following government and defense organizations have published influential research, standards, and architectural thinking related to AI systems.

AInDotNet does not replicate these architectures. We reference them to acknowledge their contributions, align where appropriate, and clearly explain where our applied focus differs.

- DoD Chief Digital and Artificial Intelligence Office (CDAO)

- AI Rapid Capabilities Cell (CDAO + DIU)

- Defense Innovation Unit (DIU)

- DARPA

- IARPA

- AFRL (Air Force Research Laboratory)

- Office of Naval Research (ONR)

- Naval Research Laboratory (NRL) – Navy Center for Applied Research in AI (NCARAI)

- NIST AI Risk Management Framework (AI RMF 1.0) + Generative AI profile

- MITRE (AI Assurance / Federal AI Sandbox / AI & Autonomy Innovation Center)

- Johns Hopkins APL (Robust & Resilient AI, broader AI impact portfolio)

Frequently Asked Questions

Are you saying U.S. government and military AI architectures are not suitable for enterprises?

No.

Government and military architectures are often highly effective for the problems they are designed to solve—such as capability discovery, extreme-environment operation, and long-term strategic research.

AInDotNet focuses on a different problem space: making AI adoptable, governable, and reliable inside existing organizations. The difference is not quality or rigor—it is context, constraints, and intent.

Is AInDotNet’s applied AI architecture less advanced than government research architectures?

No. It is more constrained by design.

Enterprise and operational environments value:

- predictability

- accountability

- maintainability

- controlled risk

Advanced capability without execution discipline often fails quietly in these environments. AInDotNet intentionally prioritizes clarity and reliability over novelty so systems can scale safely.

Why does AInDotNet delay agents and autonomy until the final layer of the architecture?

Because autonomy amplifies whatever already exists—good or bad.

In enterprises and operational government systems:

- ownership matters

- escalation paths matter

- auditability matters

AInDotNet introduces agents only after work is clearly defined and reliably executable. Autonomy is earned through demonstrated stability, not assumed upfront.

Does this architecture prevent innovation or experimentation?

No.

The architecture is designed to contain experimentation, not block it.

By separating capabilities, AI services, interfaces, and automation layers:

- experiments do not destabilize production systems

- learning compounds safely

- failures remain local and informative

This allows innovation to proceed without operational disruption.

How does this approach align with frameworks like NIST AI RMF or government assurance efforts?

Very closely.

Where many frameworks define what must be governed or evaluated, AInDotNet focuses on how those requirements become concrete system design decisions.

In practice, this often makes compliance easier by embedding governance directly into architecture rather than treating it as an external process.

Is this architecture only relevant for government or regulated industries?

No—but it is especially valuable in those environments.

Any organization that:

- operates at scale

- relies on real workflows

- cannot tolerate unpredictable behavior

- requires explainability and accountability

will benefit from an execution-first AI architecture.

How is this different from vendor reference architectures or AI platforms?

Vendor architectures typically optimize for:

- tool adoption

- platform usage

- specific product ecosystems

AInDotNet is tool-agnostic.

It provides a durable architectural model that:

- survives tool churn

- integrates with existing systems

- clarifies ownership and responsibility

- separates intelligence from execution and interaction

Platforms can be plugged into this architecture—but they do not replace it.

Have Questions?

If you have questions not addressed here, we’re happy to walk through how this architecture would apply to your specific environment and constraints. You can contact us here.