Enterprise AI rarely fails because someone forgot to get excited about it.

Most organizations have plenty of AI enthusiasm. Executives are asking about productivity gains. Department leaders are identifying possible use cases. Technical teams are experimenting with copilots, automation, Azure AI services, Power Platform, custom .NET applications, and internal knowledge systems.

The problem is not interest.

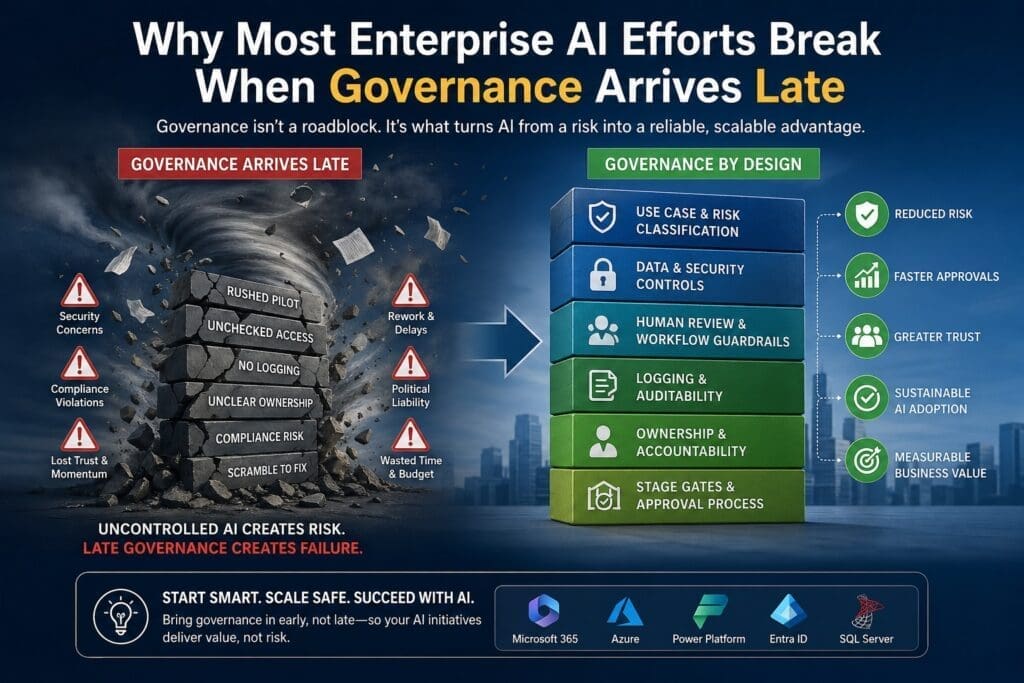

The problem is that many enterprise AI efforts move forward before anyone defines the rules for risk, security, compliance, approval, ownership, auditability, and support.

That is when governance shows up late.

And when governance shows up late, it does not feel like leadership. It feels like a roadblock.

That is one of the biggest reasons enterprise AI projects stall, get reworked, lose executive confidence, or quietly die after a promising pilot.

For Microsoft-centric organizations, this matters even more because AI is rarely isolated. It usually touches Microsoft 365, SharePoint, Teams, Azure, SQL Server, Power Platform, Active Directory / Entra ID, internal applications, sensitive business data, regulated workflows, or customer-facing systems.

In that environment, governance cannot be treated like paperwork at the end.

It has to be part of the design.

The Core Problem: AI Enthusiasm Moves Faster Than Governance

AI projects often begin with a practical question:

“What could we automate?”

That quickly becomes:

“What could we build with Copilot, Azure AI, Power Platform, or a custom application?”

That is not a bad starting point. Exploration matters. Experimentation matters. Teams need room to learn.

But enterprise AI becomes dangerous when experimentation quietly turns into implementation before governance is involved.

The pattern usually looks like this:

- A department identifies a painful workflow.

- A technical team builds a quick AI prototype.

- The demo looks impressive.

- More people want access.

- The tool starts spreading.

- Security, legal, compliance, or IT leadership finally gets pulled in.

- Everyone discovers unanswered questions.

Those questions usually include:

- What data is this AI system allowed to access?

- Are prompts and responses being logged?

- Who reviews incorrect or risky outputs?

- Can the system expose confidential information?

- Does this violate any retention, privacy, or compliance requirement?

- Who owns the business process?

- Who supports the system when it breaks?

- What happens when the model gives a bad answer?

- What approval is required before more users get access?

- Is there a rollback plan?

By the time these questions are asked, the project may already have momentum, expectations, internal champions, and political pressure.

That is when governance becomes painful.

Not because governance is bad.

Because it arrived too late.

Late Governance Turns Reviewers Into Blockers

Security, legal, compliance, and infrastructure teams are often blamed for slowing down AI adoption.

Sometimes that criticism is fair. Some organizations do have slow approval processes, unclear standards, and overly defensive review cultures.

But in many cases, the real issue is that those teams were invited too late.

If security and legal are brought in after the solution direction has already been chosen, they are forced into a defensive position.

They are no longer helping shape the design.

They are being asked to approve something they did not help define.

That creates predictable friction.

The project team feels blocked.

The governance team feels cornered.

Executives get frustrated.

The business sponsor wonders why a promising AI pilot is suddenly stuck in review.

This is avoidable.

Governance should not be treated as a final inspection after the AI system is already built. In enterprise AI, governance should be a design input.

That means security, privacy, compliance, supportability, auditability, escalation, and approval rules should influence the project before the architecture is locked in.

Governance Is Not Just Restriction. Good Governance Is a Speed Tool.

A lot of teams think governance slows down AI.

Bad governance does.

Late governance definitely does.

But good governance speeds up AI adoption because it reduces uncertainty.

A practical AI governance model helps teams answer critical questions earlier:

- Which use cases are low risk enough to test?

- Which use cases require formal review?

- Which data sources are approved?

- Which systems require human review?

- Which outputs can be automated?

- Which outputs must remain advisory?

- What evidence is needed for production approval?

- Who signs off before rollout?

- What logs are required?

- What happens when something goes wrong?

Without those answers, every AI initiative becomes a custom negotiation.

That does not scale.

A good governance model creates repeatable decision paths. Teams know what is allowed, what is restricted, what requires approval, and what must be redesigned.

That reduces rework.

It also increases executive confidence because leadership can see that AI adoption is not just a collection of experiments. It is becoming an operating capability.

This is why governance should be framed as a speed tool, not just a restriction.

The Enterprise AI Failure Pattern

Most late-governance failures follow a similar pattern.

1. The AI project starts as a demo

Someone builds something useful.

Maybe it summarizes documents.

Maybe it drafts responses.

Maybe it helps classify support tickets.

Maybe it searches internal knowledge.

Maybe it automates a manual approval step.

The first version is narrow, informal, and often manually supported.

That is fine for learning.

The mistake is pretending that a useful demo is close to production.

2. The demo creates demand

The business sees value.

Users ask for access.

Managers want to expand the pilot.

Executives want to know when the organization can scale it.

Now the project is no longer just technical experimentation.

It has become an adoption question.

That means it is also a risk question.

3. Governance enters after expectations are already set

Security asks about access control.

Legal asks about data exposure.

Compliance asks about recordkeeping.

IT asks about support ownership.

Operations asks about failure handling.

Finance asks about cost.

Leadership asks about accountability.

The project team may have answers for some of these questions, but often not all of them.

That is where the project stalls.

4. The system requires rework

The architecture may need to change.

Logging may need to be added.

Data access may need to be restricted.

Human review may need to be inserted.

Sensitive use cases may need to be removed.

Approval workflows may need to be created.

The team now has to retrofit governance into a system that was not designed for it.

That is almost always more expensive than designing governance in from the beginning.

5. Trust weakens

This is the part many teams underestimate.

When an AI project gets slowed down by late governance, people often interpret it as failure.

Executives may start to wonder whether the team moved too fast.

Business sponsors may lose patience.

Security may become more cautious.

Users may become skeptical.

The technical team may feel punished for innovating.

Once trust weakens, future AI projects become harder to approve.

That is the real cost.

Late governance does not just damage one project.

It damages organizational confidence.

Why This Is Especially Important in Microsoft-Centric Organizations

Microsoft-centric organizations often have a major advantage when adopting AI.

They already have a strong enterprise foundation:

- Microsoft 365

- Teams

- SharePoint

- OneDrive

- Azure

- SQL Server

- Power Platform

- Power BI

- Entra ID

- .NET applications

- Existing security and identity infrastructure

- Existing business workflows

That gives them many practical ways to apply AI.

But it also creates complexity.

AI may interact with documents, permissions, business applications, databases, email, chat, workflows, reporting systems, and internal knowledge repositories.

That means governance cannot be generic.

A Microsoft-centric AI governance approach should account for:

- Identity and permissions

- Data boundaries

- SharePoint and document access

- Microsoft 365 content exposure

- Azure resource usage

- Custom .NET application integration

- Power Platform ownership

- Logging and telemetry

- Human review workflows

- Role-based access

- Security review

- Compliance requirements

- Support ownership

- Change control

- Production promotion criteria

The blunt version:

If your AI system can touch enterprise data, then governance is not optional.

And if your organization waits until the end to ask governance questions, the project is already at risk.

The Wrong Way to Handle AI Governance

The wrong model looks like this:

- Pick a use case.

- Build a prototype.

- Show the demo.

- Get people excited.

- Expand the pilot.

- Ask security and legal for approval.

- Discover problems.

- Rework the system.

- Lose momentum.

This model creates conflict because governance is introduced after the project has emotional and political momentum.

By then, every concern sounds like resistance.

That is a bad operating model.

It turns governance teams into villains when they should have been design partners.

The Better Way: Governance by Design

The better model is governance by design.

That does not mean every AI idea needs a six-month approval process.

That would kill innovation.

It means every AI initiative should be routed through the right level of governance based on risk.

Low-risk experiments should move quickly.

High-risk systems should get deeper review.

The key is to define the difference before teams start building.

A practical governance-by-design model includes:

1. Use case classification

Not all AI use cases carry the same risk.

For example, an internal brainstorming assistant is different from an AI tool that recommends financial decisions, processes HR records, handles legal documents, or communicates with customers.

Classify use cases by risk level before selecting tools or architecture.

Useful categories may include:

- Internal learning experiment

- Low-risk productivity assistant

- Department workflow assistant

- Decision-support system

- Customer-impacting system

- Regulated or sensitive-data system

- Autonomous action system

Each category should have different requirements.
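
One way to make "different requirements" concrete is to encode the categories and the reviews each one triggers. The sketch below is a minimal Python illustration. It assumes a policy in which each higher-risk category inherits every review required by the categories below it. The enum names, the review names, and the specific review assigned to each category are all hypothetical; your security, legal, and compliance teams would set the real policy.

```python
from enum import Enum


class UseCaseCategory(Enum):
    """Risk categories from the list above, ordered from lowest (1) to highest (7) risk."""
    INTERNAL_EXPERIMENT = 1
    PRODUCTIVITY_ASSISTANT = 2
    DEPARTMENT_WORKFLOW = 3
    DECISION_SUPPORT = 4
    CUSTOMER_IMPACTING = 5
    REGULATED_OR_SENSITIVE = 6
    AUTONOMOUS_ACTION = 7


# Hypothetical policy: the reviews each category ADDS on top of every lower category.
ADDED_REVIEWS = {
    UseCaseCategory.INTERNAL_EXPERIMENT: {"lightweight_review"},
    UseCaseCategory.PRODUCTIVITY_ASSISTANT: {"data_review"},
    UseCaseCategory.DEPARTMENT_WORKFLOW: {"named_owner"},
    UseCaseCategory.DECISION_SUPPORT: {"human_review_design"},
    UseCaseCategory.CUSTOMER_IMPACTING: {"security_review", "legal_review"},
    UseCaseCategory.REGULATED_OR_SENSITIVE: {"compliance_review"},
    UseCaseCategory.AUTONOMOUS_ACTION: {"executive_signoff"},
}


def required_reviews(category: UseCaseCategory) -> set[str]:
    """Collect the reviews for `category` plus those of every lower-risk category."""
    reviews: set[str] = set()
    for level in UseCaseCategory:
        if level.value <= category.value:
            reviews |= ADDED_REVIEWS[level]
    return reviews
```

The cumulative design is deliberate: moving a use case into a higher category can only add reviews, never remove them.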

2. Data sensitivity review

AI risk is heavily tied to data.

Before building, ask:

- What data will the AI system access?

- Is the data public, internal, confidential, regulated, or sensitive?

- Does the system need full access, or can access be limited?

- Are existing Microsoft permissions respected?

- Are prompts and outputs stored?

- Who can inspect the logs?

- Can users accidentally expose restricted information?

For Microsoft environments, this step is critical because permissions and data access often span SharePoint, Teams, OneDrive, SQL databases, and internal applications.
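
The "can access be limited" and "are existing permissions respected" questions can be enforced in code before anything reaches the model. This is a minimal sketch under simplifying assumptions. The `Document` type, its `readers` set, and the four-level `Sensitivity` scale are hypothetical stand-ins. In a real Microsoft 365 environment, the permission check would come from the source system's existing permissions, and the sensitivity would come from your own classification or labeling scheme, not from a hand-maintained list.

```python
from dataclasses import dataclass
from enum import IntEnum


class Sensitivity(IntEnum):
    """Simplified data classes, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    REGULATED_OR_SENSITIVE = 3


@dataclass(frozen=True)
class Document:
    doc_id: str
    sensitivity: Sensitivity
    readers: frozenset[str]  # users who can already open this document in the source system


def documents_for_prompt(user: str, candidates: list[Document], ceiling: Sensitivity) -> list[Document]:
    """Return only documents the user can already read AND that sit at or
    below the sensitivity ceiling approved for this use case."""
    return [
        doc for doc in candidates
        if user in doc.readers and doc.sensitivity <= ceiling
    ]
```

Filtering before retrieval, rather than asking the model to ignore restricted content, means the model never sees data the user could not open directly.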

3. Human review rules

AI systems do not need the same level of human oversight in every scenario.

Some outputs can be fully automated.

Some should be suggestions only.

Some require approval before action.

Some should never be automated.

Define this early.

Examples:

- AI can summarize an internal meeting transcript.

- AI can suggest a response to a customer, but a human approves it before it is sent.

- AI can classify support tickets, with agents able to override the classification.

- AI should not autonomously approve legal, financial, HR, or compliance-sensitive decisions without defined controls.

Human review should not be improvised after rollout.

It should be part of the workflow design.
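
The four levels of oversight above map naturally onto a small policy table that the workflow consults before acting on an AI output. This is a hedged Python sketch. The action names and the modes assigned to them are hypothetical examples drawn from the list above, not a recommended policy.

```python
from enum import Enum
from typing import Optional


class ReviewMode(Enum):
    AUTOMATED = "automated"          # output may be used directly
    SUGGESTION_ONLY = "suggestion"   # shown to a person; the system never acts on it
    APPROVAL_REQUIRED = "approval"   # a named person must approve before action
    NEVER_AUTOMATE = "never"         # AI output must not trigger this action


# Hypothetical policy table based on the examples above.
REVIEW_POLICY = {
    "summarize_internal_meeting": ReviewMode.AUTOMATED,
    "classify_support_ticket": ReviewMode.AUTOMATED,  # agents can still override the label
    "draft_internal_report": ReviewMode.SUGGESTION_ONLY,
    "send_customer_reply": ReviewMode.APPROVAL_REQUIRED,
    "approve_hr_decision": ReviewMode.NEVER_AUTOMATE,
    "approve_financial_decision": ReviewMode.NEVER_AUTOMATE,
}


def may_act(action: str, approved_by: Optional[str] = None) -> bool:
    """Return True if the system may act on the AI output for `action` now."""
    # Actions missing from the policy fail closed.
    mode = REVIEW_POLICY.get(action, ReviewMode.NEVER_AUTOMATE)
    if mode is ReviewMode.AUTOMATED:
        return True
    if mode is ReviewMode.APPROVAL_REQUIRED:
        return approved_by is not None
    # SUGGESTION_ONLY and NEVER_AUTOMATE: a person acts, not the system.
    return False
```

Unknown actions default to `NEVER_AUTOMATE`, so a new capability cannot act on its own until someone explicitly adds it to the policy.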

4. Logging and auditability

No logging means no trust.

If the organization cannot inspect what happened, it cannot support the system.

At minimum, serious AI systems should consider logging:

- User request

- Input data references

- Prompt template or instruction version

- AI response

- Model or service used

- Confidence indicators, if available

- Exceptions

- Latency

- User feedback

- Human overrides

- Escalations

- Version or configuration changes

This is not just for debugging.

It is for trust, compliance, improvement, and accountability.
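
A single structured record per AI interaction keeps those fields together and queryable. The following is a minimal sketch that assumes a Python service writing JSON lines to whatever logging or telemetry pipeline the organization already uses. The class and field names are hypothetical. Note that `input_refs` stores references to source data rather than copies. Stored prompt and response text may itself be confidential, so the log store needs its own access and retention rules.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


def _utc_now() -> str:
    return datetime.now(timezone.utc).isoformat()


@dataclass
class AIInteractionLog:
    user_id: str
    request: str
    input_refs: list[str]            # IDs or URLs of source data, not copies of it
    prompt_version: str              # which prompt/instruction template was used
    response: str
    model: str                       # model or service that produced the response
    config_version: str              # deployment/configuration version
    latency_ms: int
    confidence: Optional[float] = None     # only if the service exposes one
    exception: Optional[str] = None
    user_feedback: Optional[str] = None
    human_override: Optional[str] = None   # what a reviewer changed, if anything
    escalated: bool = False
    timestamp: str = field(default_factory=_utc_now)

    def to_json(self) -> str:
        """Serialize as one JSON line for the organization's log pipeline."""
        return json.dumps(asdict(self))
```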

5. Stage gates

Stage gates prevent teams from confusing experiments, pilots, MVPs, and production systems.

A simple AI stage-gate model might look like this:

| Stage | Purpose | Governance Requirement |
|---|---|---|
| Experiment | Learn what is possible | Lightweight review, no sensitive data |
| Pilot | Test with limited users | Data review, owner assigned, usage boundaries |
| MVP | Validate real workflow value | Logging, support path, human review, risk review |
| Production | Operational deployment | Formal approval, monitoring, escalation, change control |

This is one of the most practical ways to prevent AI chaos.

It allows experimentation without pretending every experiment is production-ready.
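
The stage-gate table can also be expressed as a promotion check that lists what is still missing before a system moves up. This Python sketch rests on two assumptions. First, the requirement names are shorthand for the table's cells. Second, requirements accumulate from Pilot upward, so a production system still needs an owner, logging, and so on. The Experiment row's "no sensitive data" rule is treated as a limit on experiments only, so it is not carried into later stages.

```python
from enum import IntEnum


class Stage(IntEnum):
    EXPERIMENT = 0
    PILOT = 1
    MVP = 2
    PRODUCTION = 3


# Shorthand for the "Governance Requirement" column in the stage-gate table.
STAGE_REQUIREMENTS = {
    Stage.EXPERIMENT: {"lightweight_review", "no_sensitive_data"},
    Stage.PILOT: {"data_review", "owner_assigned", "usage_boundaries"},
    Stage.MVP: {"logging", "support_path", "human_review", "risk_review"},
    Stage.PRODUCTION: {"formal_approval", "monitoring", "escalation", "change_control"},
}


def required_for(target: Stage) -> set[str]:
    """Everything a system must show to operate at `target`."""
    if target is Stage.EXPERIMENT:
        return set(STAGE_REQUIREMENTS[Stage.EXPERIMENT])
    # From Pilot upward, requirements accumulate: production still needs an
    # owner, logging, a support path, and so on.
    required: set[str] = set()
    for stage in Stage:
        if Stage.PILOT <= stage <= target:
            required |= STAGE_REQUIREMENTS[stage]
    return required


def missing_for(target: Stage, evidence: set[str]) -> set[str]:
    """Requirements not yet met for promotion to `target`."""
    return required_for(target) - evidence
```

A promotion request can then be answered with a concrete list of gaps instead of an open-ended review.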

6. Clear ownership

Every AI system needs owners.

Not just a developer.

Not just a business sponsor.

Real ownership includes:

- Business process owner

- Technical owner

- Data owner

- Support owner

- Security reviewer

- Compliance/legal reviewer when needed

- Executive sponsor for higher-risk systems

Without ownership, AI systems become orphaned experiments.

Orphaned experiments become operational risk.
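
An ownership record can be checked the same way. That way, a system without a named support owner is flagged before rollout rather than after its first incident. Here is a minimal Python sketch, assuming hypothetical field names that mirror the roles listed above. The compliance/legal reviewer and executive sponsor are required only when the caller says the system needs them, matching the "when needed" and "higher-risk" qualifiers.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Ownership:
    business_process_owner: Optional[str] = None
    technical_owner: Optional[str] = None
    data_owner: Optional[str] = None
    support_owner: Optional[str] = None
    security_reviewer: Optional[str] = None
    compliance_legal_reviewer: Optional[str] = None
    executive_sponsor: Optional[str] = None


# Roles every AI system needs, regardless of risk.
ALWAYS_REQUIRED = (
    "business_process_owner",
    "technical_owner",
    "data_owner",
    "support_owner",
    "security_reviewer",
)


def missing_roles(owners: Ownership, needs_legal_review: bool, higher_risk: bool) -> list[str]:
    """List required roles that have no named person assigned."""
    required = list(ALWAYS_REQUIRED)
    if needs_legal_review:
        required.append("compliance_legal_reviewer")
    if higher_risk:
        required.append("executive_sponsor")
    return [role for role in required if not getattr(owners, role)]
```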

Start With Bounded-Risk Use Cases

One of the best ways to avoid governance problems is to start with bounded-risk use cases.

A bounded-risk AI use case has:

- Clear ownership

- Limited data exposure

- Reversible outcomes

- Human review where needed

- Measurable business value

- Low customer/legal/regulatory impact

- Defined support expectations

- A clear path to improvement or shutdown

This does not mean the use case has to be trivial.

It means the blast radius is controlled.
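
The bounded-risk criteria work well as an explicit yes/no profile. That makes the gaps visible instead of leaving "low risk" as a judgment call. This is a hypothetical Python sketch in which each field corresponds to one criterion in the list above. Deciding whether a criterion is actually met remains a human assessment.

```python
from dataclasses import dataclass, fields


@dataclass
class UseCaseProfile:
    clear_ownership: bool
    limited_data_exposure: bool
    reversible_outcomes: bool
    human_review_where_needed: bool
    measurable_value: bool
    low_external_impact: bool      # low customer, legal, and regulatory impact
    defined_support: bool
    exit_path: bool                # clear path to improvement or shutdown


def unmet_criteria(profile: UseCaseProfile) -> list[str]:
    """Names of bounded-risk criteria this use case does not yet meet, in list order."""
    return [f.name for f in fields(profile) if not getattr(profile, f.name)]


def is_bounded_risk(profile: UseCaseProfile) -> bool:
    return not unmet_criteria(profile)
```

For example, a customer-facing autonomous reply bot would typically fail on data exposure, reversibility, human review, and external impact, which is exactly why it makes a poor first project.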

Good early candidates often include:

- Internal document summarization

- Drafting assistance with human approval

- Internal knowledge search

- Support ticket classification

- Meeting summary workflows

- Requirements analysis support

- Report drafting

- Data cleanup recommendations

- Workflow triage

- Internal training assistants

Riskier candidates include:

- Customer-facing autonomous responses

- HR decision support

- Legal interpretation

- Financial recommendations

- Compliance determinations

- Medical or safety-related decisions

- Fully automated approvals

- AI actions that change production data without review

The practical recommendation is simple:

Do not start your enterprise AI program with the use case most likely to trigger legal, compliance, and security resistance.

Start where you can prove value, build trust, and mature the governance model.

The Production Warning: Unsupported AI Systems Become Political Liabilities

This is where enterprise AI gets ugly.

A small unsupported AI tool may look harmless at first.

But once users rely on it, expectations change.

If it produces a bad answer, exposes the wrong data, makes a poor recommendation, or breaks during a critical workflow, the question becomes:

“Who approved this?”

That is when an AI tool becomes a political liability.

The danger is not just technical failure.

The danger is organizational embarrassment.

Executives do not want to defend systems that lack:

- Ownership

- Approval history

- Risk review

- Logs

- Support model

- Security controls

- Escalation process

- Clear usage boundaries

Once an AI project becomes politically risky, future AI projects face more scrutiny.

That is why governance needs to arrive early.

Not to slow innovation.

To protect it.

A Practical AI Governance Checklist

Before moving an enterprise AI project beyond early experimentation, ask these questions:

Business Fit

- What business problem does this solve?

- Who owns the workflow?

- What measurable value is expected?

- What happens if the AI output is wrong?

- Is the use case advisory, assistive, or autonomous?

Data and Security

- What data does the system access?

- Is any data confidential, regulated, or sensitive?

- Are permissions inherited from existing Microsoft systems?

- Can users access data they should not see?

- Are prompts and responses stored?

- Where are logs stored?

Workflow and Human Review

- Where does AI fit in the workflow?

- What decisions remain human-owned?

- What outputs require review?

- What exceptions require escalation?

- Can users override the AI?

Production Readiness

- Who supports the system?

- What monitoring exists?

- What failure modes are expected?

- What fallback process exists?

- How are changes tested and approved?

- What is the rollback plan?

Governance and Approval

- Who must review the project?

- What evidence is required for approval?

- What stage is the project in: experiment, pilot, MVP, or production?

- What risk level has been assigned?

- What conditions must be met before broader rollout?

If the team cannot answer these questions, the project is not ready for broad deployment.

That does not mean the project is bad.

It means the operating model is incomplete.
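
The checklist can be tracked as data so that gaps are reported per section. Here is a minimal Python sketch, assuming answers are recorded as free text keyed by question. The function names are hypothetical, and the example checklist is trimmed to two sections for brevity. A blank answer counts as unanswered.

```python
from typing import Optional

Checklist = dict[str, list[str]]        # section name -> questions
Answers = dict[str, Optional[str]]      # question -> recorded answer


def unanswered(checklist: Checklist, answers: Answers) -> dict[str, list[str]]:
    """Group unanswered questions by section; fully answered sections are omitted."""
    gaps: dict[str, list[str]] = {}
    for section, questions in checklist.items():
        missing = [q for q in questions if not (answers.get(q) or "").strip()]
        if missing:
            gaps[section] = missing
    return gaps


def ready_for_broad_deployment(checklist: Checklist, answers: Answers) -> bool:
    return not unanswered(checklist, answers)


# A trimmed example using two sections of the checklist above.
EXAMPLE_CHECKLIST: Checklist = {
    "Business Fit": ["Who owns the workflow?", "What happens if the AI output is wrong?"],
    "Production Readiness": ["Who supports the system?", "What is the rollback plan?"],
}
```

An empty result does not prove the answers are good. It only shows that someone has answered every question, which is the minimum bar for a broader rollout.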

The Role of Stage Gates and Guardrails

Stage gates and guardrails are not bureaucracy when they are designed well.

They are construction controls.

They help the organization decide:

- What can be tested quickly

- What needs review

- What cannot move forward yet

- What must be logged

- What requires human approval

- What is safe to scale

- What needs executive sign-off

The best AI governance systems do not treat every idea the same.

They separate lightweight experimentation from serious production deployment.

That distinction matters.

Without it, organizations either move too recklessly or become so cautious that nothing ships.

Both are bad.

The goal is not maximum speed.

The goal is controlled speed.

What Enterprise Leaders Should Do Next

If your organization is already experimenting with AI, do not wait until a pilot gets popular before defining governance.

Start now.

The next practical steps are:

- Inventory current AI experiments and pilots.

- Classify each by risk level.

- Identify what data each system touches.

- Assign business and technical owners.

- Define logging expectations.

- Decide where human review is required.

- Establish stage gates for experiment, pilot, MVP, and production.

- Bring security, legal, compliance, IT, and business leadership into the design process earlier.

- Start with bounded-risk use cases.

- Create a repeatable AI approval process.

This does not need to be perfect on day one.

But it does need to exist.

Because without governance, enterprise AI becomes a collection of disconnected experiments.

And disconnected experiments do not scale.

Conclusion: Late Governance Breaks Trust

Most enterprise AI efforts do not break because the technology is useless.

They break because the organization moves faster than its operating model.

Governance arrives after expectations are set.

Security and legal become blockers.

Technical teams are forced into rework.

Business sponsors lose momentum.

Executives lose confidence.

Users lose trust.

That is avoidable.

Enterprise AI needs governance early, not late.

The organizations that figure this out will move faster because they will know how to evaluate risk, approve use cases, protect sensitive data, support production systems, and scale AI responsibly.

The organizations that ignore it will keep repeating the same pattern:

Exciting demo.

Messy review.

Painful rework.

Lost momentum.

AI governance is not the enemy of progress.

Late governance is.

Frequently Asked Questions

What is enterprise AI governance?

Enterprise AI governance is the set of policies, review steps, ownership rules, risk controls, and approval processes used to manage how AI systems are selected, built, deployed, monitored, and supported inside an organization.

In practical terms, it answers questions like: what data can AI access, who owns the workflow, who approves rollout, what gets logged, when human review is required, and what happens when the AI system fails or produces a bad result.

Why do enterprise AI projects fail when governance arrives late?

Enterprise AI projects fail when governance arrives late because security, legal, compliance, infrastructure, and support concerns are discovered after the project already has momentum.

At that point, the team may need to redesign data access, add logging, restrict functionality, create approval workflows, define ownership, or insert human review. That causes rework, delays, frustration, and loss of trust.

The problem is not governance itself. The problem is governance being treated as a final approval step instead of an early design input.

Does AI governance slow down innovation?

Bad governance slows down innovation. Late governance slows down innovation. But good governance can speed up AI adoption by creating clear rules for what can move forward, what needs review, and what is too risky.

A practical AI governance framework helps teams avoid repeated custom approval battles. It gives business leaders, developers, security teams, and compliance teams a shared process for evaluating AI use cases.

The goal is not bureaucracy. The goal is controlled speed.

When should security, legal, and compliance teams be involved in an AI project?

Security, legal, and compliance teams should be involved before the solution direction is locked in, especially if the AI system touches sensitive data, customer information, regulated workflows, employee records, financial data, legal documents, or production systems.

They do not need to control every early experiment. But they should help define risk categories, data boundaries, approval requirements, logging expectations, and human review rules before the project moves from experiment to pilot or production.

What are AI stage gates?

AI stage gates are review checkpoints that help an organization decide whether an AI initiative is still an experiment or is ready for a pilot, an MVP, or production.

A simple stage-gate model might include:

- Experiment: learning what is possible

- Pilot: limited-user test

- MVP: real workflow validation

- Production: supported operational deployment

Each stage should have different requirements for logging, data access, ownership, human review, security review, and approval.

What is a bounded-risk AI use case?

A bounded-risk AI use case is an AI project where the potential damage is limited, the workflow is clearly owned, the data exposure is controlled, and the outcome can be reviewed or reversed.

Examples include internal document summarization, meeting summaries, support ticket classification, draft generation with human review, internal knowledge search, and report drafting.

Bounded-risk use cases are usually better starting points than customer-facing, regulated, autonomous, or high-liability AI systems.

What should be logged in an enterprise AI system?

An enterprise AI system should usually log enough information to support troubleshooting, auditing, improvement, and accountability.

Depending on the use case, logs may include:

- User request

- Input references

- Prompt or instruction version

- AI response

- Model or service used

- Exceptions

- Latency

- User feedback

- Human overrides

- Escalations

- Configuration changes

The blunt rule is simple: if you cannot inspect what happened, you cannot support or govern the system.

How should Microsoft-centric organizations approach AI governance?

Microsoft-centric organizations should connect AI governance to the systems they already use, including Microsoft 365, SharePoint, Teams, Azure, Power Platform, SQL Server, Entra ID, and custom .NET applications.

The governance model should address identity, permissions, data access, logging, workflow ownership, human review, approval rules, support responsibility, and production promotion criteria.

This is especially important because AI in a Microsoft enterprise usually touches real business data, existing workflows, and operational systems.
