This is the contrarian point many teams need to hear:

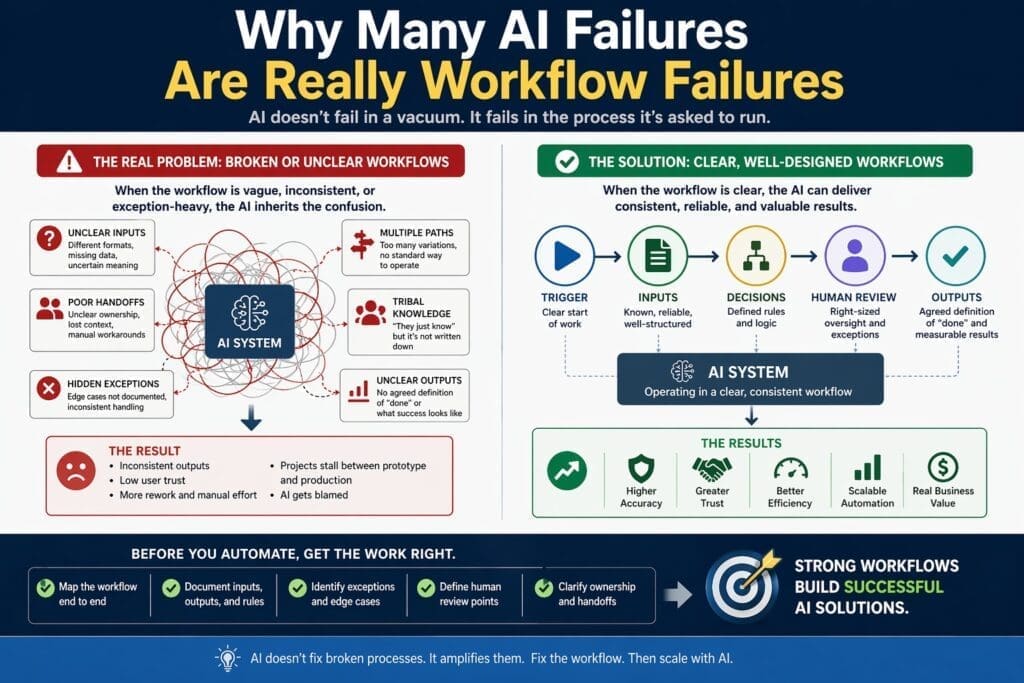

Many AI failures are actually workflow-definition failures, not model failures.

The model becomes the most visible part of the system, so it gets blamed first. But if the workflow around it is unclear, even a capable model will look unreliable.

Examples include:

- inconsistent outputs because the desired result was never clearly defined

- bad routing because handoff rules were vague

- low confidence in automation because exceptions were undocumented

- user frustration because review points were not specified

- operational tension because no one owned the process end to end

In those cases, the AI did not create the confusion. It exposed it.

That is why mature AI teams diagnose failures at two levels:

- model behavior

- workflow definition around the model

If you only inspect the model, you may spend time tuning prompts, changing providers, or rebuilding interfaces while the real problem remains untouched.

Hidden Exceptions Destroy Automation Credibility

One undocumented exception path can make an AI workflow look unreliable.

That is because users do not judge automation only by the happy path. They judge it by what happens when reality becomes inconvenient.

Common hidden exceptions include:

- incomplete records

- unusual document formats

- conflicting source data

- late approvals

- special customer handling

- policy exceptions

- manual overrides known only by experienced staff

If the team does not surface these conditions during workflow analysis, they become visible later as production failures.

This is why exception discovery is a critical part of workflow readiness. Teams should ask:

- What goes wrong most often?

- What cases get escalated?

- What do experienced employees watch for?

- Where does rework happen?

- Which conditions require mandatory human review?

A workflow does not need to eliminate every exception before AI can help. But the organization does need to know what those exceptions are and how they should be handled.

Users can tolerate limits more easily than they can tolerate surprise.
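One way to make exception discovery concrete is to record each known exception next to its agreed handling, and to treat anything not in the record as a case for a person. The sketch below is only an illustration: the condition names, handling text, and the `plan_handling` function are all hypothetical, and a real registry would come out of the team's own workflow analysis.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExceptionRule:
    description: str    # what the condition looks like in practice
    handling: str       # the agreed response when it appears
    human_review: bool  # True if a person must look at the case


# Hypothetical registry; the entries are examples, not a recommended set.
KNOWN_EXCEPTIONS = {
    "incomplete_record": ExceptionRule(
        "Required fields are missing",
        "request missing fields from submitter",
        True,
    ),
    "late_approval": ExceptionRule(
        "Approval arrived after the deadline",
        "continue and flag for the process owner",
        False,
    ),
    "conflicting_source_data": ExceptionRule(
        "Two source systems disagree",
        "hold until the data owner resolves the conflict",
        True,
    ),
}


def plan_handling(conditions):
    """Return (needs_human_review, actions) for the detected conditions."""
    actions = []
    needs_review = False
    for condition in conditions:
        rule = KNOWN_EXCEPTIONS.get(condition)
        if rule is None:
            # Undocumented exception: surface it instead of guessing.
            actions.append(f"escalate undocumented condition: {condition}")
            needs_review = True
        else:
            actions.append(rule.handling)
            needs_review = needs_review or rule.human_review
    return needs_review, actions
```

The design choice that matters here is the fallback: a condition nobody documented is escalated rather than processed silently, so users meet a stated limit instead of a surprise.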

Workflow Clarity Before Orchestration

Many teams get excited about orchestration too early.

They start discussing:

- agentic flows

- tool calling

- routing logic

- multi-step automation

- cross-system handoffs

- retrieval and decision loops

But orchestration only works well when the underlying work is already clear.

Good orchestration depends on:

- known work

- bounded decisions

- explicit handoffs

- defined review points

- understood escalation paths

If the workflow is unclear, orchestration does not solve the problem. It magnifies it.

A more complex system that passes ambiguity from one step to the next is still an ambiguous system. It is just harder to diagnose and support.

This is why workflow clarity should be treated as a prerequisite for orchestration, not something architecture is supposed to compensate for.
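Before any orchestration framework is chosen, the workflow itself can be written down as plain data and checked for gaps. The following is a minimal sketch under assumed names: `Step`, `find_gaps`, and the sample steps are hypothetical and not tied to any particular orchestration tool.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Step:
    name: str
    next_step: Optional[str]    # explicit handoff; None marks the final step
    escalate_to: Optional[str]  # role that takes cases this step cannot decide
    human_review: bool = False  # True if a person must approve the output


def find_gaps(steps: List[Step]) -> List[str]:
    """List the places where the workflow definition is still ambiguous."""
    names = {step.name for step in steps}
    gaps = []
    for step in steps:
        if step.next_step is not None and step.next_step not in names:
            gaps.append(f"{step.name}: hands off to undefined step '{step.next_step}'")
        if step.escalate_to is None:
            gaps.append(f"{step.name}: no escalation path")
    return gaps


# Hypothetical two-step workflow with deliberate gaps.
draft_workflow = [
    Step("classify_request", next_step="draft_reply", escalate_to="support_lead"),
    Step("draft_reply", next_step="send_reply", escalate_to=None, human_review=True),
]

for gap in find_gaps(draft_workflow):
    print(gap)
```

In this example the check reports that `draft_reply` hands off to a step nobody defined and has no escalation path. Those are exactly the ambiguities that an orchestration layer would otherwise carry forward unnoticed.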

A Simple Workflow-Readiness Check Before AI Design

One of the best practical steps an organization can take is to introduce a lightweight workflow-readiness check before proposing AI.

Ask:

- Is the trigger clear?

- Are the inputs known and available?

- Are the main decision points understood?

- Are outputs defined clearly enough for people to agree on them?

- Are common exceptions documented?

- Are human review points explicit?

- Is there a real owner for the process?

If several of those answers are unclear, the workflow is probably not ready for AI design yet.
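The check above is simple enough to capture in a few lines of code, for example as part of an intake form for AI proposals. This is a sketch, not a standard: the question keys and the `readiness_check` function are hypothetical, and reading "several" as three or more unclear answers is an assumption that each organization should set for itself.

```python
# The seven questions from the readiness check, keyed by hypothetical short names.
READINESS_QUESTIONS = {
    "trigger_clear": "Is the trigger clear?",
    "inputs_available": "Are the inputs known and available?",
    "decisions_understood": "Are the main decision points understood?",
    "outputs_defined": "Are outputs defined clearly enough for people to agree on them?",
    "exceptions_documented": "Are common exceptions documented?",
    "review_points_explicit": "Are human review points explicit?",
    "owner_assigned": "Is there a real owner for the process?",
}


def readiness_check(answers, unclear_limit=3):
    """Return (ready, unclear_questions).

    A missing answer counts as unclear. The workflow is treated as not
    ready once `unclear_limit` or more questions are unclear; the default
    of 3 is an assumed reading of "several".
    """
    unclear = [
        text
        for key, text in READINESS_QUESTIONS.items()
        if not answers.get(key, False)
    ]
    return len(unclear) < unclear_limit, unclear
```

Calling `readiness_check({"trigger_clear": True, "inputs_available": True})` would report the workflow as not ready and return the five unanswered questions, which gives the team a concrete list of preparatory work.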

That does not mean the idea is bad. It means the organization has preparatory work to do first.

In many cases, improving workflow definition creates business value before any AI is introduced at all.

Why This Matters for Microsoft-Centric Enterprises

For medium and large organizations using Microsoft technologies, workflow clarity is especially important because the toolset is broad and the temptation to move quickly is high.

Teams may have access to:

- Microsoft 365

- Power Platform

- Azure AI

- custom .NET applications

- workflow automation tools

- reporting and data platforms

That flexibility is a strength. But it also creates risk. If the workflow is poorly defined, teams can build impressive-looking solutions in the wrong place, using the wrong pattern, with weak operational fit.

Workflow clarity protects against that.

It helps organizations choose better starting points, align the right stakeholders, reduce rework, and build AI solutions that fit real business operations rather than theoretical use cases.

Final Thoughts

Enterprise AI works best when it is applied to work that is already understood well enough to improve.

If the workflow is vague, undocumented, politically dependent, or packed with hidden exceptions, the automation will inherit those weaknesses. Then the project gets blamed for being an AI failure when it was really a workflow failure from the start.

The practical lesson is simple:

You cannot automate work you cannot clearly define.

Before selecting a model, pattern, or platform, define the workflow. Map the trigger, inputs, decisions, outputs, exceptions, and review points. Make ownership explicit. Expose the hidden complexity early.

That discipline may feel less exciting than jumping straight into AI. But in real enterprise environments, it is one of the clearest differences between a demo that looks smart and a system that actually works.

Frequently Asked Questions

What does it mean to say an AI failure is really a workflow failure?

It means the AI system is being blamed for problems that were actually caused by unclear processes, weak handoffs, undocumented exceptions, inconsistent inputs, or poor operational design. In many cases, the AI exposed workflow weaknesses that were already there.

Why do unclear workflows cause AI projects to fail?

Because AI depends on structure. If the workflow is vague, politically dependent, exception-heavy, or based on tribal knowledge, the automation inherits that confusion. The result is unstable output, inconsistent decisions, and low trust from users.

Are AI models usually the main reason enterprise AI projects fail?

Not necessarily. Sometimes the model is the issue, but many enterprise AI failures are caused by problems outside the model, such as workflow ambiguity, unclear ownership, weak governance, poor data quality, or missing review paths.

How can a workflow problem look like an AI problem?

If the desired output is not clearly defined, exceptions are undocumented, or handoffs are inconsistent, the AI system may appear unreliable. Users then blame the model, even though the deeper issue is that the process itself was never stable enough to automate cleanly.

What are common signs that a workflow is not ready for AI?

Common warning signs include different people describing the same process differently, unclear ownership, inconsistent inputs, undocumented exceptions, vague review points, and heavy dependence on experienced employees to “just know” what to do.

Why is workflow clarity important before choosing AI tools?

Because the workflow determines what kind of solution fits. Teams should understand the trigger, inputs, decisions, outputs, exceptions, and review points before deciding whether the use case belongs in Copilot, Power Platform, Azure AI, or a custom .NET application.

Can AI still help if the workflow is messy?

Sometimes, but the results are usually weaker and harder to trust. In many cases, the better first step is to clarify the workflow before introducing AI. That often improves operations immediately and creates a more stable foundation for automation later.

What is the difference between automating a task and improving a workflow?

Automating a task improves one visible step. Improving a workflow means understanding and improving the full process, including approvals, handoffs, exception handling, review points, and downstream effects. Enterprise value usually comes from workflow improvement, not isolated task automation.

How should teams evaluate whether a workflow is AI-ready?

A simple readiness check should ask whether the trigger is clear, the inputs are known, the decision points are understood, the outputs are defined, common exceptions are documented, review points are explicit, and ownership is clear.

Why do hidden exceptions damage trust in AI systems?

Because users do not judge automation only on the happy path. They judge it by how it handles real-world complexity. One undocumented exception can make the system feel unreliable, even if it works well most of the time.

What roles should be involved in defining workflows before AI implementation?

Department heads, project managers, business analysts, programmers, operations leaders, and data stakeholders should all help define the workflow. Each sees different parts of the process, and that broader view helps expose hidden assumptions early.

How can organizations reduce workflow-related AI failures?

They can reduce failures by mapping one workflow end to end, documenting exceptions, defining review points, clarifying ownership, improving data quality, and introducing a workflow-readiness check before starting AI design.