Enterprise AI often gets blamed when projects fail. The model was inconsistent. The output was weak. The prompt did not work. The automation missed edge cases. The workflow broke under real usage.

Sometimes those complaints are true.

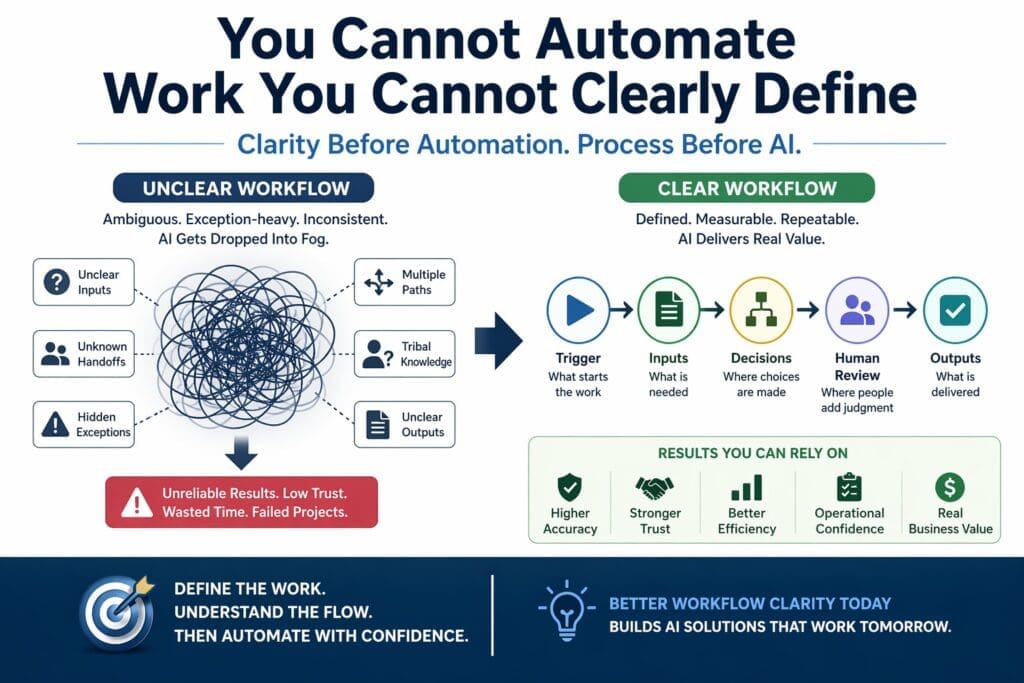

But in many organizations, the deeper problem starts earlier. The real issue is not that the AI was bad. It is that the work itself was never clearly defined in the first place.

That matters because AI cannot reliably improve work that the business cannot clearly explain. If the process is vague, politically dependent, exception-heavy, or based on tribal knowledge, the automation inherits that confusion. Then the project gets labeled an AI failure when it was really a workflow-definition failure wearing AI clothing.

For medium and large Microsoft-centric organizations, this is one of the most important realities in applied AI. Before choosing Copilot, Power Platform, Azure AI, or custom .NET development, teams need a clearer answer to a more basic question:

Do we actually understand the work well enough to automate it responsibly?

Why Workflow Clarity Matters in Enterprise AI

Many business leaders think of AI as a way to make work faster. That is true, but only when the work has enough structure to support automation.

A workflow is not just a task. A workflow includes:

- what triggers the work

- what inputs are required

- what decisions happen along the way

- what outputs are expected

- what exceptions show up

- where human review is required

- who owns the result

When those things are unclear, the AI solution gets dropped into fog.

The team may know that people spend hours reviewing documents, triaging requests, classifying records, drafting responses, or checking forms. That pain is real. But pain alone is not enough to justify automation. If nobody has clearly mapped how the work actually moves from start to finish, the organization is trying to automate ambiguity.

That is where enterprise AI projects become unstable.

The Hidden Problem: People Know the Work, but the Organization Does Not

One reason this issue is so common is that experienced employees often know how to get the work done even when the workflow itself has never been formally defined.

They know:

- which exceptions matter

- when to escalate

- when to ignore a rule

- which data source is usually wrong

- which step takes priority when two things conflict

- which customer or department gets special handling

That hidden operational knowledge keeps the business moving. But it does not translate cleanly into automation.

Humans can compensate for weak process design because they use judgment, context, and experience. AI systems cannot safely depend on unwritten institutional memory in the same way. If the workflow is not explicit, the system has nothing stable to anchor to.

This is why many AI projects look reasonable in theory but break in practice. The business assumed the process was understood because experienced people could do it. But doing the work and defining the work are not the same thing.

Why Unclear Workflows Kill AI Projects

When the workflow is unclear, the AI initiative begins to fail in predictable ways.

1. Requirements become unstable

The team starts with one version of the process, then discovers exceptions, alternative paths, and informal rules later. Scope keeps shifting because the work was never fully understood.

2. Automation solves the visible task, not the real process

Organizations often automate the part that looks easiest, such as summarization, extraction, or classification, while ignoring approvals, handoffs, rework loops, and escalation points.

3. Testing becomes weak

If the business cannot define success clearly, technical teams cannot test the solution properly. The system may appear correct in a demo but fail under real operating conditions.

4. Trust breaks quickly

Users encounter edge cases the team never documented. The system feels unpredictable, and confidence drops fast.

5. The AI becomes the scapegoat

The model gets blamed for behavior that actually came from poor operational definition. Leaders think the AI failed when the real failure happened earlier in workflow analysis.

This is one reason enterprise AI stalls between prototype and production. The prototype proves that something is possible. Production reveals that the workflow itself was never solid enough to automate cleanly.

The Common Mistake: Automating the Task Instead of the Workflow

One of the most common enterprise AI mistakes is focusing on the visible task rather than the full workflow.

For example, a team may decide to automate document classification. On the surface, that sounds reasonable. But classification is only one step. The broader workflow might also include:

- document intake

- source verification

- duplicate checking

- confidence thresholds

- exception handling

- human review

- routing to the next stage

- downstream data updates

- compliance retention rules

If the AI improves classification but the rest of the process remains unclear, the business may gain very little. In some cases, it may create more work because employees now have to correct, validate, or reroute output that the system handled inconsistently.
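To make the point concrete, here is a minimal Python sketch of how a classification result might be routed once the surrounding steps are written down. It is an illustration under stated assumptions, not a reference design: the queue names, the `REVIEW_THRESHOLD` value, and the `route` function are all hypothetical, and the order of the checks is exactly the kind of decision the business has to make explicitly.

```python
from dataclasses import dataclass

# Hypothetical cutoff; the real value is a business decision, not a technical default.
REVIEW_THRESHOLD = 0.85


@dataclass
class Classification:
    document_id: str
    label: str
    confidence: float  # model-reported score between 0 and 1


def route(result: Classification, known_labels: set[str], seen_ids: set[str]) -> str:
    """Return the name of the next queue for a classified document."""
    # Duplicate checking comes first so the same document is not processed twice.
    if result.document_id in seen_ids:
        return "duplicate_check"
    # A label the workflow does not recognize is an exception, not a low-confidence guess.
    if result.label not in known_labels:
        return "exception_handling"
    # Below the agreed threshold, a person confirms or corrects the label.
    if result.confidence < REVIEW_THRESHOLD:
        return "human_review"
    # Otherwise the document moves to the next stage for its label.
    return f"route:{result.label}"
```

Every branch here corresponds to a question someone must answer before the model is involved: what counts as a duplicate, which labels are valid, and how much uncertainty is acceptable. If those answers do not exist, the classifier's accuracy alone will not fix the process.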

This is why automating a task is not the same thing as improving a workflow.

A local improvement can look impressive in a meeting. But enterprise value comes from improving the actual operating chain, not just one visible action inside it.

How to Know If a Workflow Is Too Unclear for AI

Not every process is ready for AI. Some are too vague, too political, or too exception-heavy to automate responsibly without first cleaning up the operational design.

Warning signs include:

- different people describe the same process differently

- no one can agree on the correct output

- exceptions are handled informally

- approvals are based on personal judgment rather than defined rules

- the process depends heavily on tribal knowledge

- handoffs between teams are poorly defined

- data inputs are inconsistent or unreliable

- there is no clear owner for the process

- staff say things like “it depends” at almost every step

None of these signs means the workflow is hopeless. But they do mean the organization should not jump straight into AI implementation.

First, the workflow needs to become more explicit.
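One lightweight way to use the warning signs above is as a shared checklist during discovery. The Python sketch below is only an illustration: the wording of each sign is condensed from the list, and the `readiness_report` function and its output keys are hypothetical. It deliberately avoids a numeric score, since the article does not define one.

```python
# Condensed from the warning signs listed above.
WARNING_SIGNS = [
    "people describe the process differently",
    "no agreement on the correct output",
    "exceptions handled informally",
    "approvals rest on personal judgment",
    "heavy reliance on tribal knowledge",
    "handoffs poorly defined",
    "inputs inconsistent or unreliable",
    "no clear process owner",
    '"it depends" at almost every step',
]


def readiness_report(observed: set[str]) -> dict:
    """Summarize which warning signs were observed for one workflow."""
    unknown = observed - set(WARNING_SIGNS)
    if unknown:
        # Reject typos so the checklist stays consistent across teams.
        raise ValueError(f"Unrecognized warning signs: {sorted(unknown)}")
    present = [sign for sign in WARNING_SIGNS if sign in observed]
    return {
        "signs_present": present,
        "count": len(present),
        # Any observed sign means the workflow should be made explicit first.
        "clarify_first": bool(present),
    }
```

The value is less in the code than in forcing the team to record, for a specific workflow, which signs actually apply.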

Map One Workflow End to End Before Choosing the AI Pattern

A practical way to improve AI project quality is to map one workflow end to end before selecting tools or patterns.

That means documenting:

Trigger

What event starts the workflow?

Inputs

What information is required, and where does it come from?

Decision points

Where does judgment enter the process?

Outputs

What result should exist at the end?

Exceptions

What goes wrong, and what alternative paths are common?

Human review points

Where should a person approve, reject, confirm, or override?

This does not need to become an overengineered process documentation exercise. It just needs to be honest and operationally useful.

The value of this step is that it exposes the real shape of the work before the team starts debating technology.
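The six elements above can be captured in a very small structure. The following Python sketch is one possible shape, not a prescribed template: the `WorkflowMap` class, its `gaps` method, and the invoice example are all hypothetical and exist only to show how missing elements become visible once the map is written down.

```python
from dataclasses import dataclass, field, fields


@dataclass
class WorkflowMap:
    """One workflow, described by the six elements listed above."""
    trigger: str = ""
    inputs: list[str] = field(default_factory=list)
    decision_points: list[str] = field(default_factory=list)
    outputs: list[str] = field(default_factory=list)
    exceptions: list[str] = field(default_factory=list)
    human_review_points: list[str] = field(default_factory=list)

    def gaps(self) -> list[str]:
        """Return the names of elements that nobody has documented yet."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# Hypothetical example: an invoice intake workflow that is only partly mapped.
invoice_intake = WorkflowMap(
    trigger="Vendor invoice arrives in the shared mailbox",
    inputs=["invoice PDF", "purchase order record"],
    outputs=["approved payment request"],
)

print(invoice_intake.gaps())
# ['decision_points', 'exceptions', 'human_review_points']
```

In this example the team can name what starts the work and what it produces, but not where judgment, exceptions, or review occur. Those empty fields are the conversation to have before any tool is chosen.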

Without that map, organizations tend to choose tools too early. They start discussing copilots, orchestration, extraction flows, agents, or custom .NET solutions before they have examined the work itself.

That reverses the correct order.

Workflow Clarity Comes Before Tool Selection

In Microsoft-centric organizations, teams often ask whether a use case belongs in:

- Microsoft Copilot

- Power Platform

- Azure AI services

- a custom .NET application

- a hybrid architecture

Those are legitimate questions, but they should not come first.

Before tool selection, teams should ask:

- What problem are we solving?

- What workflow is involved?

- Is that workflow stable enough to automate?

- Where does human judgment belong?

- What exceptions are common?

- What level of risk is acceptable?

- Who owns the process?

Only after those questions are answered should the organization decide what technology pattern fits best.

This is one reason workflow clarity is so important. It shapes architecture. If the work is unclear, architecture becomes guesswork.

Frequently Asked Questions

What does “workflow clarity” mean in enterprise AI?

Workflow clarity means the organization can clearly describe how work starts, what inputs are used, where decisions happen, what outputs are expected, what exceptions occur, and where human review belongs.

Why do AI projects fail when workflows are unclear?

Because the automation inherits operational ambiguity. Requirements shift, exceptions are missed, outputs become inconsistent, and trust breaks quickly.

Should teams map workflows before selecting AI tools?

Yes. Workflow mapping should happen before choosing tools, patterns, or architecture. Otherwise, the team risks selecting technology that does not fit the real work.

Are many AI failures actually workflow failures?

Yes. In many cases, the model gets blamed for problems caused by poor process definition, weak handoffs, undocumented exceptions, or unclear ownership.

How do I know if a workflow is ready for AI?

A workflow is more likely to be AI-ready when the trigger, inputs, decision points, outputs, exceptions, human review points, and ownership are clearly defined.