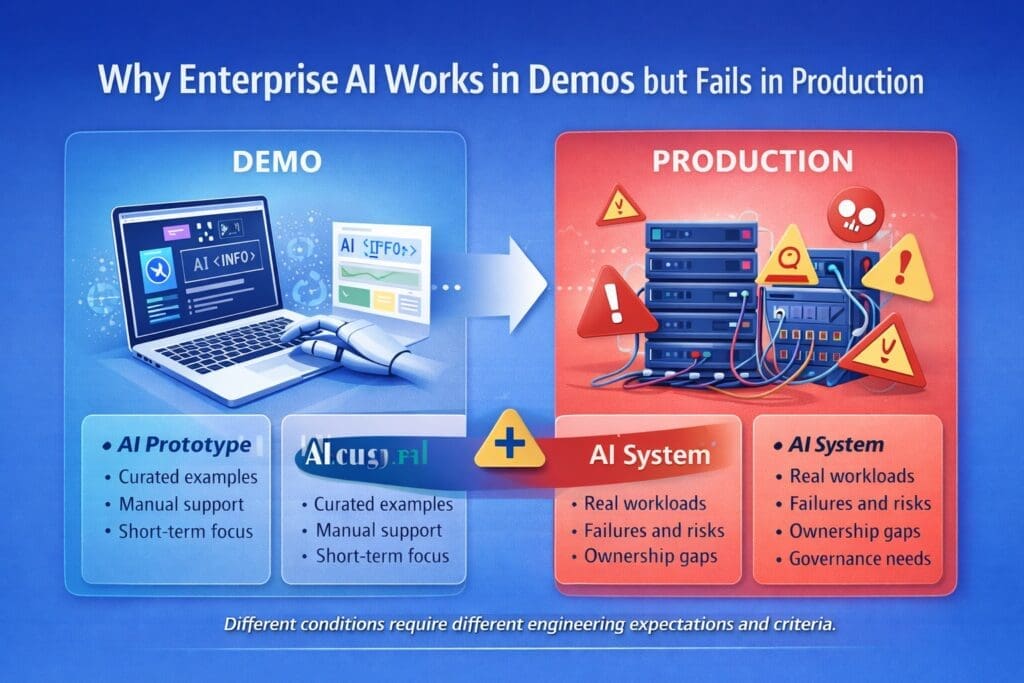

Most enterprise AI systems do not fail because the model is bad.

They fail because the demo was never a real system.

That is one of the biggest sources of confusion in enterprise AI. A team creates a proof of concept that looks impressive in a controlled environment. The output seems useful. Stakeholders get excited. Leadership sees potential. Then the organization tries to push the idea further and everything starts breaking down.

The logging is weak.

The workflow fit is unclear.

The ownership model is fuzzy.

The review process is undefined.

The fallback behavior is missing.

The support burden lands on people who never agreed to own it.

That is why enterprise AI can look strong in a demo and still fail in production.

This is one of the core pain points keeping Microsoft-centric organizations stuck: they keep confusing model output with production readiness.

Why Demos Survive and Systems Fail

A demo gets to live in a protected environment.

It is narrow.

It is curated.

It uses clean examples.

It usually avoids the worst edge cases.

It often has manual support hiding behind the scenes.

And it does not have to survive real organizational complexity.

That is why demos often look better than the systems they are meant to represent.

Put directly: many prototypes look impressive because they are narrow, manually supported, and disconnected from production constraints.

A production system lives under very different conditions. It has to tolerate bad inputs, inconsistent user behavior, partial data, latency, failures, exceptions, governance review, support demands, and the political reality of cross-functional ownership.

A demo only has to look promising for a short period of time.

A production system has to keep working after the excitement wears off.

That is a very different standard.

The Most Common Mistake: Confusing a Prototype with a Production Candidate

This is one of the most common enterprise AI errors.

A prototype proves that something might be possible. It does not prove that it is ready for production.

The test is simple: if a system lacks observability, support ownership, fallback behavior, or change control, it is not production-ready.

That distinction matters because organizations often see a good demo and start acting as though the hard part is done.

It is not.

A prototype might show that:

- a model can generate a useful draft

- a chatbot can answer a narrow class of questions

- a summarization flow can produce decent output

- a classification model can detect a pattern

- an AI assistant can help inside a specific use case

But none of that proves:

- the system can be monitored reliably

- the outputs can be governed appropriately

- the exceptions can be handled cleanly

- the workflow integration is stable

- the user expectations are realistic

- the support model exists

- the system can survive production change over time

Those are different questions. And in enterprise AI, they are often the harder questions.

The Model Is Usually Not the Hardest Part

This is the contrarian point, and it is one of the most important ones.

A lot of people still talk about AI as though the model is the system.

It is not.

The model is one component inside a larger operational structure. In many enterprise environments, the harder parts are:

- integration with real business workflows

- output review and exception handling

- access control and data boundaries

- support ownership

- logging and observability

- governance and compliance alignment

- user training and expectation management

- change control over prompts, models, and configuration

- escalation when confidence is low or output is wrong

That is why many AI initiatives stall after a strong demo. The organization solved the easiest visible part first and postponed the harder operational work until later.

Then later arrives.

What Production Actually Demands

Production is not “the demo plus some cleanup.”

Production is a different construction state.

Prototype, minimum viable product (MVP), and production are different construction states requiring different engineering expectations, not just more polish.

A prototype asks:

Can this idea work at all?

An MVP asks:

Can this solve a narrow real problem under controlled conditions?

A production system asks:

Can this operate reliably, safely, and supportably inside the business?

That means production readiness includes more than output quality. It includes operational fitness.

A production-grade AI capability typically needs:

- clear business ownership

- workflow definition

- logging and traceability

- exception handling

- support and escalation paths

- human review where appropriate

- performance expectations

- failure behavior

- governance alignment

- release control

- monitoring and alerting

- user guidance and adoption planning

That is a system.

A demo is usually just a glimpse of one piece.

Why No Logging Means No Trust

Put bluntly: if you cannot inspect prompts, inputs, outputs, exceptions, latency, and human overrides, you cannot support the system.

If an enterprise AI system is used in real work, then sooner or later someone will ask:

- Why did it produce that answer?

- What input did it receive?

- Which prompt or configuration was used?

- Was the model behaving normally?

- How often is this failing?

- How much latency are users seeing?

- When did quality start drifting?

- What did the reviewer override?

- Which department or workflow is generating the most exceptions?

If your team cannot answer those questions, then your AI system is not supportable.

And if it is not supportable, it is not trustworthy.

This is where many demos hide reality. In a demo, no one needs operational evidence. In production, evidence is everything.
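To make that evidence concrete, here is a minimal sketch of one traceable record per model call, covering the items above: prompt version, input, output, error, latency, and reviewer override. Everything in it is an assumption for illustration, including the `AICallRecord` field names, the `logged_call` wrapper, and the idea of passing the model as a plain function. A real system would write to whatever logging or telemetry platform the organization already runs.

```python
import json
import time
import uuid
from dataclasses import asdict, dataclass, field
from typing import Callable, Optional


@dataclass
class AICallRecord:
    """One record per model call. Field names are illustrative, not a standard."""
    workflow: str
    prompt_version: str
    input_text: str
    output_text: Optional[str] = None
    error: Optional[str] = None
    latency_ms: Optional[float] = None
    reviewer_override: Optional[str] = None  # filled in later if a human changes the result
    call_id: str = field(default_factory=lambda: str(uuid.uuid4()))


def logged_call(
    model_fn: Callable[[str], str],
    workflow: str,
    prompt_version: str,
    input_text: str,
    sink: Callable[[str], None],
) -> AICallRecord:
    """Call the model and emit one JSON line, whether the call succeeds or fails."""
    record = AICallRecord(workflow=workflow, prompt_version=prompt_version, input_text=input_text)
    start = time.perf_counter()
    try:
        record.output_text = model_fn(input_text)
    except Exception as exc:  # capture the failure instead of losing it
        record.error = f"{type(exc).__name__}: {exc}"
    finally:
        record.latency_ms = (time.perf_counter() - start) * 1000
        sink(json.dumps(asdict(record)))
    return record


# Example: a stand-in "model" and an in-memory sink (both hypothetical).
log_lines = []
record = logged_call(str.upper, "invoice-triage", "prompt-v3", "summarize this", log_lines.append)
```

Because every call produces a record with a workflow name and a prompt version, questions like "which workflow generates the most exceptions?" become queries over data rather than guesses.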

Why Workflow Fit Matters More Than Demo Quality

A demo can still look good even when the workflow fit is wrong.

That is another reason enterprise AI systems fail after early success.

A use case may perform well in isolation but still break down when inserted into real work. The model output may be acceptable, yet the surrounding process may still be poorly defined. Users may not know when to trust the result, when to review it, when to reject it, or how to escalate it.

That is not a model problem. That is a workflow design problem.

Enterprise AI succeeds when the output fits into a known workflow with clear handoffs, bounded decisions, and realistic expectations. It fails when the AI component is dropped into operational fog and asked to somehow make the workflow coherent.
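One way to picture a bounded decision is as an explicit routing rule that tells users what happens to each output. The sketch below is only an illustration: it assumes the system can produce some confidence score between 0 and 1 and a high-risk flag, which many setups cannot do reliably, and the thresholds and the `route_output` name are placeholders rather than recommendations.

```python
from enum import Enum


class Route(Enum):
    ACCEPT = "accept"
    HUMAN_REVIEW = "human_review"
    ESCALATE = "escalate"


def route_output(
    confidence: float,
    high_risk: bool,
    accept_at: float = 0.9,   # placeholder threshold, to be set by the workflow owner
    review_at: float = 0.6,   # placeholder threshold, to be set by the workflow owner
) -> Route:
    """Decide what happens to a model output inside the workflow."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence < review_at:
        return Route.ESCALATE        # too uncertain for a routine review
    if high_risk or confidence < accept_at:
        return Route.HUMAN_REVIEW    # usable, but a person checks it first
    return Route.ACCEPT
```

The value is less in the code than in the fact that the rule is written down: someone had to decide where the handoffs are and who receives them.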

Why Ownership Problems Kill AI After the Demo

A surprising number of AI initiatives die because nobody truly owns the production reality.

During a demo or proof of concept, this is easy to hide. A few motivated people keep things moving. A developer tweaks the prompt. A manager explains away the rough edges. A champion manually supports the result.

That arrangement does not survive scale.

In production, ownership has to be explicit:

- Who owns business outcomes?

- Who owns user adoption?

- Who owns support?

- Who owns model or prompt changes?

- Who owns exception review?

- Who owns governance evidence?

- Who owns escalation when the system behaves badly?

If those answers are vague, the system may still demo well, but it will not survive production pressure.

Why Enterprises Keep Repeating This Pattern

There are a few reasons this happens repeatedly.

1. Demos are easier to celebrate than operations

A demo is visible. Operations are not. Leaders often reward visible progress before operational readiness exists.

2. AI output is mistaken for system readiness

People see useful output and assume the capability is close to done. It is not.

3. The organization delays hard conversations

Support, governance, ownership, logging, and failure modes are less exciting than the demo. So they get pushed to later.

4. Teams assume production is a later polish step

It is not. Production changes what the system has to be.

5. No promotion criteria exist

Every AI initiative should define what must be true before it graduates.

That is one of the cleanest fixes.

If the organization never defines promotion gates, then prototypes drift forward based on enthusiasm instead of standards.

What Enterprises Should Define Earlier Than They Think

Production criteria should be defined earlier than most teams expect, including logs, support model, review path, failure handling, escalation, and user expectations.

Before building too much, enterprise teams should decide:

Logging

What will be recorded about prompts, inputs, outputs, latency, errors, overrides, and workflow events?

Support model

Who supports the system when something goes wrong?

Review path

When is human review required, and who performs it?

Failure handling

What happens if the output is low quality, incomplete, unavailable, or clearly wrong?

Escalation

When does the issue move beyond the local team? Who gets involved?

User expectations

What should users trust, what should they verify, and what should they never assume?

These are not late-stage details. They are design inputs.
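As one illustration of treating failure handling as a design input, the sketch below wraps a model call so that an error or an empty result produces a defined fallback instead of an unhandled failure. The `Result` type, the `call_with_fallback` name, and the length check are assumptions made for this example. What counts as "low quality" or "clearly wrong" in a real workflow needs its own definition.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Result:
    text: str
    source: str       # "model" or "fallback"
    reason: str = ""  # why the fallback was used, if it was


def call_with_fallback(
    model_fn: Callable[[str], Optional[str]],
    user_input: str,
    fallback_text: str,
    min_length: int = 1,  # illustrative completeness check only
) -> Result:
    """Return the model output, or a defined fallback when the call fails or comes back empty."""
    try:
        output = model_fn(user_input)
    except Exception as exc:
        return Result(fallback_text, "fallback", f"model error: {type(exc).__name__}")
    if output is None or len(output.strip()) < min_length:
        return Result(fallback_text, "fallback", "empty or incomplete output")
    return Result(output, "model")
```

The `reason` field matters as much as the fallback itself, because it gives the support team something to count and investigate.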

A Better Way to Think About AI Construction Order

If an organization wants enterprise AI to survive beyond the demo stage, it needs construction order.

A better sequence looks like this:

- define the business problem

- define the workflow context

- clarify ownership and support expectations

- identify risk, review, and governance needs

- define observability and failure behavior

- build the prototype

- test the MVP in bounded conditions

- promote only when production criteria are actually met

That is slower than hype, but faster than rework.

The practical pain is that demos do not survive contact with production. The framework response is to treat prototype, MVP, and production as different construction states with different requirements.

What Good Enterprise AI Promotion Gates Look Like

Most enterprises need explicit standards for moving an AI capability from prototype to MVP to production.

At minimum, those standards should cover:

- defined use case and workflow scope

- named business owner

- known risk level

- logging and traceability

- support model

- review and escalation path

- exception handling

- rollback or fallback behavior

- governance signoff where required

- production hosting and operational responsibility

Without promotion gates, demo success becomes political pressure.

With promotion gates, demo success becomes evidence to evaluate.

That is a much healthier model.
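A promotion gate can be as simple as a checklist that has to be fully satisfied before an initiative moves forward. The sketch below encodes the list above as a hypothetical `PromotionChecklist`. The field names are illustrative, and it assumes a team marks `governance_signoff` as true when signoff is not required for that use case.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass
class PromotionChecklist:
    """Each field mirrors one gate from the list above. Names are illustrative."""
    use_case_and_scope_defined: bool = False
    business_owner_named: bool = False
    risk_level_known: bool = False
    logging_and_traceability: bool = False
    support_model: bool = False
    review_and_escalation_path: bool = False
    exception_handling: bool = False
    rollback_or_fallback: bool = False
    governance_signoff: bool = False  # assumed True when signoff is not required
    hosting_and_ops_responsibility: bool = False


def unmet_gates(checklist: PromotionChecklist) -> List[str]:
    """List every gate that is still open."""
    return [f.name for f in fields(checklist) if not getattr(checklist, f.name)]


def ready_for_promotion(checklist: PromotionChecklist) -> bool:
    """An initiative is promoted only when no gate is left open."""
    return not unmet_gates(checklist)
```

Calling `unmet_gates` on a prototype that demoed well gives the steering conversation a list of specific gaps to close instead of a debate about how good the demo looked.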

Why This Matters Even More in Microsoft-Centric Organizations

Microsoft-centric organizations often have powerful advantages: existing workflow systems, structured data environments, security controls, familiar developer tooling, Power Platform options, Azure services, and strong .NET integration paths.

But those advantages do not remove the need for production discipline.

In fact, they make discipline more important, because the organization has many ways to build something quickly. That makes it even easier to confuse speed of prototyping with readiness for production.

The goal is not just to prove that an AI feature can exist somewhere in the Microsoft stack.

The goal is to build a capability that can survive enterprise reality.

Final Thought

Enterprise AI works in demos because demos are protected.

Enterprise AI fails in production because production is where real systems have to prove themselves.

That means surviving:

- messy inputs

- workflow friction

- support demands

- review and escalation needs

- governance requirements

- user misunderstanding

- operational drift

- ownership ambiguity

A good demo is not meaningless. It can be useful. It can prove potential. It can help teams learn.

But it is not the same thing as a production candidate.

If your enterprise wants AI systems that survive beyond the presentation stage, then you need to stop treating prototypes like polished systems and start defining production criteria before enthusiasm outruns engineering reality.

That is how enterprise AI moves from impressive demo to usable system.

Enterprise AI Operating Model

If your organization is struggling to move AI initiatives from prototype to production, this is exactly why we created our Enterprise AI Operating Model—a structured system for discovering, selecting, validating, and advancing the right enterprise AI initiatives. It helps Microsoft-centric organizations bring more discipline, clarity, and production awareness to enterprise AI efforts. You can learn more here: Enterprise AI Operating Model

Frequently Asked Questions

Why does enterprise AI work in demos but fail in production?

Because demos are narrow, curated, and manually supported, while production systems must handle real workflows, failures, logging, support, governance, and user behavior.

What is the difference between an AI prototype and a production system?

A prototype shows that an idea may be possible. A production system must be supportable, observable, governed, and reliable enough for real business use.

Why is logging important in enterprise AI?

Logging helps teams inspect prompts, inputs, outputs, latency, exceptions, and overrides so the system can be supported, improved, and trusted.

What should enterprises define before promoting an AI system to production?

They should define ownership, workflow fit, risk level, observability, support model, review path, escalation path, and failure handling.

Is the model the hardest part of enterprise AI?

Usually not. Integration, workflow fit, governance, supportability, and operational ownership are often harder than the model itself.