Most enterprise AI backlogs do not fail because organizations lack ideas.

They fail because nobody is forcing order on the ideas.

In many Microsoft-centric organizations, AI suggestions come in from every direction. Executives want strategic wins. Department heads want efficiency. IT wants control. Developers want to test what is possible. Vendors keep introducing new features. Everyone sees opportunity, but very few teams stop long enough to separate curiosity from value, experiments from real candidates, or pilots from projects that could survive production.

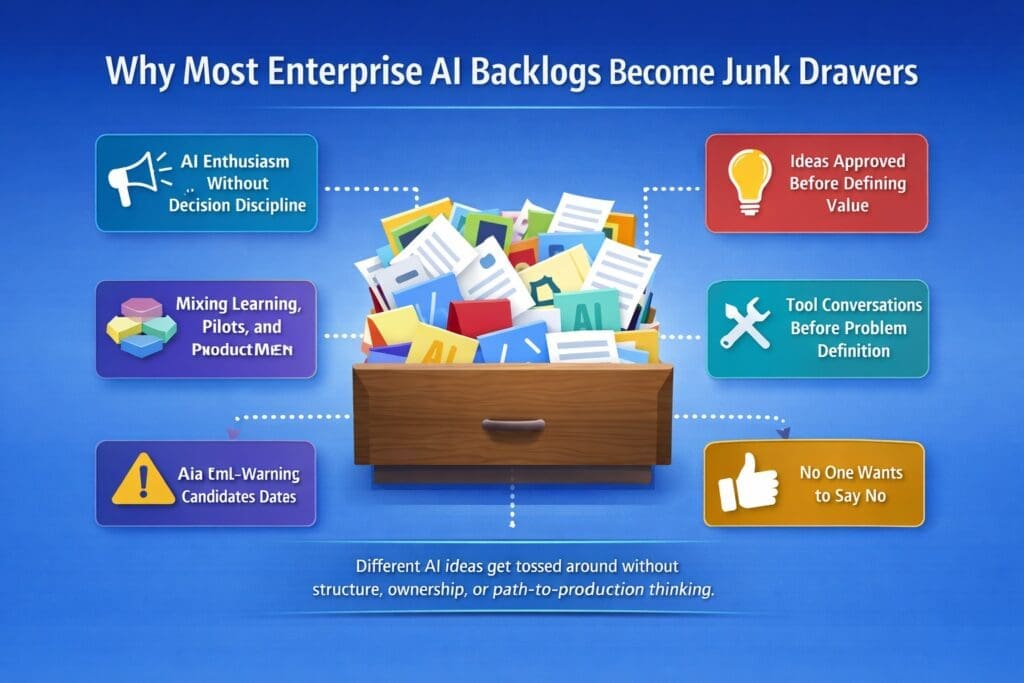

That is how an AI backlog becomes a junk drawer.

It fills up with disconnected ideas, half-formed requests, vendor-driven suggestions, department wish lists, and random proof-of-concepts. The backlog looks active, but it is not structured. It does not guide execution. It does not support decision-making. It does not create momentum.

It becomes a storage bin for unresolved thinking.

What an Enterprise AI Junk Drawer Looks Like

A junk drawer backlog usually has some predictable characteristics.

It contains ideas with no clear business owner.

It mixes strategic initiatives with low-value curiosities.

It includes projects that sound impressive but have no measurable business gain.

It contains requests that depend on unclear workflows, weak data, or undefined support models.

And it keeps growing because people assume collecting more ideas is the same as making progress.

It is not.

Enterprises are generating AI ideas faster than they can evaluate them, and the core issue is not lack of enthusiasm but lack of a clear build order, gating, and decision discipline.

That is the real problem.

Most AI backlogs are not pipelines. They are holding areas.

Why This Happens So Often

There are several reasons enterprise AI backlogs drift into junk drawer territory.

1. AI enthusiasm outruns decision discipline

When organizations first get serious about AI, idea volume spikes fast. That sounds healthy, but it creates a side effect. People start submitting possibilities faster than leadership can evaluate them. Every meeting creates more ideas. Every vendor demo creates more ideas. Every executive conversation creates more ideas.

Without a decision model, enthusiasm becomes noise.

2. Teams approve ideas before defining business value

This is one of the biggest problems in enterprise AI. A use case sounds modern, strategic, or exciting, so it gets attention before anyone answers the basic questions:

- What business problem does this solve?

- How painful is the current process?

- Who owns the workflow?

- What measurable gain should result?

- Is this actually worth implementing?

This is the common mistake: approving ideas before defining business value.

That wrong sequence creates a backlog full of ideas that are interesting but weak.

3. Curiosity, pilots, and production candidates get mixed together

Not every AI idea deserves the same level of attention.

Some ideas are useful for learning.

Some deserve a bounded pilot.

Some are serious production candidates.

But in many organizations, all three go into the same list with equal visual weight. That creates confusion for executives, PMs, department heads, and technical teams alike. It also makes prioritization almost impossible because nobody is classifying the work honestly.

The fix is to separate ideas into curiosity or learning items, bounded pilot candidates, and production candidates.

Without that separation, the backlog becomes hard to read and operationally useless.

4. Tool discussions happen before problem clarity exists

A lot of enterprise teams start by asking:

- Should we use Copilot?

- Should we use Azure AI?

- Should this be Power Platform?

- Should we build this in custom .NET?

Those are legitimate questions, but they are not the first questions.

When tool selection happens before the organization clearly defines the problem, workflow, owner, measurable gain, and risk level, the backlog becomes technology-led instead of business-led. Prioritization should come before tool selection.

That mistake adds more clutter because the organization starts evaluating platforms before it has earned the right to.

5. Nobody wants to say no

This is a management problem as much as a technical one.

In many enterprises, rejecting ideas feels politically harder than collecting them. So instead of filtering aggressively, teams keep adding to the list. The backlog grows because it is socially easier to keep options open than to apply standards.

That creates a false sense of opportunity. In practice, it weakens execution.

Why More AI Ideas Usually Means Less Progress

This sounds backward, but it is usually true.

More AI ideas usually means less actual progress, because when idea volume rises without gating, delivery confidence drops.

That happens because every additional idea creates overhead:

- more evaluation

- more meetings

- more expectation management

- more stakeholder alignment

- more technical investigation

- more governance considerations

- more context switching

A large backlog feels productive because it is visible. But size is not the same thing as progress. A backlog can grow for months while real implementation stays flat.

That is one reason enterprise AI efforts often feel busy but stuck.

The Hidden Cost of Junk Drawer Backlogs

A junk drawer backlog is not harmless. It creates real costs.

Strategic cost

Leadership loses visibility into what actually matters. High-value opportunities sit beside weak ideas with no meaningful distinction.

Operational cost

Teams waste time evaluating things that never should have advanced past initial discussion.

Political cost

Different departments begin lobbying for their own AI ideas instead of aligning around measurable business value.

Technical cost

Developers and architects spend time exploring tools and patterns for ideas that are not mature enough to deserve design effort.

Support cost

Random experiments start creating expectations. Then those expectations turn into support debt when someone wants a prototype kept alive.

Disconnected pilots teach teams to tolerate operational chaos, and they create support debt.

That is one of the most important points in this whole discussion.

Because once a junk drawer backlog starts feeding random pilots, the mess becomes more expensive.

How to Tell If Your AI Backlog Is a Junk Drawer

Most organizations do not need a formal audit to know they have this problem. The warning signs are obvious.

Your backlog is probably a junk drawer if:

- most ideas have no named business owner

- many items have no measurable value attached

- use cases are described vaguely

- workflow details are missing

- nobody has classified items as learning, pilot, or production

- multiple items exist because of tool excitement rather than business need

- projects stay in “discussion” mode too long

- teams keep adding ideas but few are graduating into delivery

- there is no scoring model or decision gate

- no one can explain why one idea should move before another

If several of those are true, your problem is not idea generation.

It is backlog discipline.
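The warning signs above can be turned into a quick self-check. The sketch below is only an illustration: the class name, the field names, and the reading of "several" as three or more are all assumptions, not part of any standard.

```python
from dataclasses import dataclass, fields


@dataclass
class BacklogWarningSigns:
    """One flag per warning sign; True means the sign is present."""
    most_ideas_lack_named_owner: bool = False
    many_items_lack_measurable_value: bool = False
    use_cases_described_vaguely: bool = False
    workflow_details_missing: bool = False
    items_not_classified: bool = False
    items_driven_by_tool_excitement: bool = False
    projects_stuck_in_discussion: bool = False
    few_ideas_graduating_to_delivery: bool = False
    no_scoring_model_or_gate: bool = False
    ordering_cannot_be_explained: bool = False


# Assumption: "several" is read as three or more; no exact number is given.
SEVERAL = 3


def count_signs(signs: BacklogWarningSigns) -> int:
    """Count how many warning signs are flagged as present."""
    return sum(1 for f in fields(signs) if getattr(signs, f.name))


def is_junk_drawer(signs: BacklogWarningSigns, threshold: int = SEVERAL) -> bool:
    """Return True when at least `threshold` warning signs are present."""
    return count_signs(signs) >= threshold


# Hypothetical backlog with three of the ten signs present.
example = BacklogWarningSigns(
    most_ideas_lack_named_owner=True,
    items_not_classified=True,
    no_scoring_model_or_gate=True,
)
print(count_signs(example), is_junk_drawer(example))
```

The threshold is deliberately a parameter. An organization that wants a stricter or looser reading of "several" can change it without touching the checklist itself.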

How to Fix the Problem

The fix is not complicated, but it does require adults in the room to force structure on the process.

Step 1: Separate the backlog into three buckets

Start by classifying every item as one of the following:

- curiosity or learning

- bounded pilot candidate

- production candidate

This one move improves clarity immediately. It stops people from pretending every idea belongs in the same conversation.
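As a rough sketch of what this classification could look like in a tracking script, the example below models the three buckets as an enum and surfaces anything nobody has classified yet. The names `Bucket`, `BacklogItem`, and `group_by_bucket`, and the sample items, are hypothetical and used only for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Bucket(Enum):
    LEARNING = "curiosity or learning"
    PILOT = "bounded pilot candidate"
    PRODUCTION = "production candidate"


@dataclass
class BacklogItem:
    title: str
    bucket: Optional[Bucket] = None  # None means nobody has classified it yet


def group_by_bucket(items):
    """Split items into a {bucket: [items]} mapping plus a list of unclassified items."""
    grouped = defaultdict(list)
    unclassified = []
    for item in items:
        if item.bucket is None:
            unclassified.append(item)
        else:
            grouped[item.bucket].append(item)
    return dict(grouped), unclassified


# Hypothetical backlog entries, invented for this example.
backlog = [
    BacklogItem("Try summarizing meeting notes", Bucket.LEARNING),
    BacklogItem("Invoice triage assistant", Bucket.PILOT),
    BacklogItem("Contract clause search", Bucket.PRODUCTION),
    BacklogItem("Something from a vendor demo"),
]

grouped, unclassified = group_by_bucket(backlog)
for bucket, bucket_items in grouped.items():
    print(bucket.value, [i.title for i in bucket_items])
print("unclassified:", [i.title for i in unclassified])
```

Keeping unclassified items in their own list is a design choice: it makes the items nobody has sorted visible instead of letting them blend into the rest.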

Step 2: Add a decision gate

Use a simple scoring screen based on six criteria: workflow clarity, business value, data readiness, risk level, ownership, and path to production.

That is the right filter.

For each AI idea, ask:

- Is the workflow clearly defined?

- Is there measurable business value?

- Is the data usable enough?

- Is the risk acceptable?

- Is there a real owner?

- Is there a plausible path to production?

If the answer is weak in several areas, the item should not move forward.
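One way to make that gate concrete is a small scoring screen. The sketch below is a minimal illustration under stated assumptions: the 1-to-5 scale, the cutoff for "weak" (below 3), and the reading of "several" as three or more are all invented for the example, and risk is scored as acceptability so that a higher number is better on every criterion.

```python
from dataclasses import dataclass, fields


@dataclass
class GateScores:
    """Each criterion is scored 1 (weak) to 5 (strong). The scale is an assumption."""
    workflow_clarity: int
    business_value: int
    data_readiness: int
    risk_acceptability: int  # higher means the risk is more acceptable
    ownership: int
    path_to_production: int


WEAK_BELOW = 3  # assumption: scores under 3 count as a weak answer
SEVERAL = 3     # assumption: "several" weak areas means three or more


def weak_areas(scores: GateScores) -> list:
    """Return the names of the criteria that scored as weak."""
    return [f.name for f in fields(scores) if getattr(scores, f.name) < WEAK_BELOW]


def passes_gate(scores: GateScores) -> bool:
    """An item moves forward only if it is weak in fewer than SEVERAL areas."""
    return len(weak_areas(scores)) < SEVERAL


# Hypothetical scores for a single backlog item.
idea = GateScores(
    workflow_clarity=2,
    business_value=4,
    data_readiness=1,
    risk_acceptability=3,
    ownership=2,
    path_to_production=1,
)
print(weak_areas(idea))
print(passes_gate(idea))
```

Returning the list of weak areas, not only a pass or fail result, gives the person who submitted the idea something specific to fix before resubmitting.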

Step 3: Put business value ahead of tool enthusiasm

Do not let the first conversation be about platforms.

The first conversation should be about pain, workflow, ownership, gain, and feasibility. Once those are clear, then decide whether the solution belongs in Copilot, Azure AI, Power Platform, custom .NET, or somewhere else.

Step 4: Reduce active priorities

Most organizations are trying to move too many AI initiatives at once. That spreads attention thin and creates a backlog full of motion instead of progress.

Fewer priorities, chosen with more discipline, usually produce better outcomes.

Step 5: Create real standards for advancement

A backlog item should not move just because someone senior likes it or because it demos well.

It should move because it clears the standards.

That means your enterprise needs actual advancement rules, not mood-based decision-making.

What a Healthy Enterprise AI Backlog Looks Like

A healthy backlog is not necessarily small. It is structured.

It has categories.

It has filters.

It has owners.

It has scoring logic.

It has business reasoning.

It distinguishes curiosity from commitment.

And it makes it easier to explain why certain initiatives should move first.

A healthy backlog also supports communication across roles.

Executives can see value and risk.

Department heads can see workflow relevance.

Project managers can see sequencing.

Technical teams can see readiness.

Security and governance teams can see where review belongs.

That is what a useful backlog does. It supports coordinated decision-making instead of collecting noise.

Why This Matters More in Microsoft-Centric Organizations

Microsoft-centric organizations often have an advantage because they already have a broad platform ecosystem, deep workflow environments, and practical enterprise tooling. But that advantage becomes a liability if the organization starts thinking the availability of tools removes the need for prioritization discipline.

It does not.

In fact, broad platform availability can make junk drawer behavior worse. When teams know there are many possible ways to build something, they are even more likely to start solutioning too early.

That is why structure matters.

The problem is rarely lack of possible AI options.

The problem is lack of disciplined selection.

Final Thought

Most enterprise AI backlogs become junk drawers because organizations confuse collecting ideas with making progress.

They keep adding without sorting.

They keep discussing without filtering.

They keep experimenting without classifying.

They keep approving before defining value.

And they keep talking about tools before they understand the work.

That is not an innovation strategy.

It is unmanaged accumulation.

If an enterprise wants better AI outcomes, the first move is not to generate more ideas. The first move is to clean up the backlog, separate signal from noise, and apply a decision model that forces clarity.

That is how real prioritization begins.

Enterprise AI Operating Model

If your organization is struggling to prioritize AI projects, this is exactly why we created our Enterprise AI Operating Model—a structured system for discovering, selecting, validating, and advancing the right enterprise AI initiatives. It helps Microsoft-centric organizations move beyond scattered ideas and disconnected pilots toward a more disciplined, production-aware approach. You can learn more here: Enterprise AI Operating Model

Frequently Asked Questions

What is an enterprise AI backlog?

An enterprise AI backlog is a list of potential AI initiatives, use cases, experiments, pilots, and project ideas under consideration by an organization.

Why do AI backlogs become junk drawers?

They become junk drawers when organizations collect ideas without separating learning items, pilots, and production candidates, and without applying business value, ownership, and path-to-production filters.

How should enterprises prioritize AI backlog items?

Enterprises should prioritize AI backlog items using criteria such as workflow clarity, business value, data readiness, risk, ownership, and path to production.

Should every AI idea go into the main backlog?

No. Some ideas belong in a learning or experimentation bucket rather than the main list of serious project candidates.

Why is tool-first thinking a problem in AI planning?

Tool-first thinking leads teams to evaluate platforms before they have clearly defined the business problem, workflow, owner, measurable gain, and risk level.